Multi-stage framework using transformer models, feature fusion and ensemble learning for enhancing eye disease classification

Experimental setup

This study implemented the models in Python on a workstation with an NVIDIA RTX 3090 GPU, a 3.2 GHz Intel Core i7 processor, and Windows 10 Professional. Swin, ViT, and DeiT were implemented using MONAI, PyTorch, and scikit-learn.

We conducted experiments based on four approaches. The first approach uses standalone transformer models (Swin, ViT, and DeiT). The second approach uses the transformer models (Swin, ViT, and DeiT) as feature extractors, with ML models (RF, SVM, and LR) as classifiers. The third approach consists of hybrid models in which Swin, ViT, and DeiT extract features, PCA reduces the dimensionality of those features to lower their complexity, and RF, SVM, and LR serve as classifiers. The fourth approach is the proposed MST-EDS model: its meta-learner is trained on a stacked training set built from the output predictions of the best hybrid models on the training data, and it is evaluated on a stacked test set built in the same way from those models' predictions on the test data.

PCA with 95% retained variance is used to reduce the dimensionality of the data. PCA is fitted on the training features, and the resulting projection, with the same number of components, is then applied to the test features. This reduces the DeiT features from (2949, 37824) to (2949, 2757), i.e., 2757 principal components; the Swin features from (2949, 768) to (2949, 500), i.e., 500 principal components; and the ViT features from (2949, 768) to (2949, 580), i.e., 580 principal components.
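The fit-on-train, transform-on-test procedure can be sketched with scikit-learn's `PCA`, which, when `n_components` is a float between 0 and 1 (here with `svd_solver="full"`), keeps the fewest components whose cumulative explained variance exceeds that fraction. This is a minimal sketch on synthetic, hypothetical embeddings rather than the study's features; the array sizes and the low-rank generator are assumptions chosen so the example runs quickly.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)

# Hypothetical stand-ins for 768-d transformer embeddings: a rank-40 signal plus
# small noise, so that 95% of the variance is captured by at most 40 components.
latent = rng.normal(size=(400, 40))
mixing = rng.normal(size=(40, 768))
X_train = latent[:300] @ mixing + 0.1 * rng.normal(size=(300, 768))
X_test = latent[300:] @ mixing + 0.1 * rng.normal(size=(100, 768))

# A float n_components keeps the fewest components whose cumulative
# explained variance exceeds that fraction (here 95%).
pca = PCA(n_components=0.95, svd_solver="full")
Z_train = pca.fit_transform(X_train)  # fit on the training features only
Z_test = pca.transform(X_test)        # apply the same projection to the test features

print("train:", X_train.shape, "->", Z_train.shape)
print("test: ", X_test.shape, "->", Z_test.shape)
print("components kept:", pca.n_components_)
print("retained variance: %.4f" % pca.explained_variance_ratio_.sum())
```

Fitting only on the training features keeps test-set statistics out of the projection, and the test set automatically receives the same number of components.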

The ML classifiers were trained with 5-fold cross-validation to assess generalizability and reduce the risk of overfitting. The key hyperparameters were: RF (number of estimators = 100, max depth = 10, criterion = gini), LR (C = 1.0, max_iter = 100), and SVM (kernel = rbf, C = 1). The hyperparameters of the transformer models are listed in Table 3.
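A minimal scikit-learn sketch of this protocol, using the hyperparameters listed above and covering both the transformer-plus-ML and the transformer-plus-PCA-plus-ML variants. The synthetic `make_classification` data stands in for the extracted transformer features, the `random_state` values are our additions for reproducibility, and the use of `StratifiedKFold` with shuffling is an assumption; the text only states 5-fold cross-validation.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.svm import SVC


def make_classifier(name):
    """Classifier hyperparameters as listed in the text; random_state is our addition."""
    if name == "RF":
        return RandomForestClassifier(n_estimators=100, max_depth=10, criterion="gini", random_state=0)
    if name == "LR":
        return LogisticRegression(C=1.0, max_iter=100)
    if name == "SVM":
        return SVC(kernel="rbf", C=1.0)
    raise ValueError(f"unknown classifier: {name}")


def make_model(name, use_pca):
    """Transformer features -> [PCA (95% variance)] -> classifier."""
    steps = [PCA(n_components=0.95, svd_solver="full")] if use_pca else []
    return make_pipeline(*steps, make_classifier(name))


# Hypothetical stand-in for extracted transformer features (4 classes).
X, y = make_classification(n_samples=300, n_features=50, n_informative=20,
                           n_classes=4, random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

results = {}
for name in ("RF", "LR", "SVM"):
    for use_pca in (False, True):
        scores = cross_val_score(make_model(name, use_pca), X, y, cv=cv, scoring="accuracy")
        results[(name, use_pca)] = scores
        label = f"{name}{' + PCA' if use_pca else ''}"
        print(f"{label:10s} mean accuracy = {scores.mean():.3f} (+/- {scores.std():.3f})")
```

Wrapping PCA and the classifier in one pipeline means PCA is refitted inside each training fold, so the held-out fold never influences the projection.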

Data splitting

The models were trained on 70% of the images, validated on 10%, and tested on 20%. The dataset is balanced and was split using stratified sampling, so the class proportions in the training, validation, and test sets are approximately the same. Table 4 shows the number of images in each class.
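One common way to obtain a stratified 70/10/20 split with scikit-learn is two successive calls to `train_test_split`: first hold out 20% for testing, then take 12.5% of the remaining 80% (i.e., 10% of the total) for validation. The sketch below uses hypothetical, randomly generated labels; the text does not state which tool performed the split.

```python
import numpy as np
from sklearn.model_selection import train_test_split

CLASSES = ["cataract", "diabetic_retinopathy", "glaucoma", "normal"]
rng = np.random.default_rng(0)
labels = rng.choice(CLASSES, size=1000)  # hypothetical class labels
indices = np.arange(labels.size)

# Step 1: hold out 20% of all images for testing, stratified by class.
idx_trval, idx_test = train_test_split(
    indices, test_size=0.20, stratify=labels, random_state=0)

# Step 2: 10% of the total = 12.5% of the remaining 80% goes to validation.
idx_train, idx_val = train_test_split(
    idx_trval, test_size=0.125, stratify=labels[idx_trval], random_state=0)


def class_fractions(idx):
    """Fraction of each class among the given sample indices."""
    return {c: float(np.mean(labels[idx] == c)) for c in CLASSES}


for split, idx in (("train", idx_train), ("val", idx_val), ("test", idx_test)):
    print(split, len(idx), {c: round(f, 3) for c, f in class_fractions(idx).items()})
```

With 1000 hypothetical images this yields 700/100/200 images, and each split's class fractions stay within about one image per class of the overall proportions.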

The performance of models across all classes of eye disease

Tables 5 and 6 present the per-class precision, recall, and F1-score of the models across three approaches: standalone models, hybrid models, and MST-EDS. As these tables show, all models performed best on the diabetic_retinopathy class, compared with glaucoma and cataract, because diabetic retinopathy features such as microaneurysms, hemorrhages, and exudates are relatively easy for the models to detect. MST-EDS achieved the best performance across all classes, especially with RF. This is because Swin captures local and global features using hierarchical representation and attention mechanisms, and RF provides insight into the importance of different features, which is useful for understanding the underlying relationships in the data.
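Per-class precision, recall, and F1-score of the kind reported in Tables 5 and 6 can be computed with scikit-learn's `precision_recall_fscore_support` using `average=None`. The labels and predictions below are a small hypothetical example, not the study's outputs.

```python
from sklearn.metrics import precision_recall_fscore_support

CLASSES = ["cataract", "diabetic_retinopathy", "glaucoma", "normal"]

# Hypothetical ground truth and predictions (two images per class).
y_true = ["cataract", "cataract", "diabetic_retinopathy", "diabetic_retinopathy",
          "glaucoma", "glaucoma", "normal", "normal"]
y_pred = ["cataract", "glaucoma", "diabetic_retinopathy", "diabetic_retinopathy",
          "glaucoma", "normal", "normal", "normal"]

# average=None returns one value per class, in the order given by `labels`.
precision, recall, f1, support = precision_recall_fscore_support(
    y_true, y_pred, labels=CLASSES, average=None, zero_division=0)

for cls, p, r, f in zip(CLASSES, precision, recall, f1):
    # Scaled to percentages, matching the way the tables report scores.
    print(f"{cls:22s} precision={100 * p:7.3f} recall={100 * r:7.3f} F1={100 * f:7.3f}")
```

In this toy case, cataract has perfect precision but 50% recall (one image was predicted as glaucoma), which shows why precision and recall are reported separately per class.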

Among the standalone models, Swin achieved the highest performance across all classes, with 99.099 precision and a 93.548 F1-score for diabetic_retinopathy. ViT scored the lowest across all classes, with 75.102 precision and a 79.826 F1-score for the normal class.

For the transformer models combined with ML, ViT-RF achieved the highest recall among the ViT-ML models, 98.636 for diabetic_retinopathy, whereas ViT-SVM had the lowest precision, 79.126 for glaucoma. Among the DeiT-ML models, DeiT-RF had the highest recall for diabetic_retinopathy (98.182), and DeiT-SVM recorded the lowest recall (80.198 for glaucoma). Among the Swin-ML models, Swin-RF had the highest precision, recall, and F1-score in every class, with a score of 99.087 for diabetic_retinopathy.

Combining transformer models with PCA and ML yields better results than combining transformer models with ML alone, since PCA projects the feature representation matrix onto a smaller set of principal components that retain most of its variance, removing redundant dimensions. Swin-PCA-RF scored the highest because Swin captures local and global features, and RF provides insight into the importance of different features.

Among the ViT-PCA-ML models, ViT-PCA-RF scored the highest recall, 99.545 for diabetic_retinopathy. It also had the highest precision and recall for glaucoma and normal, with 83.575 and 87.273, respectively. ViT-PCA-SVM scored the lowest recall, 81.683 for glaucoma, and had the same recall, around 87, for cataract and normal. Among the DeiT-PCA-ML models, DeiT-PCA-RF had the highest recall for diabetic_retinopathy (99.545) and likewise the highest recall for glaucoma and normal, at 87.624 and 90.741, respectively. Among the Swin-PCA-ML models, Swin-PCA-RF recorded the highest precision, recall, and F1-score across all classes, with 100 for diabetic_retinopathy and 95.673 for cataract. Swin-PCA-SVM had the lowest precision, 86.667 for glaucoma, and similar F1-scores of around 88 for normal and glaucoma.

For the proposed MST-EDS model, MST-EDS-RF further improved the results and achieved the best performance across all classes, with 100 precision for diabetic_retinopathy and 97.573 precision for cataract. MST-EDS-SVM recorded the lowest scores, with 88.995 precision and a 90.511 F1-score for glaucoma.

Results of the average performance of the models

Table 7 presents the accuracy, precision, recall, and F1-score of the models, averaged over all classes, for each approach: standalone models, hybrid models, and MST-EDS.
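The averaging scheme behind Table 7 is not stated. For the standalone and hybrid models whose full scores are quoted in this section, the reported recall equals the reported accuracy, which is what support-weighted averaging always produces, since weighted recall reduces to the fraction of correctly classified samples. The sketch below assumes weighted averaging and contrasts it with macro averaging on a small, hypothetical, imbalanced example.

```python
from sklearn.metrics import accuracy_score, precision_recall_fscore_support

# Hypothetical, deliberately imbalanced example (3/1/2/1 images per class).
y_true = ["cataract", "cataract", "cataract", "diabetic_retinopathy",
          "glaucoma", "glaucoma", "normal"]
y_pred = ["cataract", "cataract", "glaucoma", "diabetic_retinopathy",
          "glaucoma", "normal", "normal"]

acc = accuracy_score(y_true, y_pred)
# "weighted": per-class scores averaged with weights equal to class support.
p_w, r_w, f_w, _ = precision_recall_fscore_support(
    y_true, y_pred, average="weighted", zero_division=0)
# "macro": unweighted mean of the per-class scores.
p_m, r_m, f_m, _ = precision_recall_fscore_support(
    y_true, y_pred, average="macro", zero_division=0)

print(f"accuracy        = {acc:.4f}")
print(f"weighted recall = {r_w:.4f}  (always equals accuracy)")
print(f"macro recall    = {r_m:.4f}")
```

Here accuracy and weighted recall are both 5/7, while macro recall is 19/24 because each class counts equally regardless of its size.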

As Table 7 shows, among the standalone transformer models, Swin achieved the highest performance, with 91.962 accuracy, 92.075 precision, 91.962 recall, and a 91.954 F1-score, because Swin captures local and global features using hierarchical representation and attention mechanisms. In contrast, ViT performed worst, with 87.116 accuracy, 87.511 precision, 87.116 recall, and an 87.223 F1-score.

Among the transformer models combined with ML, those combined with RF performed best, because RF provides insight into the importance of different features, which helps capture the underlying relationships in the data. Swin-RF recorded the best performance, with 93.144 accuracy, 93.180 precision, 93.144 recall, and a 93.148 F1-score. ViT-SVM recorded the lowest performance, with 87.707 accuracy and an 87.717 F1-score.

Likewise, as Table 7 shows, integrating transformer models with PCA and ML outperforms integrating them with ML alone, because PCA compresses the feature representation matrix into the principal components that retain most of its variance. Swin-PCA-RF performed best, with 94.799 accuracy, 94.797 precision, 94.799 recall, and a 94.793 F1-score, which we attribute to Swin's use of attention and hierarchical representation to capture both local and global features. Transformer models combined with SVM scored the worst, owing to SVM's limitations with high-dimensional embeddings and its difficulty handling large datasets; ViT-PCA-SVM registered the worst results in this group, with 88.889 accuracy, 88.925 precision, 88.889 recall, and an 88.885 F1-score.

The proposed MST-EDS model improved accuracy by about 2.4 percentage points over the best hybrid model, Swin-PCA-RF (97.163 vs. 94.799), and by about 4.0 points over Swin-RF (93.144), because stacking the outputs of Swin-PCA-RF, ViT-PCA-RF, and DeiT-PCA-RF with RF as a meta-learner learns an effective combination of the base models' predictions, improving generalization and performance. MST-EDS-RF recorded the best performance of all models, with 97.163 accuracy, 97.168 recall, 97.163 precision, and a 97.164 F1-score.
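The stacking step can be sketched as follows, assuming scikit-learn: one hybrid base model (PCA at 95% variance followed by RF) per feature view, whose class probabilities are concatenated into meta-features for an RF meta-learner. The synthetic three-view data stands in for Swin, ViT, and DeiT features, and the use of out-of-fold probabilities (via `cross_val_predict`) for the training meta-features is our design choice to avoid leaking in-sample predictions; the text does not specify how the stacked training set was produced.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import cross_val_predict, train_test_split
from sklearn.pipeline import make_pipeline


def make_hybrid(seed=0):
    """Hybrid base model: PCA (95% variance) followed by RF."""
    return make_pipeline(
        PCA(n_components=0.95, svd_solver="full"),
        RandomForestClassifier(n_estimators=100, max_depth=10, random_state=seed),
    )


def stack_features(views_train, views_test, y_train, cv=5):
    """Build meta-features from the class probabilities of one hybrid model per view."""
    meta_train, meta_test = [], []
    for X_tr, X_te in zip(views_train, views_test):
        base = make_hybrid()
        # Out-of-fold probabilities, so the meta-learner never sees in-sample predictions.
        meta_train.append(cross_val_predict(base, X_tr, y_train, cv=cv, method="predict_proba"))
        base.fit(X_tr, y_train)  # refit on the full training set for test-time predictions
        meta_test.append(base.predict_proba(X_te))
    return np.hstack(meta_train), np.hstack(meta_test)


# Hypothetical data: three 20-d "views" stand in for Swin, ViT, and DeiT features.
X, y = make_classification(n_samples=400, n_features=60, n_informative=30, n_redundant=0,
                           n_classes=4, class_sep=2.0, random_state=0)
views = [X[:, 0:20], X[:, 20:40], X[:, 40:60]]
idx_train, idx_test = train_test_split(np.arange(len(y)), test_size=0.25,
                                       stratify=y, random_state=0)
views_train = [v[idx_train] for v in views]
views_test = [v[idx_test] for v in views]

meta_train, meta_test = stack_features(views_train, views_test, y[idx_train])
meta_learner = RandomForestClassifier(n_estimators=100, max_depth=10, random_state=0)
meta_learner.fit(meta_train, y[idx_train])
stacked_acc = accuracy_score(y[idx_test], meta_learner.predict(meta_test))
print("meta-feature shape:", meta_train.shape)
print("stacked accuracy:", round(stacked_acc, 3))
```

Using `predict_proba` rather than hard labels gives the meta-learner each base model's confidence; with four classes and three base models, each sample yields 12 meta-features.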

The experimental results show that ML models improve the performance of MST-EDS by effectively leveraging the deep, high-dimensional feature representations produced by the transformer models ViT, Swin, and DeiT. Unlike the traditional softmax classifier, which applies a shallow, linear decision layer, ML models such as RF and SVM can capture complex, non-linear patterns within the PCA-reduced feature space, in which redundancy is reduced and the most informative directions of variation are retained. These models offer greater robustness and improved classification accuracy.
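A toy illustration of the linear-versus-non-linear point: logistic regression, which in the multiclass case is a linear softmax classifier (here the two-class special case), is compared with an RF on two concentric rings that no straight line can separate. The data are synthetic and chosen only to illustrate the argument; they say nothing about the transformer features themselves.

```python
from sklearn.datasets import make_circles
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Two concentric rings: the classes cannot be separated by any straight line.
X, y = make_circles(n_samples=600, noise=0.05, factor=0.5, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=0)

# Logistic regression plays the role of a linear softmax layer on fixed features.
linear_head = LogisticRegression(C=1.0, max_iter=100).fit(X_tr, y_tr)
# RF with the hyperparameters listed in the experimental setup.
forest = RandomForestClassifier(n_estimators=100, max_depth=10, random_state=0).fit(X_tr, y_tr)

linear_acc = linear_head.score(X_te, y_te)
rf_acc = forest.score(X_te, y_te)
print(f"linear (softmax-style) head accuracy: {linear_acc:.3f}")
print(f"random forest accuracy:               {rf_acc:.3f}")
```

The linear head stays near chance on this data, while the forest's axis-aligned splits approximate the circular boundary.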

Overall, combining transformer models, feature fusion, and ensemble learning in a multi-stage framework provides a more sophisticated and effective method for eye disease classification, one that can overcome the shortcomings of traditional softmax classification.

Discussion

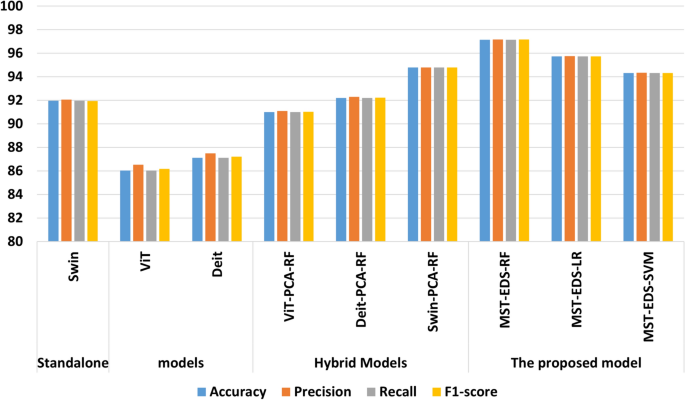

Fig. 7 shows that MST-EDS-RF recorded the highest performance, because stacking the outputs of the hybrid models with an RF meta-learner learns an effective combination of their predictions, improving generalization and performance. This suggests that adopting sophisticated AI tools in the clinic might improve diagnostic accuracy for eye diseases. The experimental results of MST-EDS, which integrates transformer-based models (Swin, ViT, and DeiT) with dimensionality reduction (PCA) and ML classifiers, have several implications for the future development of AI tools in medical diagnostics, particularly for eye disease detection: (1) Multimodal and hybrid design potential: by integrating deep transformer architectures with ML, MST-EDS shows that a hybrid approach can improve performance and generalizability, especially for medical image data. (2) Scalable architecture: the modularity of MST-EDS supports transferability, enabling future researchers and developers to adapt the pipeline to other imaging modalities or medical areas beyond ophthalmology. (3) Clinical value and decision support: thanks to its strong diagnostic performance and its capacity to incorporate multi-input feature representations, MST-EDS has the potential to be a dependable decision support system that helps ophthalmologists detect diseases early and accurately, especially in areas with limited access to experts. These implications indicate that MST-EDS advances technical performance and aligns with the practical needs of scalable, interpretable, and clinically deployable AI solutions.

Performance comparison of standalone, hybrid, and proposed models using accuracy, precision, recall, and F1-Score.

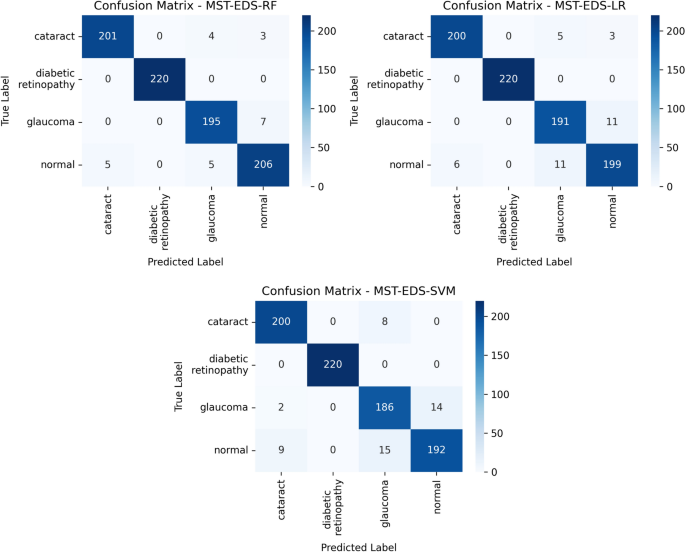

Confusion matrices of MST-EDS-RF, MST-EDS-LR, and MST-EDS-SVM for the four classes. Blue cells show the percentages of true positives (TP) and true negatives (TN); white cells show the percentages of false positives (FP) and false negatives (FN).

Figure 8 shows the confusion matrices of MST-EDS-RF, MST-EDS-LR, and MST-EDS-SVM for the four-class task: cataract, diabetic retinopathy, glaucoma, and normal. All three models classify diabetic retinopathy with high accuracy. Glaucoma appears to be the most challenging class, with the most misclassifications. MST-EDS-RF performs slightly better than LR and SVM, with lower misclassification rates.
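Per-class percentages in a confusion-matrix plot like Fig. 8 can be obtained with scikit-learn's `confusion_matrix(..., normalize="true")`, which divides each row by the number of true samples in that class, so the diagonal holds per-class recall and the off-diagonal cells the misclassification rates. Whether Fig. 8 uses row normalization is an assumption, and the labels below are hypothetical.

```python
import numpy as np
from sklearn.metrics import confusion_matrix

CLASSES = ["cataract", "diabetic_retinopathy", "glaucoma", "normal"]

# Hypothetical ground truth and predictions (two images per class).
y_true = ["cataract", "cataract", "diabetic_retinopathy", "diabetic_retinopathy",
          "glaucoma", "glaucoma", "normal", "normal"]
y_pred = ["cataract", "glaucoma", "diabetic_retinopathy", "diabetic_retinopathy",
          "glaucoma", "normal", "normal", "normal"]

# Raw counts: rows are true classes, columns are predicted classes.
counts = confusion_matrix(y_true, y_pred, labels=CLASSES)
# Row-normalized percentages: each row sums to 100 and the diagonal is per-class recall.
percent = 100 * confusion_matrix(y_true, y_pred, labels=CLASSES, normalize="true")

# Share of each true class that was assigned to some other class.
misclassification = 100 - np.diag(percent)
for cls, row, miss in zip(CLASSES, percent, misclassification):
    print(f"{cls:22s} {np.round(row, 1)}  misclassified: {miss:.1f}%")
```

Reading along a row shows where a class's errors go; in this toy case, half of the glaucoma images are predicted as normal.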

Comparison with literature studies

Table 8 compares the proposed model with studies in the literature that use the EDC dataset, which was collected from various sources such as IDRiD, Ocular recognition, HRF, retinal_dataset, and DRIVE. The MST-EDS-RF model improves performance by integrating the hybrid models as base models with an RF meta-model, achieving the highest accuracy, 97.163. The advantages of this design are: (1) Model diversity: different transformer models (ViT, DeiT, and Swin) provide varied feature representations. (2) Dimensionality reduction: PCA reduces dimensionality while retaining the most informative components of the data. (3) Stacking ensemble: combining the base models improves performance and generalization. Although the proposed model offers high accuracy and reliability, its reliance on large labeled datasets and its computational complexity pose challenges for real-time deployment. As the table shows, MST-EDS-RF outperforms the other studies that use the EDC dataset. In26, the authors applied EfficientNet and CNN models and achieved accuracies of 94 and 84, respectively. In27, the authors applied BayeSVM500 and achieved an accuracy of 95.33. The study by28 deployed an ensemble approach that reached an accuracy of 96.1 and compared it with EfficientNetB6 and DenseNet169, which recorded accuracies of 88.3 and 93.9, respectively. In53, EfficientNetB3 was used, yielding an accuracy of 93.8.
