Machine learning for improved density functional theory thermodynamics

Enthalpy of formation

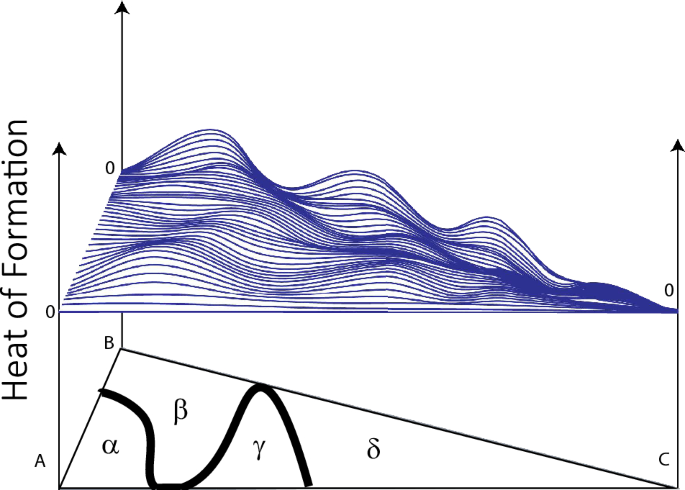

Though it is straightforward to calculate phase equilibria at given external conditions (temperature and pressure) through Gibbs or Helmholtz free energy calculations, such calculations can be complex and time-consuming at high temperatures due to contributions from phonons, anharmonic atomic vibrations, and other effects. Additionally, treating phonons in alloys requires a sophisticated approach. Therefore, in this paper, we focus on the ambient-temperature regions of phase diagrams, which requires only fast calculations without treatment of phonons (or magnons) that typically are done at 0 K.

To determine phase stability at ambient conditions, one needs the total energy of a specific phase as well as all competing phases that may form. We consider the simplest case prone to DFT errors relative to experimental values-namely, the enthalpy of formation (\(H_f\)) of each material. This enthalpy is determined from the DFT total energy relative to the most stable elemental structures as follows:

$$\begin{aligned} H_f (A_{x_A}B_{x_B}C_{x_C}\cdots ) = H(A_{x_A}B_{x_B}C_{x_C}\cdots ) – x_A H(A) -x_B H(B) – x_C H(C) – \cdots \end{aligned}$$

(1)

where \(H(A_{x_{A}}B_{x_{B}}C_{x_{C}})\) is the enthalpy per atom of the intermetallic compound or alloy, and H(A), H(B), and H(C) are the enthalpies per atom of the elements A, B and C in their ground-state structures. In this work we consider systems with maximum three elements, with A, B and C to be among the Al, Ni, Ti, and Pd. The ground-state structures of these elements are fcc-Al, fcc-Ni, fcc-Pd, and hcp-Ti, where fcc stands for face-centered cubic and hcp stands for hexagonal close-packed. Furthermore, \(x_{A}\), \(x_{B}\), and \(x_{C}=1-x_A-{x}_{B}\) are the concentration of elements A, B, and C, respectively. When compared to experimental values of the enthalpy of formation, the error inherent in DFT based calculations of \(H_f\) is unfortunately too large to enable a predictive capability to determine the relative stability of competing phases. It is the purpose of this work to outline a way to reduce this error.

Total energy calculations

Total energies based on DFT are calculated using the exact muffin-tin orbital (EMTO) method16,17 in combination with the full charge density technique18 at zero temperature and pressure and without zero-point motion. The chemical disorder is treated within the coherent potential approximation (CPA)19,20 (EMTO-CPA21). The electrostatic correction to the single-site CPA is considered as implemented in the Lyngby version of the EMTO code22. For details, the reader is referred to Refs22,23,24.. The one-electron Kohn–Sham equations are solved within the soft-core and scalar-relativistic approximations, with \(l_\textrm{max} = 3\) for partial waves and \(l_\textrm{max} = 5\) for their “tails”. The Green’s function is calculated for 16 complex energy points distributed exponentially on a semi-circular contour including states within 1 Ry below the Fermi level. The exchange-correlation effects are described within the Perdew–Burke–Ernzerhof25 version of the generalized gradient approximation. The 0 K theoretical equilibrium lattice parameter for each system is determined from a Morse type of equation of state26 fitted to the ab initio total energies of the experimentally reported structures for five different atomic volumes. The heat of formation is calculated at the theoretical equilibrium volume of all systems used in Eq. 1. To ensure the convergence of total energy and volume calculations, the Monkhorst–Pack k-point mesh27 is set to \(17 \times 17 \times 17\) within the irreducible wedge of the Brillouin zone for the cubic systems. For non-cubic structures, the k-point mesh is scaled according to the b/a and c/a ratios.

Machine learning

To improve the accuracy of first-principles calculations for a multicomponent compound or alloy formation enthalpies, we have developed a simple linear and a more involved neural network model, that are used here to predict the errors between computed and experimental enthalpies of formation for binary and ternary alloys. Each material is characterized using a structured set of input features, including elemental concentrations, atomic numbers, and interaction terms. A training dataset of reliable experimental values of the enthalphy of formation is initially filtered to exclude missing or unreliable enthalpy values, ensuring that only well-defined data points are used for training of the neural network. The input features are also normalized to prevent variations in scale from affecting model performance. The details of this are outlined below.

For a given material composed of elements \(A, B, C, \ldots\), the elemental concentration vector is defined as:

$$\begin{aligned} \mathbf{x} = [x_A, x_B, x_C, \ldots ] \end{aligned}$$

(2)

where \(x_i\) represents the atomic fraction of element \(i\). Additionally, atomic numbers are incorporated as weighted features:

$$\begin{aligned} \mathbf{z} = [x_A Z_A, x_B Z_B, x_C Z_C, \ldots ] \end{aligned}$$

(3)

where \(Z_i\) is the atomic number of element \(i\). To capture interatomic effects, second-order (pairwise) and third-order (triplet) interaction terms are introduced:

$$\begin{aligned} x_{ij} = x_i x_j, \quad x_{ijk} = x_i x_j x_k \end{aligned}$$

(4)

for all unique pairs and triplets of elements. The final feature set consists of the original concentrations, weighted atomic numbers, and interaction terms:

$$\begin{aligned} \mathbf{X} = [x_A, x_B, x_C, \ldots , x_A Z_A, x_B Z_B, x_C Z_C, \ldots , x_{AB}, x_{AC}, x_{BC}, \ldots , x_{ABC}, x_{ABD}, \ldots ] \end{aligned}$$

(5)

The error inherent in DFT calculations (that has its origin from the approximation used for the exchange and correlation functional) can be quantified as the difference between the experimental and theoretical determined enthalpy of formation. Hence, we introduce the term \(H_{\text {corr}}\) as

$$\begin{aligned} H_{\text {corr}}=H_{\text {DFT}} – H_{\text {expt}}, \end{aligned}$$

(6)

and we strive here to use machine learning algorithms to make good estimates of \(H_{\text {corr}}\) when experimental data (\(H_{\text {expt}}\)) are missing. For a simple linear model, the predicted enthalpy correction \(H_{\text {corr}}\) is obtained as a linear combination of the features \(\mathbf{X}\) and the model parameters \(\theta\), which include the weight coefficients \(w_i\) and the bias term \(b\), extracted via a standard least-squares fit:

$$\begin{aligned} H_{\text {corr}} &= b + w_1 x_A + w_2 x_B + w_3 x_C + \cdots , w_4 (x_A Z_A) + w_5 (x_B Z_B) + w_6 (x_C Z_C)\\ &\quad+ \cdots , w_7 x_{AB} + w_8 x_{AC} + w_9 x_{BC} + \cdots , w_{10} x_{ABC} + w_{11} x_{ABD} + \cdots \end{aligned}$$

(7)

This can be expressed more compactly in matrix notation:

$$\begin{aligned} H_{\text {corr}} = \mathbf{w}^T \mathbf{X} + b \end{aligned}$$

(8)

where \(\mathbf{w}\) is the vector of weight coefficients, \(\mathbf{X}\) is the vector of input features, \(b\) is the bias term. Results from this simplistic approach to estimating \(H_{\text {corr}}\) are analyzed below and compared to data obtained from more advanced ML algorithms that undergo supervised training. The details of one such ML method are described below.

A neural network model has been implemented as a multi-layer perceptron (MLP) regressor with three hidden layers containing up to 250, 150, and 100 neurons, respectively. The predicted enthalpy correction, \(H_{\text {corr}}\), as defined in Eq. 6, is obtained as:

$$\begin{aligned} H_{\text {corr}} = f(\mathbf{X}, \theta ), \end{aligned}$$

(9)

where \(f\) represents the neural network function with learnable network parameters \(\theta\). We investigate here if a neural network can result in values of \(H_{\text {corr}}\) as given by Eq. 9 that capture the values given in Eq. 6, and if such a neural network can make accurate predictions of \(H_{\text {corr}}\) when experimental data are missing. The total DFT corrected enthalpy is given by

$$\begin{aligned} H_{\text {pred}} = H_{\text {DFT}} -H_{\text {corr}}, \end{aligned}$$

(10)

where \(H_{\text {DFT}}\) is the enthalpy from DFT calculations. As is demonstrated below, a neural network that is trained for certain concentrations of a ternary system where \(H_{\text {expt}}\) is known, can give reliable values for \(H_{\text {pred}}\) even for concentrations where \(H_{\text {expt}}\) is missing.

Overfitting in the training steps has been controlled through several strategies: 1. Leave-one-out cross-validation (LOOCV) that ensures that each data point is tested individually, preventing memorization of training data; 2. Feature selection that ensures avoidance redundant descriptors; and 3. Early stopping that prevents excessive weight updates once validation performance stabilizes, avoiding unnecessary complexity. Although feature selection was not performed through explicit statistical filtering methods such as variance thresholding, mutual information ranking, or regularization-based elimination, the neural network architecture (MLPRegressor) performs implicit feature selection during training. Features that contribute less to reducing the loss function naturally receive lower weights through internal weight optimization. Furthermore, the set of features used in this study is physically motivated, based on stoichiometric descriptors and elemental properties such as atomic number. This compact and meaningful feature design further enhances interpretability.

The model’s predictive performance has been evaluated using the root-mean-square error (RMSE) across both LOOCV and k-fold cross-validation (in this work we used five folds). The final trained model, along with feature scaling parameters, has been saved for future predictions. This approach enhances the accuracy of computed formation enthalpies while maintaining interpretability in terms of elemental interactions, providing a physics-informed correction to DFT calculations for alloy thermodynamics.

link