Spatial gene expression at single-cell resolution from histology using deep learning with GHIST

Datasets and preprocessing

Subcellular in situ spatial transcriptomics data

-

(i)

BreastCancer1 and BreastCancer2—The 10x Xenium breast cancer datasets included in this study were downloaded from (accessed 14 March 2024). BreastCancer1 was characterized as T2N1M0, stage II-B, ER+/HER2+/PR−. BreastCancer2 was characterized as stage pT2 pN1a pMX, ER−/HER2+/PR−. The H&E image was aligned with its corresponding DAPI image for each dataset and visually verified. The overall pixel dimensions (h × w) of the H&E images were 25,778 × 35,416 for BreastCancer1, and 24,890 × 34,142 for BreastCancer2.

Nuclei were segmented from the H&E images using Hover-Net41 that was pretrained on the CoNSeP dataset. The PyTorch weights of the segmentation-only model were downloaded from the official GitHub repository. The H&E WSIs were divided into nonoverlapping patches that were 3,000 × 3,000 pixels in size (or smaller patch sizes along the WSI border for the remaining pixels), and Hover-Net was applied to the patches. Nuclei detected from two adjacent patches that overlap along the patch borders were merged to form a single nucleus, to avoid division of a nucleus into more than one segment. Other segmentation algorithms including Otsu and StarDist45 were also compared, and best results were obtained using Hover-Net (Supplementary Fig. 18).

BIDCell46 was applied to the transcripts and DAPI data to segment cells in the Xenium datasets and extract the gene expression within whole cells. Low-quality transcripts for Xenium data with a phred-scaled quality value score below 20 were removed, as suggested by the vendor5. Irrelevant data including negative control transcripts, blanks and antisense transcripts were filtered out. There were 313 unique genes for BreastCancer1 and 280 genes for BreastCancer2. For BIDCell, default parameter values from the exemplar file for Xenium and the provided single-cell reference file were used (both files were downloaded from the official BIDCell repository ( version 1.0.3).

To find the corresponding gene expression of cells detected from the subcellular SGE data and cells detected from H&E, the amount of overlap between nuclei from the two data types was computed. An H&E nucleus had a matching subcellular SGE nucleus if the amount of overlap between two corresponding nuclei was at least 50% of the area of the H&E nucleus. Cells with zero total expression counts, cells classified as ‘unassigned’ by scClassify and those with a nucleus smaller than 10 μm2 were filtered out. This resulted in a total of 94,000 cells with single-cell expression for BreastCancer1, and 80,000 cells for BreastCancer2. The count matrix output for the segmented cells from BIDCell was used to classify the cell types.

-

(ii)

LungAdenocarcinoma—The 10x Xenium lung adenocarcinoma dataset with multimodal cell segmentation was downloaded from (accessed 21 February 2024). The H&E image was aligned with its corresponding DAPI image for each dataset and visually verified. The overall pixel dimensions (h × w) of the H&E image were 17,098 × 51,187. There were 377 unique genes in this dataset.

The same process used to segment nuclei from the H&E images for the breast cancer datasets was applied to the LungAdenocarcinoma dataset. We used the cell segmentation provided in the downloaded data bundle, which was achieved through the multimodal cell segmentation pipeline from 10x Genomics.

The same process was used to determine the corresponding cells detected in H&E and subcellular SGE data and perform filtering of cells for the LungAdenocarcinoma dataset as the breast cancer datasets. For LungAdenocarcinoma, overlap was calculated with cells in the SGE data, rather than nuclei. This resulted in a total of 89,000 H&E cells with single-cell expression for LungAdenocarcinoma. The count matrix provided in the downloaded data bundle was used to classify the cell types.

-

(iii)

Melanoma—The 10x Xenium melanoma dataset was downloaded from (accessed 4 April 2024). The H&E image was aligned with its corresponding DAPI image for each dataset and visually verified. The overall pixel dimensions (h × w) of the H&E image were 27,276 × 31,262. There were 382 unique genes in this dataset.

-

(iv)

BreastCancerILC and BreastCancerIDC—The 10x Xenium invasive lobular carcinoma (ILC) and invasive ductal carcinoma (IDC) datasets were downloaded from (accessed 22 January 2025). The overall pixel dimensions (h × w) of the H&E image were 41,405 × 48,511 for BreastCancerILC and 53,738 × 48,376 for BreastCancerIDC. There were 280 unique genes in these two datasets.

The same data processing steps as for the other Xenium datasets were applied to the melanoma dataset. For this dataset, we used the gene expression within nuclei. After filtering, there were 47,000 H&E cells with single-cell expression. The count matrix for the segmented nuclei was used to classify the cell types.

scRNA-seq reference datasets used in cell-type classification

For each dataset, we used scClassify47 version 1.12.0, a supervised single-cell annotation tool, to annotate the cell types of each cell, which uses reference data with known cell types. We compared scClassify with other cell-type annotation tools including ScType48, SingleR49 and clustifyr50 and found scClassify produced the best result (Supplementary Fig. 19). The following scRNA-seq references are used for building the pretrained model for cell annotations.

-

Single-cell breast reference—a publicly available breast cancer dataset from 10x Genomics () as the reference to annotate the breast sample slides. The processing of the dataset is the same as detailed in our previous publication46. We compared the result using this reference with another breast cancer reference dataset51 that we obtained from the CZI CELLxGENE single-cell data portal ( We compared two different settings using a broader cell-type category provided in the Wu et al. data that contains 7 cell types and a finer cell-type category provided in the Wu et al. data that contains 27 cell types. We named the data as Wu et al. major and Wu et al. minor, respectively. We found no noticeable differences compared to using the default reference data46 (Supplementary Fig. 20).

-

Single-cell melanoma reference—we used the preprocessed melanoma dataset obtained from Durante et al.52 as it contains comprehensive annotations. We used the annotation provided, and grouped ‘class 1A primary tumor cells’, ‘class 2 PRAME+ primary tumor cells’, ‘class 1B PRAME+ Met tumor cells’ and ‘class 2 PRAME- primary tumor cells’ into ‘tumor’; ‘M2 macrophages’, ‘M1 macrophages’ and ‘monocyte’ into ‘macrophages’; ‘CD8, T effector memory’, ‘CD8, T resident memory’, ‘cytotoxic CD8’, ‘naive T cells’ and ‘regulatory T cells’ into ‘T cells’.

-

Single-cell lung reference—we used the preprocessed lung atlas data, published by Sikkema et al.53, obtained from the CZI CELLxGENE data portal ( It is one of the largest collections of single-cell lung data. We used the level 2 annotation in the ann_level_2 metadata column. We selected the main cell types: ‘blood vessels’, ‘airway epithelium’, ‘alveolar epithelium’, ‘fibroblast lineage’, ‘lymphatic endothelial cells’, ‘lymphoid’, ‘myeloid’ and ‘smooth muscle’. We grouped ‘airway epithelium’ and ‘alveolar epithelium’ into ‘epithelium’.

HER2ST spatial transcriptomics dataset

The HER2ST dataset23 was measured using SRT to investigate SGE in HER2+ breast tumors, from which the original investigators discovered shared gene signatures for immune and tumor processes. The dataset consists of 36 samples of HER2+ breast tissue sections from eight individuals.

For the histology images, 224 × 224-pixel patches were extracted around each sequencing spot. For GHIST, the patches were center-cropped at 112 × 112 pixels and resized to 256 × 256 pixels, so that the resolution is suitable for the nuclei segmentation model trained on NuCLS, and to be consistent with single-cell settings. For the SGE data of each tissue section, the top 1,000 HVGs for each section were considered and those genes that were expressed in less than 1,000 spots across all tissue sections were excluded. This resulted in 785 genes for the training of all models.

NuCLS datasets

The NuCLS dataset54 was used to provide cell segmentation and cell-type labels for the HER2ST dataset, to enable the training of GHIST to predict spot-level gene expression. The NuCLS dataset was downloaded from (accessed 18 February 2024), and contains annotations of over 220,000 nuclei from breast cancer H&E images from TCGA. The annotations include a mixture of region-level (rectangular) masks and nuclei-level (outlining nuclei boundary) masks for individual nuclei. The annotations were created through a crowdsourcing approach involving pathologists, pathology residents and medical students. There were 1,744 H&E patches in the dataset. The ‘classes’ level of annotations were used as cell-type labels for nuclei. The classes include six cell types: ‘lymphocytes’, ‘macrophages’, ‘stromal cells’, ‘plasma cells’, ‘tumor cells’ and ‘other’.

The original nuclei masks provided in the NuCLS were unsuitable to be directly used for segmentation training, due to the presence of coarse rectangular nuclei masks. Hover-Net (pretrained on the CoNSeP dataset, weights downloaded from the official GitHub repository) was applied to the NuCLS H&E patches to generate fine-grained nuclei-level masks (as pseudo ground-truth masks). Due to inconsistencies in the sizes of H&E patches, four 270 × 270 patches were cropped from each image, from each corner of the original patch, and used as input to perform segmentation.

For every ground-truth nucleus, centroids of pseudo nuclei within the area of the ground-truth nucleus mask were found. The pseudo nucleus with the centroid closest by Euclidean distance to the centroid of the ground-truth nucleus was deemed the corresponding nucleus. The ground-truth cell-type label was assigned to the matched pseudo nucleus mask and used to train Hover-Net from scratch to perform simultaneous nuclei segmentation and predict the cell type of the six types in the labels. Training was performed with a random training split of 90% of available patches, and default model hyperparameters were applied. The model was trained for a total of 100 epochs; only decoders for the first 50 epochs, and all layers for another 50 epochs, per the default settings. The model from the final epoch was applied to the HER2ST data to generate labels to train GHIST.

TCGA-BRCA dataset

Data from TCGA-BRCA55 were used to evaluate model generalizability. This dataset was chosen as the predictions on these H&E images could be evaluated through the matched gene expression, survival data and other ‘omics’. TCGA-BRCA data were downloaded using the TCGAbiolinks package56 version 2.29.6.

RNA-seq data were obtained by the following query options: project = ‘TCGA-BRCA’, data.category = ‘transcriptome profiling’, data.type = ‘gene expression quantification’, experimental.strategy = ‘RNA-seq’ and workflow.type = ‘STAR – counts’. Histology images were obtained by the following query: project = ‘TCGA-BRCA’, data.category = ‘biospecimen’, data.type = ‘slide image’, and experimental.strategy = ‘diagnostic slide’. Clinical (metadata) with variables for defining breast cancer subtypes for samples were downloaded from the TCGAretriever package version 1.9.1. SCNAs were downloaded from UCSC Xena (Genomic Data Commons (GDC) TCGA Breast Cancer).

In our study, we mostly focused on the HER2+ breast cancer subtype from TCGA-BRCA, as this was the subtype of the data (BreastCancer2 and HER2ST) used to train the model and is of clinical interest due to its aggressive nature. We selected the HER2+ individuals based on a positive entry in the ‘lab_proc_her2_neu_immunohistochemistry_receptor_status’ metadata column57. We supplemented our analysis using the luminal breast cancer subtype, which are selected based on either a positive entry in ‘breast_carcinoma_estrogen_receptor_status’ with a positive entry in ‘breast_carcinoma_progesterone_receptor_status’ or a positive entry in ‘breast_carcinoma_estrogen_receptor_status’ with a negative entry in ‘breast_carcinoma_progesterone_receptor_status’.

Overall we considered 92 TCGA HER2+ samples and 461 TCGA individuals with the luminal subtype consisting of matched images, RNA-seq data, somatic copy number data and clinical data. For H&E processing, images were read using the OpenSlide-Python library, and nonoverlapping 256 × 256 patches were then cropped from the highest resolution level of the WSIs. We also compared different patch sizes and found 256 achieved the best results (Supplementary Fig. 21). The patches were filtered out if the sum of the RGB values were greater (or more white) than a patch with RGB (220, 220, 220). Nuclei were segmented from the patches using Hover-Net.

During inference, we found that it was necessary to apply normalization to the H&E images for both nuclei segmentation (Hover-Net) and single-cell gene expression prediction (GHIST). We found that the stain color affected the number of nuclei that Hover-Net predicted. The torchstain package (version 1.3.0)58 was used to apply the Macenko normalization method59 to each H&E patch. The reference was an unnormalized patch from a TCGA-BRCA H&E WSI for which Hover-Net performed well visually. Macenko stain normalization was also applied to the original TCGA H&E patches when input to GHIST. Here, the reference image was the whole H&E image from Xenium, downsampled such that 1 pixel = 10 μm.

Mixed DCIS cohort

Ductal carcinoma in situ (DCIS) and invasive breast cancer tissue from 44 females who were diagnosed with invasive breast cancer was used to construct duplicate tumor microarray data (Supplementary Table 4). The breast cancer tissue was obtained from the Australian Breast Cancer Tissue Bank. This tissue was obtained with informed consent from the donors and the use of these tissues for unspecified future research use received ethical and governance approval. Their use in this project was reviewed and approved by the Western Sydney Local Health District Human Research Ethics Committee (approval number 2019/ETH02688).

These cases are considered mixed DCIS because the patient was diagnosed with DCIS tissue and adjacent invasive breast cancer at the same time. Separate cores containing DCIS only and IDC only were both included on this tumor microarray. The histological status in each core and section was confirmed by an experienced specialist breast pathologist. These individuals were diagnosed between 2006 and 2010, and their ages ranged from 29 to 79 years at the time of diagnosis. It was the first breast disease event for 43 of the 44 patients. It was the second breast disease event for one patient whose prior breast cancer details are unknown. Having had prior breast cancer did not exclude a patient from eligibility for this cohort. All 44 individuals were diagnosed with invasive breast cancer, with DCIS also present. Twenty-nine of the individuals had a primary histologic diagnosis of invasive ductal carcinoma, one had a primary histologic diagnosis of apocrine carcinoma, and 14 were given a primary histologic diagnosis of infiltrating carcinoma not otherwise specified. One of the individuals had bilateral invasive carcinoma whereby both breasts were affected with infiltrating carcinoma not otherwise specified. Regarding the histopathological grade of the 44 invasive carcinomas, 2 tumors were classed grade 1, 15 were classed as grade 2 and 27 were classed as grade 3. Tumors had varying ER, PR and HER2 status.

Spatially resolved single-cell Gene expression from HISTology

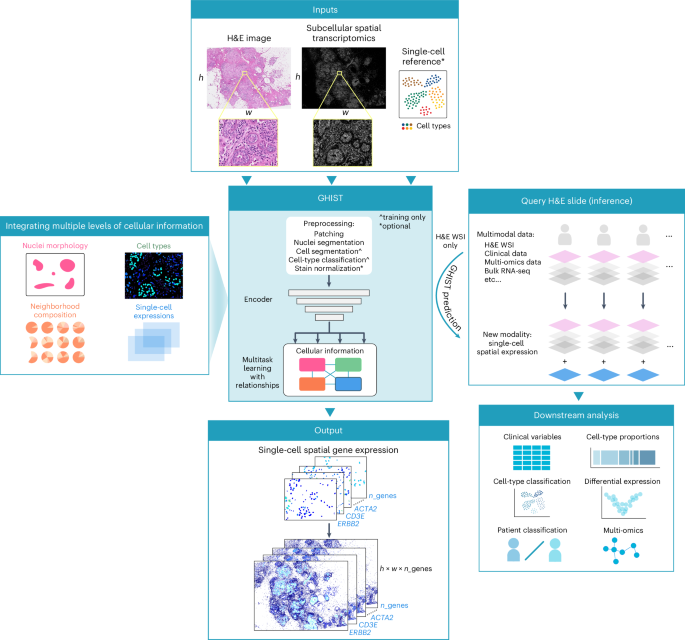

Overview of GHIST

GHIST is a deep learning framework that considers the relationships between multiple layers of biological information to predict spatially resolved gene expression within individual cells from visual information captured in H&E-stained histology images. GHIST uses two types of input data at inference: (i) H&E images, and (ii), optionally, average gene expression profiles of cell types from reference datasets, such as the Human Cell Atlas. During training, subcellular SGE data are used to quantify single-cell gene expression for the paired H&E image to enable the framework to learn relationships between H&E images and gene expression within cells. Once trained, the framework can infer single-cell SGE from H&E images without requiring SGE data.

A major innovation in developing GHIST is the harnessing of the complex relationships between the histological appearance of cells, gene expression within cells, cell type and composition of neighboring cell types. This multilayered approach enhances the capacity of the framework to better discern the SGE patterns reflected by H&E, thereby facilitating greater biological insights. During training, cell-type and neighborhood composition information are derived from subcellular SGE data, which form the labels to train GHIST to predict this information from H&E-stained images. Hence, their interrelationships become captured in the trained model. During inference, GHIST predicts this additional information from H&E-stained images and uses it to improve predicted gene expression. The primary task of GHIST is spatially resolved gene expression prediction within individual cells, and this is supported by three auxiliary helper prediction tasks (morphology, cell-type and neighborhood composition):

-

(A)

Visual information that describes nuclei morphology and cell type. The visual appearance of tissues, cells and nuclei reflects underlying biological information. GHIST extracts information regarding nuclei morphology, texture and the appearance of the environment and neighboring cells from H&E images. This is learned through a backbone network that performs joint nuclei segmentation and classification. Nuclei-level and patch-level features from the backbone are combined. These features are fed into two auxiliary (cell-type and neighborhood composition) and primary prediction tasks downstream, so that these tasks receive a comprehensive embedding of information from H&E images.

The backbone is parameterized by a set of learnable parameters θ of a deep learning segmentation model. The backbone predicts the probability of cell-type classes and background at each pixel. In this way, the model learns the relationships between pixels with morphology and cell type. We used the popular UNet 3+60 (without the deep supervision component) as the backbone of our framework to perform nuclei segmentation and classification. This backbone architecture may be swapped out for other segmentation architectures. The architecture comprised an encoding branch and decoding branch with five levels of feature scales, and incorporated full-scale skip connections that combined low-level details with high-level features across different scales (resolutions).

-

(B)

Cell-type information. The level of expression of genes in a cell is directly related to its cell type, as different cell types are known to be associated with distinct expression profiles and different marker genes. GHIST harnesses the relationship between gene expression and cell type by carrying out cell-type prediction as an auxiliary task in its multitask framework. This serves three key purposes: (i) incentivizes the extraction of visual features that target cell type, which implicitly informs the gene expression profile of the cell; (ii) provides an alternative means of assessing and penalizing the predicted expression during training; and (iii) assists with the estimation of the composition of cell types within a local neighborhood (see ‘Neighborhood characteristics’ below). As a result, the framework can also better extract meaningful features that improve the prediction of gene expression.

To achieve point (ii), during training, the predicted gene expression is used as input to another component in the framework to predict cell type. This allows the framework to learn single-cell expression values that reflect characteristic profiles that correspond to different cell types.

-

(C)

Neighborhood characteristics. Cells are known to exhibit particular patterns of spatial organization in tumors, with different compositional patterns in local neighborhoods61,62. Thus, we incorporate a module that learns to estimate the neighborhood cell-type composition of a given input patch as a further type of auxiliary output prediction. This provides two key benefits: (i) ensures that the combination of low-level features from individual cells is coherent with the local neighborhood, and (ii) aids with discerning cell types that are visually challenging to distinguish in H&E images. As an example in breast cancer, B cell and T cell nuclei exhibit remarkably similar visual characteristics. B cells tend to be mistaken for T cells when the frequency of T cells is relatively dominant. These cell types often occur together in local neighborhoods. We leverage this knowledge in GHIST by using the estimated neighborhood composition to capture a more comprehensive view of the cellular environment in H&E images.

-

(D)

Spatially resolved gene expression in individual cells. The integration of the three components above allows the main task, single-cell SGE prediction, to be facilitated by the different interrelated biological information. Once trained, GHIST predicts the expression of hundreds of genes for every nuclei detected in an H&E image, without requiring additional cell-type, neighborhood composition or SRT information as inputs. GHIST can leverage averaged gene expression profiles of various cell types from single-cell references of the target tissue type as an optional input (ablation results in Supplementary Figs. 1 and 2). The selection of the reference data is flexible, as GHIST does not require a matched single-cell reference for the same sample of interest, the cell types do not need to align with the cell types being predicted, multiple reference datasets may be used, and some reference datasets may contain different cell types.

Extraction of visual information

The input to the model is a cropped patch from the H&E WSIs, \(x\in ^\), where h is the height of the patch and w is the width of the patch, and 3 corresponds to the RGB color channels. All the cells in an input patch are processed simultaneously during one forward pass, thereby allowing the model to flexibly support patches containing an arbitrary number of cells.

The backbone predicts an output of shape \([h,w,_{{}}+1]\), where each element represents the probability of each cell type or background class at each pixel. During training, the backbone is trained to carry out nuclei segmentation and cell-type classification by minimizing the loss function as given by equation (1):

$$_{\rm{Morph}}=-\frac{1}{N}\mathop{\sum }\limits_{i=1}^{N}\mathop{\sum }\limits_{j=1}^{M}{I}_{{ij}}\log ({p}_{{ij}}),$$

(1)

where N is the number of pixels, M is the number of classes (cell types, and background), \({I}_{{ij}}\) is a binary indicator of whether class j is the correct classification for pixel i (1 if true, 0 otherwise), and \({p}_{{ij}}\) is the predicted probability that pixel i belongs to class j. M is only relevant during training, and depends on the granularity of the cell types of interest. In our experiments, we found PCC of predicted SGE with ground truth to reduce for large M (‘Discussion’).

Nuclei-level features are extracted by multiplying nuclei masks for all valid nuclei in the input patch in an element-wise manner to the feature volumes produced by the first and last convolutional layers within the backbone architecture. The resulting features are concatenated along the feature dimension, and then the features within each nuclei are summed and normalized by the size of the nucleus in pixels. This is done to counter the variability in nuclei sizes. This yields a unique feature vector with a dimension of 384. Furthermore, patch-level features are calculated by averaging the same two feature volumes across the patch. Each nucleus-level feature vector is concatenated with the patch-level feature vector of its corresponding patch. This is then processed through two fully connected layers with ReLU activation, to generate the resulting final feature vector that describes the morphology of each nucleus \({x}_{{{\mathrm{nucleus}}}}\in {{\mathfrak{R}}}^{1\times 256}\). This describes nuclei-level and patch-level visual features that are relevant to nuclei morphology, cell type and the local neighborhood from H&E images.

Leveraging cell-type information

The cell-type prediction module uses the extracted visual features of each nucleus, \({x}_{\rm{nucleus}}\), to predict the cell type of the nucleus. The module comprises a fully connected layer (256-dimensional intermediate features), followed by ReLU activation, and another fully connected layer that predicts the probability of each cell type. The argmax function is applied to obtain the predicted classes. This module is trained by minimizing, as given by equation (2):

$${L}_{{{\mathrm{CT}}},{{\mathrm{class}}}}=-\frac{1}{C}\mathop{\sum }\limits_{i=1}^{C}\mathop{\sum }\limits_{j=1}^{M}{I}_{{ij}}\log \left({p}_{{ij}}\right),$$

(2)

where C is the number of cells (that is, nuclei), M is the number of classes (cell types), Iij is a binary indicator of whether class j is the correct classification for cell i (1 if true, 0 otherwise) and \({p}_{{ij}}\) is the predicted probability that cell i belongs to class j.

We introduce another module with the same composition of layers as the cell-type prediction module, with three associated training losses, \({L}_{{{\mathrm{CT}}},{\rm{embed}}}\), \({L}_{{{\mathrm{CT}}},{\rm{logits}}}\) and \({L}_{{{\mathrm{CT}}},{\rm{expr}}}\) (defined below) that work in tandem. This module separately takes as input the predicted expression and ground-truth expression, and predicts the corresponding cell type for each set of input expression values.

-

\({L}_{{{\mathrm{CT}}},{\rm{expr}}}\) is the cross-entropy loss (equation (2)), computed exclusively when the inputs are the predicted expression.

-

\({L}_{{{\mathrm{CT}}},{\rm{embed}}}\) addresses the consistency of intermediate representations derived from the ground-truth and predicted input expression, and is written as shown in equation (3):

$${L}_{{{\mathrm{CT}}},{\rm{embed}}}=\frac{1}{C}\mathop{\sum }\limits_{i=1}^{C}\left(1-\cos ({x}_{{{\mathrm{pr}}},i},{x}_{{{\mathrm{gt}}},i})\right),$$

(3)

where \({x}_{{pr},i}\) is the embedding for the predicted (‘pr’) expression of cell i, and \({x}_{{{\mathrm{gt}}},i}\) is the embedding for the ground-truth (‘gt’) expression of the cell.

-

\({L}_{{{\mathrm{CT}}},{\rm{logits}}}\) addresses the consistency between the predicted cell types derived from predicted and ground-truth gene expression for a given cell as shown in equation (4):

$${L}_{{{\mathrm{CT}}},{\rm{logits}}}=\frac{1}{C}\mathop{\sum }\limits_{i=1}^{C}{\left({p}_{{{\mathrm{pr}}},i}-{p}_{{{\mathrm{gt}}},i}\right)}^{2},$$

(4)

where \({p}_{{{\mathrm{pr}}},i}\) is the output cell-type logits given the predicted expression of cell i, and \({p}_{{{\mathrm{gt}}},i}\) is the output cell-type logits given the ground-truth expression of the cell.

Taken together, predicted single-cell expression is encouraged to resemble profiles that better correspond to cell types. GHIST can work without cell-type labels, but at a cost to performance (Supplementary Figs. 1 and 2). Contributions of LMorph and LCT, class are shown in Supplementary Fig. 22. Generally, LMorph has a small value relative to the other losses and, therefore, a smaller contribution to performance.

Leveraging neighborhood characteristics

GHIST estimates the composition of cell types in each input H&E patch. This module comprises two fully connected layers with ReLU activation, with the last layer outputting the same number of elements as the number of cell types in the training data. Each value represents the proportion of cells of a particular cell type in a patch. SoftMax activation is used to ensure the compositions sum to 1 for each patch. The input to this module is the average of \({x}_{{fv},{\rm{nucleus}}}\) for all nuclei in a patch.

This neighborhood composition (NC) module is trained by minimizing \({L}_{{{\mathrm{NC}}},{\rm{est}}}\) and \({L}_{{{\mathrm{NC}}},{\rm{pr}}}\), calculated for a given patch defined as given by equation (5):

$${L}_{{{\mathrm{NC}}},{\rm{est}}}={D}_{{{\mathrm{KL}}}}\left({P}_{{{\mathrm{est}}}}{\rm{||}}Q\right)=\mathop{\sum }\limits_{j=1}^{M}{p}_{\rm{est}}\log \frac{{p}_{\rm{est}}(j)}{Q(j)},$$

(5)

where \({D}_{{KL}}\) is the Kullback–Leibler (KL) divergence between the cell-type compositions estimated from the neighborhood composition module \({P}_{\rm{est}}\), and the ground-truth compositions Q, and j ranges over all the cell types. Similarly, for a given patch, the loss associated with the neighborhood composition module given the auxiliary predicted cell types according to equation (6):

$${L}_{{{\mathrm{NC}}},{{\mathrm{pr}}}}={D}_{{{\mathrm{KL}}}}\left({P}_{{{\mathrm{CT}}}}{\rm{||}}Q\right)=\mathop{\sum }\limits_{j=1}^{M}{p}_{{{\mathrm{CT}}}}\log \frac{{p}_{{{\mathrm{CT}}}}(j)}{Q(j)},$$

(6)

where \({P}_{{{\mathrm{CT}}}}\) is the composition calculated by summing the predicted cell types (‘Leveraging cell-type information’) and dividing by the total number of cells in a given patch. Thus, further consistency is imposed between the different layers of biological information within the model.

The estimated neighborhood composition provides further utility in accounting for cell types that are difficult to discern from H&E images. For example, B cells and T cells have similar morphology, and thus the model becomes biased toward the dominant cell type (usually T cells), while ignoring the minority cell type (B cells). Based on this, during inference, we use the estimated compositions to recover missing cell types. For cell type t (for example, immune cell types including B cells, myeloid cells and T cells), per equation (7):

$${v}_{t}=\alpha {n}_{\rm{cells}}(\;{p}_{{{\rm{est,t}}}}-{p}_{{{\mathrm{CT}}},t})|{\varPhi }_{t}|,$$

(7)

where \({n}_{\rm{cells}}\) is the number of cells in the patch, \({\varPhi }_{t} \sim N({p}_{\rm{est,t}},1)\) is sampled from a normal distribution, and α is an adjustable hyperparameter to allow different recovery rates. We set α to 2 in our experiments for B, myeloid and T cell types, and T cells initially predicted with high confidence (probability above 0.6) were masked from this mechanism. These values were selected empirically, according to performance on the validation sets. Next, the logits of the initial predictions \({y{\prime} }_{{{\mathrm{CT}}},t}\) for each cell are revised as given by equation (8):

$${y{\prime} }_{{{\mathrm{NC}}},t}={v}_{t}+{y{\prime} }_{{{\mathrm{CT}}},t}.$$

(8)

The final cell type is found by applying argmax across the cell types being refined, while not affecting other cell types. To ensure consistency, the final expression values are taken from the corresponding cell-type-specific prediction module (‘Gene expression prediction’). Disease-specific knowledge may also be incorporated into this mechanism (equations (7) and (8)), for example, decreasing the relative proportion of epithelial cells to malignant cells in invasive breast cancer. We only demonstrate this mechanism on the breast cancer datasets; it was not applied for the melanoma or lung adenocarcinoma datasets.

Spatially resolved gene expression prediction for individual cells

GHIST predicts the expression value of every gene in each cell in the H&E image, \(y{\prime} \in {{\mathfrak{R}}}^{{n}_{\rm{genes}}}\). The number of genes predicted varies according to the size of the gene panel in the available SST data. The datasets used in our study had panel sizes ranging from 280 to 382 genes.

The input to this module is the embedding vector \({x}_{\rm{nucleus}}\in {{\mathfrak{R}}}^{256}\) for all nuclei in a patch (with dimensions = 256), and if used, a set of averaged gene expression profiles of various cell types from single-cell references of the target tissue type \(R\in {{\mathfrak{R}}}^{{n}_{\rm{AvgExp}}\times {n}_{\rm{genes}}}\). The input averaged profiles are optional (see ablation study results in Supplementary Figs. 1 and 2). The reference data do not need to be matched for the same sample of interest, and \({n}_{\rm{AvgExp}}\) can represent a flexible number and categories of cell types from a single or multiple reference datasets (Supplementary Fig. 20). A higher number of cell-type expression profiles provided may improve performance. R is kept the same during training and inference.

GHIST carries out a linear regression to predict y′ by first predicting \(W\in {{\mathfrak{R}}}^{{n}_{{{\mathrm{ref}}}}}\) as given by equation (9):

$$W({x}_{\rm{nucleus}})=({\rm{ReLU}}({x}_{\rm{nucleus}}{W\;}_{r}^{(1)}+{b}_{r}^{(1)})){W}_{r}^{\;(2)}+{b}_{r}^{(2)},$$

(9)

followed by applying SoftMax along the \({n}_{\rm{AvgExp}}\) dimension, where \({W}_{r}^{\;(1)}\in {{\mathfrak{R}}}^{256\times 256},{b}_{r}^{(1)}\in {{\mathfrak{R}}}^{1\times 256},{W}_{r}^{\;(2)}\in {{\mathfrak{R}}}^{256\times {n}_{{{\mathrm{AvgExp}}}}}\) and \({b}_{r}^{(2)}\in {{\mathfrak{R}}}^{1\times {n}_{{{\mathrm{AvgExp}}}}}\). The \({S}_{j}\) is the expression value of gene j calculated by the weighted sum of profiles for each cell as given by equation (10):

$${S}_{j}=\mathop{\sum }\limits_{k=1}^{K}{W}_{k} {R}_{{kj}},$$

(10)

In the setting without using averaged expression profiles as an input is given by equation (11):

$$S\left({x}_{\rm{nucleus}}\right)=\left({\rm{ReLU}}\left({x}_{\rm{nucleus}}{W}_{e}^{\;\left(1\right)}+{b}_{e}^{\left(1\right)}\right)\right){W}_{e}^{\;\left(2\right)}+{b}_{e}^{\left(2\right)},$$

(11)

where \({W}_{e}^{\;(1)}\in {{\mathfrak{R}}}^{256\times 256},{b}_{e}^{(1)}\in {{\mathfrak{R}}}^{1\times 256},{W}_{e}^{\;(2)}\in {{\mathfrak{R}}}^{256\times {n}_{{{\mathrm{genes}}}}}\) and \({b}_{e}^{(2)}\in {{\mathfrak{R}}}^{1\times {n}_{{{\mathrm{genes}}}}}\). Next, information about the spatial neighborhood is incorporated using the estimated cell-type neighborhood composition \({p}_{{est}}\in {{\mathfrak{R}}}^{{n}_{{CT}}}\) of a patch. We use multi-head cross-attention (with eight heads), which are fundamental components in Transformers. For each cell, \({p}_{{est}}\) forms the query, while S forms the key and value.

A linear layer is applied to the output of the multi-head cross-attention module, resulting in \(b\in {{\mathfrak{R}}}^{{n}_{{{\mathrm{genes}}}}}\). Finally, the predicted gene expression of each cell is computed as given by equation (12):

$${y}^{{\prime} }={\rm{ReLU}}\left(S+b\right),$$

(12)

The setting without using single-cell reference as an input is given by equation (13):

$${y}^{{\prime} }={\rm{ReLU}}\left(b\right),$$

(13)

For the spot-based prediction mode, y′ for all nuclei in a patch are summed (for weakly supervised learning) as given by equation (14):

$${y{\prime} }_{{{\mathrm{spot}}}}=\mathop{\sum }\limits_{i=1}^{C}{y{\prime} }_{i},$$

(14)

The training loss associated with gene expression in the default single-cell mode is given by equation (15):

$${L}_{{{\mathrm{GE}}}}=\frac{1}{C}\mathop{\sum }\limits_{i=1}^{C}{\left({y{\prime} }_{i}-{y}_{i}\right)}^{2},$$

(15)

where \({y}_{i}\) is the gene expression for cell i obtained from SST. The spot-based mode is given in equation (16):

$${L}_{\rm{GE}}=\frac{1}{S}\mathop{\sum }\limits_{i=1}^{S}{\left({y{\prime} }_{{\rm{spot}},{i}}-{y}_{i}\right)}^{2},$$

(16)

where \({y{\prime} }_{\rm{spot}}\) is the gene expression for spot i.

When the estimated neighborhood compositions are used to recover missing cell types, a copy of equations (9)–(13) is made for a specific cell type. This component predicts the gene expression of this cell type, \({y{\prime} }_{\rm{adj}}\), which is used for cells that are predicted as cell type t from equation (8). An additional loss, \({L}_{{{\mathrm{GE}}},{\rm{adj}}}\), is calculated to train this component for cell type t as given by equation (17):

$${L}_{{\mathrm{GE}},{\rm{{adj}}}}=\left\{\begin{array}{cc}\frac{1}{C}\mathop{\sum }\limits_{i=1}^{C}{(\;{y}_{{\rm{adj},}{i}}^{{\prime} }-{y}_{i})}^{2} & \,if\,{t}_{gt,i}\,=\,t\\ 0 &{\rm{otherwise}}\end{array}\right.$$

(17)

Model training

The model was trained by minimizing the total loss representing the sum of all the losses over N training patches as given by equation (18):

$$\begin{array}{l}\min\mathop{\sum }\limits_{n}^{N}\left[{L}_{\rm{Morph}}+{L}_{{{\mathrm{CT}}},{\rm{class}}}+{L}_{{\mathrm{{CT}}},{\rm{embed}}}+{L}_{{{\mathrm{CT}}},{\rm{logits}}}+{L}_{{{\mathrm{CT}}},{\rm{expr}}}\right.\\\left.+{L}_{{{\mathrm{NC}}},{\rm{est}}}+{L}_{{{\mathrm{NC}}},{\rm{pr}}}+\frac{1}{{N}_{{{\mathrm{CT}}}}}{L}_{{{\mathrm{GE}}},{\rm{adj}}}+{L}_{{{\mathrm{GE}}}}\right]\end{array}$$

(18)

Due to the relatively small value of \({L}_{{{\mathrm{CT}}},{\rm{embed}}}\) observed during training, it was empirically scaled by a factor of 100. \({L}_{{{\mathrm{GE}}},{\rm{adj}}}\) was inversely scaled by \({N}_{{{\mathrm{CT}}}}\), the number of cell types in the auxiliary prediction. There were no further weightings for the other losses, to improve generalizability and ensure that our losses were not fine-tuned to any particular datasets. We trained the model on varying amounts of data and observed maximum accuracy on the full dataset, as expected (Supplementary Fig. 23).

Practical implementation of GHIST

Cross-validation was carried out for the Xenium and HER2ST datasets. The training set was used to train parameters of the model. The validation set was used to evaluate models during training to allow parameters to be tuned. Results presented in the paper were calculated using the predictions using the test set for each of the folds of the cross-validation. The same cross-validation splits were used for each model to ensure fair comparison.

Fivefold cross-validation was carried out for each Xenium dataset. The whole H&E image in each dataset was horizontally sectioned into five nonoverlapping portions, with each portion serving as the testing data for a fold. The remaining portions formed the training and validation sets, where the validation set was a section that was a tenth of the whole H&E in height. The training portion of a fold was divided into nonoverlapping 256 × 256 patches. During inference, patches were divided such that there was a 30-pixel overlap (approximately the width of the largest nuclei in the data). The final prediction for each distinct nucleus was based on the input image containing the largest area of that particular nucleus.

For the HER2ST dataset, the SGE prediction was evaluated using fourfold cross-validation. The dataset was split into four folds, with samples from the same patient belonging in the same fold. In each iteration of the cross-validation, two folds were used as a training set, one fold used as a validation set and the remaining fold used as a test set. The best model within each fold was then chosen based on the epoch that produced the best PCC in the validation set.

For GHIST, batch size was set to 8 during training and inference. The model is flexible regarding the number of nuclei present in the patch, and outputs predicted gene expression as \({n}_{\rm{{cells}},{\rm{total}}}\times {n}_{{\rm{genes}}}\). The model was trained end-to-end from scratch for 50 epochs. The saved checkpoints (per epoch) were applied to the validation sets, and selection of the top-performing checkpoint was based on the best average of the rank in F1 score (of the auxiliary cell-type classification prediction component) and PCC (of the predicted single-cell expression). The top-performing checkpoint was then applied to the test set of the fold.

Weights of the convolutional layers in GHIST were initialized using the method by He et al.63. Input images were standardized using the mean and standard deviation of the training patches, and gene expression was log-normalized. We used standard on-the-fly image data augmentation by randomly applying a flip (horizontal or vertical), rotation (of 90°, 180° or 270°) in the (x,y) plane. The order of training samples was randomized before training. We used the AdamW optimizer64 to minimize the sum of all losses at a fixed learning rate of 0.001, with a first-moment estimate of 0.9, second-moment estimate of 0.999 and weight decay of 0.0001.

We ran GHIST on a Linux system with a 24-GB NVIDIA GeForce RTX 4090 GPU, Intel Core i9-13900F CPU @ 5.60 GHz with 32 threads, and 32-GB RAM. Using this setup, training was completed within 10 h using BreastCancer2, which contained around 80,000 cells, 850 M pixels and 280 genes. VRAM usage was approximately 21 GB and RAM usage was approximately 10 GB during training. Inference with GHIST was completed after around 20 h on the 92 TCGA-BRCA WSIs (around 63 million cells in total).

Application to TCGA-BRCA

Disease-specific knowledge was incorporated during inference on the TCGA images, due to the disparity between the sample that the model was trained on (which comprised normal ducts, DCIS and invasive carcinoma), and those of the TCGA cohort (all invasive subtypes). Without fine-tuning, we set α to an arbitrary high value (10,000) and considering \({p}_{{{\mathrm{CT}}}}\) as 0 for malignant cell types (equations (7) and (8)). During inference on TCGA images, ensemble averaging was carried out using the model checkpoints from three folds that were trained on BreastCancer2.

Performance evaluation

We used a multilevel evaluation strategy to comprehensively assess GHIST from multiple aspects (Supplementary Fig. 24).

Evaluation study 1: ablation study

We performed an ablation study to determine the contributions from each of the types of biological information in GHIST. We used BreastCancer2 for these experiments. Furthermore, in another set of experiments, we used BreastCancer2, BreastCancerILC and BreastCancerIDC for training and validation, with BreastCancer1 reserved for testing. We evaluated GHIST without neighborhood composition prediction, thereby impeding the ability to refine predicted gene expression for cells based on estimated neighborhood characteristics. We also investigated the effects of performing auxiliary cell-type prediction, by removing the ability of the model to leverage the relationships between gene expression and cell-type labels. Furthermore, instead of performing a linear regression of average cell-type profiles, we investigated the setting where gene expression is directly predicted as a straightforward regression.

We performed cell-type classification using scClassify with the breast cancer reference data, followed by comparison of cell-type distribution and cell-type proportion with the ground-truth data and the correlation of SVGs.

Evaluation study 2: comparison study with other spot-based methods

We describe the settings for other spot-based methods below, and more details may be found in our benchmarking work15:

-

(A)

Settings used for other methods

-

ST-Net10—ST-Net was implemented in Python and PyTorch using hyperparameter values as outlined in the original paper. The DenseNet-121 backbone used was pretrained on ImageNet. ST-Net was trained using the mean squared error (MSE) loss function, computed between predicted and ground-truth expression levels.

-

HisToGene9—The HisToGene model was implemented using PyTorch with the following hyperparameters: a learning rate of 10 × 10−5, the number of training epochs of 100, a dropout ratio of 0.1, 8 multi-head attention layers and 16 attention heads. The final model used was the model after all epochs, per the HisToGene pipeline.

-

GeneCodeR13—GeneCodeR was the only method that did not use deep learning for its model. The following hyperparameters were used for the coordinate descent updating algorithm: the dimensions of the encoding space for the samples (i_dim) of k = 500, the dimensions of the encoding space for the features (j_dim) of c = 500, initialization for the alpha_sample = ‘rnorm’, initialization for the beta_sample = ‘irlba’, maximum iterations of 20, tolerance of 5, learning rate of 0.1 and batch size of 500.

-

DeepSpaCE12—The DeepSpaCE model was then implemented using the VGG16 model as the backbone with the following hyperparameters: maximum number of training epochs of 50 (with training stopping before 50 if there is no improvement within 5 epochs), a learning rate of 10 × 10−4 and a weight decay of 10 × 10−4.

-

DeepPT8—DeepPT was implemented in Python and PyTorch using hyperparameter values as outlined in the original paper. The ResNet-50 backbone used was pretrained on ImageNet. DeepPT was trained using the MSE loss function, computed between predicted and ground-truth expression levels.

-

Hist2ST11—The model was implemented using Python and PyTorch and ran with default parameters stated in the original paper. Some hyperparameter changes were made for benchmarking including: increased the image patch size from 112 × 112 to 224 × 224 and reduced the number of input and output channels in the depth-wise and point-wise convolutional blocks from 32 to 16 due to limited computational power. Image embedding dimension was increased from 1,024 to 2,048 as the original code of setting embedding size was hard coded based on the image patch size and the number of channels. The model was trained using a sum of MSE loss of predicted and ground-truth expression levels, ZINB and Distillation mechanisms.

-

iStar14—The model was implemented in Python using PyTorch and trained for 400 epochs with a learning rate of 10−5, optimized via Adam. The input images were rescaled so that each pixel corresponded to 0.5 × 0.5 μm, ensuring that a 16 × 16-pixel patch represented an 8 × 8-μm² area, approximately the size of a single cell. Each 16 × 16 patch was embedded into a 579-dimensional space. MSE loss was used for the weakly supervised learning process.

We used code from the official GitHub repositories for each method unless otherwise stated. We trained all the comparison methods from scratch using the same dataset and cross-validation splits. This was done for fair comparison, and because many of the methods did not provide weights of their trained models. We observed that the PCC of these methods is comparable between the scores obtained in our experiments and those reported in the original papers.

-

-

(B)

Evaluation measures

-

Concordance between predicted and measured gene expression—We followed the strategies described in our benchmarking work15 to assess the performance of GHIST on HER2ST data. Briefly, the evaluation methods used include PCC and SSIM. These metrics were first calculated at an image level (for example, correlation was measured for each gene per image), and then averaged over each individual, then averaged over each gene.

HVGs and SVGs were deduced from the ground-truth SGE from the HER2ST dataset. The modelGeneVar function, followed by the getTopHVGs function with prop = 0.1 from the scran R package65 version 1.32.0 were used to obtain the HVGs in each ST dataset. For SVG selection, genes with adjusted P < 0.05 were identified as SVGs in each image sample in each dataset using the SPARK-X package in R66 (version a8b4bf2). For HER2+, only the SVGs that appear in more than 30 of 36 samples were selected, and correlation was calculated based on top 20 genes ranked by adjusted P value.

-

Assessment of the translational potential with cross-validated C-index and Kaplan–Meier curves—We first selected the top 20 genes associated with the highest C-indices from the univariate Cox models built on the predicted SGEs for each gene. Next, a multivariate Cox regression was then built using these genes. Each model was evaluated by C-index and a log-rank P value based on the predicted SGE for each of the spot-based methods and GHIST, where C-index was calculated by comparing the ground-truth event times and events with model predictions for each patient within a test set.

While many existing methods67 perform calculation of C-indices similarly to a resubstitution model, we have used a threefold cross-validation with 100 repeats. This meant that individuals were randomly split into three subsets. Each subset was used as a test set of individuals, while the rest of the patients were used to train models. Through repeating this process 100 times, we measured the C-indices for each test set to obtain an average C-index across 300 values. We also obtained the average prediction for each individual over all repetitions under cross-validation, which was then used to categorize individuals into high-risk and low-risk groups. Kaplan–Meier curves were then constructed for each of the high-risk and low-risk groups. The log-rank test was then used to obtain a P value to quantify the difference between survival of the two groups.

-

Evaluation study 3: performance evaluation at single-cell resolution

For visual assessment, we selected cell-type-specific marker genes using the FindMarkers function in Seurat to identify markers for each cell type. We visualized the predicted gene expression and the measured gene expression of the selected top marker genes.

For concordance between predicted and measured cell-type proportion, we predicted the cell types in the TCGA data using the single-cell breast reference as aforementioned. We then visually compared the cell types between predicted and measured intensities using bar plots and scatterplots.

For correlation between predicted and measured gene expression, we treated the measured gene expression from the SST data as the ground truth. We computed the PCC of a collection of genes between the predicted gene expression from H&E and the measured (ground truth) gene expression from SST. We calculated the PCC based on the (1) top 20 SVGs, (2) top 50 SVGs, (3) top 20 HVGs, (4) top 50 HVGs, (5) top 20 non-SVGs, (6) top 50 non-SVGs, (7) top 20 non-HVGs and (8) top 50 non-HVGs. We defined the top SVGs and HVGs using the default ordering of the genes from the SVG and HVG calculation. The non-SVGs and non-HVGs are defined as the genes ranked in the bottom of the SVG and HVG calculation. For example, the bottom 20 non-HVGs are defined to be the genes ranked in the bottom 20 of the HVG calculation, similarly for the bottom 20 non-SVGs.

The SVGs were calculated using Giotto rank68 version 1.1.2, one of the top-performing methods based on a recent benchmarking paper69. The HVGs were calculated based on the function FindVariableFeatures from the Seurat R package70 version 5.0.3. A list of all genes in each Xenium dataset and their corresponding SVG statistics is in Supplementary Data 1.

For assessment of the translational potential of spatial features, we examined whether the prediction expression contains information that can be used to stratify individuals into distinct survival groups. We used death as the survival event outcome, days to death as the survival time and days to last follow-up when there was no death event. We considered each of the feature types as described in ‘multi-view feature generation’ and Supplementary Table 5 and examined the discriminatory power of each feature type by estimating the high-risk and low-risk individuals based on a given set of features. For a given feature type, we selected the top 1% of the features, or the top five features, whichever number is greater, from each feature type separately (Supplementary Table 5) and used them to fit a Cox proportional hazard model (function crossValidate in the ClassifyR71 R package version 3.9.1). Next, for each of the feature types described in Supplementary Table 5, we used 5,000 repeated threefold cross-validation strategies to identify high-risk and low-risk individuals. In each iteration (one repeat), two folds of the individuals were used to train the Cox proportional hazard model based on the selected features, and individual risk was calculated on the remaining testing fold. This was repeated 5,000 times, resulting in a vector of a ‘risk score’ for each individual. For each individual, this resulted in a vector of 5,000 risks estimated when the individual was inside the ‘test set’ for each iteration. The final risk score was calculated as the median of this vector of risk estimates.

Using the final risk score, patients with a risk in the 0% to 25% quantile were defined as the ‘low-risk group’, while individuals in the 75% to 100% quantile were defined as the ‘high-risk group’. A Kaplan–Meier plot was then constructed, and a log-rank test was performed. The chi-square statistic and P value associated with the log-rank test were calculated to measure the difference in survival between the two groups. We used the chi-square statistics associated with each feature type to assess the discrimination capacity of each feature type. To ensure a fair comparison with TCGA, we first subset the TCGA bulk RNA-seq to the same set of genes as in the H&E gene panel.

Downstream statistical and computational biology analysis

To examine the downstream application of GHIST, we applied GHIST trained on the dataset BreastCancer2 to predict the expression on 92 TCGA HER2 samples, 461 TCGA individuals with the luminal subtype and another breast cancer tissue microarray cohort (detailed in previous sections).

Multi-view feature generation

We generated multiple types of features based on the prediction gene expression to enable more comprehensive exploration of the TCGA individuals. Using the R package scFeatures28 version 1.4.0, we generated feature types from three distinct categories: single-cell-based cell-type proportion, single-cell-based cell-type-specific expression and spatial pattern. Each feature type contained tens to hundreds of features. All feature types are defined in the scFeatures28 publication. Supplementary Table 5 describes each feature type in detail.

Differential state analysis between conditions (ER−/PR− versus ER+/PR+)

While we now have a computationally generated multi-sample, multi-group spatially resolved scRNA-seq TCGA HER2 dataset, we first focused on identifying markers for differential cell states for each cell type between individuals with ER−/PR− versus ER+/PR+. We modeled this using a negative binomial generalized linear model implemented in the function pbDS in the R package muscat (version 1.18.0). Significant differential cell-state markers were defined as genes with log fold change > 1 and P value < 0.05. We visualized the expression of these cell-type-specific differential state genes as a heat map.

Unsupervised learning to detect new sub-cohort in ER+/PR+

Within the ER+/PR+ cohort, we performed k-means clustering based on all cell-type-specific differential state genes identified in the previous section to cluster into two groups. We used PCC as the similarity metric and used the default parameters for the rest. This was performed using the Heatmap function implemented in the ComplexHeatmap72 R package version 2.20.0.

The characteristics of the two resulting clusters were determined in three different ways:

-

(a)

Survival analysis. We calculated the Kaplan–Meier curve for survival for cluster 1 and cluster 2 using the function survfit in R package survival version 3.6-4.

-

(b)

Differential expression analysis. We generated a large collection of features for each individual in the TCGA data using scFeatures (‘Multi-view feature generation’). Next, we identified features with a differential based on a moderated t-test using the lmFit function implemented in the package limma (version 3.60.2). We visualized these results using volcano plots.

-

(c)

Classification analysis. We further performed classification to examine the separability of the two subgroups based on various feature types. To avoid the potential issue of ‘double dipping’, we removed all differential state genes from the features in each feature type, as well as genes with a correlation above 0.7 with any of the differential state genes. We assessed the performance based on balanced accuracy using 100 repeated threefold cross-validation implemented in the crossValidate function in the R package ClassifyR. The crossValidate has an in-built feature selection using t-tests to select features with differential ‘measurement’ between cluster 1 and cluster 2. We used a support vector machine as the classification model backbone. For visualization of the most important features contributing to the classification, we selected top features based on frequency of inclusion, and visualized the values of these features in cluster 1 and cluster 2 samples using box plots.

Trans-acting associations between copy number aberration and nearest-neighbor correlation

Focal CNA was estimated using the GDC pipeline with the GISTIC73,74 method implemented and then thresholded to −1, 0 or 1, representing deletion, normal copy or amplification, respectively. Here we selected 91 TCGA HER2+ individuals and 458 individuals with the luminal subtype who had CNA results to perform downstream analysis, as a few individuals did not have CNA data. CNAs on chromosome X were removed. We first selected all genes that have both deletion and amplification with a frequency ≥ 5, which resulted in 2,177 CNA genes for HER2+ individuals and 15,608 genes for individuals in the luminal cohort. Next, for a given CNA_gene, we compared between two groups of individuals, one showing CNA_gene loss (−1) and another showing CNA_gene gain (+1). For these two groups, we identified differential patterning genes where spatial patterning was measured by nearest-neighbor correlation. This differential patterning analysis was repeated for all CNA_genes, and a matrix of P values representing the moderated t-tests for all CNA genes being tested against the 280-gene nearest-neighbor correlation was generated (Supplementary Table 2). We proposed a new metric quantifying the summation of −log10(P) in a genomic region and used this to present the number of CNAs that affect SGE. Each chromosome was split into genomic regions by a length of 1 Mb. Three hotspot regions were selected for highlighting differential nearest-neighbor correlation between gain and loss copy number by calculating −log2(fold change) in the HER2+ cohort.

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.

link