Impact of agricultural industry transformation based on deep learning model evaluation and metaheuristic algorithms under dual carbon strategy

This study proposes a hybrid model that integrates CNN, LSTM networks, and the SMA to enable a comprehensive, multidimensional, and dynamic evaluation of agricultural industry transformation outcomes. A core innovation of the model lies in the use of SMA for the dynamic optimization of CNN-LSTM hyperparameters. Additionally, the model incorporates a region-specific adaptive strategy to address the limitations of traditional methods in spatiotemporal data fusion and parameter tuning.

Construction of deep learning models: integrating CNN and LSTM

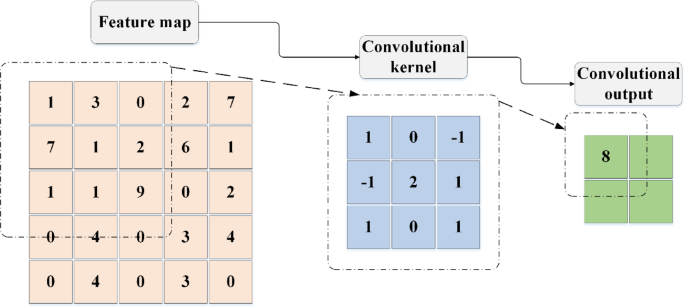

A CNN is a deep learning architecture designed for efficient image processing. It leverages three key properties (local receptive fields, parameter sharing, and spatial hierarchies) to extract spatial features effectively. The basic structure of a CNN includes convolutional layers, activation functions, and pooling layers42,43,44. In a convolutional layer, filters (also known as convolution kernels) slide across the input and process it one local receptive field at a time. The convolution operation is described by Eq. (1):

$$\:\left(f*g\right)(i,j)=\sum\:_{m}\sum\:_{n}f(m,n)\cdot\:g(i-m,j-n)$$

(1)

In Eq. (1), \(\:f(m,n)\) represents the input data, \(\:g(i-m,j-n)\) denotes the weights of the convolution kernel, * indicates the convolution operation, and \(\:(i,\:j)\) represents the coordinates of the output feature map. Each output value is the dot product of the kernel weights and the corresponding input values, to which a bias term \(\:b\) is added. An activation function then introduces non-linearity, enabling the model to learn and perform more complex tasks.

The process of the convolution operation is illustrated in Fig. 1.

Fig. 1. Convolution operation process.
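Eq. (1) can be sketched in a few lines of plain Python. The example below is a minimal illustration, not the implementation used in this study: the function names `conv2d_full` and `conv_layer`, the "full" output size, and the choice of ReLU as the activation are all assumptions made for the example.

```python
def conv2d_full(f, g):
    """Full 2-D convolution following Eq. (1):
    out[i][j] = sum over m, n of f[m][n] * g[i - m][j - n],
    where kernel indices outside g are treated as zero."""
    fh, fw = len(f), len(f[0])
    gh, gw = len(g), len(g[0])
    out = [[0.0] * (fw + gw - 1) for _ in range(fh + gh - 1)]
    for m in range(fh):
        for n in range(fw):
            for a in range(gh):          # a = i - m
                for b in range(gw):      # b = j - n
                    out[m + a][n + b] += f[m][n] * g[a][b]
    return out


def conv_layer(f, g, bias=0.0):
    """Convolution, then bias, then ReLU (the activation is an assumed choice)."""
    return [[max(0.0, v + bias) for v in row] for row in conv2d_full(f, g)]


feature_map = conv_layer([[1, 2], [3, 4]], [[1, 0], [0, -1]])
```

Note that many deep learning libraries compute cross-correlation (no kernel flip) under the name "convolution"; because the kernel weights are learned, the two formulations are interchangeable in practice.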

The Pooling Layer, which consists of both Max Pooling and Average Pooling, aims to reduce the number of parameters, extract key features, and decrease sensitivity to the spatial location of the input data through downsampling45,46. The calculation for the Max Pooling operation is shown in Eq. (2):

$$\:{P}_{max}(i,j)={max}_{m,n\in\:{\Omega\:}\left(i,j\right)}I(m,n)$$

(2)

In Eq. (2), \(\:{P}_{max}(i,j)\) represents the pooled feature, \(\:I(m,n)\) denotes the input feature map, and \(\:{\Omega\:}\left(i,j\right)\) signifies the pooling window. The pooling operation process is illustrated in Fig. 2.

Fig. 2. Pooling operation process.
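The max pooling rule of Eq. (2) can be illustrated as follows. This is a sketch under stated assumptions: the function name `max_pool2d` is hypothetical, the pooling window \(\Omega(i,j)\) is taken to be a square of side `size`, and windows that do not fit entirely inside the input are dropped.

```python
def max_pool2d(I, size=2, stride=2):
    """Max pooling per Eq. (2): each output is the maximum of I over one window."""
    h, w = len(I), len(I[0])
    pooled = []
    for i in range(0, h - size + 1, stride):
        row = []
        for j in range(0, w - size + 1, stride):
            window = [I[m][n]
                      for m in range(i, i + size)
                      for n in range(j, j + size)]
            row.append(max(window))
        pooled.append(row)
    return pooled


pooled = max_pool2d([[1, 3, 2, 0],
                     [4, 6, 5, 1],
                     [7, 2, 9, 8],
                     [0, 1, 3, 4]])
# pooled == [[6, 5], [7, 9]]
```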

The LSTM network is a specialized type of RNN designed to capture long-term dependencies in sequential data. It overcomes the vanishing gradient problem often encountered in traditional RNNs by incorporating a gating mechanism composed of input, output, and forget gates, along with a cell state featuring self-loops47,48,49. Table 2 outlines the key steps involved in processing input sequences within an LSTM network.
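As a rough illustration of the gating mechanism described above, the following sketch runs one LSTM time step for scalar inputs and states. It follows the standard LSTM formulation; the scalar simplification, the parameter dictionary `p`, and its key names are assumptions made for readability and do not reflect the configuration used in this study.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h_prev, c_prev, p):
    """One LSTM step for scalar input x, hidden state h_prev, and cell state c_prev."""
    f = sigmoid(p["w_f"] * x + p["u_f"] * h_prev + p["b_f"])    # forget gate
    i = sigmoid(p["w_i"] * x + p["u_i"] * h_prev + p["b_i"])    # input gate
    o = sigmoid(p["w_o"] * x + p["u_o"] * h_prev + p["b_o"])    # output gate
    g = math.tanh(p["w_g"] * x + p["u_g"] * h_prev + p["b_g"])  # candidate cell value
    c = f * c_prev + i * g        # cell state with self-loop
    h = o * math.tanh(c)          # new hidden state
    return h, c


# Hypothetical all-zero parameters: every gate evaluates to 0.5, the candidate to 0.
ZERO_PARAMS = {f"{kind}_{gate}": 0.0 for kind in "wub" for gate in "fiog"}
h, c = lstm_step(1.0, 0.0, 2.0, ZERO_PARAMS)
```

The additive update of `c` is what lets gradients flow across many time steps, which is how the LSTM mitigates the vanishing gradient problem.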

The model employs a CNN to extract spatial features from agricultural data, such as farmland distribution and soil types, using standard convolution operations. An LSTM network is then used to capture long-term dependencies in temporal variables, including meteorological trends and policy changes. The outputs of the CNN and LSTM are fused through a fully connected layer to generate a unified spatiotemporal feature representation. To improve the model’s adaptability to regional heterogeneity, a dynamic weight adjustment mechanism is introduced, as defined in Eq. (3):

$$\:{W}_{adapt}={\upalpha\:}\cdot\:{W}_{CNN}+(1-\alpha\:)\cdot\:{W}_{LSTM}$$

(3)

In Eq. (3), \(\:{\upalpha\:}\) represents the regional feature weighting coefficient, which is dynamically optimized by the SMA based on the spatial distribution characteristics of the input data.
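Eq. (3) is a weighted blend of the two branches. The sketch below assumes that \(\alpha\) lies in [0, 1] and that the CNN and LSTM weight vectors have the same length; neither assumption is stated in the text, and the function name `adaptive_weights` is hypothetical.

```python
def adaptive_weights(w_cnn, w_lstm, alpha):
    """Blend CNN and LSTM weights per Eq. (3): alpha * W_CNN + (1 - alpha) * W_LSTM."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha is assumed to lie in [0, 1]")
    if len(w_cnn) != len(w_lstm):
        raise ValueError("weight vectors are assumed to have equal length")
    return [alpha * c + (1.0 - alpha) * l for c, l in zip(w_cnn, w_lstm)]


# A region dominated by temporal signals might receive a small alpha.
w_adapt = adaptive_weights([1.0, 0.0], [0.0, 1.0], alpha=0.25)
# w_adapt == [0.25, 0.75]
```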

The pseudocode for the deep learning model training process is shown in Table 3.

Parameter tuning and optimization of SMA

In this study, the fitness function plays a critical role in optimizing the model’s hyperparameters. A hybrid framework is proposed that integrates CNN, LSTM networks, and the SMA. The primary objective of this framework is to minimize the mean squared error (MSE) between the predicted outputs and the actual values. Given a multisource agricultural input dataset \(\:X\) and its corresponding labels \(\:Y\), the loss function is defined as shown in Eq. (4):

$$\:\mathcal{L}\left({\uptheta\:}\right)=\frac{1}{N}\sum\:_{i=1}^{N}{({y}_{i}-{\widehat{y}}_{i})}^{2}$$

(4)

In Eq. (4), N denotes the number of samples in the dataset, \(\:{y}_{i}\) represents the true value of the i-th sample, and \(\:{\widehat{y}}_{i}\) is the corresponding predicted value. A lower MSE indicates higher prediction accuracy, so the MSE serves as the fitness function for hyperparameter optimization. In each iteration, the SMA adjusts the model’s hyperparameters, evaluates the resulting configuration with the fitness function, and determines whether further adjustments are necessary.
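The fitness evaluation built on Eq. (4) can be sketched as below. The `mse` function follows the equation directly; the `fitness` wrapper and its `train_and_predict` callback are hypothetical placeholders for whatever routine trains the CNN-LSTM under a given hyperparameter configuration and returns its predictions.

```python
def mse(y_true, y_pred):
    """Mean squared error per Eq. (4)."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and of equal length")
    return sum((y - y_hat) ** 2 for y, y_hat in zip(y_true, y_pred)) / len(y_true)


def fitness(config, train_and_predict, X, Y):
    """Score one hyperparameter configuration (lower is better).

    train_and_predict(config, X) is an assumed callback returning predictions for X.
    """
    return mse(Y, train_and_predict(config, X))


# Toy usage with a stand-in model that always predicts zero.
score = fitness({}, lambda config, X: [0.0 for _ in X], [1, 2], [3.0, 4.0])
# score == 12.5
```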

SMA was selected as the optimization algorithm for this study due to its strong global search capabilities and adaptability. Inspired by the foraging behavior of slime molds, SMA simulates how these organisms adjust their movement based on odor concentration gradients. This behavior allows the algorithm to perform extensive searches in complex environments without requiring prior knowledge. Its robust global exploration makes it especially effective for high-dimensional, nonlinear optimization problems. Compared with other modern metaheuristic algorithms—such as the Crayfish Optimization Algorithm, Reptile Search Algorithm, and Red Fox Optimizer—SMA offers distinct advantages in addressing dynamically changing and complex tasks. While algorithms like the Crayfish Optimization Algorithm and Red Fox Optimizer may perform well in certain scenarios, SMA’s ability to avoid local optima through adaptive exploration results in more stable and accurate outcomes, particularly in tasks involving spatiotemporal data. It is important to note, however, that the selection of SMA does not imply it is universally superior. According to Wolpert’s “No Free Lunch Theorem,” no single optimization algorithm consistently outperforms others across all problem domains. SMA was chosen in this study based on its suitability for the specific challenges of modeling complex, spatiotemporal agricultural data. Its dynamic adjustment mechanism improved both convergence speed and optimization precision.

In summary, SMA offers three advantages for this task. First, strong global search capability: SMA mimics the foraging behavior of slime molds, enabling extensive exploration in high-dimensional spaces and helping it avoid local optima. Second, high adaptability: the algorithm adapts well to diverse optimization tasks, making it particularly effective for handling the spatiotemporal complexity of agricultural data. Third, high optimization efficiency: compared with other optimization algorithms, SMA offers faster convergence and greater accuracy, especially during model training for complex agricultural datasets.

SMA is a bio-inspired optimization algorithm based on the foraging behavior of slime molds. It simulates the decision-making process these organisms use when locating food, optimizing model parameters in a similar fashion. During the search phase, slime molds rely on odor concentration cues to guide their movement toward food sources50,51,52. The mathematical formulation of this process is provided in Eq. (5) through (7).

(5)

$$\:{X}_{t+1}={v}_{c}\cdot\:\text{X}\left(t\right),r\ge\:p$$

(6)

$$\:p=tanh\left|S\left(i\right)-{D}_{F}\right|$$

(7)

In Eqs. (5) to (7), \(\:{X}_{t+1}\) represents the position vector of the slime mold population at time step t+1. \(\:{X}_{A}\left(t\right)\) denotes the position vector of the food source at time step t, and \(\:{X}_{B}\left(t\right)\) corresponds to the position vector of another individual in the slime mold population. W is the weight matrix, while \(\:{v}_{b}\) and \(\:{v}_{c}\) are velocity factors that control the movement speed of the slime molds. The variable r is a random number between 0 and 1, used to determine the movement strategy. The threshold p, calculated using Eq. (7), dictates whether the slime mold adopts a fast or slow movement strategy. \(\:S\left(i\right)\) denotes the intensity or feature value of the i-th odor source, and \(\:{D}_{F}\) is a predefined constant representing the food detection threshold53,54. The computation of the weight matrix W is detailed in Eqs. (8) to (10).

$$\:W\left(SmellIndex\left(i\right)\right)=1+r\cdot\:\text{lg}\left(\frac{{b}_{F}-S\left(i\right)}{{b}_{F}-{w}_{F}}+1\right),condition$$

(8)

$$\:W\left(SmellIndex\left(i\right)\right)=1-r\cdot\:\text{lg}\left(\frac{{b}_{F}-S\left(i\right)}{{b}_{F}-{w}_{F}}+1\right),others$$

(9)

$$\:SmellIndex=sort\left(S\right)$$

(10)

In Eq. (8) to (10), the variable \(\:SmellIndex\left(i\right)\) denotes the rank-based index of S, representing the intensity of odor sources or other features. \(\:{b}_{F}\) and \(\:{w}_{F}\) correspond to the boundary values of the food source and the slime mold’s current position, respectively. When the slime mold senses a high concentration of the food source, it increases the weight assigned to that area to enhance its ability to effectively encircle the food55,56. The weight adjustment process is detailed in Equations (11) to (13).
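The weight computation of Eqs. (8) to (10) can be sketched as follows. Several details are assumptions, because the text does not spell them out: fitness is minimized, \(b_F\) and \(w_F\) are taken as the best and worst fitness values in the current population, "condition" is read as "the individual ranks in the better half of the population", and lg is the base-10 logarithm. The function name `sma_weights` is hypothetical.

```python
import math
import random


def sma_weights(S, rng=random):
    """Per-individual weights following Eqs. (8)-(10), for a minimization problem."""
    n = len(S)
    smell_index = sorted(range(n), key=lambda i: S[i])   # Eq. (10): rank by fitness
    b_F, w_F = S[smell_index[0]], S[smell_index[-1]]     # assumed: best and worst fitness
    span = b_F - w_F
    W = [0.0] * n
    for rank, i in enumerate(smell_index):
        ratio = (b_F - S[i]) / span if span != 0 else 0.0
        term = rng.random() * math.log10(ratio + 1.0)
        # Assumed reading of "condition": better half gets Eq. (8), the rest Eq. (9).
        W[i] = 1.0 + term if rank < n / 2 else 1.0 - term
    return W


weights = sma_weights([0.5, 0.1, 0.9, 0.3], random.Random(0))
```

Under these assumptions, the best individual always receives a weight of exactly 1, and every weight stays within 1 ± lg 2.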

$$\:{X}^{*}=\text{rand}\cdot\:\left({U}_{B}-{L}_{B}\right)+{L}_{B},rand<z$$

(11)

(12)

$$\:{X}^{*}={v}_{c}\cdot\:\text{X}\left(t\right),r\ge\:p$$

(13)

In Equations (11) to (13), \(\:{X}^{*}\) represents the updated position of the slime mold individual. \(\:{L}_{B}\) and \(\:{U}_{B}\) define the lower and upper bounds of the slime mold’s position. \(\:z\) is a random threshold used to determine the weight correction strategy57.
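The random re-initialization branch of Eq. (11) can be illustrated with a short sketch. It assumes that the draw compared against \(z\) and the draws used to sample the new position are independent, and that each dimension has its own bounds; the function name `maybe_reinitialize` is hypothetical, and the remaining branches (Eqs. (12) and (13)) are not reproduced here.

```python
import random


def maybe_reinitialize(x, lower, upper, z, rng=random):
    """Eq. (11): with probability z, resample the position uniformly within the bounds.

    Returns a copy of x unchanged when the random draw is not below z.
    """
    if rng.random() < z:
        return [rng.random() * (u - l) + l for l, u in zip(lower, upper)]
    return list(x)


rng = random.Random(1)
resampled = maybe_reinitialize([9.0, 9.0], [0.0, -1.0], [1.0, 1.0], z=1.0, rng=rng)
unchanged = maybe_reinitialize([9.0, 9.0], [0.0, -1.0], [1.0, 1.0], z=0.0, rng=rng)
```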

During this phase, the slime mold adjusts the width of its veins using propagating waves generated by biological oscillators, improving the efficiency of selecting optimal food sources. The oscillatory process is represented by the parameters \(\:W\), \(\:{v}_{b}\), and \(\:{v}_{c}\), where the coordinated oscillation of \(\:{v}_{b}\) and \(\:{v}_{c}\) simulates the slime mold’s selective foraging behavior58,59.

To handle the nonlinear and high-dimensional nature of agricultural data, the SMA is employed for global hyperparameter optimization. While traditional SMA updates individual positions by simulating slime mold foraging behavior, this study enhances the weight update strategy by incorporating constraints specific to agricultural scenarios, as described in Eq. (14):

$$\:{W}_{new}={W}_{old}+\eta\:\cdot\:{\nabla\:}_{region}({D}_{climate},{D}_{policy})$$

(14)

In Eq. (14), \(\:\eta\:\) represents the learning rate, and \(\:{\nabla\:}_{region}\) denotes the gradient constraint function derived from regional climate and policy data. This function encodes geographic differences as optimization directions, ensuring that hyperparameter selection aligns with the specific agricultural characteristics of each region. The optimization objective is to minimize the MSE, following the standard formulation.

For parameter tuning in deep learning models, SMA optimizes model weights by simulating the natural foraging behavior of slime molds. The parameter tuning and optimization process using SMA is illustrated in Fig. 3.

Fig. 3. Parameter tuning and optimization process of SMA.

This study employs the SMA to optimize deep learning hyperparameters and enhance model performance. Specifically, SMA fine-tunes key parameters including the number of convolutional layers and kernel sizes in the CNN, the number of units in the LSTM, learning rate, optimizer choice, batch size, and the number of training epochs. The CNN’s structure—particularly its depth and kernel sizes—directly impacts its spatial feature extraction ability, while the number of LSTM units determines the capacity to capture temporal dependencies. Optimizer selection and learning rate are crucial for training efficiency, with appropriate learning rates enabling faster convergence. Batch size and training epochs also affect training speed and model accuracy. SMA optimizes these hyperparameters by simulating slime mold foraging behavior, effectively navigating the search space to avoid local optima issues common in grid or random search methods. This leads to faster convergence and improved generalization. The experiments integrate SMA within the deep learning framework to optimize the CNN and LSTM architectures’ key hyperparameters.

The SMA was used to optimize the hyperparameters of the deep learning models. The search ranges for these hyperparameters were determined based on practical application requirements, model performance, and insights from previous studies. For the CNN, the number of convolutional layers was set between 2 and 5 to avoid both overfitting from overly deep networks and underfitting from networks that are too shallow. Kernel sizes were chosen from [3 × 3, 5 × 5, 7 × 7], balancing feature extraction capability and computational efficiency. The stride was set to either 1 or 2; smaller strides improve feature detail, while larger strides speed up computation. Pooling window sizes were selected from [2 × 2, 3 × 3], helping reduce computation and prevent overfitting. For the LSTM, the number of units was selected from [64, 128, 256], reflecting a trade-off between capturing long-term dependencies and computational cost. The learning rate was chosen from [0.0001, 0.001, 0.01] to ensure effective training without premature convergence. The Adam optimizer was used due to its strong performance and minimal need for parameter tuning. Batch size and training epochs were selected from [16, 32, 64] and [100, 300, 500], respectively. Batch size affects memory use and training stability, while the epoch range balances learning completeness and overfitting risk. A summary of these search ranges is provided in Table 4.
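The search ranges above can be written down as a small configuration, together with one possible way to map a continuous SMA position vector onto these discrete choices. The ranges come from the text; the dictionary keys, the `decode` function, and the encoding scheme (one coordinate in [0, 1] per hyperparameter) are assumptions made for illustration and are not described in the study.

```python
SEARCH_SPACE = {
    "conv_layers": [2, 3, 4, 5],
    "kernel_size": [3, 5, 7],          # square kernels: 3 x 3, 5 x 5, 7 x 7
    "stride": [1, 2],
    "pool_size": [2, 3],               # square windows: 2 x 2, 3 x 3
    "lstm_units": [64, 128, 256],
    "learning_rate": [0.0001, 0.001, 0.01],
    "batch_size": [16, 32, 64],
    "epochs": [100, 300, 500],
}


def decode(position):
    """Map a position in [0, 1]^d to one option per hyperparameter (assumed encoding)."""
    if len(position) != len(SEARCH_SPACE):
        raise ValueError("position must have one coordinate per hyperparameter")
    config = {}
    for (name, options), u in zip(SEARCH_SPACE.items(), position):
        u = min(max(u, 0.0), 1.0)                        # clip to the unit interval
        index = min(int(u * len(options)), len(options) - 1)
        config[name] = options[index]
    return config


config = decode([0.5] * len(SEARCH_SPACE))
```

With this encoding, the SMA can search a continuous space while the model is always trained with valid discrete settings.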

The pseudocode of the SMA optimization process is shown in Table 5.

To account for the inherent randomness of metaheuristic algorithms like SMA, multiple independent runs were conducted to ensure result reliability and stability. Each experiment was repeated 10 times with different initial conditions to reduce the risk of convergence to local optima. The average results from all runs provided a stable and representative measure of the optimization process, enhancing the robustness of the conclusions.
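The repeated-run protocol can be sketched as below. The `optimize` argument is a hypothetical callable standing in for one full SMA run that returns its best fitness value; seeding each run differently is one way to obtain different initial conditions. Reporting the standard deviation alongside the mean is an addition of this sketch, not something stated in the text.

```python
import random
import statistics


def repeat_runs(optimize, n_runs=10, base_seed=0):
    """Run a stochastic optimizer n_runs times with distinct seeds; return (mean, stdev)."""
    scores = [optimize(random.Random(base_seed + k)) for k in range(n_runs)]
    return statistics.mean(scores), statistics.stdev(scores)


def toy_optimize(rng):
    """Stand-in for an SMA run: the best of 20 random 'fitness' values."""
    return min(rng.random() for _ in range(20))


mean_score, spread = repeat_runs(toy_optimize, n_runs=10)
```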

Figure 4 illustrates the architecture and operational flow of the proposed CNN-LSTM-SMA hybrid model. Multi-source agricultural data, including satellite remote sensing images, meteorological time series, and field management records, are fed into the CNN and LSTM modules via parallel channels. The CNN extracts spatial features such as farmland distribution and soil composition through convolutional operations, while the LSTM captures long-term temporal dependencies in variables like climate and policy interventions through its gating mechanism. Outputs from both networks are fused through a weighted concatenation module, where the weights are dynamically optimized by the SMA. This optimization incorporates gradient constraints based on regional climate and policy heterogeneity. Through iterative updates, SMA adjusts critical hyperparameters, such as convolutional kernel sizes and the number of LSTM units, improving the model’s adaptability. Optimized parameters are continuously fed back into training. The fused spatiotemporal features then pass through a fully connected layer to produce an agricultural transformation effectiveness score, enabling comprehensive and dynamic evaluation. The schematic depicts the data flow, module interactions, and feedback-driven optimization, showing how the model integrates heterogeneous data and adapts to regional variability.

Fig. 4. Structure and optimization process of the CNN-LSTM-SMA hybrid model.

To enhance the model’s regional adaptability, a region-specific feature weighting coefficient \(\:{a}_{i}\) is introduced during the CNN and LSTM feature fusion stage. This coefficient adjusts the contribution of features from different regions to the final prediction. The initial values of \(\:{a}_{i}\) are derived by normalizing a prior indicator vector constructed from geographical attributes such as climate zones, soil types, and terrain relief, as shown in Eq. (15):

$$\:{a}_{i}^{\left(0\right)}=\frac{{x}_{i}}{\sum\:_{j=1}^{n}{x}_{j}}$$

(15)

where \(\:{x}_{i}\) represents the feature intensity score of the i-th region, and n is the total number of regions. During model training, the SMA incorporates the regional weighting coefficients as tunable parameters within the individual encoding, alongside hyperparameters such as the number of CNN convolutional kernels and LSTM units. After each iteration, SMA evaluates the fitness of each individual based on prediction error—primarily using MSE—and fine-tunes the regional weights accordingly. The update strategy combines global exploration with local exploitation, enabling the model to dynamically adjust spatial feature weights based on the performance of input data from different regions. This mechanism significantly improves the model’s generalization and adaptability across regions.
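The initialization in Eq. (15) is a simple normalization, sketched below. The function name `initial_region_weights` is hypothetical, and the sketch assumes non-negative feature intensity scores with a positive sum, which the text does not state explicitly.

```python
def initial_region_weights(x):
    """Initial regional coefficients per Eq. (15): a_i = x_i / sum_j x_j."""
    total = sum(x)
    if total <= 0 or any(xi < 0 for xi in x):
        raise ValueError("scores are assumed non-negative with a positive sum")
    return [xi / total for xi in x]


# Three regions with illustrative (made-up) feature intensity scores.
a0 = initial_region_weights([2.0, 1.0, 1.0])
# a0 == [0.5, 0.25, 0.25]
```

The resulting coefficients sum to 1, so they can serve directly as the starting point that the SMA later fine-tunes.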
