Development of an optimized deep learning model for predicting slope stability in nano silica stabilized soils

Performance analysis of the hybrid deep learning models

Table 6 presents a comprehensive evaluation of three hybrid deep learning models, CNN-LSTM-RNN, LSTM-CNN-RNN and RNN-CNN-LSTM, in classifying NS-stabilized slopes as stable or unstable. The models' performance is assessed using several classification metrics: Accuracy, F1 Score, Precision, Recall, Kappa, and MCC. For accuracy, the CNN-LSTM-RNN model achieves the highest training accuracy (0.996), indicating its superior ability to fit the training data. However, on unseen test data, LSTM-CNN-RNN outperforms the others with an accuracy of 0.996, slightly exceeding the CNN-LSTM-RNN model, which scores 0.992. The RNN-CNN-LSTM model performs slightly below the best model in both training (0.992) and testing (0.994). The F1 score, which balances precision and recall, shows that CNN-LSTM-RNN performs best during training, with an F1 score of 0.995, and reaches 0.990 during testing. This suggests that CNN-LSTM-RNN maintains a good balance between precision and recall. LSTM-CNN-RNN follows closely with a training F1 score of 0.992 (its testing F1 score is reported in Table 6), while RNN-CNN-LSTM shows a lower training F1 score (0.991) and a testing F1 score of 0.993.

Precision, which measures the share of predicted positive instances that are correct, is highest for RNN-CNN-LSTM, at 0.999 during training and 1.000 during testing. This shows the model's excellent ability to minimize false positives, especially in testing. LSTM-CNN-RNN also reaches a testing precision of 1.000, whereas CNN-LSTM-RNN is lower at 0.983. Recall, which measures how well a model identifies the actual positive instances, shows that CNN-LSTM-RNN excels with 0.997 during training and 0.998 during testing, indicating that it captures the most unstable slopes. LSTM-CNN-RNN has a slightly lower training recall (0.986) but performs well during testing, while RNN-CNN-LSTM reaches a lower recall (0.982 during training and 0.986 during testing). Cohen's Kappa, which measures the agreement between predicted and actual classifications beyond what would be expected by chance, is highest for CNN-LSTM-RNN during training (0.991), although it falls to 0.983 during testing. This indicates the best match between predicted and actual slope classifications on the training data. LSTM-CNN-RNN and RNN-CNN-LSTM show similar performance, with Kappa values of 0.986 and 0.984 during training and 0.991 and 0.987 during testing.
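All six metrics compared in Table 6 can be derived from a binary confusion matrix. The sketch below is a minimal illustration, not the evaluation code used in this study: it assumes "unstable" is the positive class, assumes no denominator is zero, and uses hypothetical counts rather than the values in Figs. 6 to 8.

```python
import math

def binary_metrics(tp, fp, tn, fn):
    """Six classification metrics from binary confusion-matrix counts.

    Assumes 'unstable' is the positive class and that no denominator is zero.
    """
    n = tp + fp + tn + fn
    accuracy = (tp + tn) / n
    precision = tp / (tp + fp)   # correct share of predicted positives
    recall = tp / (tp + fn)      # share of actual positives that are detected
    f1 = 2 * precision * recall / (precision + recall)
    # Cohen's kappa: observed agreement corrected for agreement expected by chance
    p_expected = ((tp + fp) * (tp + fn) + (tn + fn) * (tn + fp)) / n ** 2
    kappa = (accuracy - p_expected) / (1 - p_expected)
    # MCC: correlation between predicted and actual labels, uses all four cells
    mcc = (tp * tn - fp * fn) / math.sqrt(
        (tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {"accuracy": accuracy, "f1": f1, "precision": precision,
            "recall": recall, "kappa": kappa, "mcc": mcc}

# Hypothetical counts (not taken from Figs. 6-8)
m = binary_metrics(tp=90, fp=10, tn=80, fn=20)
print({k: round(v, 3) for k, v in m.items()})
# accuracy 0.85, F1 0.857, kappa 0.7 for these counts
```

Kappa and MCC penalize errors on both classes, which is why they fall faster than accuracy when one class is misclassified more often than the other.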

The Matthews correlation coefficient (MCC) provides a balanced assessment of model performance across all four outcomes (true positives, false positives, true negatives and false negatives). CNN-LSTM-RNN reaches the highest training MCC (0.991), with 0.983 during testing, indicating strong overall performance. LSTM-CNN-RNN and RNN-CNN-LSTM have similar MCC values: the former scored 0.986 during training and 0.991 during testing, and the latter 0.984 during training and 0.987 during testing. In summary, CNN-LSTM-RNN excels in training accuracy, F1 score and Kappa, which suits general classification tasks where balanced performance is critical. However, if minimizing false positives is most important, RNN-CNN-LSTM offers excellent precision, especially during testing. LSTM-CNN-RNN, in turn, provides the highest testing accuracy and F1 score, making it a strong candidate for tasks that require high accuracy and recall. Each model can therefore be selected according to whether precision, recall or overall classification balance is prioritized. Based on the ranking of individual performance metrics, the LSTM-CNN-RNN model achieved the best overall performance with the lowest average rank of 1.58, followed closely by the CNN-LSTM-RNN model with an average rank of 1.67. The RNN-CNN-LSTM model consistently ranked lower, with a higher average rank of 2.58. Thus, the LSTM-CNN-RNN hybrid model is identified as the top-performing approach in this study.
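An average-rank summary of this kind ranks every metric across the models (1 = best) and then averages each model's ranks. The paper does not state how ties are handled, so this sketch assumes tied models share the best rank; the scores are hypothetical toy values, not Table 6 entries.

```python
def average_ranks(scores):
    """scores: {model: [metric values]}, higher is better for every metric.

    Each metric is ranked across models (1 = best; tied models share the best
    rank), then ranks are averaged per model. Lower average rank = better.
    """
    models = list(scores)
    n_metrics = len(scores[models[0]])
    totals = {m: 0 for m in models}
    for j in range(n_metrics):
        column = [scores[m][j] for m in models]
        for m in models:
            # rank = 1 + number of models strictly better on this metric
            totals[m] += 1 + sum(v > scores[m][j] for v in column)
    return {m: totals[m] / n_metrics for m in models}

# Hypothetical scores for three models on three metrics
toy = {
    "A": [0.90, 0.80, 0.70],
    "B": [0.85, 0.85, 0.75],
    "C": [0.80, 0.70, 0.75],
}
ranks = average_ranks(toy)
print(ranks)
print(min(ranks, key=ranks.get))  # B
```

Note that a model can win on average rank without leading any single metric by a wide margin, which is why the ranking complements rather than replaces the metric-by-metric discussion.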

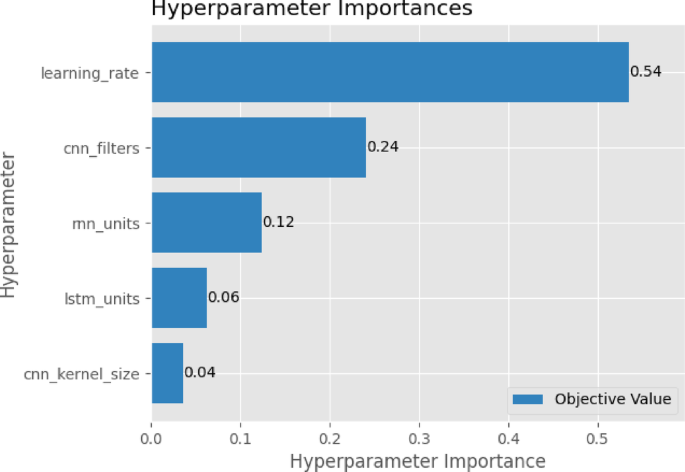

Table 7 presents the optimized hyperparameters used to develop the hybrid models for classifying stable and unstable slopes in NS-stabilized soils. These models combine the strengths of convolutional neural networks (CNN) and recurrent neural networks (RNN), including long short-term memory (LSTM) units, to analyze both the spatial and temporal information associated with slope stability. The CNN filters (39 to 57) extract critical spatial features from geotechnical parameters such as the soil index, cohesion and pore pressure, while kernel sizes of 2 and 4 allow the model to capture both fine and broad spatial patterns in the data. The LSTM units (85 to 128) are optimized to learn long-term dependencies, such as the effect of curing days on slope behaviour, and the RNN units (90 to 111) help the model capture gradual variations such as changes in NS content over time. Carefully tuned learning rates (0.000357 to 0.005664) ensure a balanced training process, allowing rapid convergence without compromising model stability or accuracy. By integrating these optimized parameters, the hybrid models show high accuracy and robustness, making them a powerful tool for predicting slope stability in NS-treated soils, where both static geotechnical properties and dynamic changes play a key role.
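To make the effect of kernel size concrete, the sketch below slides a single filter over a vector of tabular inputs. It assumes the geotechnical parameters are fed to the CNN as a 1-D sequence in a fixed column order, so "spatial" neighbourhood simply means adjacent columns. The input values and the averaging kernels are hypothetical; in the trained models the filter weights are learned.

```python
import numpy as np

def conv1d_valid(x, kernel):
    """Slide one filter over a 1-D feature vector ('valid' padding, stride 1).

    CNN layers compute cross-correlation (the kernel is not flipped).
    """
    k = len(kernel)
    return np.array([float(np.dot(x[i:i + k], kernel)) for i in range(len(x) - k + 1)])

# Hypothetical standardized input: 8 geotechnical features in a fixed column order
x = np.array([0.2, -1.0, 0.5, 1.3, -0.4, 0.0, 0.9, -0.7])

narrow = conv1d_valid(x, np.ones(2) / 2)  # kernel size 2: each output mixes 2 adjacent features
wide = conv1d_valid(x, np.ones(4) / 4)    # kernel size 4: each output mixes 4 adjacent features
print(len(narrow), len(wide))  # 7 5
```

Each filter produces one such output sequence, so the number of filters sets how many different local patterns are extracted, while the kernel size sets how many neighbouring features each pattern spans.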

Figure 5 illustrates the relative importance of the hyperparameters in optimizing the performance of the hybrid models developed to classify stable and unstable slopes in NS-stabilized soils. The learning rate emerged as the most critical hyperparameter, with an importance score of 0.54, suggesting that its careful fine-tuning had the greatest impact on the accuracy and convergence of the model. This emphasizes the need to control the rate at which the model updates its weights during training, because an appropriate learning rate ensures both fast convergence and stable training. The number of CNN filters was the second most important hyperparameter, with a score of 0.24, highlighting the role of effective feature extraction from geotechnical parameters such as soil cohesion, pore pressure and friction angle. By adjusting the number of filters, the model could better capture complex patterns in soil behaviour affected by NS stabilization.

The RNN units (importance score 0.12) played a key role in modelling sequential dependencies in the data, such as changes in slope stability over time due to factors like the number of treatment days or variable water pressure. While the LSTM units contributed to capturing long-term temporal relationships, their lower importance score of 0.06 suggests that simpler recurrent architectures were sufficient for the task or that the temporal variations were not particularly complex. The CNN kernel size had the least impact on model performance, with a score of 0.04, indicating that although kernel size affects the scope of feature detection, it was less influential than the other hyperparameters. Overall, Fig. 5 demonstrates that precise tuning of the learning rate and the feature extraction mechanism was pivotal to achieving high model accuracy, while the temporal modelling components provided additional, though less dominant, support in classifying slope stability.
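Because the reported importance scores sum to 1.00, they can be read as shares of the total importance. This small calculation uses the Fig. 5 values (the dictionary key names are illustrative labels) and shows that the learning rate and the number of CNN filters together account for 0.78 of the total.

```python
# Importance scores reported in Fig. 5 (key names are illustrative labels)
importance = {
    "learning_rate": 0.54,
    "cnn_filters": 0.24,
    "rnn_units": 0.12,
    "lstm_units": 0.06,
    "cnn_kernel_size": 0.04,
}

ranked = sorted(importance.items(), key=lambda kv: kv[1], reverse=True)
cumulative = 0.0
for name, score in ranked:
    cumulative += score
    print(f"{name:<16} {score:.2f}  cumulative {cumulative:.2f}")
```

The two recurrent components together contribute 0.18, less than the CNN filters alone, which is consistent with the observation that temporal modelling played a supporting role.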

Hyperparameter importance for hybrid models.

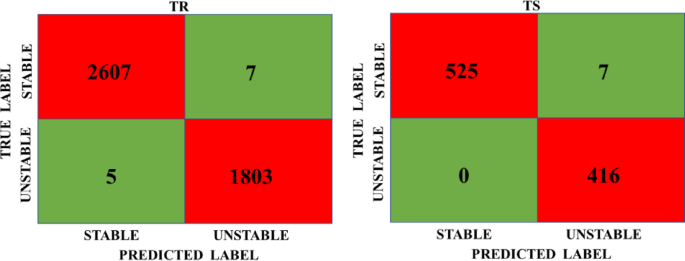

Confusion matrix of hybrid deep learning models

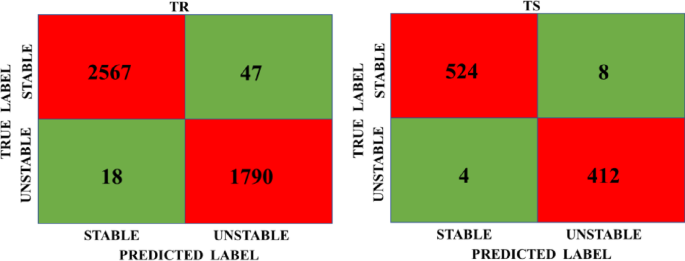

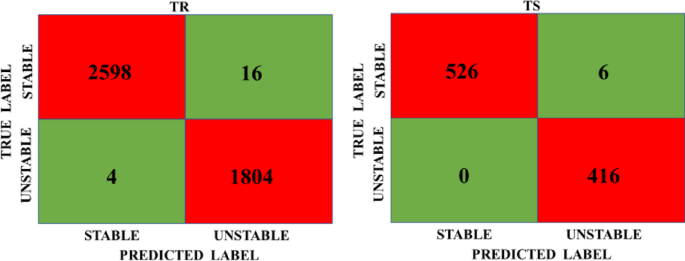

Figure 6 shows the confusion matrix of the CNN-LSTM-RNN model for training (TR) and testing (TS), indicating stable performance. The model achieves a high number of correct predictions (2567 true positives and 1790 true negatives) with few errors (18 false positives and 47 false negatives). Similarly, Fig. 7 shows the confusion matrix of the LSTM-CNN-RNN model for TR and TS; this model has 2598 true positives and 1804 true negatives in TR. Fig. 8 shows the confusion matrix of the RNN-CNN-LSTM model for TR and TS; this model has 2607 true positives and 1803 true negatives in TR. The TS results in Figs. 6, 7 and 8 follow a pattern similar to the TR results. This consistency between TR and TS suggests that the models are stable, generalizing well to unseen data without overfitting.

Confusion matrix of CNN-LSTM-RNN model for TR and TS.

Confusion matrix of LSTM-CNN-RNN model for TR and TS.

Confusion matrix of RNN-CNN-LSTM model for TR and TS.

Overfitting score analysis

Difference method

Table 8 presents the performance differences (TR – TS) for the three hybrid architectures, CNN-LSTM-RNN, LSTM-CNN-RNN and RNN-CNN-LSTM, across several evaluation metrics: Accuracy, F1 Score, Precision, Recall, Kappa, and Matthews Correlation Coefficient (MCC). Because differences closer to zero indicate more consistent performance on unseen data, models with the smallest absolute differences are considered superior in maintaining consistency between the training and testing phases. A positive difference means that performance dropped on the testing data, whereas a negative difference means that testing performance slightly exceeded training performance. Among the three architectures, RNN-CNN-LSTM demonstrated the best overall consistency, with the smallest differences across most metrics. It recorded the smallest differences in Precision (-0.001), F1 Score (-0.002), Accuracy (-0.002), Kappa (-0.003), and MCC (-0.003), suggesting that this architecture was the most effective in avoiding overfitting and maintaining consistent performance on the testing data. Its Recall difference (-0.004) was its largest in magnitude, which may indicate slight variability in identifying unstable slopes, although LSTM-CNN-RNN showed the same value. The LSTM-CNN-RNN architecture followed closely, with slightly larger differences: Accuracy (-0.003), F1 Score (-0.003), Precision (-0.002), and Recall (-0.004). Its larger Kappa (-0.005) and MCC (-0.005) differences compared with RNN-CNN-LSTM suggest slightly less consistency between phases, although it still performed better than CNN-LSTM-RNN in this respect. In contrast, the CNN-LSTM-RNN architecture showed the largest differences across most metrics, namely Accuracy (0.004), F1 Score (0.005), Precision (0.009), Kappa (0.008), and MCC (0.008). These larger positive differences indicate that while the model performed well during training, it did not fully maintain that performance on unseen testing data, suggesting a higher tendency toward overfitting. Interestingly, its Recall difference (-0.001) was the smallest in magnitude, showing that this model identified unstable slopes slightly better during testing, but at the cost of overall generalization.
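The difference method is a simple subtraction of testing from training scores. The sketch below applies it to the CNN-LSTM-RNN values quoted earlier in this section; precision is omitted because only its testing value (0.983) is quoted in the text. The resulting differences match those listed for this model in Table 8.

```python
# CNN-LSTM-RNN training (TR) and testing (TS) values quoted in the text
tr = {"accuracy": 0.996, "f1": 0.995, "recall": 0.997, "kappa": 0.991, "mcc": 0.991}
ts = {"accuracy": 0.992, "f1": 0.990, "recall": 0.998, "kappa": 0.983, "mcc": 0.983}

# TR - TS: positive = drop on test data, negative = test exceeds training
diff = {m: round(tr[m] - ts[m], 3) for m in tr}
print(diff)

# Consistency ranking: differences closest to zero first
print(sorted(diff, key=lambda m: abs(diff[m])))
```

Sorting by absolute value reflects the criterion used above, where the sign only indicates the direction of the change and the magnitude indicates the degree of inconsistency.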

Table 9 illustrates the percentage variation between Training (TR) and Testing (TS) performance for three hybrid model architectures CNN-LSTM-RNN, LSTM-CNN-RNN, and RNN-CNN-LSTM across several key evaluation metrics: Accuracy, F1 Score, Precision, Recall, Kappa, and Matthews Correlation Coefficient (MCC). In this context, lower percentage variations indicate better generalization performance and less overfitting, as they reflect more consistent behaviour between the training and testing phases.

Among the models, RNN-CNN-LSTM exhibited the smallest percentage variations across most metrics, indicating superior generalization and robustness. It recorded the lowest variations in accuracy (0.20%), F1 score (0.20%), precision (0.10%), Kappa (0.30%) and MCC (0.30%). These results suggest that this architecture was the most effective in maintaining consistent performance between the training and testing datasets, minimizing overfitting. However, both RNN-CNN-LSTM and LSTM-CNN-RNN showed slightly higher recall variations (0.40%), suggesting that both models faced similar challenges in consistently identifying unstable slopes during testing.

The LSTM-CNN-RNN model followed closely, with mild percentage variations in accuracy (0.30%), F1 score (0.30%), precision (0.20%), Kappa (0.50%) and MCC (0.50%), reflecting good but slightly less consistent performance than RNN-CNN-LSTM. Its higher recall variation (0.40%) suggests that its ability to identify unstable slopes was less consistent between training and unseen testing data. In contrast, the CNN-LSTM-RNN architecture showed the highest percentage variations across most metrics, including accuracy (0.40%), F1 score (0.50%), precision (0.90%), Kappa (0.80%) and MCC (0.80%). These larger variations indicate that while the model performed strongly during training, its ability to generalize to new, unseen data was less robust, indicating a higher degree of overfitting. Interestingly, recall (0.10%) showed the lowest variation for this architecture, suggesting that it identified unstable slopes more consistently than the other two models, but at the cost of generalization across the other metrics.
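The percentages in Table 9 appear to be the absolute TR – TS gaps of Table 8 expressed in percentage points (for example, the CNN-LSTM-RNN precision gap of 0.009 is reported as 0.90%), rather than changes relative to the training score, which would give about 0.91%. Under that assumption, the sketch below reproduces the RNN-CNN-LSTM variations quoted above from the training and testing values given earlier in this section.

```python
# RNN-CNN-LSTM TR/TS values quoted in the text
tr = {"accuracy": 0.992, "f1": 0.991, "precision": 0.999,
      "recall": 0.982, "kappa": 0.984, "mcc": 0.984}
ts = {"accuracy": 0.994, "f1": 0.993, "precision": 1.000,
      "recall": 0.986, "kappa": 0.987, "mcc": 0.987}

def pct_variation(tr, ts):
    """Absolute TR-TS gap in percentage points (assumed Table 9 definition)."""
    return {m: round(abs(tr[m] - ts[m]) * 100, 2) for m in tr}

variation = pct_variation(tr, ts)
print(variation)
```

Taking the absolute value discards the direction of the change, so a model whose testing scores exceed its training scores and one whose scores drop by the same amount receive the same percentage variation.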

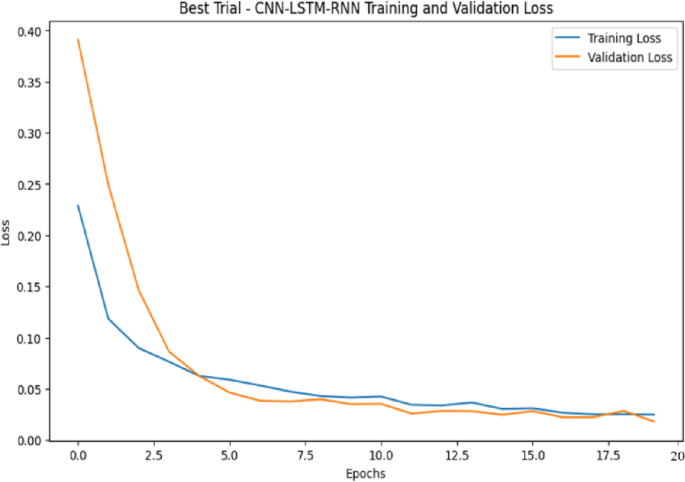

Training and validation loss method

Figure 9 illustrates the training and validation loss curves for a CNN-LSTM-RNN model over multiple epochs. The training loss (blue) and validation loss (orange) both show a significant decrease in the initial epochs, indicating effective learning. Importantly, the validation loss closely follows the training loss throughout the training process, with no noticeable divergence. This suggests that the model is not overfitting, as there is no significant gap between the two losses. Overfitting typically manifests as a point where the training loss continues to decrease while the validation loss starts increasing or stagnating. In this case, both losses converge toward a minimal value, reflecting good generalization. Therefore, this model appears well-regularized and capable of performing well on unseen data, demonstrating its robustness in learning meaningful patterns without memorizing the training dataset.
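The overfitting signature described above, training loss still falling while validation loss rises, can be checked programmatically. The function below is a hypothetical heuristic in the spirit of patience-based early stopping, not the procedure used in this study; it flags only a sustained rise (not stagnation), and the loss curves are invented for illustration.

```python
def divergence_epoch(train_loss, val_loss, patience=3):
    """Return the epoch index at which validation loss starts a run of `patience`
    consecutive increases while training loss keeps decreasing, or None."""
    run = 0
    for e in range(1, len(val_loss)):
        val_up = val_loss[e] > val_loss[e - 1]
        train_down = train_loss[e] < train_loss[e - 1]
        run = run + 1 if (val_up and train_down) else 0
        if run == patience:
            return e - patience + 1  # first epoch of the rising run
    return None

# Hypothetical loss curves (epochs 0..7)
train = [1.00, 0.60, 0.40, 0.30, 0.25, 0.20, 0.17, 0.15]
val_ok = [1.00, 0.65, 0.45, 0.36, 0.31, 0.28, 0.27, 0.26]
val_overfit = [1.00, 0.65, 0.45, 0.40, 0.42, 0.46, 0.51, 0.57]

print(divergence_epoch(train, val_ok))       # None
print(divergence_epoch(train, val_overfit))  # 4
```

Requiring several consecutive increases (the `patience` parameter) keeps a single noisy epoch from being mistaken for divergence.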

CNN-LSTM-RNN training and validation loss.

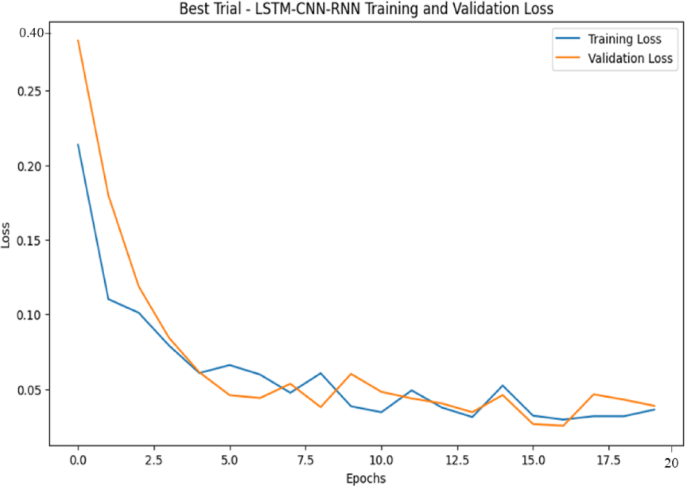

Figure 10 shows the training and validation loss curves for the LSTM-CNN-RNN model over multiple epochs. The rapid decrease in both losses during the initial epochs indicates effective learning and convergence. The training loss (blue) and validation loss (orange) remain closely aligned, showing that the model generalizes well without overfitting. Although minor fluctuations in the validation loss appear in later epochs, they remain within an acceptable range, suggesting natural variation rather than overfitting. Overfitting would typically be characterized by a continued decrease in training loss while the validation loss begins to rise, which is not observed here. Instead, the validation loss stabilizes at a low value, indicating that the model maintains strong predictive performance on unseen data. The small gap between the training and validation losses further confirms that the model is well-regularized and effectively captures the underlying patterns in the data without memorizing them.
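To separate late-epoch fluctuations from a genuine upward drift, one option is to summarize the last few epochs by the mean generalization gap (validation minus training loss) and the least-squares slope of the validation loss. This is a sketch with hypothetical loss values and an arbitrary window of four epochs; the paper does not define an acceptance threshold, so none is imposed here.

```python
def linear_slope(y):
    """Least-squares slope of y against epoch index 0..len(y)-1."""
    n = len(y)
    x_mean = (n - 1) / 2
    y_mean = sum(y) / n
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(y))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den

def late_epoch_summary(train_loss, val_loss, window=4):
    """Mean gap (val - train) and validation-loss slope over the last `window` epochs."""
    t, v = train_loss[-window:], val_loss[-window:]
    gap = sum(b - a for a, b in zip(t, v)) / window
    return gap, linear_slope(v)

# Hypothetical curves: validation loss fluctuates around a plateau late in training
train = [1.00, 0.50, 0.30, 0.22, 0.18, 0.16, 0.15, 0.14]
val = [1.00, 0.55, 0.34, 0.27, 0.24, 0.26, 0.23, 0.25]

gap, slope = late_epoch_summary(train, val)
print(f"mean gap {gap:.4f}, validation slope {slope:.4f} per epoch")
```

Here the validation loss zig-zags but its fitted slope is essentially zero, which is consistent with noise around a plateau; a steadily rising tail such as `[0.30, 0.34, 0.39, 0.45]` gives a clearly positive slope of about 0.05 per epoch.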

LSTM-CNN-RNN training and validation loss.

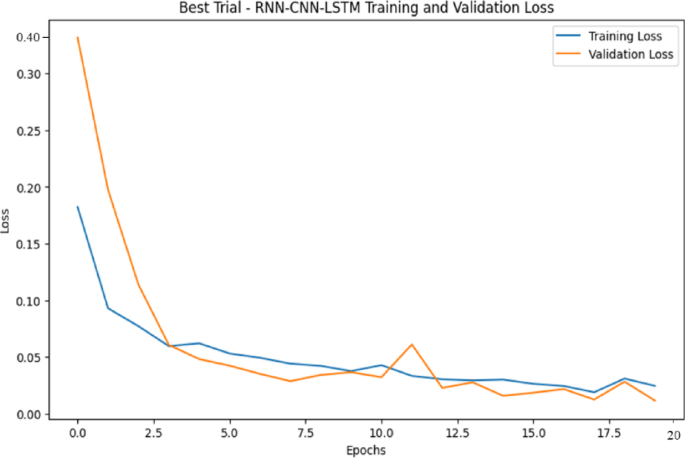

Figure 11 shows the training and validation loss curves for the RNN-CNN-LSTM model over multiple epochs. The steep decrease in both losses during the initial epochs indicates rapid learning and effective weight optimization. Importantly, the training loss (blue) and validation loss (orange) follow a similar trend, suggesting that the model maintains a strong generalization capacity. The convergence of the two loss curves at low values confirms that the model successfully extracts meaningful features from the data without overfitting. A key observation is the smooth and stable nature of both curves, with only minor fluctuations in the validation loss. These fluctuations are expected given variations in the validation data and do not indicate overfitting. Overfitting typically occurs when the training loss continues to decrease while the validation loss stagnates or increases significantly, which is not the case here. Instead, the close alignment of both loss curves throughout training suggests that the model is well-regularized and robust, ensuring reliable performance on unseen data. Table 10 presents a comparative analysis of the hybrid deep learning models in the overfitting analysis.

RNN-CNN-LSTM training and validation loss.
