Deep learning approaches for assessing pediatric sleep apnea severity through SpO2 signals

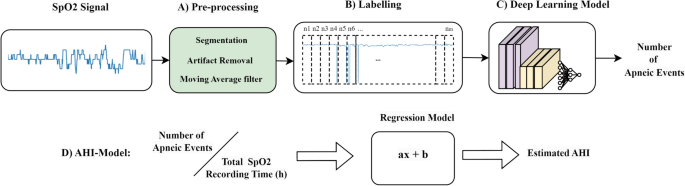

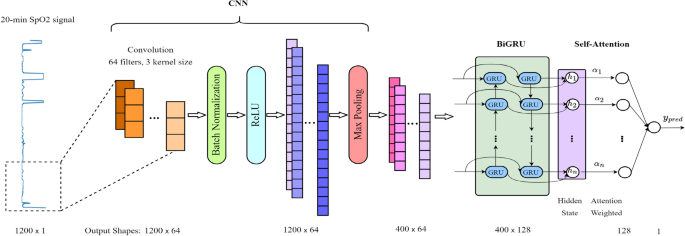

The methodology for AHI estimation in our study is outlined in Fig. 1, comprising an end-to-end pipeline with four key stages: (A) Signal Segmentation and Pre-processing; (B) Labeling; (C) Deep Learning Model; and (D) AHI Estimation.

End-to-End Process Flow for AHI Estimation from SpO2 Signals.

Signal segmentation and pre-processing

The SpO2 signals, acquired from PSG using a pulse oximeter finger probe, varied in sampling rates, ranging from 1 to 512 Hz. Our initial step involved re-sampling the SpO2 recordings to a unified rate of 1 Hz, rounded to the nearest second decimal place. This standardization, inspired by prior research28,34, aimed to reduce computational demands and achieve consistency across signals. Following re-sampling, we divided the SpO2 signals from each subject into non-overlapping segments of 20 min (1200 samples). This segmentation strategy facilitated the detection of sustained desaturation events, consistent with criteria defining desaturation clusters of at least 10 min duration34. To prepare the signals for analysis, we initially addressed motion artifacts and zero-level artifacts, which commonly arise from sensor disconnections. We eliminated abrupt changes exceeding 4% per second between consecutive samples over a one-second interval and disregarded any instances where oxygen saturation fell below 50%, following the guidance of previous studies9,35. We designed an algorithm inspired by these methodologies to effectively locate zero-level artifacts by detecting signal values below 50%, which are not typical for a healthy individual, and identifying abrupt changes by checking for differences greater than 4% between consecutive signal values. These artifacts were then removed and substituted with values derived from linear interpolation between their preceding and following values. This step was crucial for ensuring data integrity, as significant SpO2 drops below 50% and rapid fluctuations are often indicative of measurement errors or sensor issues. Furthermore, to smooth the signal and reduce short-term variations, we applied a 3-s moving average (MA) filter. This effectively attenuated sharp spikes and ripples in the data35.

Labeling of SpO2 signal segments

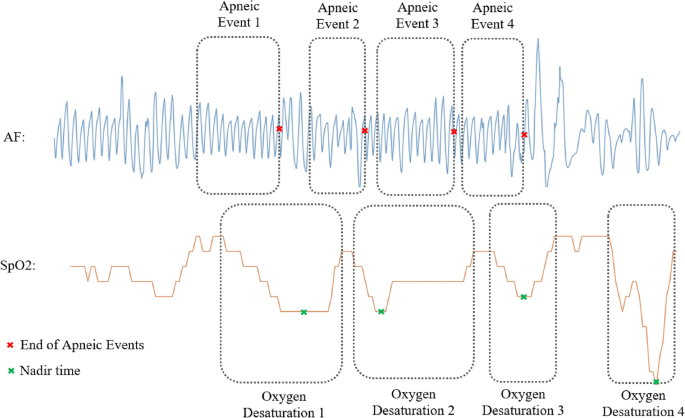

The labeling process for each 20-min SpO2 signal segment was crucial in our study. Based on annotations provided by sleep technicians, as referenced in32, our labeling algorithm was meticulously designed to accurately identify all desaturation events associated with apneic episodes. This algorithm operates on the principle that desaturations linked to any respiratory event rely on the nadir desaturation (lowest oxygen level during desaturation) reached, typically within a 30-s span following the event’s conclusion32. For each segment, the output label was determined based on the number of apnea and hypopnea events associated with a 3% oxygen desaturation occurring within the 20-min window. Figure 2 exemplifies this process, showcasing the correlation between apneic events and their subsequent oxygen desaturations in AF and SpO2 signals. This labeling was meticulously conducted in accordance with the annotation files provided in the CHAT dataset. To validate the effectiveness of our labeling algorithm, we conducted a comparative analysis. This involved matching the number of detected apneic events, linked with a 3% oxygen desaturation, against the sum of original PSG variables from the dataset. These variables describe the number of each type of apneic event associated with a 3% oxygen desaturation. In our rigorous validation process, only recordings with a labeling error margin below 10% were considered suitable for training and evaluating our models. This criterion led to the selection of 884 SpO2 recordings for our study. Table 1 in our paper presents the clinical and demographic data of the subjects from these selected recordings.

Synchronous events of apnea and oxygen desaturation.

Deep learning model

ResNet architecture for SpO2 signal analysis

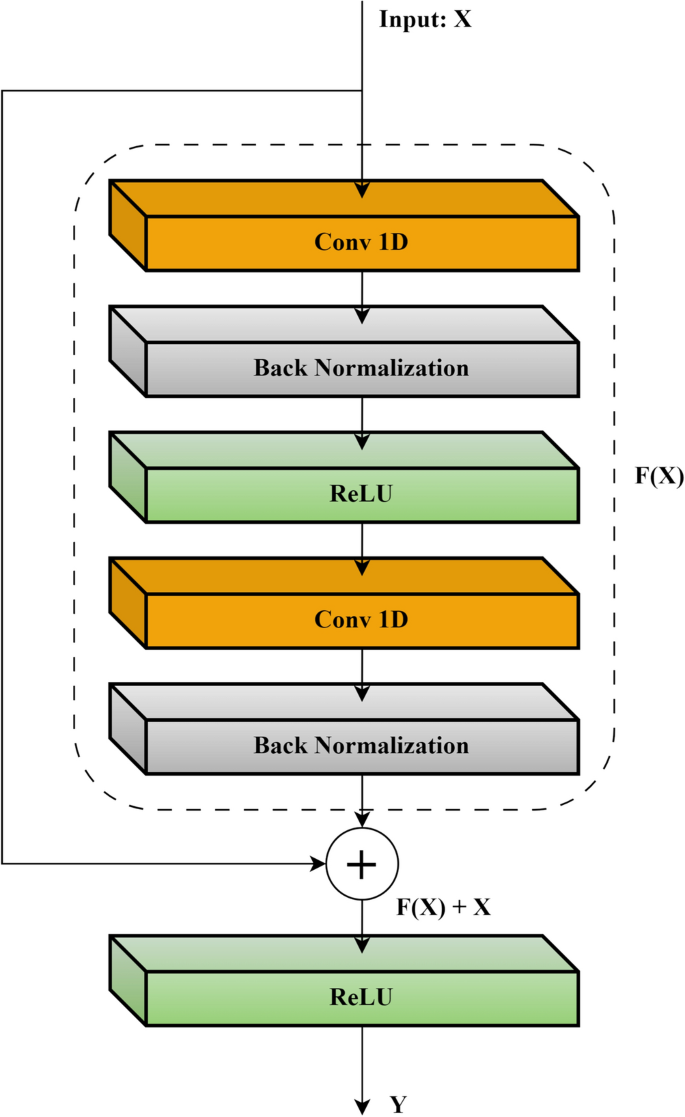

CNN-based models have demonstrated significant effectiveness in diagnosing the severity of pediatric OSA, as shown in previous research27,28,29,30. However, these networks often encounter a major obstacle when increasing the number of convolutional layers: the training loss tends to plateau, a phenomenon largely attributed to the vanishing gradient problem. The Residual Network (ResNet) framework was developed to address this challenge, notably enhancing the accuracy of deep CNNs36. ResNet introduces the concept of residual learning, a paradigm shift from traditional deep network methodologies that attempt a direct mapping from input to output. Instead, ResNets focus on learning residual mappings. This is mathematically expressed as Y = F(X) + X, where Y denotes the desired output, F(X) represents the residual function, and X is the input. This approach allows the network to concentrate on learning the additional information (F(X)) needed to achieve the desired output Y, particularly beneficial when F(X) is close to zero. The benefits of ResNet include easing the training of deep networks by alleviating the gradient vanishing issue and enabling the construction of much deeper networks without sacrificing accuracy. Additionally, ResNet incorporates skip connections, which directly add the input X to the output of the residual function F(X), promoting smoother gradient flow during training. Figure 3 illustrates a typical residual block, showing how input X is transformed into its desired mapping Y.

Schematic of a residual learning block.

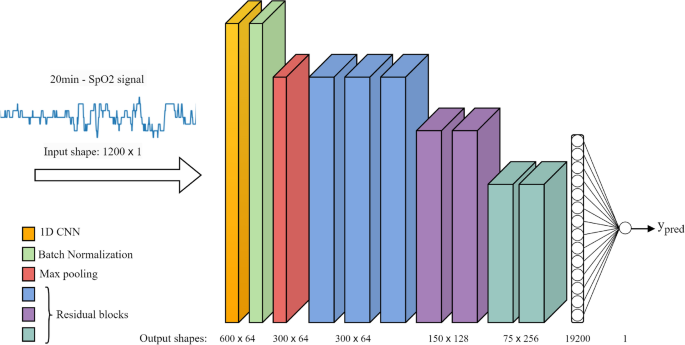

In our study, we have adapted the ResNet-34 architecture, initially designed for image recognition36, into a 1D format suitable for analyzing SpO2 signals. This adaptation involved replacing 2D convolutional layers (Conv2D) with 1D convolutional layers (Conv1D), which are more apt for processing time-series data like SpO2 signals. This adaptation involved replacing 2D convolutional layers (Conv2D) with 1D convolutional layers (Conv1D), which are more apt for processing time-series data like SpO2 signals. Given the size of our dataset, we started with the relatively shallower ResNet-34 model, consisting of 34 layers. However, to mitigate overfitting, we optimized the model to 16 layers, which provided efficient performance with quicker convergence compared to deeper models.

Figure 4 presents a detailed depiction of our modified ResNet architecture, illustrating the sequence and function of each layer:

-

Input Layer: Receives the raw 20-min SpO2 signal segment with a size of 1200 × 1.

-

Conv1D Layer: Applies learnable filters to the input, extracting fundamental patterns and low-level features.

-

Batch Normalization: Normalizes the activations from the Conv1D layer, stabilizing and expediting the training by reducing internal covariate shift.

-

ReLU Activation: Introduces non-linearity through the rectified linear unit (ReLU) function.

-

MaxPooling1D: Reduces the dimensionality of the data, maintaining essential features while lessening computational load.

-

Residual Blocks: Each block, consisting of two convolutional layers, forms the core component of the ResNet. Our model includes seven such blocks.

-

Flatten Layer: Transforms the output of the last residual block into a one-dimensional vector, ensuring compatibility with the subsequent fully connected layer.

-

Fully Connected Layer: Processes the received output to perform the final mapping, producing a numerical prediction.

-

Output Layer: Generates the final prediction for the number of apneic events (ypred).

Architecture of the modified ResNet model for SpO2 signal analysis.

Development of the CNN-BiGRU-attention architecture

In our study, we designed a sophisticated model that combines a CNN with a Bidirectional Gated Recurrent Unit (BiGRU) and an attention mechanism. This integrative approach, inspired by its successful application in blood pressure estimation37, is adapted here to process SpO2 signals for AHI estimation. The incorporation of the attention mechanism is driven by its proven effectiveness in focusing on pertinent segments within datasets, a principle extensively utilized in diverse fields such as image captioning, machine translation, and speech recognition38. Our model aims to investigate the synergy of these distinct architectures, assessing how the RNN structure processes features extracted by the CNN and the role of the attention mechanism in augmenting RNN performance for AHI estimation. Figure 5 in our paper illustrates the architecture of the proposed model. The process begins with the CNN layer, which is responsible for extracting pertinent features from the input SpO2 signals. Following feature extraction, the BiGRU layer, recognized for its capability to handle long-term dependencies in sequential data, processes these features. This is achieved by analyzing the data in both forward and backward temporal directions, thereby capturing complex temporal dynamics inherent in the SpO2 signals. Furthermore, we integrate an attention mechanism with the outputs of the BiGRU layer. This mechanism assigns weights to different temporal features, enabling the model to concentrate its predictive capacity on the most crucial segments of the signal. This combination—CNN for initial feature extraction, BiGRU for in-depth temporal processing, and attention for targeted focus—constitutes a potent framework designed to enhance the accuracy of AHI estimation from SpO2 signals.

CNN-BiGRU Attention model architecture for SpO2 signal analysis.

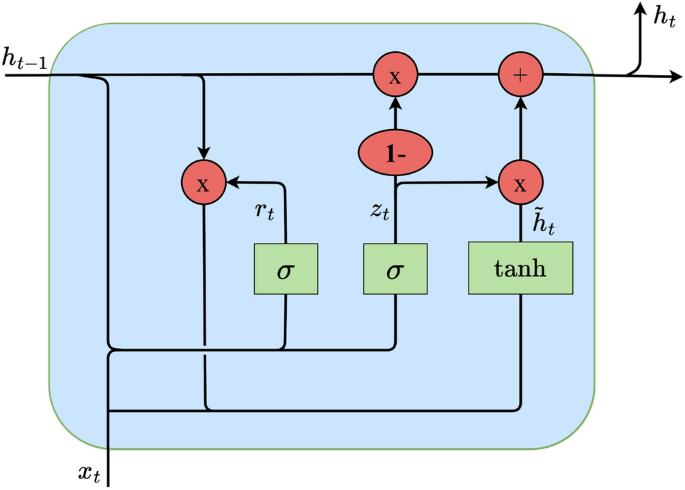

It is pertinent to note that while RNNs are pivotal in handling sequential data tasks such as speech recognition, they often struggle with long sequences due to the vanishing gradient problem39. LSTM networks, introduced by Hochreiter and Schmidhuber40, have been developed to counter this issue, incorporating specialized gates to manage information flow. Bidirectional LSTMs (BiLSTMs) further refine this approach by processing data in both forward and backward directions, thus encompassing past and future contexts in the analysis. BiGRUs, a variant conceived by Cho et al.41, streamline the design of LSTMs by combining the input and forget gates, thereby reducing the model’s complexity while retaining efficiency in processing bidirectional sequence data. The architecture of the GRU cell employed in this analysis is portrayed in Fig. 6. At each time step, the GRU cell encompasses two crucial input vectors: the preceding hidden output value \(h_t-1\), which includes feature values from the previous time step across all feature maps extracted by the CNN layer filters, and the current input vector \(x_t\), which contains the current feature values from all feature maps extracted by the CNN layer filters. The computation of the contemporary hidden output value of the cell \(h_t\) unfolds through the following equations:

$$ \beginarray*20l z_t = \sigma \left( W_z \cdot \left[ h_t – 1 ,x_t \right] \right) \hfill \\ \begingathered r_t = \sigma \left( W_r \cdot \left[ h_t – 1 ,x_t \right] \right) \hfill \\ \tilde\texth_t = \tanh \left( W_h \cdot \left[ r_t \odot h_t – 1 ,x_t \right] \right) \hfill \\ \, \hfill \\ \endgathered \hfill \\ \begingathered h_t = \left( 1 – z_t \right) \odot h_t – 1 + z_t \odot \tilde\texth_t \hfill \\ \endgathered \hfill \\ \hfill \\ \endarray $$

(1)

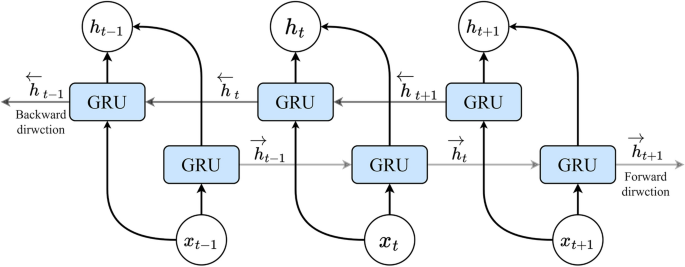

Schematic of a Gated Recurrent Unit (GRU) cell.

Here, \(\textz_\textt\) and \(r_t\) denote the update and reset gate vectors, respectively. The weight parameters \(W_z\), \(W_r\) and \(W_h\) are trainable and contribute to the gate operations. The term \(\widetildeh _t\) signifies the candidate state, capturing the extent of assimilating present information post the reset gate. The activation functions \(\sigma (\bullet )\) and \(\texttanh(\bullet )\) encapsulate the sigmoid and hyperbolic tangent functions, respectively, while \(\odot \) signifies element-wise multiplication. Unlike its unidirectional counterpart, the conventional GRU, a bidirectional GRU (BiGRU) is adopted in this study. A BiGRU encompasses both forward and backward layer cells’ hidden output values. Figure 7 shows the architecture of a BiGRU layer structure, with one pair of GRU cells at each time step. The final hidden output vector of the BiGRU layer at time step t, \(\overrightarrowh_out_t\) is a concatenation of the forward hidden output vector \(\overrightarrowh_t\) (including forward layer’s cells hidden output values) and backward hidden output vector \(\overleftarrowh_t\) (including backward layer’s cells hidden output values):

$$ \begingathered \vech_t = [h_tc_1 , \, h_tc_2 ,…, \, h_tc_n – 1 , \, h_tc_n ] \, \hfill \\ \mathoph\limits^\leftarrow _t = [h_tc_n , \, h_tc_n – 1 ,…, \, h_tc_2 , h_tc_1 ] \hfill \\ \overrightarrow h_out_t = [\vech_t , \, \mathoph\limits^\leftarrow _t ] \hfill \\ \endgathered $$

(2)

Schematic of a Bidirectional GRU (BiGRU) layer structure with one pair GRU cells at each time step.

The attention mechanism, initially introduced by Bahdanau et al. in 201442 to address limitations in traditional sequence-to-sequence models, marked a significant breakthrough in natural language processing. This mechanism, commonly referred to as attention, has since evolved into self-attention or intra-attention, finding widespread application in diverse DL tasks. In our work, we harness the power of self-attention to enhance the model’s ability to capture crucial temporal features within sequential SpO2 signals. By applying self-attention to the outputs of the BiGRU layer, our model dynamically assigns weights to different temporal features, prioritizing the most relevant signal segments for accurate AHI estimation. In developing the self-attention mechanism, we consider the final hidden state matrix \(H_s\) from the BiGRU including hidden output vectors of the BiGRU at each time step \(h_t\), where t \(\in \) [1, N]. The significance score vector \(\overrightarrows\) is computed using a score function, \(score(\cdot )\), before multiplying the hidden state matrix by a randomly initialized weight and bias vectors (\(\overrightarroww\) and \(\overrightarrowb\)) as outlined below:

$$ \, \overrightarrow s = score\left( H_s \vecw + \vecb \right), \, H_s = \left[ \begingathered \vech_out_1 \hfill \\ \vech_out_2 \hfill \\ \vdots \hfill \\ \vech_out_N \hfill \\ \endgathered \right] $$

(3)

For the score function, we initially experimented with the dot product, tanh, and ReLU functions. Ultimately, we selected ReLU as the score function because it provided better performance and faster convergence during the training process. After obtaining the importance score values for each BiGRU hidden output vectors at each time step (including the hidden output value of all cells) and formming the score vector of \(\overset\lower0.5em\hbox$\smash\scriptscriptstyle\rightharpoonup$ s \), the attention weight \(\alpha _i\) for the hidden output vector at time step \(t\) is determined by applying a softmax function to the score vector:

$$ \begingathered \overrightarrow s \, = \, [s_1 ,s_2 ,…,s_N ] \hfill \\ a_i = \frac\exp \left( s_i \right)\sum\limits_j = 1^N \exp \left( s_j \right) \, \hfill \\ \endgathered $$

(4)

This softmax function guarantees that the attention weights collectively sum to 1, effectively normalizing the significance scores across all time step vectors within the hidden state matrix. The attention weights vector \(\overrightarrow\alpha \) includes all attention weights for each cell’s hidden output vector. The final output vector \(\overrightarrowv\) is derived by multiplying the attention vector by the hidden state matrix of the BiGRU:

$$ \begingathered \overrightarrow a = [a_1 , \, a_2 ,…, \, a_N ] \hfill \\ \overrightarrow v = \overrightarrow a H_s \hfill \\ \endgathered $$

(5)

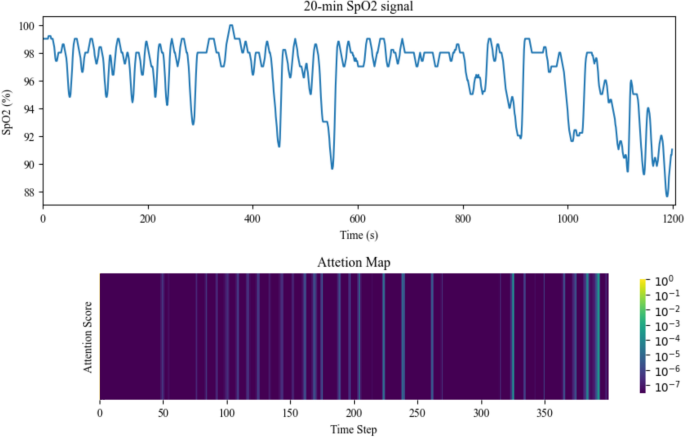

This summation offers a comprehensive representation of the input sequence, highlighting the contributions of distinct time steps based on their computed attention weights. To futher clarify the effect of the attention layer on each part of the input signal segment, we plotted an attention map (heatmap) of the attention weights vector (attention scores) for a 20-min signal segment from the test set, as represented in Fig. 8. The heatmaps, obtained by graphing the alpha vector resulting from the softmax output in Eq. (4), were scaled using log10 to better represent the distribution of attention score values.

Attention Map of the Attention Scores Derived from the Attention Layer for a 20-Minute Signal Segment Input.

Model training and optimization

For training the ResNet and CNN-BiGRU-Attention models, we employed distinct initialization methods. The ResNet model was initialized using the He-normal method43, while the CNN-BiGRU-Attention model started with random weights. Data were fed into both models in batches and shuffled at each training epoch to enhance convergence. Since the number of non-apneic SpO2 segments exceeded the apneic segments, we balanced the data by oversampling the apneic segments, repeating them before the start of each training process. To facilitate efficient weight updates, we employed the adaptive moment estimation (Adam) optimizer44 with an initial learning rate. For the loss function, we chose the Huber loss45 due to its robustness, as evidenced by its strong performance in previous AHI estimation studies28,29. The Huber loss strikes a balance between quadratic and linear loss behaviors, as expressed by its formula:

$$ L(\haty_n ,y_n ,\delta ) = \left\{ {\beginarray*20c \frac12\left( \haty_n – y_n \right)^2 , & \haty_n – y_n \right \\ \haty_n \left( \left \right), & otherwise \\ \endarray } \right.. $$

(6)

Here, \(\widehaty_n\) and yn denote the label and model output for segment n, respectively. The parameter δ acts as a threshold and serves as a tunable hyperparameter crucial for effectively handling data with outliers and noise during the model optimization process. The Huber loss function is particularly effective for datasets with outliers, providing a quadratic loss for smaller errors (inliers) and a linear loss for larger errors (outliers). Two key techniques were implemented to optimize the training process. Firstly, a dynamic learning rate reduction strategy was employed, reducing it by 50% every 10 epochs within the loss function. This strategy promotes training stability, facilitating smoother convergence. Secondly, early stopping was introduced to halt the training process if the validation set loss did not improve for 30 consecutive epochs, ensuring the generalization capability of the models. We employed the Keras deep learning framework with a TensorFlow backend for model training in the Google Colab environment, leveraging the availability of NVIDIA Tesla T4 GPUs.

AHI estimation

After obtaining the estimated number of apneic events in each 20-min segment, the AHI is calculated as the sum of the detected apneic events divided by the total recording duration. Utilizing the DL model’s output yn for each 20-min SpO2 segment (n = 1, 2, 3, …, N), the AHI for each patient is calculated using the formula:

$$ \textAHI – Model = \frac\sum\limits_n = 1^N y_n \textSpO_2 \text signal recording time (h) \, $$

(7)

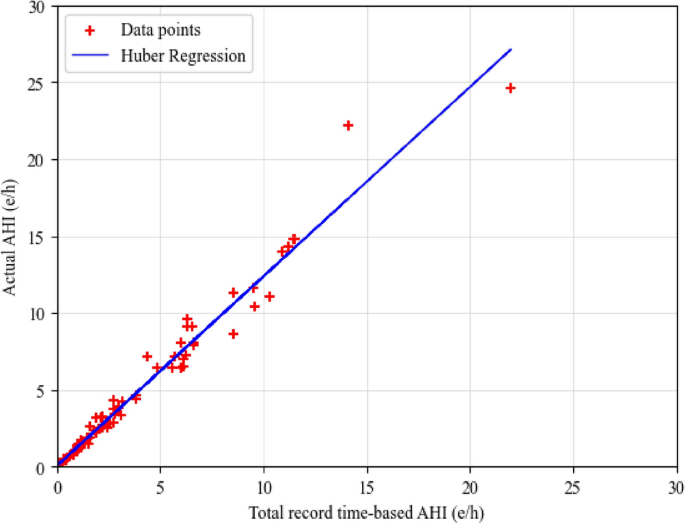

where N is the total number of 20-min SpO2 segments in the SpO2 signal. To calculate the AHI, knowledge of the total sleep duration for each patient is essential, particularly for sleep staging analysis. In the absence of this information, we made the assumption that the total length of the SpO2 signal serves as an approximation of the sleep duration. To refine this estimation, a regression model was employed to map the calculated AHI, based on the total SpO2 recorded time, to the actual AHI, taking sleep duration into consideration. The regression model utilizes an optimization method to determine the optimal coefficients of the linear equation \(ax+b\) that minimizes an error function. In this study, we opted for the Huber loss function (Huber regressor) to train the model on the validation set. The Huber loss function adjusts the parameters of the linear equation during training to minimize the loss across all predictions. To strike a balance between sensitivity to outliers and overall model performance, the delta value is crucial. In our case, the delta value was determined by selecting the value that minimized the root mean square error on the validation set. The choice of a delta value significantly influences the behavior of the Huber loss function. A higher delta makes the loss more robust to outliers, as it allows for a larger linear region, while a lower delta increases sensitivity to outliers by making the loss more quadratic. In our study, after experimenting with different delta values, we found that a delta of 6 led to the minimum root mean squared error on the validation set. This particular value was chosen to strike an optimal balance, aiming to maximize the accuracy of our AHI estimates in the presence of data variability and potential outliers. This approach effectively mitigates the lack of explicit sleep duration information, enhancing the accuracy of AHI estimation. The final estimated AHI is obtained through the Huber regression model. Figure 9 displays a scatter plot of total record time-based AHI and actual AHI in the validation set, along with the fitted regression function line on data points.

Scatter plot of total record time-based AHI and actual AHI in the validation set.

link