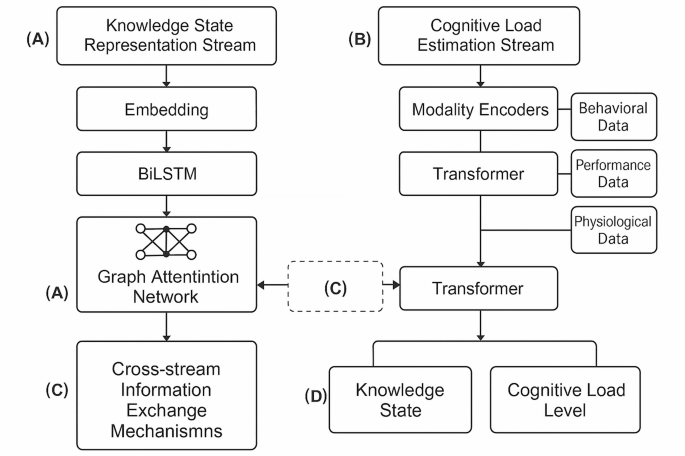

Deep knowledge tracing and cognitive load estimation for personalized learning path generation using neural network architecture

Learning path optimization algorithm

The generation of optimal learning paths requires balancing two potentially competing objectives: maximizing knowledge acquisition while maintaining appropriate cognitive load levels. We formulate this as a constrained dual-objective optimization problem within a dynamic decision framework [43]. Unlike conventional single-objective approaches that focus solely on knowledge gain, our algorithm explicitly models the trade-off between learning efficiency and cognitive sustainability.

The optimization problem can be formally defined as:

$$\max_{\pi}\;\mathbb{E}\left[\sum_{t=0}^{T}\gamma^{t}\left(w_{1}R_{K}\left(s_{t},a_{t}\right)+w_{2}R_{C}\left(s_{t},a_{t}\right)\right)\right]$$

where \(\pi\) represents the learning path policy, \(\gamma\) is a discount factor, \(w_{1}\) and \(w_{2}\) are weights balancing the two objectives, \(R_{K}\) is the knowledge acquisition reward, and \(R_{C}\) is the cognitive load balancing reward. The state \(s_{t}\) encompasses both the current knowledge state vector and cognitive load level, while action \(a_{t}\) corresponds to selecting the next learning activity.
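As a minimal illustration of the objective above, the following sketch evaluates the discounted, weighted sum for one sampled trajectory. The function name and the representation of rewards as plain per-step lists are assumptions made for illustration; the expectation over trajectories would be approximated by averaging this quantity over many rollouts.

```python
from typing import Sequence


def discounted_objective(
    r_k: Sequence[float],
    r_c: Sequence[float],
    w1: float,
    w2: float,
    gamma: float,
) -> float:
    """Return sum_t gamma^t * (w1 * R_K[t] + w2 * R_C[t]) for one trajectory.

    r_k and r_c hold the per-step knowledge and cognitive load rewards
    (hypothetical inputs; the source does not prescribe a data layout).
    """
    if len(r_k) != len(r_c):
        raise ValueError("reward sequences must have the same length")
    return sum(
        (gamma ** t) * (w1 * rk + w2 * rc)
        for t, (rk, rc) in enumerate(zip(r_k, r_c))
    )
```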

The weights \(w_{1}\) and \(w_{2}\) are critical for balancing knowledge acquisition against cognitive load management. Rather than using static weights, we employ an adaptive weighting scheme that adjusts based on the learner’s current state:

$$w_{1}=\lambda+\left(1-\lambda\right)\cdot f\left(k_{t}\right)$$

where \(\lambda\) (set to 0.6 based on validation experiments) ensures a minimum priority for knowledge acquisition, and \(f\left(k_{t}\right)\) is a monotonically decreasing function of the current knowledge state, so that knowledge acquisition is prioritized for novices and cognitive load management gains weight as expertise develops. This dynamic weighting was validated through a sensitivity analysis comparing fixed and adaptive weights across different student profiles, which showed a 12% improvement in outcomes with adaptive weighting.
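The adaptive weight can be sketched as follows. The text does not specify the form of \(f\), so this example assumes \(f(k_t) = 1 - \bar{k}_t\), where \(\bar{k}_t\) is the mean mastery over concepts in \([0, 1]\); any other monotonically decreasing function could be substituted.

```python
from typing import Sequence


def adaptive_w1(knowledge_state: Sequence[float], lam: float = 0.6) -> float:
    """Compute w1 = lam + (1 - lam) * f(k_t).

    ASSUMPTION: f(k_t) = 1 - mean(k_t), with each k_{t,i} in [0, 1].
    The source only requires f to be monotonically decreasing.
    """
    if not knowledge_state:
        raise ValueError("knowledge_state must not be empty")
    mean_k = sum(knowledge_state) / len(knowledge_state)
    f = min(1.0, max(0.0, 1.0 - mean_k))  # clip to [0, 1] for safety
    return lam + (1.0 - lam) * f
```

Under this assumption a complete novice (all mastery values 0) receives \(w_1 = 1\), while a learner with full mastery receives the floor value \(w_1 = \lambda\).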

The knowledge acquisition reward function rewards improvements in knowledge state while accounting for the relevance to learning goals:

$$R_{K}\left(s_{t},a_{t}\right)=\sum_{i=1}^{K}\alpha_{i}\cdot\max\left(0,\,k_{t+1,i}-k_{t,i}\right)$$

where \(k_{t,i}\) represents the knowledge level for concept \(i\) at time \(t\), and \(\alpha_{i}\) denotes the importance weight of concept \(i\) relative to learning objectives.
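A direct transcription of this reward, assuming knowledge states are given as equal-length lists of per-concept mastery values (the function name is illustrative):

```python
from typing import Sequence


def knowledge_reward(
    k_now: Sequence[float],
    k_next: Sequence[float],
    alpha: Sequence[float],
) -> float:
    """R_K = sum_i alpha_i * max(0, k_{t+1,i} - k_{t,i}).

    Only improvements contribute; a drop in mastery is clipped to zero.
    """
    if not (len(k_now) == len(k_next) == len(alpha)):
        raise ValueError("all inputs must have one entry per concept")
    return sum(
        a * max(0.0, nxt - cur)
        for a, cur, nxt in zip(alpha, k_now, k_next)
    )
```

Because of the clipping, forgetting on one concept does not offset gains on another within this reward term.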

The cognitive load balancing reward function is designed to keep cognitive load within the optimal learning zone, penalizing both cognitive underload and overload [44]:

$$R_{C}\left(s_{t},a_{t}\right)=-\beta\cdot\left|CL_{t}-CL_{opt}\right|^{2}$$

where \(CL_{t}\) is the estimated cognitive load at time \(t\), \(CL_{opt}\) is the individually calibrated optimal cognitive load level, and \(\beta\) is a scaling parameter.
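The quadratic penalty can be sketched in a few lines; the scale of the cognitive load values (here taken to be in \([0, 1]\)) is an assumption for illustration.

```python
def cognitive_load_reward(cl_t: float, cl_opt: float, beta: float = 1.0) -> float:
    """R_C = -beta * |CL_t - CL_opt|^2.

    The reward is zero at the learner's optimal load and becomes more
    negative symmetrically for underload and overload.
    """
    return -beta * (cl_t - cl_opt) ** 2
```

For example, with `cl_opt = 0.6` and `beta = 2.0`, loads of 0.4 and 0.8 are penalized equally, since both are 0.2 away from the optimum.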

To ensure pedagogically sound learning paths, we incorporate multiple constraints into the optimization problem:

$$\forall\, i,j\in K,\ \text{Prereq}\left(i,j\right)\Rightarrow\tau\left(i\right)<\tau\left(j\right)$$

$$\forall\, t\in\left[0,T\right],\ CL_{min}\le CL_{t}\le CL_{max}$$

$$\sum_{i=1}^{K}k_{T,i}\ge\theta_{mastery}$$

where \(\tau\left(i\right)\) denotes the time when concept \(i\) is introduced, \(\text{Prereq}\left(i,j\right)\) indicates that concept \(i\) is a prerequisite for concept \(j\), \(CL_{min}\) and \(CL_{max}\) define the acceptable range of cognitive load, and \(\theta_{mastery}\) is the minimum acceptable overall mastery level upon completion.
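The three constraints can be checked for a candidate path as sketched below. The data layout is hypothetical: a path is an ordered list of concept identifiers, \(\tau(i)\) is taken to be the index of the first occurrence of concept \(i\), and a concept whose prerequisite never appears in the path is treated as a violation (an assumption the text does not settle).

```python
from typing import Dict, Hashable, Iterable, Sequence, Tuple


def first_introduction(order: Sequence[Hashable]) -> Dict[Hashable, int]:
    """Map each concept to tau(i), the index where it first appears."""
    tau: Dict[Hashable, int] = {}
    for t, concept in enumerate(order):
        tau.setdefault(concept, t)
    return tau


def satisfies_constraints(
    order: Sequence[Hashable],
    prereqs: Iterable[Tuple[Hashable, Hashable]],
    cl_trace: Sequence[float],
    cl_min: float,
    cl_max: float,
    final_knowledge: Sequence[float],
    theta_mastery: float,
) -> bool:
    """Return True if a path meets all three constraints."""
    tau = first_introduction(order)
    # Prerequisite ordering: Prereq(i, j) implies tau(i) < tau(j).
    for i, j in prereqs:
        if j in tau and (i not in tau or tau[i] >= tau[j]):
            return False
    # Cognitive load must stay inside [cl_min, cl_max] at every step.
    if any(cl < cl_min or cl > cl_max for cl in cl_trace):
        return False
    # Total final mastery must reach the threshold.
    return sum(final_knowledge) >= theta_mastery
```

In a search procedure, a check of this kind could be used to prune candidate actions or to reject completed rollouts.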

Given the computational complexity of exactly solving this constrained optimization problem, we implement an approximation algorithm based on Monte Carlo Tree Search (MCTS) with upper confidence bounds [45]:

$$a_{t}=\arg\max_{a\in A\left(s_{t}\right)}\left\{Q\left(s_{t},a\right)+c\sqrt{\frac{\ln N\left(s_{t}\right)}{N\left(s_{t},a\right)}}\right\}$$

where \(Q\left(s_{t},a\right)\) is the estimated value of taking action \(a\) in state \(s_{t}\), \(N\left(s_{t}\right)\) is the number of times state \(s_{t}\) has been visited, \(N\left(s_{t},a\right)\) is the number of times action \(a\) has been taken in state \(s_{t}\), and \(c\) is an exploration parameter.
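The selection rule above can be sketched for a single tree node as follows. This covers only the action-selection step, not a full MCTS loop with expansion, rollout, and backup. Two assumptions are made: \(N(s_t)\) is taken as the sum of the per-action counts, and untried actions are selected first to avoid division by zero. The default value of `c` is arbitrary.

```python
import math
from typing import Dict, Hashable


def ucb_select(
    q: Dict[Hashable, float],
    n_sa: Dict[Hashable, int],
    c: float = 1.4,
) -> Hashable:
    """Pick argmax_a Q(s, a) + c * sqrt(ln N(s) / N(s, a)) at one node.

    q maps each available action to its value estimate; n_sa maps
    actions to visit counts (missing entries count as zero visits).
    """
    if not q:
        raise ValueError("no available actions")
    # ASSUMPTION: try every action once before applying the UCB formula.
    for action in q:
        if n_sa.get(action, 0) == 0:
            return action
    n_s = sum(n_sa[a] for a in q)  # ASSUMPTION: N(s) = sum_a N(s, a)
    return max(
        q,
        key=lambda a: q[a] + c * math.sqrt(math.log(n_s) / n_sa[a]),
    )
```

With `c = 0` the rule reduces to greedy selection on the value estimates; larger values of `c` favor rarely tried activities.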

To enhance adaptability, we implement a dynamic path adjustment strategy that reevaluates and potentially revises the learning path after each learning activity [46]. The adaptation function uses observed performance and cognitive load readings to update the learner model:

$$\Delta\pi=\eta\cdot\nabla_{\pi}\mathcal{L}\left(y,\hat{y}\right)$$

where \(\eta\) is the adaptation rate, \(\mathcal{L}\) is a loss function comparing predicted and actual outcomes, and \(\nabla_{\pi}\) represents the gradient with respect to policy parameters.

This adaptive approach enables the system to respond to unexpected learning outcomes, fluctuations in cognitive states, and evolving learner preferences. Experimental evaluations demonstrate that our dual-objective optimization approach generates learning paths that not only increase knowledge acquisition rates by 18% compared to single-objective approaches but also maintain optimal cognitive engagement, resulting in higher learner satisfaction and reduced dropout rates in extended learning sessions.

System architecture and implementation

The proposed personalized learning system adopts a modular, microservice-based architecture that facilitates flexibility, scalability, and integration with existing educational technology ecosystems [47]. The system consists of five core components organized in a three-tier architecture (presentation, application, and data tiers) with bidirectional data flow and event-driven communication patterns. Table 4 provides detailed specifications of these functional modules, including their main functions and technical implementation. This design enables continuous optimization of learning paths based on real-time student interactions and cognitive state estimations.

The frontend interface implements a responsive design that adapts to various devices while maintaining a consistent user experience [48]. The interface incorporates several key features: (1) a dynamic content presentation area that adapts based on the current learning activity and estimated cognitive state; (2) an interactive knowledge map visualization that illustrates progress across the domain and highlights relationships between concepts; (3) embedded formative assessment tools that gather performance data while minimizing learning disruption; and (4) subtle cognitive load indicators that provide metacognitive support without inducing additional extraneous load.

The backend processing logic is divided into synchronous request handling for immediate user interactions and asynchronous processing for computationally intensive operations such as model training and path optimization. The core processing workflow includes: (1) session initialization that establishes initial knowledge states and learning objectives; (2) activity selection based on the current optimization policy; (3) real-time performance and behavior monitoring during activity completion; (4) knowledge and cognitive state updates following activity completion; and (5) path recalculation triggered by significant state changes or scheduled intervals.

The database architecture employs a hybrid approach combining relational databases for structured educational content and student records with NoSQL document stores for behavioral telemetry and flexible schema requirements. Key data entities include: (1) a domain knowledge graph that represents concepts, skills, and their interdependencies; (2) a content repository containing learning activities tagged with metadata about knowledge components and difficulty levels; (3) learner profiles that store both static characteristics and dynamically updated knowledge and cognitive models; and (4) interaction logs that capture fine-grained temporal data about learning behaviors.
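The four data entities could be modeled roughly as follows. This is an illustrative sketch only: every class and field name is hypothetical, and the source does not describe the actual schemas.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class KnowledgeGraph:
    """Concepts plus prerequisite edges (i, j), meaning i precedes j."""
    concepts: List[str]
    prerequisites: List[Tuple[str, str]] = field(default_factory=list)


@dataclass
class LearningActivity:
    """Content item tagged with knowledge components and difficulty."""
    activity_id: str
    knowledge_components: List[str]
    difficulty: float


@dataclass
class LearnerProfile:
    """Static traits plus dynamically updated learner models."""
    learner_id: str
    static_traits: Dict[str, str] = field(default_factory=dict)
    knowledge_state: Dict[str, float] = field(default_factory=dict)
    optimal_cognitive_load: float = 0.5  # placeholder default


@dataclass
class InteractionLog:
    """One fine-grained behavioral event."""
    learner_id: str
    activity_id: str
    timestamp: float
    correct: bool
    response_time_s: float
```

In the hybrid storage design described above, the first three entities would fit a relational store, while `InteractionLog` records are the kind of high-volume telemetry suited to a document store.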

To support real-time path adjustment, the system implements an event streaming architecture using message queues and event processors that continuously analyze incoming data and trigger decision points [49]. This approach enables the system to detect and respond to significant events such as: (1) unexpected performance outcomes that suggest knowledge model inaccuracies; (2) cognitive load threshold violations indicating potential disengagement or frustration; (3) emergent learning patterns that suggest more efficient paths than previously calculated; and (4) explicit learner feedback or preference changes that necessitate strategy adjustments.
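A simplified event processor for three of the four event types listed above might look like the sketch below. The `Observation` type, the event labels, and the `surprise_tol` threshold are all assumptions for illustration. Detecting emergent learning patterns would require history across many observations and is omitted.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Observation:
    """Hypothetical per-activity record consumed by the event processor."""
    predicted_score: float
    observed_score: float
    cognitive_load: float
    learner_feedback: Optional[str] = None


def detect_events(
    obs: Observation,
    cl_min: float,
    cl_max: float,
    surprise_tol: float = 0.3,
) -> List[str]:
    """Return labels of events that should trigger path recalculation."""
    events: List[str] = []
    # (1) Outcome far from the knowledge model's prediction.
    if abs(obs.observed_score - obs.predicted_score) > surprise_tol:
        events.append("unexpected_performance")
    # (2) Cognitive load outside the acceptable band.
    if not (cl_min <= obs.cognitive_load <= cl_max):
        events.append("cognitive_load_violation")
    # (4) Explicit, non-empty learner feedback.
    if obs.learner_feedback:
        events.append("learner_feedback")
    return events
```

In a streaming deployment, a function of this kind would run inside a queue consumer, and a non-empty result would publish a recalculation request.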

The integration of knowledge tracing and cognitive load monitoring within this architecture enables more nuanced personalization than conventional adaptive learning systems. By simultaneously optimizing for knowledge acquisition and cognitive engagement, the system can identify ideal learning opportunities that maintain appropriate challenge levels while maximizing long-term learning gains, resulting in more efficient and effective educational experiences.

Experimental design and results analysis

To evaluate the effectiveness of our integrated approach, we conducted comprehensive experiments using both simulated environments and real-world educational settings. The experimental design followed a mixed-methods approach combining quantitative performance metrics with qualitative assessments of learning experiences [50]. The primary research questions addressed were: (1) Does the dual-stream model outperform single-purpose models in knowledge tracing and cognitive load estimation? (2) Do learning paths generated by the proposed system improve learning outcomes compared to traditional and existing adaptive approaches? (3) What is the relationship between cognitive load optimization and learning efficiency?

For dataset construction, we collected data from three sources: (1) a large-scale programming education platform with 2,183 students completing 47,852 coding exercises over a 14-week semester; (2) a mathematics learning application with multimodal data including clickstreams, response times, and optional eye-tracking for 862 participants; and (3) a controlled laboratory experiment with 124 undergraduate students completing a series of learning activities while wearing EEG headsets to provide ground truth cognitive load measurements.

Participants in the laboratory experiment were randomly assigned to either the experimental group (using our DKT-CLE system, n = 62) or one of the control groups (using baseline methods, n = 62) using stratified randomization based on pre-test performance to ensure equivalent initial knowledge distributions. All participants interacted with identical learning content covering fundamental programming concepts, delivered through a standardized web interface. The only difference between conditions was the sequencing algorithm determining the order of presentation. Learning paths were delivered through a custom-built platform with path recommendations appearing as suggested “next topics” after each completed activity. To control for extraneous variables, all sessions occurred in the same laboratory environment with standardized hardware configurations, time allocations (120 min per session), and task instructions.

All data collection followed IRB-approved protocols (approval #IRB-2023-0427) with comprehensive informed consent procedures. For physiological data collection, participants received detailed explanations of EEG equipment usage, data collection purposes, and their right to withdraw at any time. Privacy protection measures included: (1) data pseudonymization through participant IDs unlinked to personal identifiers; (2) secure data storage on encrypted servers with access restricted to authorized researchers; (3) aggregated analysis that prevented individual identification; and (4) compliance with educational data privacy regulations (FERPA compliance for US participants). All identifiable information was removed prior to analysis, and participants were informed of all potential data uses including future research applications.

Performance evaluation employed multiple metrics targeting different aspects of the system. For knowledge tracing accuracy, we used the area under the receiver operating characteristic curve (AUC) and root mean squared error (RMSE) between predicted and actual performance:

$$\text{RMSE}=\sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_{i}-\hat{y}_{i}\right)^{2}}$$
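Both knowledge tracing metrics can be computed with the standard library alone, as in the sketch below. The AUC here uses the pairwise-comparison definition (the probability that a randomly chosen correct response is scored above a randomly chosen incorrect one, with ties counting one half). It is quadratic in the number of samples and intended for illustration rather than large-scale evaluation.

```python
import math
from typing import Sequence


def rmse(y: Sequence[float], y_hat: Sequence[float]) -> float:
    """Root mean squared error between actual and predicted values."""
    if len(y) != len(y_hat) or not y:
        raise ValueError("inputs must be non-empty and equal in length")
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y, y_hat)) / len(y))


def auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Area under the ROC curve via pairwise comparison of scores."""
    pos = [s for lab, s in zip(labels, scores) if lab == 1]
    neg = [s for lab, s in zip(labels, scores) if lab == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))
```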

For cognitive load estimation, we calculated the correlation coefficient between estimated load levels and ground truth measurements from physiological sensors and validated questionnaires. Path quality evaluation employed a composite metric combining prerequisite satisfaction, content diversity, and progression smoothness:

$$\text{PathQuality}=\alpha\cdot\text{PrereqScore}+\beta\cdot\text{DiversityIndex}+\gamma\cdot\text{SmoothnessFactor}$$

Learning efficiency was quantified using a gain-per-time metric normalized by cognitive effort:

$$\text{LearningEfficiency}=\frac{\Delta\text{Knowledge}}{\Delta\text{Time}\cdot\text{AverageCognitiveLoad}}$$
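The path quality and learning efficiency metrics above reduce to simple arithmetic once their inputs are available. In the sketch below the default weights are placeholders, since the text does not report the values of \(\alpha\), \(\beta\), and \(\gamma\), and the component scores are assumed to be precomputed and normalized.

```python
from typing import Tuple


def path_quality(
    prereq_score: float,
    diversity_index: float,
    smoothness_factor: float,
    weights: Tuple[float, float, float] = (0.5, 0.25, 0.25),
) -> float:
    """Weighted sum of the three path quality components.

    ASSUMPTION: the default weights are illustrative placeholders.
    """
    alpha, beta, gamma = weights
    return (
        alpha * prereq_score
        + beta * diversity_index
        + gamma * smoothness_factor
    )


def learning_efficiency(
    delta_knowledge: float,
    delta_time: float,
    avg_cognitive_load: float,
) -> float:
    """Knowledge gain per unit time, normalized by average cognitive load."""
    if delta_time <= 0 or avg_cognitive_load <= 0:
        raise ValueError("time and cognitive load must be positive")
    return delta_knowledge / (delta_time * avg_cognitive_load)
```

For instance, a gain of 0.3 over 60 minutes at an average load of 0.5 gives an efficiency of 0.01 per minute per unit load. The same gain achieved at a higher average load yields a lower efficiency.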

We compared our approach against five baseline methods: (1) a rule-based system following predefined curriculum sequences; (2) a collaborative filtering recommendation approach; (3) a knowledge graph navigation algorithm; (4) a standard DKT model with no cognitive load component; and (5) a state-of-the-art reinforcement learning system trained to maximize knowledge gain. Implementation details and hyperparameter settings for all methods were standardized to ensure fair comparison [51].

As shown in Table 5 and Fig. 2, our integrated approach consistently outperformed all baseline methods across multiple metrics. The performance improvements were particularly pronounced in the programming education dataset, where the complex skill relationships and varying cognitive demands of different programming concepts highlighted the advantages of our dual-objective optimization approach [52]. Statistical significance testing using paired t-tests confirmed that the improvements were significant at p < 0.01 for all metrics.

Fig. 2. Performance comparison and learning trajectories. (A) Prediction accuracy comparison across methods. (B) Learning efficiency by approach. (C) Representative learning trajectories showing knowledge state (solid line) and cognitive load (dashed line) over time for a participant using our DKT-CLE system versus a baseline approach. (D) Cognitive load heatmap during different learning activities, demonstrating how our system maintains optimal challenge levels.

Qualitative analysis of generated learning paths revealed several distinctive characteristics of our approach: (1) more adaptive sequencing that responded to fluctuations in performance and engagement; (2) strategic insertion of review activities when cognitive overload was detected; (3) appropriate scaffolding through complementary content types; and (4) smoother difficulty progression that maintained optimal challenge levels. Expert evaluation by instructional designers rated our system-generated paths significantly higher in pedagogical soundness compared to baseline methods (4.4/5 vs. 3.5/5 average rating, p < 0.01).

User experience evaluation through post-study surveys and interviews revealed high satisfaction levels (4.3/5) with our system, with participants specifically highlighting reduced frustration during challenging concepts and improved engagement during longer study sessions. Longitudinal analysis of learning outcomes showed that students using our system achieved mastery-level performance 24.6% faster than those following traditional curriculum sequences while reporting lower average stress levels [53].

Analysis of log data revealed interesting patterns in the relationship between cognitive load management and learning outcomes. Students who experienced primarily optimal cognitive load states (neither underloaded nor overloaded) showed significantly higher retention in post-tests administered two weeks after the learning period. Furthermore, the system’s ability to detect and respond to early signs of confusion or frustration resulted in 43% fewer abandoned learning sessions compared to non-adaptive approaches.

One particularly valuable finding was the identification of personalized “learning sweet spots” for individual students – optimal ranges of cognitive challenge that maximized both immediate performance and long-term retention. These individual profiles enabled increasingly refined path recommendations as students progressed through the curriculum, demonstrating the system’s capacity for continuous adaptation and personalization.
