Building adaptive knowledge bases for evolving continual learning models

This section details a new method for adapter-based, task-free continual learning that uses policy optimization to decide task assignments. First, we provide preliminaries for the method. Next, we describe how negative inputs are detected by calculating, for each adapter, the task similarity between its learned tasks and a newly introduced task. We then describe how task-free adapters can be applied to a pre-trained model. We detail our reinforcement learning module, which uses the task similarities to predict which adapter would benefit most from training on a new task or whether a new adapter should be created for it. Finally, we discuss how task interference is mitigated within our model.

Continual learning with adapters

Here, we define the problem setting and notation used in the article. First, we define a data stream \({\mathcal{S}}={\{({x}_{i},{y}_{i})\}}_{i = 1}^{\infty }\), containing a potentially infinite number of examples \({x}_{i}\in {\mathcal{X}}\), each mapped to a class \({y}_{i}\in {\mathcal{Y}}\), where \({\mathcal{Y}}\) is the space of class labels. Each example is a vector composed of features denoted as \({x}_{i}=({x}_{i}^{1},\ldots ,{x}_{i}^{n})\), where n is a constant determined by the dataset denoting the number of features in an example.

A continual learning system is a model \({\mathcal{M}}\) that trains on a data stream \({\mathcal{S}}\) to predict a class \(\hat{{y}_{i}}\in {\mathcal{Y}}\) for each example xi, with the goal that \(\hat{{y}_{i}}={y}_{i}\).

Many existing continual learning approaches learn in a Task Incremental Learning (TIL) setting, where the model \({\mathcal{M}}\) trains on a sequence of T tasks \({\{{{\mathcal{T}}}^{t}\}}_{t = 1}^{T}\). Each task is \({{\mathcal{T}}}^{t}=\{{{\mathcal{D}}}^{t},{{\mathcal{C}}}^{t}\}\), where \({{\mathcal{D}}}^{t}={\{{x}_{i}^{t},{y}_{i}^{t}\}}_{i = 1}^{{N}^{t}}\) represents a stream of \({N}^{t}\) examples for which \({y}_{i}^{t}\in {{\mathcal{C}}}^{t}\) and \({{\mathcal{C}}}^{t}\subset {\mathcal{Y}}\). In TIL, the model \({\mathcal{M}}\) receives a subset of \({{\mathcal{C}}}_{N}^{t}\) classes \({{\mathcal{C}}}^{t}={\{{y}_{j}^{t}\}}_{j = 1}^{{{\mathcal{C}}}_{N}^{t}}\), which limits the candidates for each prediction of \({y}_{i}^{t}\). We use a Class Incremental Learning (CIL) setting, in which the model learns directly from the data stream \({\mathcal{S}}\) and task information t is not provided with the data, so the set of candidate class labels extends to all of \({\mathcal{Y}}\).

Adapters are modules applied between the layers of a pre-trained model. We leverage the adapter structure proposed by Houlsby et al.43, where each adapter layer Ai transforms the input h as follows:

$${A}_{i}(h)=h+f(h{\theta }_{{\rm{down}}}){\theta }_{{\rm{up}}}.$$

(5)

First, an input h is projected to a lower-dimensional subspace using weights \({\theta }_{{\rm{down}}}\in {{\mathbb{R}}}^{d\times r}\), with r < d, and passed through a nonlinear activation function f( ⋅ ), such as ReLU. The result is projected back to the original dimension through weights \({\theta }_{{\rm{up}}}\in {{\mathbb{R}}}^{r\times d}\). For ease of notation, we denote the parameters of an adapter Ai as \({\theta }_{{A}_{i}}=\{{\theta }_{{\rm{down}}}^{{A}_{i}},{\theta }_{{\rm{up}}}^{{A}_{i}}\}\). In a transformer model44, adapters are placed after the multi-head attention layer and after the feed-forward sub-layer. In deep residual neural networks45, adapters are applied between convolutional layers.
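The adapter transform in Equation (5) can be sketched in a few lines of NumPy. This is a minimal illustration rather than the authors' implementation: the sizes d and r, the ReLU choice for f( ⋅ ) and the zero initialisation of \({\theta }_{{\rm{up}}}\) (which makes a freshly created adapter act as an identity map) are assumptions made for the example.

```python
import numpy as np

def relu(z: np.ndarray) -> np.ndarray:
    """Nonlinearity f(.) in Eq. (5); ReLU is one possible choice."""
    return np.maximum(z, 0.0)

def adapter_forward(h: np.ndarray, theta_down: np.ndarray, theta_up: np.ndarray) -> np.ndarray:
    """Bottleneck adapter A_i(h) = h + f(h theta_down) theta_up, as in Eq. (5).

    h: (batch, d) hidden states; theta_down: (d, r); theta_up: (r, d), with r < d.
    """
    return h + relu(h @ theta_down) @ theta_up

rng = np.random.default_rng(0)
d, r = 8, 2                                      # illustrative hidden and bottleneck sizes
h = rng.normal(size=(4, d))
theta_down = rng.normal(scale=0.1, size=(d, r))
theta_up = np.zeros((r, d))                      # zero up-projection: adapter starts as identity
out = adapter_forward(h, theta_down, theta_up)
print(out.shape)            # (4, 8)
print(np.allclose(out, h))  # True
```

The residual connection means the adapter only has to learn a correction to the frozen backbone's hidden state, and only the 2dr adapter parameters are trained.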

Policy optimization

A policy optimization method estimates the policy gradient and updates the policy by stochastic gradient ascent on the objective

$${{\mathcal{L}}}^{PG}(\theta )={{\mathbb{E}}}_{t}\left[\log {\pi }_{\theta }({a}_{t}\,| \,{s}_{t}){\hat{A}}_{t}\right]=\frac{1}{t}\mathop{\sum }\limits_{i=0}^{t-1}\log {\pi }_{\theta }({a}_{i}\,| \,{s}_{i}){\hat{A}}_{i},$$

where πθ is a stochastic policy that selects an action at given a state st, and \({\hat{A}}_{t}\) is the estimated advantage at time t. The expectation \({{\mathbb{E}}}_{t}[\cdot ]\) is estimated as the empirical average over a batch of samples.

Optimizing \({{\mathcal{L}}}^{PG}\) can often lead to large policy updates that negatively impact model performance35. Therefore, we choose a policy optimization method that constrains the magnitude of policy updates to a defined trust region.

There are two main methods of establishing trust regions for policy optimization: Trust Region Policy Optimization (TRPO) and Clipped Proximal Policy Optimization (PPO). TRPO35 constrains each policy update by bounding the KL divergence between the previous and updated policies:

$$\begin{array}{l}\mathop{{\rm{maximize}}}\limits_{{\theta }_{t}}\quad {{\mathbb{E}}}_{t}\left[\frac{{\pi }_{\theta }({a}_{t}\,| \,{s}_{t})}{{\pi }_{{\theta }_{t-1}}({a}_{t}\,| \,{s}_{t})}{\hat{A}}_{t}\right],\\ {\rm{s.t.}}\quad {{\mathbb{E}}}_{t}\left[KL\left[{\pi }_{{\theta }_{t-1}}({a}_{t}\,| \,{s}_{t})\,| | \,{\pi }_{\theta }({a}_{t}\,| \,{s}_{t})\right]\right]\le \delta ,\end{array}$$

where \({\pi }_{{\theta }_{t-1}}\) is the policy before the update, and δ is the trust region threshold. Because computing \(KL[{\pi }_{{\theta }_{t-1}}({a}_{t}\,| \,{s}_{t})\,| | \,{\pi }_{\theta }({a}_{t}\,| \,{s}_{t})]\) at every time step t can be computationally expensive, we use Clipped PPO instead.

Clipped PPO restricts the range within which the policy can be updated, rather than enforcing a KL constraint or adapting a penalty over time. This is computationally cheaper than TRPO and has been reported to improve policy performance46. Let \(r({\theta }_{t})=\frac{{\pi }_{\theta }({a}_{t}\,| \,{s}_{t})}{{\pi }_{{\theta }_{t-1}}({a}_{t}\,| \,{s}_{t})}\). The Clipped PPO objective is then:

$${{\mathcal{L}}}^{CLIP}({\theta }_{t})={{\mathbb{E}}}_{t}\left[\min \left(r({\theta }_{t}){\hat{A}}_{t},{\rm{clip}}\left(r({\theta }_{t}),1-\epsilon ,1+\epsilon \right){\hat{A}}_{t}\right)\right],$$

(6)

where ϵ is a hyperparameter defining the trust region for the policy. The term \({\rm{clip}}\left(r({\theta }_{t}),1-\epsilon ,1+\epsilon \right){\hat{A}}_{t}\) removes the incentive for updating r(θt) outside of the interval [1 − ϵ, 1 + ϵ] and prevents large updates that may degrade model performance.
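As a rough sketch of Equation (6), assuming per-sample log-probabilities and advantages are already available as NumPy arrays, the clipped objective can be computed as follows. The function name and the default ϵ = 0.2 are illustrative assumptions, not values taken from CABLE.

```python
import numpy as np

def clipped_ppo_objective(logp_new, logp_old, advantages, eps=0.2):
    """Batch estimate of L^CLIP in Eq. (6); this quantity is maximised.

    logp_new: log pi_theta(a_t | s_t) under the policy being optimised.
    logp_old: log pi_{theta_{t-1}}(a_t | s_t) under the policy that collected the data.
    advantages: estimated advantages A_hat_t.
    """
    ratio = np.exp(np.asarray(logp_new) - np.asarray(logp_old))   # r(theta_t)
    unclipped = ratio * advantages
    clipped = np.clip(ratio, 1.0 - eps, 1.0 + eps) * advantages
    return float(np.mean(np.minimum(unclipped, clipped)))          # E_t[...] as a batch mean

# Sample 1: ratio 1.5 with a positive advantage is clipped to 1.2.
# Sample 2: ratio 0.5 with a negative advantage; the min keeps the clipped value -0.8.
logp_old = np.zeros(2)
logp_new = np.log([1.5, 0.5])
adv = np.array([1.0, -1.0])
print(clipped_ppo_objective(logp_new, logp_old, adv))  # ≈ 0.2 (mean of 1.2 and -0.8)
```

Taking the minimum of the clipped and unclipped terms makes the estimate pessimistic: clipping only removes gains from moving the ratio outside [1 − ϵ, 1 + ϵ], never penalties.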

Overview

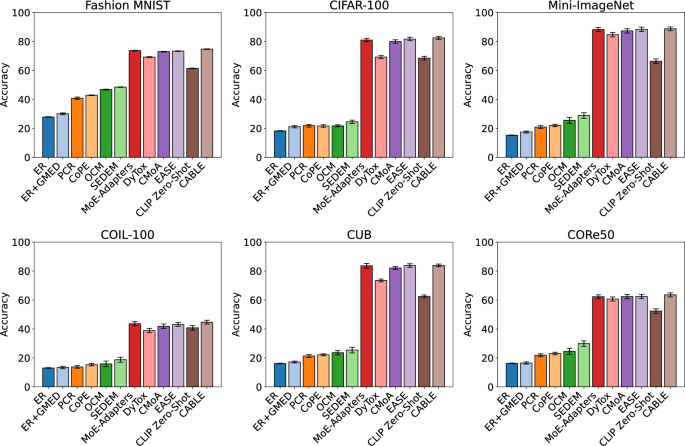

This article presents a modular, adapter-based framework called CABLE designed to enable pre-trained models to learn additional tasks in a CIL setting without requiring task information to be provided through the data stream. Instead, task information is inferred by a reinforcement learning (RL) component that is rewarded by instances of positive transfer between tasks. While previous approaches have considered backwards transfer for task identification, we prioritise both forward and backwards transfer and consider underlying class properties through the RL module.

An overview of the CABLE framework is provided in Fig. 7. CABLE contains a set of adapters \({\{{A}_{i}\}}_{i = 1}^{N}\), where N is the number of adapters initialized before training. Each adapter Ai is assigned a task \({{\mathcal{T}}}^{i}\) whose class set \({{\mathcal{C}}}^{i}\) is unknown at the start of training. When an instance of a new class is encountered during training, that is, an example (xi, yi) such that \({y}_{i}\notin \mathop{\bigcup }\nolimits_{j = 1}^{N}{{\mathcal{C}}}^{j}\), the RL module assigns class yi to an existing adapter according to its learned policy. The task assignments decided by the RL module inform a router WR, which computes a gated average of adapter outputs to produce a prediction. Unlike existing approaches, the RL module aims to boost both forward and backwards transfer between identified tasks and accounts for inter-task and intra-task interference.

Fig. 7: Blue components represent the frozen pre-trained backbone, red components represent dynamic continual learning adapters, and yellow components represent the RL components.

Motivation and negative inputs

We aim to mitigate forgetting on adapters by avoiding the introduction of negative inputs. A negative input is a sequence of examples that, when trained on by an adapter, causes misclassification of previously learned examples. While existing works on continual learning have considered the impact of negative inputs on an entire neural network47, in an adapter-based setting each adapter has separate parameters, so different behaviours must be considered. To address this, we adapt task similarity from multi-task learning, propose a novel task similarity measure, and further demonstrate that monitoring a single task similarity measure is not sufficient for task selection, motivating the CABLE framework.

Multi-task learning is a learning paradigm that attempts to learn multiple tasks simultaneously, prioritizing positive transfer between tasks. To achieve this, previous works have proposed measures to determine whether tasks may share parameters to transfer information during gradient updates48,49,50. It has been shown that some tasks learn better on shared parameters, and it is beneficial to determine which tasks should and should not be trained on the same parameters51. Inspired by this, we seek to select tasks for training on separate adapter layers in an evolving, class incremental setting.

Consider an adapter-based learning setting where the loss function is parameterized by \(\{{\theta }_{* }^{t}\}\cup {\{{\theta }_{{A}_{i}}^{t}\}}_{i = 1}^{N}\) where \({\theta }_{* }^{t}\) represents the frozen parameters of the internal deep learning architecture at time t and \({\theta }_{{A}_{i}}^{t}\) represents the trainable parameters of adapter layer Ai at time t. The loss over an incoming batch of examples \({{\mathcal{X}}}^{t}\) is then given by:

$${\mathcal{L}}({{\mathcal{X}}}^{t},{\theta }_{* }^{t},{\{{\theta }_{{A}_{i}}^{t}\}}_{i = 1}^{N})=\mathop{\sum }\limits_{i=1}^{N}{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{* }^{t},{\theta }_{{A}_{i}}^{t}),$$

(7)

where \({{\mathcal{L}}}_{i}\) is the loss for adapter Ai with assigned task \({{\mathcal{T}}}^{i}\). Because \({\theta }_{* }^{t}\) remains frozen for the entire duration of model training, we simplify this notation:

$${\mathcal{L}}({{\mathcal{X}}}^{t},{\{{\theta }_{{A}_{i}}^{t}\}}_{i = 1}^{N})={\mathcal{L}}({{\mathcal{X}}}^{t},{\theta }_{* }^{t},{\{{\theta }_{{A}_{i}}^{t}\}}_{i = 1}^{N})=\mathop{\sum }\limits_{i=1}^{N}{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t}).$$

(8)

When an input is received that matches an adapter’s task \({{\mathcal{T}}}^{i}\), that adapter updates its parameters by stochastic gradient descent with learning rate η:

$${\theta }_{{A}_{i}}^{t+1}={\theta }_{{A}_{i}}^{t}-\eta {\nabla }_{{\theta }_{{A}_{i}}^{t}}{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t}).$$

(9)

We propose that a comparison between the updated parameters \({\theta }_{{A}_{i}}^{t+1}\) and the pre-update parameters \({\theta }_{{A}_{i}}^{t}\) may indicate the impact of new examples \({{\mathcal{X}}}^{t}\) on the previously learned task \({{\mathcal{T}}}^{i}\), that is, whether \({{\mathcal{X}}}^{t}\) may positively or negatively affect performance on \({{\mathcal{T}}}^{i}\). Previous approaches, such as task affinity49, compute a lookahead loss; however, they only compare losses on the current examples \({{\mathcal{X}}}^{t}\) and therefore do not consider backwards transfer. We therefore propose a new task similarity measure to promote both forward and backwards transfer:

$$\Lambda ({{\mathcal{X}}}^{t},{A}_{i})=1-\frac{{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t+1}){{\mathcal{L}}}_{i}({{\mathcal{X}}}^{i},{\theta }_{{A}_{i}}^{t+1})}{{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t}){{\mathcal{L}}}_{i}({{\mathcal{X}}}^{i},{\theta }_{{A}_{i}}^{t})},$$

(10)

where \({{\mathcal{X}}}^{i}\) represents a set of examples stored in memory that were previously used to train adapter Ai. A positive value of \(\Lambda ({{\mathcal{X}}}^{t},{A}_{i})\) indicates that the product of the losses on \({{\mathcal{X}}}^{t}\) and \({{\mathcal{X}}}^{i}\) decreases after the parameter update \({\theta }_{{A}_{i}}^{t+1}\), whereas a negative value indicates that it increases, and thus that overall performance degrades after the update. Equation (10) captures both forward and backward transfer by directly quantifying the change in loss on both the new task examples \({{\mathcal{X}}}^{t}\) and previously seen data \({{\mathcal{X}}}^{i}\), before and after the parameter update. Unlike gradient-based similarity measures, which rely on local approximations (e.g., gradient dot products or cosine similarity), \(\Lambda ({{\mathcal{X}}}^{t},{A}_{i})\) evaluates the impact of training via actual loss changes, making it more robust to nonlinear interactions and curvature effects in parameter space. The multiplicative structure provides a normalised comparison between pre- and post-update performance, avoiding the need to tune hyperparameters that weight the forward and backwards components.
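To make Equation (10) concrete, the sketch below computes \(\Lambda ({{\mathcal{X}}}^{t},{A}_{i})\) with a single SGD lookahead step. It assumes a hypothetical stand-in for the adapter loss \({{\mathcal{L}}}_{i}\) (half mean squared error of a linear model), so it illustrates the arithmetic of the measure rather than CABLE's actual adapter training.

```python
import numpy as np

def mse_loss(theta, batch):
    """Hypothetical stand-in for L_i: half mean squared error of a linear model."""
    X, y = batch
    return 0.5 * np.mean((X @ theta - y) ** 2)

def mse_grad(theta, batch):
    """Gradient of mse_loss with respect to theta."""
    X, y = batch
    return X.T @ (X @ theta - y) / len(y)

def task_similarity(theta, new_batch, buffer_batch, lr, loss=mse_loss, grad=mse_grad):
    """Lambda(X^t, A_i) of Eq. (10) using one SGD lookahead step on X^t (Eq. 9)."""
    theta_next = theta - lr * grad(theta, new_batch)                       # theta^{t+1}
    before = loss(theta, new_batch) * loss(theta, buffer_batch)            # pre-update product
    after = loss(theta_next, new_batch) * loss(theta_next, buffer_batch)   # post-update product
    return 1.0 - after / before

w = np.array([1.0, -1.0])          # target the adapter has partly learned
X = np.eye(2)
buffer_batch = (X, X @ w)          # X^i: stored examples for the adapter's task
theta = 0.5 * w                    # partially trained adapter parameters

similar = (X, X @ w)               # new batch consistent with the stored task
conflicting = (X, X @ (-w))        # new batch that pulls the parameters the other way
print(task_similarity(theta, similar, buffer_batch, lr=1.0))      # 0.9375
print(task_similarity(theta, conflicting, buffer_batch, lr=1.0))  # -0.5625
```

A batch consistent with the stored task lowers both losses and gives a positive score, while a batch that pulls the parameters away from the stored task raises the buffer loss enough to make the score negative, matching the interpretation above.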

We now provide a theoretical lower bound for \(\Lambda ({{\mathcal{X}}}^{t},{A}_{i})\). Assume a supervised learning setting with bounded, smooth loss functions. Also assume that \({{\mathcal{L}}}_{i}(\cdot ,\theta )\) is L-Lipschitz in θ, \({{\mathcal{L}}}_{i}(\cdot ,\theta )\) is β-smooth and \(\parallel {\nabla }_{\theta }{{\mathcal{L}}}_{i}({\mathcal{X}},\theta )\parallel \le G\) for all θ and all inputs. We define relative loss change factors:

$${\Delta }_{t}=\frac{{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t+1})}{{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t})},\quad {\Delta }_{i}=\frac{{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{i},{\theta }_{{A}_{i}}^{t+1})}{{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{i},{\theta }_{{A}_{i}}^{t})},$$

so that \(\Lambda ({{\mathcal{X}}}^{t},{A}_{i})=1-{\Delta }_{t}{\Delta }_{i}\). Applying β-smoothness to the gradient descent step of Equation (9), we obtain:

$${{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t+1})\le {{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t})-\eta \parallel {\nabla }_{\theta }{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t}){\parallel }^{2}+\frac{\beta {\eta }^{2}}{2}\parallel {\nabla }_{\theta }{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t}){\parallel }^{2}.$$

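Dividing by \({{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t})\) and using the bounded gradient norm in the second-order term yields: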

$${\Delta }_{t}\le {\overline{\Delta }}_{t}:= 1-\eta \frac{\parallel {\nabla }_{\theta }{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t}){\parallel }^{2}}{{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t})}+\frac{\beta {\eta }^{2}{G}^{2}}{2{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t})}.$$

For backwards transfer, we expand the loss change on \({{\mathcal{X}}}^{i}\):

$${{\mathcal{L}}}_{i}({{\mathcal{X}}}^{i},{\theta }_{{A}_{i}}^{t+1})\le {{\mathcal{L}}}_{i}({{\mathcal{X}}}^{i},{\theta }_{{A}_{i}}^{t})-\eta \langle {\nabla }_{\theta }{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{i},{\theta }_{{A}_{i}}^{t}),{\nabla }_{\theta }{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t})\rangle +\frac{\beta {\eta }^{2}}{2}{G}^{2},$$

leading to:

$${\Delta }_{i}\le {\overline{\Delta }}_{i}:= 1-\eta \frac{\langle {\nabla }_{\theta }{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{i},{\theta }_{{A}_{i}}^{t}),{\nabla }_{\theta }{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{t},{\theta }_{{A}_{i}}^{t})\rangle }{{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{i},{\theta }_{{A}_{i}}^{t})}+\frac{\beta {\eta }^{2}{G}^{2}}{2{{\mathcal{L}}}_{i}({{\mathcal{X}}}^{i},{\theta }_{{A}_{i}}^{t})}.$$

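Since the losses are positive, \(0\le {\Delta }_{t}\le {\overline{\Delta }}_{t}\) and \(0\le {\Delta }_{i}\le {\overline{\Delta }}_{i}\), so \({\Delta }_{t}{\Delta }_{i}\le {\overline{\Delta }}_{t}{\overline{\Delta }}_{i}\). Combining these: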

$$\Lambda ({{\mathcal{X}}}^{t},{A}_{i})\ge 1-{\overline{\Delta }}_{t}{\overline{\Delta }}_{i}.$$

(11)

This lower bound shows that \(\Lambda ({{\mathcal{X}}}^{t},{A}_{i})\) tends to be larger when the gradients from \({{\mathcal{X}}}^{t}\) and \({{\mathcal{X}}}^{i}\) are aligned (a larger inner product decreases \({\overline{\Delta }}_{i}\)), so that a single update reduces both losses.
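The bound in Equation (11) can be checked numerically on a toy problem. The sketch below assumes a quadratic least-squares loss, for which the second-order expansion is exact and β is the largest eigenvalue of the Hessian. A quadratic does not satisfy the global Lipschitz and bounded-gradient assumptions, but the derivation only uses G as a bound on the gradient on \({{\mathcal{X}}}^{t}\) at \({\theta }_{{A}_{i}}^{t}\), so G is set to that gradient's norm here. The data, dimensions and step size are arbitrary choices for illustration.

```python
import numpy as np

def loss(theta, X, y):
    return 0.5 * np.mean((X @ theta - y) ** 2)

def grad(theta, X, y):
    return X.T @ (X @ theta - y) / len(y)

def smoothness(X):
    """beta for this quadratic loss: the largest eigenvalue of its Hessian X^T X / n."""
    return float(np.linalg.eigvalsh(X.T @ X / len(X)).max())

def lambda_and_bound(seed, d=5, n=32, eta=0.05):
    """Return (Lambda from Eq. 10, lower bound from Eq. 11) for random toy data."""
    rng = np.random.default_rng(seed)
    X_t, y_t = rng.normal(size=(n, d)), rng.normal(size=n)   # new batch X^t
    X_i, y_i = rng.normal(size=(n, d)), rng.normal(size=n)   # buffer X^i
    theta = rng.normal(size=d)

    g_t, g_i = grad(theta, X_t, y_t), grad(theta, X_i, y_i)
    beta = max(smoothness(X_t), smoothness(X_i))
    G = np.linalg.norm(g_t)          # only G >= ||grad L_i(X^t, theta^t)|| is needed

    theta_next = theta - eta * g_t   # SGD step of Eq. (9) on X^t
    L_t0, L_t1 = loss(theta, X_t, y_t), loss(theta_next, X_t, y_t)
    L_i0, L_i1 = loss(theta, X_i, y_i), loss(theta_next, X_i, y_i)

    lam = 1.0 - (L_t1 * L_i1) / (L_t0 * L_i0)
    d_t_bar = 1 - eta * (g_t @ g_t) / L_t0 + beta * eta**2 * G**2 / (2 * L_t0)
    d_i_bar = 1 - eta * (g_i @ g_t) / L_i0 + beta * eta**2 * G**2 / (2 * L_i0)
    return lam, 1.0 - d_t_bar * d_i_bar

lam, lower = lambda_and_bound(seed=1)
print(f"Lambda = {lam:.4f}, lower bound = {lower:.4f}")
```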

Calculating task similarity requires that a selection of previously trained examples be stored in memory. For each class y, we keep a buffer \({{\mathcal{B}}}_{i}^{{\bf{y}}}=\mathop{\bigcup }\nolimits_{j = t-b+1}^{t}\{{{\bf{x}}}_{j}^{i},{\bf{y}}\}\), where \({{\bf{x}}}_{t}^{i}\) is the most recent example with class label y trained on adapter Ai and b is the maximum number of examples in buffer \({{\mathcal{B}}}_{i}^{{\bf{y}}}\). To represent all classes learned by an adapter equally, we define an adapter buffer \({{\mathcal{X}}}^{i}={\bigcup }_{y\in {{\mathcal{C}}}^{i}}{{\mathcal{B}}}_{i}^{y}\) with maximum size \(b| {\mathcal{Y}}|\). This buffer is used when calculating \(\Lambda ({{\mathcal{X}}}^{t},{A}_{i})\).
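A per-class buffer with a fixed capacity b maps naturally onto a bounded FIFO queue. The following sketch uses Python's `collections.deque` with `maxlen` so that only the b most recent examples of each class are kept; the class name `AdapterBuffer` and its methods are hypothetical.

```python
from collections import defaultdict, deque

class AdapterBuffer:
    """Hypothetical per-adapter memory: one FIFO buffer B_i^y of size at most b per class y."""

    def __init__(self, b: int):
        self.b = b
        self.per_class = defaultdict(lambda: deque(maxlen=self.b))

    def add(self, x, y):
        # Appending to a full deque drops its oldest entry, keeping the b most recent examples.
        self.per_class[y].append((x, y))

    def union(self):
        """X^i: union of all per-class buffers, at most b examples per learned class."""
        return [example for buf in self.per_class.values() for example in buf]

buf = AdapterBuffer(b=2)
for k in range(5):
    buf.add(f"cattle_{k}", "cattle")
buf.add("fox_0", "fox")
print(buf.union())  # [('cattle_3', 'cattle'), ('cattle_4', 'cattle'), ('fox_0', 'fox')]
```

Because each class has its own capacity, a frequently seen class cannot crowd rarer classes out of \({{\mathcal{X}}}^{i}\).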

To assess whether new examples are negative inputs on adapters, we calculate the task similarity of an incoming batch of examples \({{\mathcal{X}}}^{t}=\{{{\bf{x}}}_{t-| {{\mathcal{B}}}_{i}| },\cdots \,,{{\bf{x}}}_{t}\}\) from data stream \({\mathcal{S}}\).

Figure 8 shows an example of task similarity between four classes from the CIFAR-100 dataset. In this example, classes that humans perceive as visually similar score highest on average in the task similarity measure. For instance, the Cattle and Fox classes both feature quadruped animals, which provide knowledge that a learning system can transfer between the classes. When the Fox class is compared to an adapter trained on the Cattle class, the mean task similarity of batches from the Fox class is higher than that of the Truck or Television classes. Likewise, when the Cattle class is compared to an adapter trained on the Fox class, it scores the highest task similarity. Task similarity can also be observed between the Television and Truck classes, which often contain rectangular geometric shapes. In such cases, the classes should be grouped together in a task, following the process shown in Fig. 9.

Fig. 8: Task similarity between four CIFAR-100 classes: Cattle, Fox, Television and Truck. Each plot shows results for one adapter trained on the first 20 batches of the target class. After training on each batch, a distinct batch from each of the remaining three classes is used to calculate task similarity.

Fig. 9: A new batch with class \({{\mathcal{Y}}}^{t}\) is encountered. The task similarity for each adapter is calculated using buffers of previously learned examples \({{\mathcal{B}}}_{1}\) and \({{\mathcal{B}}}_{2}\). A critic is trained based on model performance and provides feedback to the policy, which selects an action given the current state of task similarities. The action identifies the adapter best suited to training on examples with class \({{\mathcal{Y}}}^{t}\) based on the current state of the model.

However, task similarity can be misleading. In Fig. 8, batch 12 of the Truck class produces a spike in task similarity with the adapter trained on the Cattle class, even though the two classes are generally unrelated. This example highlights that, while task similarity offers valuable insight, it is not sufficient on its own for reliable adapter assignment. Spurious spikes or short-term artifacts may cause misassignment and degrade performance, and we further observe that thresholds alone cannot adequately handle the variation in task similarity scores. This motivates a policy that is more robust than task similarity alone, which we address with the CABLE framework.

Adapters with knowledge bases

We use adapters to create a scalable architecture that addresses catastrophic forgetting in the continual learning of large pre-trained models. CABLE consists of multiple adapters \({\bf{A}}={\{{A}_{i}\}}_{i = 1}^{N}\), where N represents the number of predefined adapters. A set of routers combines the outputs of the adapters via a gated average. Owing to the modular nature of adapters, we propose that CABLE can be implemented on many existing pre-trained deep learning models. To demonstrate this, we implement CABLE on CLIP and ResNet18.

CLIP contains parallel encoders (FI, FT), where FI is the image embedding function and FT is the text embedding function. Features of input images and of the candidate class texts are extracted, and each image is assigned the class whose text embedding has the highest cosine similarity with its image embedding. We insert adapters into all transformer blocks of both encoders (FI, FT) so that the outputs of each multi-head attention layer are passed to the adapters. ResNet18 is a convolutional model and does not use a text encoder, so we insert adapters after each convolutional layer.

A router WR is used at each adapter layer to determine which adapters are activated for a new input. When an input xt is received, the router predicts a set of N gating weights: \(R({{\bf{x}}}_{t})={\rm{softmax}}({{\bf{W}}}^{R}{{\bf{x}}}_{t})\in {{\mathbb{R}}}^{N}\), each corresponding to one of the N adapters. Each router WR is learned during training. The combined output yt for xt is determined by:

$${{\bf{y}}}_{t}=\mathop{\sum }\limits_{i=1}^{N}R{({{\bf{x}}}_{t})}_{i}{A}_{i}({{\bf{x}}}_{t}),$$

(12)

where \(R{({{\bf{x}}}_{t})}_{i}\) is the i-th gating weight produced by router \({{\bf{W}}}^{R}\). Routers ensure that the output of each adapter is weighted for an input according to that adapter’s expertise.
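The gated average over adapter outputs can be sketched as follows, using the softmax gating weights \(R({{\bf{x}}}_{t})\) defined above. The sketch treats each adapter as an arbitrary callable and assumes the router is a single linear map of shape (N, d); these shapes, names and toy adapters are illustrative assumptions.

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)   # subtract the row maximum for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def routed_output(x, W_R, adapters):
    """Gated average sum_i R(x)_i A_i(x) for a batch of inputs.

    x: (batch, d); W_R: (N, d) router weights; adapters: N callables (batch, d) -> (batch, d).
    """
    gates = softmax(x @ W_R.T)                              # (batch, N): R(x) per input
    outputs = np.stack([A(x) for A in adapters], axis=1)    # (batch, N, d)
    return np.einsum("bn,bnd->bd", gates, outputs)          # weight and sum over adapters

x = np.ones((3, 4))
adapters = [lambda h: h, lambda h: 2.0 * h]                 # toy adapters
W_R = np.zeros((2, 4))                                      # uninformative router: equal gates
print(routed_output(x, W_R, adapters)[0])                   # [1.5 1.5 1.5 1.5]
```

With an untrained router the gates are uniform, so the output is the plain mean of the adapter outputs; training W_R shifts weight towards the adapters whose tasks match the input.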

Training a task selection policy using reinforcement learning

We train a policy optimization model to decide whether to add a newly encountered class to the task of an existing adapter or to create a new adapter for that class. The policy should learn not only to respect existing task similarity calculations but also to predict the advantage of future gradient similarities and the future performance of each adapter. We train our policy using a variant of Clipped PPO36.

The state st of an adapter-based model with adapters A is defined as \({s}_{t}=\{{{\mathcal{X}}}_{y}^{t},{\{{{\mathcal{C}}}_{{A}_{i}}\}}_{i = 1}^{N},{\{\Lambda ({{\mathcal{X}}}_{y}^{t},{A}_{i})\}}_{i = 1}^{N}\}\), where \({{\mathcal{X}}}_{y}^{t}\) is a batch of new examples with class y that is yet to be assigned to an adapter, \({\{{{\mathcal{C}}}_{{A}_{i}}\}}_{i = 1}^{N}\) is the set of classes learned by each adapter, and \({\{\Lambda ({{\mathcal{X}}}_{y}^{t},{A}_{i})\}}_{i = 1}^{N}\) is the task similarity of \({{\mathcal{X}}}_{y}^{t}\) with each adapter. The state transitions from st to st+1, where \({s}_{t+1}=\{{{\mathcal{X}}}_{y}^{t+1},{\{{{\mathcal{C}}}_{{A}_{i}}\}}_{i = 1}^{N},{\{\Lambda ({{\mathcal{X}}}_{y}^{t+1},{A}_{i})\}}_{i = 1}^{N}\}\).

An action at assigns y to an existing adapter based on this state. The action is decided by a policy πθ(st), which is trained using the loss function presented in Equation (6). The advantage \({\hat{A}}_{t}\) is a key factor in training πθ, representing the expected improvement of action at on the model. We define the advantages as follows:

$$\begin{array}{c}Q({s}_{t},{a}_{t})={{\mathbb{E}}}_{t}\left[\mathop{\sum }\limits_{i=0}^{\infty }{b}^{i}r({s}_{t+i})\,| \,{s}_{t},{a}_{t}\right],\quad V({s}_{t})={{\mathbb{E}}}_{t}\left[\mathop{\sum }\limits_{i=0}^{\infty }{b}^{i}r({s}_{t+i})\,| \,{s}_{t}\right],\\ {\hat{A}}_{t}={\hat{A}}_{t}({s}_{t},{a}_{t})=Q({s}_{t},{a}_{t})-V({s}_{t})\end{array}$$

where V(st) is the value function, that is, the expected discounted reward from state st, and Q(st, at) is the expected discounted reward after taking action at in state st. The discount factor b is set between 0 and 1 and down-weights rewards r received further in the future. We define the reward as follows:

$$r({s}_{t},{{\mathcal{X}}}^{t})=\frac{1}{B| {\bf{A}}| }\sum _{{A}_{i}\in {\bf{A}}}\Lambda ({{\mathcal{X}}}^{t},{A}_{i})\cdot {(y-y^{\prime} )}^{2}.$$

(13)

The reward is intended to incentivize both high task similarity with each adapter’s assigned tasks and low loss.
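The advantage \({\hat{A}}_{t}\) can be estimated from a finite rollout by replacing Q(st, at) with the observed discounted return and subtracting a learned value estimate V(st). The sketch below assumes rewards and value estimates are given as arrays; it is a plain Monte Carlo estimator, whereas many PPO implementations use generalized advantage estimation instead, and it is not a claim about which estimator CABLE uses.

```python
import numpy as np

def discounted_returns(rewards, b):
    """Monte Carlo estimate of sum_i b^i r(s_{t+i}) for every t of a finite rollout."""
    returns = np.zeros(len(rewards))
    running = 0.0
    for t in reversed(range(len(rewards))):
        running = rewards[t] + b * running   # accumulate discounted future reward backwards
        returns[t] = running
    return returns

def advantages(rewards, values, b):
    """A_hat_t = Q(s_t, a_t) - V(s_t), with Q replaced by the observed discounted return."""
    return discounted_returns(rewards, b) - np.asarray(values, dtype=float)

print(advantages([1.0, 1.0, 1.0], [0.5, 0.5, 0.5], b=0.5))  # values 1.25, 1.0, 0.5
```

A positive advantage means the chosen adapter assignment led to more reward than the critic expected from that state, which the clipped objective then makes more likely.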

Inter-task and intra-task interference

The continual learning aspect of CABLE uses adapters to train on tasks identified by the RL module. Therefore, there can be no inter-task interference between two identified tasks, as they share no trainable parameters. However, the RL module may misidentify a task if an incoming batch \({{\mathcal{X}}}^{t}\) yields an anomalous \(\Lambda ({{\mathcal{X}}}^{t},{A}_{i})\) for an adapter Ai, that is, a value that does not represent the distribution of the class associated with \({{\mathcal{X}}}^{t}\). This may lead to interference and forgetting within adapter Ai, which we call intra-task interference.

To mitigate intra-task interference, we must reduce the probability that an action is made by policy πθ on a state that incorrectly represents the incoming class. Intuitively, the more examples from a class that are represented in a state, the better the distribution of that class is captured. We, therefore, take two steps to mitigate intra-task interference:

First, the state space is represented as \(S=(X{{\prime} }_{y},{\{{A}_{i}\}}_{i = 1}^{N},\Lambda )\), where \(X{{\prime} }_{y}={\{{{\mathcal{X}}}_{y}^{i}\}}_{i = 1}^{{N}_{X}}\). This ensures that more examples from class y are interpreted by πθ before an action is taken, reducing the probability that an anomalous example contributes to the action. Second, we update the reward function:

$$r({s}_{t},X^{\prime} )=\frac{1}{B| {\bf{A}}| | X^{\prime} | }\sum _{{A}_{i}\in {\bf{A}}}\sum _{{{\mathcal{X}}}^{t}\in X^{\prime} }\Lambda ({{\mathcal{X}}}^{t},{A}_{i})\cdot {(y-y^{\prime} )}^{2},$$

(14)

so that policy updates are not determined by a single batch; instead, the mean task similarity over a series of batches is considered. This reduces the probability of a policy update that degrades the performance of the RL module.
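The effect of averaging over several batches in Equation (14) can be illustrated with a small sketch. It assumes the task similarities are arranged as an array of shape (N_X, N) and that the squared error term (y − y′)² is supplied per batch; both layouts, and the function name, are hypothetical.

```python
import numpy as np

def multi_batch_reward(lambdas, sq_err, B):
    """Reward of Eq. (14), averaged over N adapters and N_X batches (hypothetical helper).

    lambdas: array (N_X, N); lambdas[k, i] = Lambda(X_k, A_i) for batch k of X'.
    sq_err: the (y - y')^2 term, broadcastable to (N_X, N) (assumed per batch here).
    B: normalising constant from Eq. (14).
    """
    lambdas = np.asarray(lambdas, dtype=float)
    n_x, n = lambdas.shape
    return float((lambdas * sq_err).sum() / (B * n * n_x))

# One adapter, four batches of the same class; only the first batch shows a spike.
lambdas = np.array([[0.9], [0.0], [0.0], [0.0]])
sq_err = np.ones((4, 1))
print(multi_batch_reward(lambdas, sq_err, B=1.0))  # ≈ 0.225, versus 0.9 from the spike alone
```

In this toy case, a single anomalous spike in Λ, like the one described for the Truck class, contributes only a quarter of its value once it is averaged with three unremarkable batches.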

To further examine interference, we define a forgetting example as follows: For any adapter A with parameters \({\theta }_{A}^{t}\) trained on a set of examples (X, Y) up to time t, a forgetting example is an example (XF, YF), where \({{\bf{Y}}}^{F}\cap {\bf{Y}}={{\emptyset}}\), which updates parameters \({\theta }_{A}^{t}\) to \({\theta }_{A}^{t+1}\) at time t + 1 such that loss \({\mathcal{L}}({\theta }_{A}^{t},{\bf{X}},{\bf{Y}}) < {\mathcal{L}}({\theta }_{A}^{t+1},{\bf{X}},{\bf{Y}})\).

Using this definition for a forgetting example, we define the severity of a forgetting example as follows:

$${\rm{Sev}}({{\bf{X}}}^{F},{{\bf{Y}}}^{F})={\mathcal{L}}({\theta }_{A}^{t+1},{\bf{X}},{\bf{Y}})-{\mathcal{L}}({\theta }_{A}^{t},{\bf{X}},{\bf{Y}}).$$

(15)

A larger severity indicates a larger increase in loss on previously learned examples after training on a forgetting example. These definitions help to capture how interference from input examples affects a continual learning model.
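The definition of a forgetting example and its severity reduce to a label-disjointness check and a loss difference. The sketch below assumes the losses on the previously learned examples (X, Y) before and after the update have already been computed; the helper names are hypothetical.

```python
def is_forgetting_example(learned_labels, new_labels, loss_before, loss_after):
    """True if Y^F is disjoint from Y and the update raised the loss on (X, Y)."""
    return set(learned_labels).isdisjoint(new_labels) and loss_after > loss_before

def severity(loss_before, loss_after):
    """Sev(X^F, Y^F) of Eq. (15): the loss increase on (X, Y) caused by the update."""
    return loss_after - loss_before

print(is_forgetting_example({"cattle", "fox"}, {"truck"}, 0.40, 0.70))  # True
print(round(severity(0.40, 0.70), 2))                                   # 0.3
```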

Computational complexity

The integration of an RL component in the CABLE framework introduces additional computational overhead. Specifically, we employ Clipped-PPO to train a policy that assigns new class instances to adapters based on estimated transfer dynamics. This section analyses the resulting computational cost.

Let N denote the number of adapters, T the number of time steps per episode, and K the number of rollouts per policy update. Denote by ∣π∣ the number of parameters in the policy network, Cf the cost of a forward pass through the model and adapter stack, and B the batch size. The RL pipeline consists of three primary stages:

First, the policy rollout stage. At each time step, the policy network selects an adapter based on the current state st, and a rollout is executed over a batch of examples \(X{\prime}\). The per-step cost is \({\mathcal{O}}(| \pi | )\) for policy inference and \({\mathcal{O}}({C}_{f})\) for evaluating adapter predictions. Across T steps and K rollouts, this contributes \({\mathcal{O}}(KT(| \pi | +{C}_{f}))\).

Second, the reward computation stage. The reward signal at each step is given by Equation (14). For each Ai and each sample in \(X{\prime}\), this entails forward passes to compute updated losses and predictions. The worst-case cost of reward computation is \({\mathcal{O}}(BN{C}_{f})\) per step, giving \({\mathcal{O}}(KTBN{C}_{f})\) total.

Finally, the policy update stage. Clipped-PPO performs multiple epochs of stochastic gradient descent over the rollout buffer. Let \({B}_{{\rm{RL}}}\) be the batch size used for PPO updates. The policy update phase costs \({\mathcal{O}}(K{B}_{{\rm{RL}}}| \pi | )\).

Combining all components, the total additional computational cost introduced by the RL module per training cycle is:

$${\mathcal{O}}(KT(| \pi | +BN{C}_{f})+K{B}_{{\rm{RL}}}| \pi | ).$$

This overhead scales linearly with the number of adapters N, the batch size B and the PPO hyperparameters K and T. Although the cost is non-trivial, it can be offset by parallelisation and amortised over long training sequences, and we consider it justified by the RL module’s role in promoting effective task decomposition and transfer-aware routing.

Time series forecasting

We demonstrate the versatility of CABLE by providing real-world applications in both maritime trajectory forecasting and air quality forecasting. These tasks highlight the advantage of adapting existing pre-trained models to a continual learning environment, showing how CABLE can be applied to diverse time series data domains under noisy and evolving real-world conditions.

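For maritime trajectory forecasting, CABLE receives an input trajectory \({j}_{t-1}=\{{P}_{1},\ldots ,{P}_{N}\}\) of \({T}_{{\rm{len}}}\) points, where each point \({P}_{i}=({{\rm{MMSI}}}_{i},{x}_{i},{y}_{i},{{\rm{sog}}}_{i},{{\rm{cog}}}_{i})\) contains the vessel identifier (MMSI), the UTM coordinates xi and yi representing the vessel’s spatial location, and the vessel’s speed over ground (sog) and course over ground (cog). The goal is to predict a future trajectory \({\hat{j}}_{t}=\{{P}_{1},\ldots ,{P}_{N},\ldots ,{P}_{N+n}\}\) over the next \({T}_{{\rm{len}}}\) points.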

For air quality forecasting, CABLE is provided with a historical input sequence \({q}_{t-1}=\{{q}_{1},\ldots ,{q}_{M}\}\), where each point \({q}_{j}=({{\rm{PM}}}_{2.5,j},{t}_{j})\) contains the PM2.5 concentration and its associated timestamp. The objective is to forecast the future sequence \({\hat{q}}_{t}=\{{q}_{1},\ldots ,{q}_{M},\ldots ,{q}_{M+m}\}\) of PM2.5 values for the next \({T}_{{\rm{len}}}\) time steps.

In both settings, we use the pre-trained time series knowledge base PromptCast34 as the backbone for CABLE. PromptCast is a generative time series forecasting model that operates by conditioning on a textual prompt to predict future numerical sequences. To enable continual learning, we integrate adapter layers after each attention block in the PromptCast architecture. These adapters allow CABLE to retain previously learned representations while incorporating new domain-specific knowledge. For both maritime and PM2.5 forecasting, where prediction tasks do not involve discrete class labels, Root Mean Squared Error (RMSE) is used as the loss function and as the criterion for assessing task similarity across adaptation steps.
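For both forecasting settings, a sequence can be split into input and target windows of \({T}_{{\rm{len}}}\) points each, and RMSE computed between predicted and true targets. This sketch assumes a stride of one time step and equal input and output lengths; the helper names and readings are hypothetical, and PromptCast's own prompt construction is not reproduced here.

```python
import numpy as np

def make_windows(series, t_len):
    """Consecutive (input, target) pairs of t_len points each, with a stride of one step."""
    series = np.asarray(series, dtype=float)
    return [
        (series[s:s + t_len], series[s + t_len:s + 2 * t_len])
        for s in range(len(series) - 2 * t_len + 1)
    ]

def rmse(pred, target):
    """Root mean squared error between a forecast and the true future values."""
    pred, target = np.asarray(pred, dtype=float), np.asarray(target, dtype=float)
    return float(np.sqrt(np.mean((pred - target) ** 2)))

pm25 = [12.0, 15.0, 14.0, 18.0, 21.0, 19.0]    # hypothetical hourly PM2.5 readings
pairs = make_windows(pm25, t_len=2)
print(len(pairs))                               # 3
print(rmse([14.0, 18.0], pairs[0][1]))          # 0.0
```

The same functions apply to trajectories if each point is stored as a row of a 2-D array, since slicing operates along the time axis and RMSE averages over all entries.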

Related work

Machine learning systems today are typically constrained by two major limitations: a lack of adaptability and the need for extensive training data. Pre-trained knowledge bases, such as large language models52 and deep residual neural networks45, offer a promising solution by providing foundational knowledge that can reduce the need for retraining from scratch. However, incorporating new information remains an ongoing challenge, particularly when mitigating catastrophic forgetting and ensuring that new knowledge integrates seamlessly with prior learning. Continual learning addresses these limitations by enabling models to adapt to new information over time while avoiding catastrophic forgetting and preserving previously learned knowledge. We summarise existing literature on continual learning, specifically focussing on continual learning approaches with pre-trained knowledge bases and task-free adapters. Figure 10 provides an overview of continual learning approaches with a focus on dynamic architectures and task selection.

Fig. 10: We show approaches to four areas of continual learning: task selection, dynamic architectures, replay-based approaches and regularization-based approaches.

Continual learning

Continual learning has garnered increasing attention in recent literature, with a primary focus on addressing the challenge of catastrophic forgetting. Existing methodologies for mitigating catastrophic forgetting are commonly classified into three principal categories: experience replay strategies, regularization-based techniques, and dynamic architectural modifications53.

Replay-based methods address catastrophic forgetting by reintroducing data from prior tasks during training on new inputs. This reinforces previously learned representations, counteracting the tendency of model parameters to drift when adapting to new data in the absence of prior task information. Experience Replay (ER)22 maintains a fixed-size memory buffer containing examples from previously encountered tasks. During training, the model is updated using a mixture of current task data and randomly sampled memory data, allowing it to retain performance on earlier tasks by continually revisiting past experiences. Other replay techniques, such as Gradient-based Memory Editing (GMED)23 and Proxy-based Contrastive Replay (PCR)24, refine how samples in the memory buffer are represented so as to maximise their utility for mitigating forgetting, either by enhancing gradient alignment with past tasks or by preserving task-specific features through proxy representations. Alternatively, Continual Prototype Evolution (CoPE)25 integrates prototype-based representation learning with replay by maintaining evolving class prototypes instead of raw exemplars, indicating that replay need not rely solely on memorised raw examples. Furthermore, Online Cooperative Memorisation (OCM)26 samples from short-term and long-term memory buffers, specifically selecting the examples that should be memorised long-term. While replay has been successful in reducing forgetting, a major limitation is the need to store, and repeatedly retrain on, previously observed concepts.
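A minimal sketch of an ER-style memory follows. Reservoir sampling is one common way to keep a fixed-size buffer that is a uniform sample of an unbounded stream. It is assumed here rather than taken from ref. 22, and `ReservoirBuffer` and `mixed_batch` are hypothetical names.

```python
import random
from typing import Any, List


class ReservoirBuffer:
    """Fixed-size memory filled by reservoir sampling (an assumed ER policy)."""

    def __init__(self, capacity: int, seed: int = 0) -> None:
        self.capacity = capacity
        self.data: List[Any] = []
        self.seen = 0  # number of stream examples observed so far
        self.rng = random.Random(seed)

    def add(self, example: Any) -> None:
        self.seen += 1
        if len(self.data) < self.capacity:
            self.data.append(example)
        else:
            # Keep the new example with probability capacity / seen,
            # overwriting a uniformly chosen slot.
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.data[j] = example

    def sample(self, k: int) -> List[Any]:
        return self.rng.sample(self.data, min(k, len(self.data)))


def mixed_batch(current: List[Any], buffer: ReservoirBuffer, k: int) -> List[Any]:
    """Training batch = current examples + k replayed examples from the past."""
    replay = buffer.sample(k)  # draw replay before storing the current batch
    for ex in current:
        buffer.add(ex)
    return current + replay


buf = ReservoirBuffer(capacity=5)
for x in range(100):  # stream of past examples 0..99
    buf.add(x)
batch = mixed_batch([100, 101], buf, k=3)
```

Sampling the replay examples before inserting the current batch guarantees that the replayed part of `batch` comes only from earlier data.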

Regularisation-based approaches to continual learning mitigate catastrophic forgetting by constraining parameter updates to preserve knowledge from previous tasks. These methods introduce additional loss terms that penalise deviations from important weights identified during prior training. Techniques such as Elastic Weight Consolidation (EWC)54 and Synaptic Intelligence (SI)55 estimate parameter importance and restrict updates accordingly, thereby reducing interference with previously acquired knowledge while allowing adaptation to new tasks. However, in settings with high task dissimilarity, these parameter constraints can hinder the model's adaptation to new tasks.
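For EWC, the added loss term has the quadratic form \(\mathcal{L}(\theta)={\mathcal{L}}_{\rm{new}}(\theta)+\frac{\lambda }{2}{\sum }_{i}{F}_{i}{({\theta }_{i}-{\theta }_{i}^{* })}^{2}\), where \({\theta }^{* }\) are the weights after the previous task and \({F}_{i}\) is an importance estimate (in EWC, a diagonal Fisher information approximation). The sketch below evaluates this penalty and its gradient in plain Python. The importance values are made up for illustration, and SI differs mainly in how the importance weights are computed.

```python
from typing import List, Sequence


def ewc_penalty(theta: Sequence[float], theta_star: Sequence[float],
                fisher: Sequence[float], lam: float) -> float:
    """(lam / 2) * sum_i F_i * (theta_i - theta_star_i)^2."""
    return 0.5 * lam * sum(f * (t - ts) ** 2 for t, ts, f in zip(theta, theta_star, fisher))


def ewc_grad(theta: Sequence[float], theta_star: Sequence[float],
             fisher: Sequence[float], lam: float) -> List[float]:
    """Gradient of the penalty w.r.t. theta: lam * F_i * (theta_i - theta_star_i)."""
    return [lam * f * (t - ts) for t, ts, f in zip(theta, theta_star, fisher)]


# Illustrative values: weight 0 was important for the old task, weight 1 was not.
theta_star = [1.0, 1.0]
fisher = [10.0, 0.0]
theta = [1.5, 3.0]
penalty = ewc_penalty(theta, theta_star, fisher, lam=1.0)  # 0.5 * 10 * 0.25 = 1.25
grad = ewc_grad(theta, theta_star, fisher, lam=1.0)        # [5.0, 0.0]
```

Moving the unimportant weight by 2.0 costs nothing, while moving the important weight by only 0.5 is penalised. This is the trade-off that can hinder adaptation when a new task needs the important weights to change.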

Dynamic architectures mitigate the problem of catastrophic forgetting by expanding the number of parameters that may be trained to avoid overwriting parameters important to a previously learned task. The Self-Evolved Dynamic Expansion Model (SEDEM)27 framework evaluates diversity between a set of experts based on examples stored in memory, and creates a new expert to learn novel information. Recent dynamic approaches have applied experts, or adapters, to pre-trained deep learning architectures known as knowledge bases.

Continual learning with knowledge bases

This article investigates the integration of continual learning with pre-trained knowledge bases, considering how the combination can lead to more efficient learning systems capable of lifelong learning without sacrificing the quality or utility of pre-existing knowledge. One of the main challenges in continual learning is ensuring that new knowledge does not overwrite or diminish the value of pre-existing knowledge in the knowledge base. Knowledge retention strategies can be integrated with continual learning frameworks to prevent the model from forgetting previous knowledge. Techniques such as episodic memory56 and knowledge distillation57 help keep important information intact as the system learns over time; however, even with these methods, catastrophic forgetting has not been eliminated. Freezing the pre-trained parameters sidesteps this problem for the foundational knowledge itself, since those weights can no longer be overwritten, which makes frozen knowledge bases well suited to continual learning.

In the context of pre-trained transformer-based models such as CLIP58, adapting these models to new tasks or domains without retraining them entirely is a critical challenge. One efficient solution is the integration of adapters59. Adapters are lightweight, task-specific modules inserted between the layers of a pre-trained model. Unlike full fine-tuning, which updates all model parameters, adapters typically consist of small bottleneck layers that introduce only a small number of additional parameters. The key idea is that the large pre-trained model retains its original weights, and only the adapter layers are trained for specific tasks. This reduces computational cost and memory usage while enabling the model to generalize across various tasks; moreover, keeping separate adapters for different tasks prevents inter-task interference. Transformers44 are composed of multiple layers, each with attention and feed-forward components. When adapters are added, they are typically inserted after the attention or feed-forward layers in each transformer block. Each adapter module is a small multi-layer perceptron with a bottleneck structure: a down-projection to a smaller hidden layer followed by an up-projection back to the original dimension. The input to the adapter is the output of the transformer layer, and the adapter's output is added back to that layer output through a residual connection. This ensures that the pre-trained knowledge is not overwritten but complemented by the task-specific modifications introduced by the adapter. However, the task-specific nature of adapters usually requires task information to be available in the dataset, which is often not the case in real-world applications. We therefore propose training adapters in a task-free environment.
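The bottleneck adapter described above can be sketched in plain Python to show the data flow and the parameter savings. The dimensions (d = 768, r = 16), the ReLU nonlinearity, the omission of biases and the zero-initialised up-projection are illustrative assumptions, not details from ref. 59. With a zero-initialised up-projection, a freshly inserted adapter leaves the frozen layer's output unchanged.

```python
import random
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def relu(v: Vector) -> Vector:
    return [max(0.0, x) for x in v]


class BottleneckAdapter:
    """Down-project d -> r, apply a nonlinearity, up-project r -> d, add residual."""

    def __init__(self, d: int, r: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        # Trainable weights; the surrounding transformer layer stays frozen.
        self.down: Matrix = [[rng.gauss(0.0, 0.02) for _ in range(d)] for _ in range(r)]
        # Assumed zero init: the adapter starts as the identity map.
        self.up: Matrix = [[0.0] * r for _ in range(d)]

    def num_params(self) -> int:
        return sum(len(row) for row in self.down) + sum(len(row) for row in self.up)

    def __call__(self, h: Vector) -> Vector:
        # h is the output of a frozen attention or feed-forward sub-layer.
        delta = matvec(self.up, relu(matvec(self.down, h)))
        return [hi + di for hi, di in zip(h, delta)]  # residual connection


adapter = BottleneckAdapter(d=768, r=16)
h = [0.1] * 768
out = adapter(h)
```

The adapter adds 2 × 768 × 16 = 24,576 trainable parameters, compared with 589,824 for a single dense 768 × 768 weight matrix.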

With the growing popularity of large-scale foundation models, there has been increasing interest in adapting pre-trained models to continual learning settings. Using transformer architectures, DualPrompt60 and Learning to Prompt61 train a set of prompts that are updated throughout the continual learning process, and report improvements over traditional continual learning methods. Fine-tuning knowledge bases has also been a popular approach. The Slow Learner with Classifier Alignment method42 fine-tunes a vision transformer knowledge base with a lower learning rate than that used for its classifier heads. However, using a softmax classifier requires an additional classifier alignment step, which imposes a significant memory cost because a feature covariance matrix must be stored for each class. Fine-tuning has also been applied to larger-scale knowledge bases such as CLIP by leveraging the Learning without Forgetting regularization algorithm62. Lastly, First Session Adaptation63 avoids updating the knowledge base entirely and instead trains classifier heads, known as adapters, for each class. Despite promising performance and low memory cost compared with other continual learning methods, this approach struggles to achieve positive transfer between tasks.
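The "slow learner" idea amounts to one gradient step applied with two different step sizes. The sketch below makes that explicit with plain SGD. In PyTorch the same effect is usually obtained by passing two parameter groups with different `lr` values to one optimizer. The learning rates (1e-4 for the backbone, 1e-2 for the head) and the parameter names are illustrative assumptions, not values taken from ref. 42.

```python
from typing import Dict, List

Params = Dict[str, List[float]]


def sgd_step_two_rates(backbone: Params, head: Params, grads: Params,
                       lr_backbone: float = 1e-4, lr_head: float = 1e-2) -> None:
    """In-place SGD step: backbone weights move far less than head weights."""
    for group, lr in ((backbone, lr_backbone), (head, lr_head)):
        for name, values in group.items():
            # Slice assignment updates the list in place without resizing the dict.
            values[:] = [v - lr * g for v, g in zip(values, grads[name])]


# Hypothetical parameter names; identical gradients for both groups.
backbone = {"encoder.w": [1.0, -1.0]}
head = {"classifier.w": [1.0, -1.0]}
grads = {"encoder.w": [1.0, 1.0], "classifier.w": [1.0, 1.0]}
sgd_step_two_rates(backbone, head, grads)
```

With identical gradients, the head moves 100 times further than the backbone, so the pre-trained representation drifts slowly while the classifier adapts quickly.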

Task-free adapters

Class-incremental learning (CIL) refers to the scenario in which a continual learning model must learn a sequence of new classes without access to task-specific information. This differs from task-incremental learning (TIL), where each example is received together with a task ID specifying its relation to a pre-established task. In an adapter-based setting, task IDs can be used to train adapters on specific tasks, further diminishing the possibility of inter-task interference.

The reliance on task IDs during inference presents a key limitation when adapting pre-trained models with task-specific adapters64. In scenarios where the model is expected to perform various tasks, the task ID is typically used as a routing mechanism to select the correct adapter for each task. This works well when the task is predefined or known in advance, such as in batch processing, where task information is readily available.

However, the task ID may be unknown during inference in many real-world applications, especially in online or dynamic environments. For instance, when a system is tasked with answering a wide array of queries or handling multiple user requests pertaining to different tasks (e.g., natural language processing, image recognition, or recommendation systems), it is impractical to always provide a task ID. Furthermore, the system may not know what task is required until the input is processed, especially in cases where the input could be related to any number of tasks. This dependency on task IDs makes the task-specific adapter approach unsuitable for environments requiring task-free inference, where the model must dynamically choose the correct adapter without a predefined identifier.

Applying continual learning in a task-free environment has recently become a popular area of research. One approach is task-free memory replay23,65,66, which stores previously experienced examples in memory and interleaves them with future training data to mitigate catastrophic forgetting. Maximally Interfered Retrieval67 is a task-free replay process, applied to both classifiers and variational autoencoders, that retrieves from memory the examples whose loss would increase most under the upcoming parameter update. Task-free regularization68,69 is another approach, in which penalties are applied to changes in parameters that contribute to the classification of previous tasks. Ref. 64 estimates the importance of each weight to previously experienced classes using memory-aware synapses. The most popular approach in recent research is dynamic architectures, where catastrophic forgetting is avoided by incrementally adding new parameters rather than overwriting old ones. Ref. 7 expands on the Mixture-of-Experts architecture by applying a Distribution Discriminative Auto-Selector to infer the tasks of examples in the data stream. DYnamic feature space Self-OrganizatioN (DYSON)70 updates its architecture when new classes are encountered and then aligns the feature space to fit the new geometry. However, existing dynamic architecture approaches often limit transfer between tasks: because each task contains unique classes, opportunities for positive transfer between different tasks are eliminated. We aim to encourage both forward and backward transfer while deciding on task boundaries.
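The retrieval criterion behind Maximally Interfered Retrieval can be shown on a toy problem. This is a simplified sketch under stated assumptions: a scalar linear model with squared-error loss, a single virtual SGD step on the incoming batch, and scoring of the whole memory rather than a random subset of it. All function names are hypothetical.

```python
from typing import List, Sequence, Tuple

Example = Tuple[float, float]  # (x, y) pair for a toy 1-D regression task


def loss(w: float, b: float, ex: Example) -> float:
    x, y = ex
    return (w * x + b - y) ** 2


def batch_grad(w: float, b: float, batch: Sequence[Example]) -> Tuple[float, float]:
    """Mean gradient of the squared error over a batch, w.r.t. (w, b)."""
    n = len(batch)
    gw = sum(2 * (w * x + b - y) * x for x, y in batch) / n
    gb = sum(2 * (w * x + b - y) for x, y in batch) / n
    return gw, gb


def mir_retrieve(w: float, b: float, incoming: Sequence[Example],
                 memory: Sequence[Example], lr: float, k: int) -> List[Example]:
    """Return the k memory examples whose loss rises most after a virtual update."""
    gw, gb = batch_grad(w, b, incoming)
    w_v, b_v = w - lr * gw, b - lr * gb  # virtual (look-ahead) parameters
    interference = [loss(w_v, b_v, ex) - loss(w, b, ex) for ex in memory]
    ranked = sorted(range(len(memory)), key=lambda i: interference[i], reverse=True)
    return [memory[i] for i in ranked[:k]]


# The model currently fits y = x; the incoming batch comes from y = -x.
memory = [(1.0, 1.0), (5.0, 5.0), (2.0, 2.0)]
incoming = [(1.0, -1.0), (2.0, -2.0)]
print(mir_retrieve(1.0, 0.0, incoming, memory, lr=0.01, k=2))  # [(5.0, 5.0), (2.0, 2.0)]
```

The examples with the largest inputs are hurt most by the look-ahead update, so they are the ones replayed alongside the incoming batch.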

Task similarity is widely used for task selection in continual learning, particularly within dynamic architectures such as adapters. By projecting inputs into a shared embedding space, models compute similarity scores against stored representations or centroids to determine the most relevant module or expert. This enables task inference without explicit task identifiers. For example, Learning to Prompt61 utilises embedding similarity for task-conditioned expert selection, while ref. 71 incorporates similarity-based routing in incremental learning frameworks, demonstrating improved adaptability and reduced forgetting. SEDEM27 identifies tasks for existing and new experts by comparing new examples with previously experienced examples stored in a memory buffer, prioritising forward transfer.
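Centroid-based similarity routing can be sketched generically. This is not the specific mechanism of ref. 61, ref. 71 or SEDEM. It assumes embeddings are already produced by a frozen encoder, uses cosine similarity against one running-mean centroid per expert, and creates a new expert when no centroid exceeds a hypothetical threshold.

```python
import math
from typing import List, Optional

Vector = List[float]


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


class CentroidRouter:
    """Route embeddings to the expert whose centroid is most similar."""

    def __init__(self, threshold: float) -> None:
        self.threshold = threshold  # hypothetical minimum similarity to reuse an expert
        self.centroids: List[Vector] = []
        self.counts: List[int] = []

    def route(self, z: Vector) -> int:
        """Return an expert index, creating a new expert if none is similar enough."""
        best: Optional[int] = None
        best_sim = -math.inf
        for i, c in enumerate(self.centroids):
            s = cosine(z, c)
            if s > best_sim:
                best, best_sim = i, s
        if best is None or best_sim < self.threshold:
            self.centroids.append(list(z))
            self.counts.append(1)
            return len(self.centroids) - 1
        # Running-mean update of the chosen expert's centroid.
        n = self.counts[best] + 1
        self.centroids[best] = [c + (x - c) / n for c, x in zip(self.centroids[best], z)]
        self.counts[best] = n
        return best


router = CentroidRouter(threshold=0.8)
ids = [router.route(z) for z in ([1.0, 0.0], [0.9, 0.1], [0.0, 1.0])]
```

The second embedding is close to the first (cosine about 0.99) and shares expert 0. The third is nearly orthogonal and triggers a new expert, giving `ids == [0, 0, 1]`.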
