Advancing deep learning for expressive music composition and performance modeling

This study applies deep learning to AI-driven music generation and transcription. It describes dataset preparation and preprocessing, and explains the structure of the chosen models: LSTM, Transformer, and GAN-based networks. It then covers the training pipeline, hyperparameter optimization, and the metrics used to evaluate each model's ability to produce structured, expressive, and human-like musical compositions.

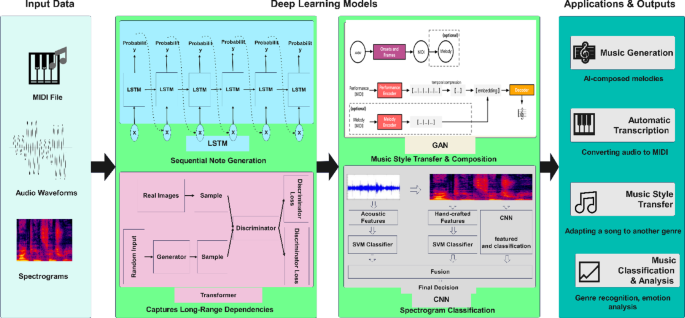

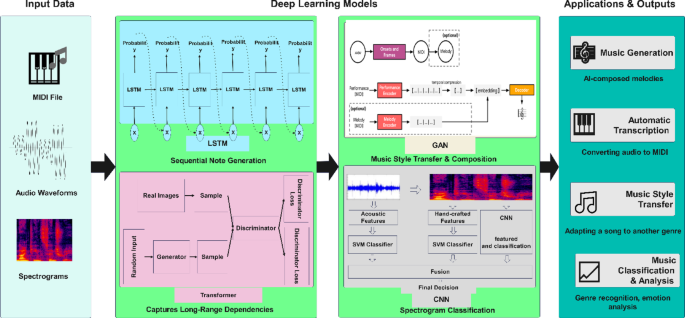

Figure 1 shows the AI music processing workflow. The inputs are MIDI files, audio waveforms, and spectrograms. These feed deep learning systems that use LSTMs for note sequences, Transformers for dependency modeling, GANs for style transformation, and CNNs for spectrogram recognition.

Deep learning models for music processing.

Dataset

The MAESTRO dataset (ref. 3) is a major resource for building deep learning models for music transcription, generation, and performance evaluation. The Magenta team at Google assembled it from performances recorded at the International Piano-e-Competition, yielding high-quality classical piano performances that make MAESTRO a leading resource for AI research on musical expression and detail.

MAESTRO includes more than 200 h of piano recordings paired with MIDI files that capture exact timing, dynamics, and articulation. The recordings span 2004 to 2018 and therefore cover multiple styles and performer interpretations. The performances were captured on Yamaha Disklavier pianos, which produced high-resolution MIDI data alongside the audio of each piece.

The data consists of three components: stereo FLAC audio at 44.1 kHz with 16-bit resolution, MIDI files, and metadata files. The MIDI files capture musical expression through note onset times, durations, velocities, and pedal data. The metadata, which includes composer names, piece titles, performance durations, and recording years, supports research into stylistic variation across compositions.

The MAESTRO dataset is divided into three parts for model development: roughly 80% for training and 10% each for validation and testing. The MIDI files support automatic music transcription, style transfer, and neural music synthesis. Because each MIDI file is precisely paired with a high-fidelity audio recording of the same performance, AI systems can be trained end to end on both symbolic data and acoustic signals to generate performances that resemble human piano playing. The same pairing supports deep learning research on music generation, transcription, and performance modeling.

The MIDI files in MAESTRO record precise note onset times, velocities (key-strike intensity), durations, and pedal states. Because they preserve exact timestamps and expressive detail that is lost in manually transcribed MIDI, deep learning models can learn expressive elements such as rubato (tempo variation) and articulation. Because MIDI encodes structured, abstract musical events without timbral detail, the format is well suited to symbolic music generation and performance analysis.

The audio recordings in the dataset are stored in FLAC format at 44.1 kHz with 16-bit stereo resolution. They were captured on the same Yamaha Disklavier pianos that produced the MIDI data, which allows precise timing alignment between audio and MIDI. Combining the two representations lets deep learning models perform automatic music transcription, translating raw sound into symbolic notation, with greater accuracy than earlier datasets allowed. Table 1 summarizes the key components of the MAESTRO dataset, detailing the format, resolution, and main features of each data type.

The structured nature of the MAESTRO dataset provides a benchmark for deep learning-based music analysis by offering precisely aligned symbolic and acoustic data. Researchers have utilized this dataset in polyphonic transcription, style transfer, and AI-generated expressive performance modeling. The dataset’s combination of structured MIDI events and high-resolution audio makes it one of the most valuable resources for machine learning applications in computational musicology.

Data cleaning and preprocessing techniques

Preprocessing is essential in preparing the MAESTRO dataset for deep learning models. Since the dataset includes MIDI files and audio recordings, specific preprocessing techniques are applied to each format to ensure consistency, accuracy, and high-quality feature extraction. The main objectives are to standardize timing and pitch, eliminate errors, and transform the raw data into input suitable for training neural networks. Time quantization is a primary preprocessing step that maps note events onto a uniform time grid. MIDI event times and durations are recorded at millisecond resolution, so models that operate on a fixed grid need them quantized. Given a quantization step \(Q\), the adjusted event time \(t^{\prime\:}\) is computed as:

$$t^{\prime\:}=\left\lfloor\:\frac{t}{Q}\right\rceil\:Q$$

(1)

where \(t\) represents the original event time, and \(t^{\prime\:}\) is the quantized time. This process ensures uniform timing across all training samples.
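As a minimal sketch of this step, the function below rounds an event time to the nearest multiple of the quantization step. The function name, the example onsets, and the 0.125 s grid are illustrative assumptions, not values from the study.

```python
import math


def quantize_time(t: float, q: float) -> float:
    """Snap an event time t (seconds) to the nearest multiple of q (seconds)."""
    if q <= 0:
        raise ValueError("quantization step must be positive")
    # floor(x + 0.5) rounds halves up; Python's built-in round() rounds
    # halves to the nearest even integer, which would bias the grid.
    return math.floor(t / q + 0.5) * q


onsets = [0.012, 0.247, 0.51, 0.74]   # hypothetical note onsets in seconds
grid = 0.125                          # hypothetical step: a sixteenth note at 120 BPM
print([quantize_time(t, grid) for t in onsets])  # [0.0, 0.25, 0.5, 0.75]
```

A coarser grid removes more expressive timing, so the step size trades timing uniformity against the rubato information the dataset is valued for.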

Another essential step is pitch standardization, which involves transposing all MIDI sequences to a reference key such as \(C\) major or \(A\) minor. This transformation is performed by shifting each note's pitch value \(P\) by a transposition factor \(k\) (in semitones), yielding:

$$P^{\prime\:}=P+k$$

(2)

where \(P^{\prime\:}\) is the standardized pitch. Standardization allows the model to focus on relative harmonic structures rather than absolute key signatures.
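A minimal sketch of the transposition follows. It assumes the tonic of each piece is already known (the text does not say how the key is detected), and the helper names are hypothetical.

```python
def shift_to_c(tonic_pitch_class: int) -> int:
    """Smallest shift in semitones (-6..5) that moves the tonic to C (pitch class 0)."""
    k = -tonic_pitch_class % 12
    return k - 12 if k > 5 else k


def transpose(pitches, k):
    """Shift MIDI note numbers by k semitones, rejecting results outside 0..127."""
    shifted = []
    for p in pitches:
        q = p + k
        if not 0 <= q <= 127:
            raise ValueError(f"pitch {p} shifted by {k} leaves the MIDI range")
        shifted.append(q)
    return shifted


d_major_triad = [62, 66, 69]           # D4, F#4, A4
k = shift_to_c(62 % 12)                # tonic D -> shift of -2 semitones
print(k, transpose(d_major_triad, k))  # -2 [60, 64, 67]
```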

Velocity normalization is applied to scale note velocities, representing dynamic intensity, into a fixed range between 0 and 1. The normalized velocity \(V^{\prime\:}\) is computed as:

$$V^{\prime\:}=\frac{V-{V}_{min}}{{V}_{max}-{V}_{min}}$$

(3)

where \(V\) is the original velocity value, and \({V}_{min}\) and \({V}_{max}\) represent the dataset-wide minimum and maximum velocities. This ensures consistent dynamic representation across different piano pieces.
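The min-max scaling in Eq. (3) can be sketched as below. The function name is hypothetical. Falling back to the list's own minimum and maximum is an assumption made for convenience, since the text specifies dataset-wide bounds.

```python
def normalize_velocities(velocities, v_min=None, v_max=None):
    """Min-max scale MIDI velocities into [0, 1].

    Pass dataset-wide v_min and v_max to match the text. When they are
    omitted, the bounds of the given list are used instead (an assumption).
    """
    v_min = min(velocities) if v_min is None else v_min
    v_max = max(velocities) if v_max is None else v_max
    if v_max == v_min:
        # Degenerate case: every note has the same velocity.
        return [0.0 for _ in velocities]
    return [(v - v_min) / (v_max - v_min) for v in velocities]


print(normalize_velocities([20, 60, 100]))           # [0.0, 0.5, 1.0]
print(normalize_velocities([64], v_min=0, v_max=128))  # [0.5]
```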

Duplicate note events and overlapping articulations are removed to maintain data clarity. MIDI files sometimes contain unintended duplicated note-on messages, which can distort the training data. These redundant events are filtered out to improve model performance.
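One possible way to filter duplicated note-on events is sketched below. The tuple layout and the 5 ms tolerance are assumptions chosen for illustration. The study does not state its exact criterion.

```python
def drop_duplicate_notes(notes, tol=0.005):
    """Remove repeated note-ons of the same pitch within tol seconds.

    notes: iterable of (onset_sec, pitch, velocity, duration_sec) tuples.
    The first occurrence of each near-simultaneous pair is kept.
    """
    kept = []
    last_onset = {}  # pitch -> onset time of the most recently kept note
    for note in sorted(notes, key=lambda n: (n[0], n[1])):
        onset, pitch = note[0], note[1]
        prev = last_onset.get(pitch)
        if prev is not None and onset - prev <= tol:
            continue  # treat as an unintended duplicate
        kept.append(note)
        last_onset[pitch] = onset
    return kept


notes = [
    (0.000, 60, 80, 0.5),
    (0.001, 60, 80, 0.5),  # duplicate of the first note
    (0.000, 64, 75, 0.5),
    (0.500, 60, 70, 0.5),  # genuine repeated note, kept
]
print(len(drop_duplicate_notes(notes)))  # 3
```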

The FLAC recordings in MAESTRO must be transformed into feature-rich representations for models that process raw audio. This begins with resampling, since different neural network architectures expect different sampling rates. Many audio models operate at 16 kHz or 22.05 kHz, so all recordings are resampled to one of these rates.

A crucial step in audio preprocessing is spectrogram computation, which converts the waveform into a time-frequency representation. This is achieved using the Short-Time Fourier Transform (STFT), defined as:

$$X(t,f)=\sum\:_{n=0}^{N-1}x\left(n\right)w(n-t){e}^{-j2\pi\:fn}$$

(4)

where \(X(t,f)\) is the STFT coefficient at frame \(t\) and frequency \(f\) (its squared magnitude gives the spectrogram), \(x\left(n\right)\) is the raw waveform, \(w\left(n\right)\) is a window function, and \(f\) is the frequency in cycles per sample (for bin \(k\) of an \(N\)-point transform, \(f=k/N\)). STFT is widely used in deep learning models for music transcription and synthesis, as it provides a rich representation of harmonic and rhythmic content.
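The sketch below computes a basic STFT with NumPy to make the framing and windowing concrete. The Hann window, the FFT size of 1024, and the hop of 256 samples are assumed values, not settings reported in the study.

```python
import numpy as np


def stft(x, n_fft=1024, hop=256):
    """Return complex STFT coefficients with shape (frames, n_fft // 2 + 1)."""
    if len(x) < n_fft:
        raise ValueError("signal is shorter than one analysis frame")
    window = np.hanning(n_fft)
    n_frames = 1 + (len(x) - n_fft) // hop
    # Slice overlapping frames, apply the window, then take a real FFT per frame.
    frames = np.stack([x[i * hop: i * hop + n_fft] * window for i in range(n_frames)])
    return np.fft.rfft(frames, axis=1)


sr = 16000                             # assumed sampling rate in Hz
t = np.arange(sr) / sr                 # one second of audio
x = np.sin(2 * np.pi * 440.0 * t)      # test tone at 440 Hz (A4)
X = stft(x)
peak_bin = np.abs(X).mean(axis=0).argmax()
print(X.shape, peak_bin * sr / 1024)   # (59, 513) 437.5
```

The peak lands on the bin nearest to 440 Hz. The bin spacing of sr / n_fft (15.625 Hz here) sets the frequency resolution.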

To further refine the spectral representation, the Mel spectrogram is computed by applying a Mel filter bank to the power spectrogram:

$${S}_{m}=M\cdot\:{\left|X(t,f)\right|}^{2}$$

(5)

where \({S}_{m}\) is the Mel spectrogram and \(M\) represents the Mel filter bank matrix. The Mel scale closely matches human auditory perception, making it an effective input representation for machine learning models.
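To illustrate Eq. (5), the sketch below builds a triangular Mel filter bank and applies it to a power spectrogram. It uses the common HTK-style Mel formula, which is an assumption: the study does not name a variant, and audio libraries differ in their defaults. The random power spectrogram is only a stand-in for real data.

```python
import numpy as np


def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + f / 700.0)


def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def mel_filter_bank(sr, n_fft, n_mels):
    """Triangular filters with shape (n_mels, n_fft // 2 + 1)."""
    fft_freqs = np.linspace(0.0, sr / 2, n_fft // 2 + 1)
    # Filter edges are equally spaced on the Mel scale, then mapped back to Hz.
    hz_points = mel_to_hz(np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2), n_mels + 2))
    bank = np.zeros((n_mels, fft_freqs.size))
    for m in range(n_mels):
        left, center, right = hz_points[m: m + 3]
        rising = (fft_freqs - left) / (center - left)
        falling = (right - fft_freqs) / (right - center)
        bank[m] = np.clip(np.minimum(rising, falling), 0.0, None)
    return bank


M = mel_filter_bank(sr=16000, n_fft=1024, n_mels=40)
# Stand-in for |X(t, f)|^2, arranged as (frequency bins, frames).
power = np.abs(np.random.default_rng(0).normal(size=(513, 10))) ** 2
S_m = M @ power
print(M.shape, S_m.shape)  # (40, 513) (40, 10)
```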

Data augmentation techniques, namely pitch shifting, time stretching, and dynamic range compression, expand dataset diversity. Pitch shifting transposes the audio by a number of semitones while preserving its duration, and time stretching changes the playback speed while preserving pitch. These augmentations improve model generalization while allowing AI-generated music to retain its expressive attributes.

Preprocessing yields a well-structured, low-noise version of the MAESTRO dataset suited to deep learning. Trained on this clean data, models can produce expressive musical structures, advancing both AI music generation and transcription.

Deep learning models rely on feature extraction to analyze and generate structured, emotionally expressive music from the MAESTRO dataset. Extracting musical elements such as pitch, tempo, chord progressions, and dynamics lets AI models learn the fundamentals of music. Pitch is available in both MIDI note data and audio signals. In MIDI files, pitch is given directly as a note number between 0 and 127. In audio files, algorithms such as YIN and CREPE, as well as Fourier transform-based methods, estimate the fundamental frequency \({f}_{0}\) in Hz:

$${f}_{0}=\underset{f}{\arg\min}\:E\left(f\right)$$

(6)

where \(E\left(f\right)\) is the estimation error associated with candidate frequency \(f\). Accurate pitch tracking underpins melody extraction and polyphonic transcription. Tempo is the pace of the music, measured in beats per minute (BPM). MIDI files carry tempo in embedded meta-events, whereas in audio files tempo is extracted through onset detection and beat-tracking algorithms such as Dynamic Bayesian Network (DBN) models. Given the interval between detected beats, the tempo is:

$$T=\frac{60}{\varDelta\:t}$$

(7)

where \(\varDelta\:t\) is the time difference between the beats detected. Tempo variation is key to representing expressive timing changes, including ritardando and accelerando, which affect performance style.
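As a small worked example of Eq. (7), the sketch below converts a list of beat times into per-beat tempo values. The beat times are invented for illustration. In practice they would come from a beat tracker.

```python
def tempo_bpm(beat_times):
    """Return the local tempo (BPM) for each pair of consecutive beats."""
    intervals = [b - a for a, b in zip(beat_times, beat_times[1:])]
    if not intervals:
        raise ValueError("need at least two beat times")
    if any(dt <= 0 for dt in intervals):
        raise ValueError("beat times must be strictly increasing")
    return [60.0 / dt for dt in intervals]


beats = [0.0, 0.5, 1.0, 1.75]   # hypothetical beat times in seconds
print(tempo_bpm(beats))         # [120.0, 120.0, 80.0]
```

The drop from 120 to 80 BPM on the last beat is the kind of local slowing that a ritardando produces.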

The harmonic structure of music is described by chord progressions. For MIDI files, we extract chords by grouping simultaneous note events and determining the root note and the chord quality (major, minor, diminished, etc.). In audio, harmonic content is usually detected through chromagram analysis:

$$C(f,t)=\sum\:_{k\in\:H\left(f\right)}\left|X(t,k)\right|$$

(8)

where \(C(f,t)\) is the chroma feature for pitch class \(f\) at frame \(t\), and \(H\left(f\right)\) is the set of frequency bins \(k\) whose frequencies belong to pitch class \(f\). Chord progressions are crucial in genre classification, music generation, and accompaniment models.
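A minimal sketch of a chroma computation for one spectral frame is given below. Mapping each bin to its nearest equal-tempered pitch (A4 = 440 Hz) and ignoring bins below 27.5 Hz are assumptions made for illustration. Production chroma extractors use smoother bin-to-pitch weighting.

```python
import math


def chroma_from_magnitudes(mags, sr, n_fft, f_min=27.5):
    """Fold one magnitude spectrum into 12 pitch classes (index 0 = C)."""
    chroma = [0.0] * 12
    for k, a in enumerate(mags):
        f = k * sr / n_fft                 # center frequency of bin k in Hz
        if f < f_min:
            continue                       # skip DC and sub-audio bins
        midi = round(69 + 12 * math.log2(f / 440.0))
        chroma[midi % 12] += abs(a)
    return chroma


mags = [0.0] * 513
mags[28] = 1.0   # 437.5 Hz, nearest pitch A4
mags[56] = 0.5   # 875.0 Hz, nearest pitch A5
chroma = chroma_from_magnitudes(mags, sr=16000, n_fft=1024)
print(chroma.index(max(chroma)), chroma[9])  # 9 1.5
```

Both partials fold into pitch class 9 (A), which is the octave invariance that makes chroma useful for chord analysis.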

Dynamics refer to variations in note intensity (loudness), affecting musical expression. In MIDI, dynamics are represented by velocity values ranging from 1 (softest) to 127 (loudest), with a note-on velocity of 0 conventionally treated as a note-off. In audio, dynamics are measured through amplitude envelope analysis using the Root Mean Square (RMS) energy:

$$E=\sqrt{\frac{1}{N}\sum\:_{n=1}^{N}{x}^{2}\left(n\right)}$$

(9)

where \(x\left(n\right)\) is the amplitude of the signal at sample \(n\) and \(N\) is the number of samples in the analysis frame. Dynamics are essential for modeling expressive playing styles and improving the realism of AI-generated performances.
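The RMS measure can be sketched as the square root of the mean squared amplitude, computed per frame to obtain an envelope. The frame and hop sizes below are arbitrary illustrative choices.

```python
import math


def rms(x):
    """Root mean square of a sequence of samples."""
    if not x:
        raise ValueError("empty frame")
    return math.sqrt(sum(v * v for v in x) / len(x))


def rms_envelope(x, frame, hop):
    """RMS of each full frame of length `frame`, advancing by `hop` samples."""
    return [rms(x[i: i + frame]) for i in range(0, len(x) - frame + 1, hop)]


signal = [0.0, 0.0, 0.0, 0.0, 1.0, -1.0, 1.0, -1.0]  # silence, then a loud passage
print(rms_envelope(signal, frame=4, hop=4))           # [0.0, 1.0]
```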

Deep learning architectures for music generation and analysis

Deep learning has transformed music generation and analysis by enabling AI models to learn pitch, rhythm, harmony, and dynamics from data. RNNs, particularly LSTM networks, are widely used for sequential music modeling. LSTM networks carry information about a musical sequence through time, which helps generated music stay coherent. At each step, the LSTM hidden state \({h}_{t}\) is computed from the output gate \({o}_{t}\) and the cell state \({c}_{t}\) as:

$${h}_{t}={o}_{t}\odot\:\tanh\left({c}_{t}\right)$$

(10)
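For context, the sketch below implements one step of a standard LSTM cell in NumPy, ending with the hidden-state update above. The gate ordering, weight shapes, and random weights are assumptions made for illustration and are not the configuration used in the study.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def lstm_step(x_t, h_prev, c_prev, W, U, b):
    """One LSTM step. W: (4H, D), U: (4H, H), b: (4H,). Gate order: i, f, g, o."""
    H = h_prev.shape[0]
    z = W @ x_t + U @ h_prev + b
    i = sigmoid(z[0:H])            # input gate
    f = sigmoid(z[H:2 * H])        # forget gate
    g = np.tanh(z[2 * H:3 * H])    # candidate cell values
    o = sigmoid(z[3 * H:4 * H])    # output gate
    c_t = f * c_prev + i * g       # cell state update
    h_t = o * np.tanh(c_t)         # hidden state: h_t = o_t * tanh(c_t)
    return h_t, c_t


rng = np.random.default_rng(0)
D, H = 3, 2                        # hypothetical input and hidden sizes
h, c = np.zeros(H), np.zeros(H)
W, U, b = rng.normal(size=(4 * H, D)), rng.normal(size=(4 * H, H)), np.zeros(4 * H)
for x_t in rng.normal(size=(5, D)):    # a toy sequence of five events
    h, c = lstm_step(x_t, h, c, W, U, b)
print(h.shape, c.shape)            # (2,) (2,)
```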

The Music Transformer advances over RNNs by using self-attention, which lets it capture long-range dependencies without processing the sequence one step at a time. The attention mechanism is defined as:

$$Attention(Q,K,V)=softmax\left(\frac{Q{K}^{T}}{\sqrt{{d}_{k}}}\right)V$$

(11)
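A minimal NumPy sketch of scaled dot-product attention follows. It uses the usual \(1/\sqrt{{d}_{k}}\) scaling of the standard Transformer formulation and omits masking, multiple heads, and the relative position terms of the Music Transformer. The matrix sizes are arbitrary.

```python
import numpy as np


def scaled_dot_product_attention(Q, K, V):
    """Q: (n_q, d_k), K: (n_k, d_k), V: (n_k, d_v). Returns (output, weights)."""
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)
    # Subtract the row maximum before exponentiating for numerical stability.
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights


rng = np.random.default_rng(0)
Q = rng.normal(size=(2, 4))
K = rng.normal(size=(3, 4))
V = rng.normal(size=(3, 5))
out, weights = scaled_dot_product_attention(Q, K, V)
print(out.shape, weights.sum(axis=-1))  # each row of weights sums to 1
```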

Generative Adversarial Networks (GANs) generate music by training a generator and a discriminator, refining AI compositions through adversarial learning. Their objective function is:

$$\underset{G}{\min}\:\underset{D}{\max}\:{\mathbb{E}}_{x\sim\:{P}_{data}\left(x\right)}\left[\log\:D\left(x\right)\right]+{\mathbb{E}}_{z\sim\:{P}_{z}\left(z\right)}\left[\log\left(1-D\left(G\left(z\right)\right)\right)\right]$$

(12)
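To make the objective concrete, the sketch below estimates its value from batches of discriminator outputs. The function name and the example probabilities are hypothetical. No networks are trained here.

```python
import math


def gan_value(d_real, d_fake):
    """Monte Carlo estimate of E[log D(x)] + E[log(1 - D(G(z)))].

    d_real: discriminator outputs D(x) on real samples, each in (0, 1).
    d_fake: discriminator outputs D(G(z)) on generated samples, each in (0, 1).
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


undecided = gan_value([0.5, 0.5], [0.5, 0.5])   # discriminator cannot tell
confident = gan_value([0.9, 0.9], [0.1, 0.1])   # discriminator separates well
print(round(undecided, 4), round(confident, 4))  # -1.3863 -0.2107
```

The discriminator pushes this value up, while the generator pushes it down by making \(D\left(G\left(z\right)\right)\) larger.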

Convolutional Neural Networks (CNNs) analyze spectrograms for chord recognition, transcription, and genre classification using:

$$Y(i,j)=\sum\:_{m}\sum\:_{n}X(i-m,j-n)W(m,n)$$

(13)
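Eq. (13) is a full two-dimensional convolution, sketched below in NumPy with a tiny input. Note that the "convolution" layers in most deep learning frameworks compute cross-correlation, that is, they apply the kernel without flipping it. The example arrays are arbitrary.

```python
import numpy as np


def conv2d_full(X, W):
    """Y(i, j) = sum_m sum_n X(i - m, j - n) W(m, n), with zeros outside X."""
    h, w = X.shape
    kh, kw = W.shape
    Y = np.zeros((h + kh - 1, w + kw - 1))
    for m in range(kh):
        for n in range(kw):
            # X[a, b] * W[m, n] contributes to output position (a + m, b + n).
            Y[m:m + h, n:n + w] += W[m, n] * X
    return Y


X = np.array([[1.0, 2.0], [3.0, 4.0]])    # toy spectrogram patch
W = np.array([[1.0, 0.0], [0.0, -1.0]])   # toy kernel
print(conv2d_full(X, W))
# [[ 1.  2.  0.]
#  [ 3.  3. -2.]
#  [ 0. -3. -4.]]
```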

Model selection: LSTMs, transformers, GANs, and CNNs

The choice of deep learning model depends on the music task. LSTM networks handle sequential data well, which makes them useful for melody generation and polyphonic music modeling, but their step-by-step processing limits scalability. Transformer models such as the Music Transformer ease this restriction by using self-attention to capture long-range structure in complex melodic sequences.

GANs are used in music style transfer and improvisation, where a generator creates new music and a discriminator judges whether it resembles real music. This adversarial feedback pushes the generator toward output that closely resembles human compositions. CNNs, primarily applied to spectrogram-based tasks, are effective in chord recognition, transcription, and genre classification, extracting hierarchical musical features from audio data.

Training pipeline and hyperparameter tuning

The training pipeline starts with data preprocessing, where MIDI sequences and audio spectrograms are normalized. Training data is split into 80% for training, 10% for validation, and 10% for testing. The selected model is trained using gradient-based optimization, often with the Adam optimizer for stability. The loss function varies by task: categorical cross-entropy for classification and mean squared error (MSE) for music reconstruction.

Hyperparameter tuning involves adjusting the learning rate, batch size, and sequence length to optimize performance. Learning rates are typically set between \(10^{-4}\) and \(10^{-3}\), with batch sizes ranging from 32 to 128. Regularization techniques such as dropout (0.2–0.5) and L2 weight decay are applied to prevent overfitting. Training is monitored using validation loss and accuracy, and early stopping halts training once the validation loss stops improving.
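The sketch below shows one way to express such a configuration together with a simple early-stopping rule. The specific values are picks from within the ranges stated above, and the patience of 2 epochs and the loss sequence are invented for illustration.

```python
# Hypothetical configuration drawn from the ranges given in the text.
config = {"learning_rate": 1e-3, "batch_size": 64, "dropout": 0.3}


class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


stopper = EarlyStopping(patience=2)
val_losses = [1.0, 0.8, 0.85, 0.9, 0.7]   # made-up validation losses per epoch
stop_epoch = next((e for e, loss in enumerate(val_losses) if stopper.step(loss)), None)
print(stop_epoch, stopper.best)           # 3 0.8
```

With a patience of 2, training stops at epoch 3 and never reaches the better loss at epoch 4, which shows why the patience value itself needs tuning.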

Evaluation metrics for AI-generated music

Evaluating AI-generated music spans several dimensions and combines quantitative and qualitative measures. Unlike typical machine learning tasks, which have well-defined objective measures, music evaluation requires assessing harmonic structure, temporal coherence, and expressive quality. Different metrics capture melodic similarity, rhythmic stability, harmonic development, and how human listeners perceive AI-generated output.

Objective metrics

Perplexity (PPL) is a widely used evaluation metric for sequence modeling. Lower perplexity indicates that the model predicts music sequences better. It is calculated as:

$$PPL=exp\left(-\frac{1}{N}\sum\:_{i=1}^{N}logP\left({x}_{i}\right)\right)$$

(14)

where \(P\left({x}_{i}\right)\) is the probability the model assigns to the \(i\)-th musical event given the preceding events, and \(N\) is the sequence length.
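As a worked example of Eq. (14), the sketch below computes perplexity from the probabilities a model assigned to the events that actually occurred. The probability values are invented.

```python
import math


def perplexity(probs):
    """exp of the mean negative log-probability of the observed events."""
    if not probs:
        raise ValueError("need at least one probability")
    return math.exp(-sum(math.log(p) for p in probs) / len(probs))


uniform_guess = perplexity([0.25, 0.25, 0.25, 0.25])  # as uncertain as 4 equal choices
sharper_model = perplexity([0.9, 0.8, 0.95, 0.85])
print(round(uniform_guess, 6), sharper_model < uniform_guess)  # 4.0 True
```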

The Pitch Class Histogram (PCH) metric compares the pitch-class distribution of generated melodies with that of human compositions. Rhythmic Entropy measures the variety of note durations, indicating whether generated music stays interesting rather than monotonous. It is computed as the Shannon entropy:

$$H=-\sum\:{p}_{i}\:{log}_{2}\:{p}_{i}$$

(15)

where \({p}_{i}\) is the probability of a particular rhythmic pattern occurring.
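The two metrics can be sketched as follows. Treating each distinct note duration as a "rhythmic pattern" is a simplifying assumption, and the example melodies are invented.

```python
import math
from collections import Counter


def shannon_entropy(items):
    """Entropy in bits of the empirical distribution of `items`."""
    counts = Counter(items)
    n = len(items)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def pitch_class_histogram(pitches):
    """Normalized 12-bin histogram of MIDI pitches folded to pitch classes."""
    hist = [0.0] * 12
    for p in pitches:
        hist[p % 12] += 1.0 / len(pitches)
    return hist


durations_varied = ["quarter", "quarter", "eighth", "eighth"]
durations_flat = ["quarter"] * 4
print(shannon_entropy(durations_varied), shannon_entropy(durations_flat) == 0)  # 1.0 True
print(pitch_class_histogram([60, 62, 64, 72])[0])  # 0.5
```

Two histograms produced this way can then be compared with any distance measure between distributions.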

Harmonic Consistency is evaluated with chord transition matrices, which quantify how closely AI-generated harmonic progressions match conventional ones. Cross-correlation analysis detects structural similarities between AI-generated and human-composed music.

Subjective metrics

Human judgment remains vital, so listeners must assess musical quality and emotional resonance. Researchers conduct listening tests in which participants rate generated music on criteria including coherence, human-likeness, originality, and expressiveness. Participants give each excerpt a score from 1 to 5, and the ratings are averaged into a Mean Opinion Score (MOS).

The Turing Test Success Rate measures the percentage of listeners who cannot distinguish AI-generated music from human performances. Higher values on these measures indicate that the AI-generated music is more realistic and has greater creative value.
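Both subjective measures reduce to simple aggregates, sketched below. The ratings and listener responses are invented, and encoding each response as a boolean is an assumption about how the test data is recorded.

```python
def mean_opinion_score(ratings):
    """Average of listener ratings, each an integer from 1 to 5."""
    if not ratings or any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ratings must be non-empty and within 1..5")
    return sum(ratings) / len(ratings)


def turing_success_rate(identified_ai):
    """Percentage of listeners who failed to identify the AI-generated excerpt.

    identified_ai: one boolean per listener, True if they picked out the AI excerpt.
    """
    if not identified_ai:
        raise ValueError("need at least one listener response")
    fooled = sum(1 for correct in identified_ai if not correct)
    return 100.0 * fooled / len(identified_ai)


print(mean_opinion_score([4, 5, 3, 4]))                 # 4.0
print(turing_success_rate([True, False, False, True]))  # 50.0
```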
