A hybrid filtering and deep learning approach for early Alzheimer’s disease identification

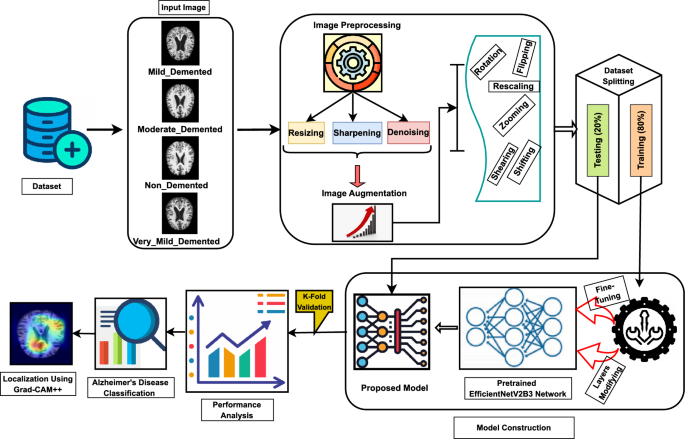

This section describes data preprocessing, data augmentation, the CNN model, and the implemented architecture, all of which were completed before the performance-evaluation trials. Figure 1 shows the proposed methodology as a block diagram. The proposed Alzheimer’s disease detection system takes MRI scans of the human brain as input. The gathered images are first preprocessed by resizing and denoising, and the image data are then augmented. Finally, the model is trained and tested on the clinical datasets. The proposed model is built on the pre-trained deep learning model EfficientNetV2B3, to which additional layers were attached. These layers were adjusted through regularization, kernel initialization, and fine-tuning to improve the robustness and efficiency of the experiments. The resulting model classifies images into four distinct classes with high accuracy. The pseudocode for AD classification from MRI scans is given in Algorithm 1.

Figure 1. Proposed workflow diagram of Alzheimer’s disease classification.

Algorithm 1. Pseudocode of Alzheimer’s Disease Classification from MRI Images

Preprocessing of images

Preprocessing the images from the dataset is essential to optimizing the learning module’s performance and ensuring accuracy. This preprocessing phase consists of effective resizing with hybrid filtering (adaptive nonlocal means denoising and sharpening filter) operations on the image data.

Resizing of images

Models pre-trained on ImageNet expect inputs whose dimensions are smaller than or equal to 224*224 pixels. For transfer learning, the inputs must be compatible with the pre-trained model. To give all collected images uniform dimensions, we resized each image to a resolution of 224*224 pixels.

Denoising of images

Denoising restores the underlying signal from an image that contains noise, removing undesirable noise so that the image is easier to analyze. A widely used and effective method for reducing noise in images is the Non-Local Means (NLM) technique41. It operates on the principle that patches with similar characteristics also have similar pixel values. NLM replaces each pixel with a weighted average of the pixels in its neighborhood, where each weight reflects the similarity between the reference patch and a neighboring patch. This reduces noise while preserving underlying structures.

This process can be expressed mathematically. Let I be the image affected by noise, let u denote the pixel values of the noisy image, and let v denote the pixel values after denoising.

NLM denoising is expressed in Eq. 1:

$$\begin{aligned} v(x) = \frac{1}{C(x)} \sum _{y \in \Omega } w(x, y) \cdot u(y) \end{aligned}$$

(1)

where x denotes the position of a pixel within the image, while y represents a pixel position in the neighboring region \(\Omega\) centered around x. The function w(x, y) assigns a weight to the pixel at location y, determined by the similarity of the patches surrounding pixels x and y. C(x) serves as a normalization factor, ensuring that the weights assigned to pixels around x sum to 1 so that the result is a proper weighted average. The weight function w(x, y) can be defined using a Gaussian kernel, as in Eq. 2:

$$\begin{aligned} w(x, y) = \frac{1}{Z(x)} e^{-\frac{\Vert P(x) - P(y)\Vert ^2}{h^2}} \end{aligned}$$

(2)

where the patch located at point x is denoted as P(x). The decay of weights can be controlled using the parameter h. The normalization coefficient Z(x) ensures that all the weights add up to 1. The NLM denoising approach entails traversing all pixels in the image and implementing the weighted averaging procedure to efficiently remove noise from the image.
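As an illustration of Eqs. 1 and 2, the following is a minimal brute-force sketch in NumPy. It is not the implementation used in the study. The function name `nlm_denoise`, the patch and search radii, the value of h, and the synthetic test image are all assumptions chosen for readability. The two normalization factors C(x) and Z(x) are folded into a single division by the sum of the weights.

```python
import numpy as np


def nlm_denoise(u, patch_radius=1, search_radius=3, h=0.3):
    """Brute-force Non-Local Means following Eqs. 1 and 2 (illustrative only)."""
    u = np.asarray(u, dtype=np.float64)
    rows, cols = u.shape
    size = 2 * patch_radius + 1
    # Reflect-pad so that every pixel has a full patch P(x) around it.
    padded = np.pad(u, patch_radius, mode="reflect")
    v = np.empty_like(u)
    for i in range(rows):
        for j in range(cols):
            ref_patch = padded[i:i + size, j:j + size]  # P(x)
            weighted_sum = 0.0
            weight_total = 0.0  # plays the role of the normalization factor
            # Search window Omega centered on x, clipped at the image border.
            for r in range(max(0, i - search_radius), min(rows, i + search_radius + 1)):
                for c in range(max(0, j - search_radius), min(cols, j + search_radius + 1)):
                    patch = padded[r:r + size, c:c + size]  # P(y)
                    dist2 = np.sum((ref_patch - patch) ** 2)
                    w = np.exp(-dist2 / h ** 2)  # Eq. 2 before normalization
                    weighted_sum += w * u[r, c]
                    weight_total += w
            v[i, j] = weighted_sum / weight_total  # Eq. 1
    return v


# Synthetic example (hypothetical data): a flat image with Gaussian noise.
rng = np.random.default_rng(0)
clean = np.full((12, 12), 0.5)
noisy = clean + rng.normal(0.0, 0.05, size=clean.shape)
denoised = nlm_denoise(noisy)
print(f"noise std before: {noisy.std():.4f}, after: {denoised.std():.4f}")
```

The quadruple loop makes the cost of this sketch grow with the image size times the search-window size, which is why optimized library routines are preferred in practice.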

Reducing noise in MRI images is essential for enhancing diagnostic precision and minimizing artifacts that could hinder interpretation. Traditional Non-Local Means (NLM) filtering can be slow and may not adapt well to the diverse noise characteristics and intensity variations of images. To address this, we employed an Adaptive Non-Local Means (NLM) filter in our investigations. This technique adjusts the filtering strength dynamically based on image intensity, so that noise reduction remains effective across various intensities.

In particular, the method first computes the mean intensity of the input image. This mean intensity is then used to adjust the filtering parameter h of the cv2.fastNlMeansDenoising function from the OpenCV library. The adjustment follows the relationship shown in Eq. 3.

$$\begin{aligned} h = \text{base\_h} \times (1 + \text{mean\_intensity}) \end{aligned}$$

(3)

where \(\text{base\_h}\) is a predetermined baseline value, set to 10 in our study. When the mean intensity is high, h increases, strengthening the filtering to manage the higher noise in brighter images. In contrast, darker images undergo milder filtering to retain details that uniform filtering might obscure. The fastNlMeansDenoising function is called with templateWindowSize set to 7 and searchWindowSize set to 21, which determine the local neighborhood sizes for filtering. Before filtering, the input image is normalized to the [0, 255] range, and the result is then scaled back to the original range. This adaptive technique reduces noise while preserving fine details. Figure 2 shows the preprocessing results of using the Adaptive NLM filter.
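The adaptive step of Eq. 3 can be sketched as follows. This is a hedged reconstruction, not the authors’ code. The helper names `adaptive_h` and `adaptive_nlm_denoise` are hypothetical, and the sketch assumes that the mean intensity is measured on a [0, 1] scale, which the text does not state. The window sizes 7 and 21 and the baseline value 10 are taken from the text.

```python
import numpy as np

BASE_H = 10.0  # baseline value reported in the text


def adaptive_h(mean_intensity, base_h=BASE_H):
    """Eq. 3: h = base_h * (1 + mean_intensity).

    Assumption: mean_intensity lies in [0, 1]; the source does not give its scale.
    """
    return base_h * (1.0 + mean_intensity)


def adaptive_nlm_denoise(image):
    """Normalize to [0, 255], denoise with an intensity-dependent h, rescale back."""
    import cv2  # imported lazily so that adaptive_h works without OpenCV installed

    image = np.asarray(image, dtype=np.float64)
    lo, hi = float(image.min()), float(image.max())
    span = hi - lo if hi > lo else 1.0
    img_u8 = np.round((image - lo) / span * 255.0).astype(np.uint8)
    h = adaptive_h(img_u8.mean() / 255.0)
    # Positional arguments: src, dst, h, templateWindowSize, searchWindowSize.
    denoised = cv2.fastNlMeansDenoising(img_u8, None, h, 7, 21)
    # Scale the result back to the original intensity range.
    return denoised.astype(np.float64) / 255.0 * span + lo


for mean in (0.0, 0.5, 1.0):
    print(f"mean intensity {mean:.1f} -> h = {adaptive_h(mean):.1f}")
```

Under the [0, 1] assumption, h ranges from 10 for a completely dark image to 20 for a completely bright one.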

Figure 2. Sample preprocessing results based on Adaptive NLM Filter and Sharpening Filter: (a) Mild_demented case (b) Moderate_demented case.

Sharpening of images

Following the noise reduction procedure, a sharpening filter is incorporated in this study to enhance the collected MRI image details and improve edge definition. The non-local means (NLM) filtering inherent in the denoising process can cause blurring, potentially obscuring fine details and softening edges. A subsequent sharpening filter mitigates this blurring, enhancing the visibility of subtle features and improving the overall image sharpness. This sharpening step is beneficial for tasks such as feature extraction, where precise edge definition is crucial.

The sharpening filter is based on the Laplacian filter, a second-order derivative operator that emphasizes areas of rapid intensity change42. The Laplacian is defined in Eq. 4.

$$\begin{aligned} \nabla ^2 f = \frac{\partial ^2 f(x,y)}{\partial x^2} + \frac{\partial ^2 f(x,y)}{\partial y^2} \end{aligned}$$

(4)

Here,

$$\begin{aligned} \frac{\partial ^2 f(x,y)}{\partial x^2} \approx f(x+1,y) + f(x-1,y) - 2f(x,y) \end{aligned}$$

(5)

$$\begin{aligned} \frac{\partial ^2 f(x,y)}{\partial y^2} \approx f(x,y+1) + f(x,y-1) - 2f(x,y) \end{aligned}$$

(6)

Substituting Eqs. 5 and 6 into Eq. 4 gives the discrete Laplacian:

$$\begin{aligned} \nabla ^2 f \approx f(x+1,y) + f(x-1,y) + f(x,y+1) + f(x,y-1) - 4f(x,y) \end{aligned}$$

(7)

From Eq. 7, a mask is generated as presented in Fig. 3a. The literature43 describes various other types of Laplacian masks and filters. This study employs a modified Laplacian filter whose kernel is shown in Fig. 3b. The coefficients of this mask sum to zero when the center coefficient is added to the surrounding coefficients: \(C5 + (C1 + C2 + C3 + C4 + C6 + C7 + C8 + C9) = 0\). When the original image is processed with this mask, the result is a darker image that highlights only the edges, because regions of uniform intensity are mapped to 0. The original image content can be restored using the rules specified in Eqs. 8 and 9.

Figure 3. An illustration of various filters: (a) the generated mask, (b) a modified Laplacian filter, and (c) a sharpening filter.

$$\begin{aligned} g(x,y) = f(x,y) - \nabla ^2 f, \quad W_5 < 0 \end{aligned}$$

(8)

$$\begin{aligned} g(x,y) = f(x,y) + \nabla ^2 f, \quad W_5 > 0 \end{aligned}$$

(9)

In this context, g(x, y) denotes the filtered output, and \(W_5\) is the center value of the Laplacian filter. If the center value is negative, the process follows Eq. 8; otherwise, it follows Eq. 9. Let us define f(x, y) as C5, with the remaining points of \(C1,C2,\ldots ,C9\) representing its surrounding neighbors. Based on the corresponding Laplacian mask, Eq. 10 can be derived from Eq. 9. From Eq. 10, the resulting mask or filter is shown in Fig. 3c.

$$\begin{aligned} g(x,y)=9W_5-W_1-W_2-W_3-W_4-W_6-W_7-W_8-W_9 \end{aligned}$$

(10)

This filter, known as the sharpening filter, is designed to enhance image details and emphasize edges. The sharpening filter process is implemented using OpenCV’s filter2D function. In addition, it helps make transitions between features more distinct and noticeable, in contrast to smooth, noisy, or blurred images. Figure 2 depicts the preprocessing results of using the Sharpening filter.
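The relationship between Eqs. 8, 9, and 10 can be checked numerically. The sketch below assumes that the modified Laplacian is the 8-neighbor mask whose coefficients sum to zero (center 8 with neighbors -1, or the sign-flipped version); Fig. 3b is not reproduced here, so this is an assumption. The names `sharpening_kernel` and `filter3x3` are hypothetical, and the small NumPy filter stands in for OpenCV’s filter2D only for illustration.

```python
import numpy as np

# Assumed 8-neighbor Laplacian masks whose coefficients sum to zero.
LAPLACIAN_NEG_CENTER = np.array([[1, 1, 1], [1, -8, 1], [1, 1, 1]], dtype=np.float64)
LAPLACIAN_POS_CENTER = -LAPLACIAN_NEG_CENTER


def sharpening_kernel(laplacian):
    """Combine the identity with a Laplacian mask following Eqs. 8 and 9."""
    identity = np.zeros((3, 3))
    identity[1, 1] = 1.0
    if laplacian[1, 1] < 0:
        return identity - laplacian  # Eq. 8: center value is negative
    return identity + laplacian      # Eq. 9: center value is positive


def filter3x3(image, kernel):
    """Apply a 3x3 kernel by correlation with reflected borders."""
    image = np.asarray(image, dtype=np.float64)
    rows, cols = image.shape
    padded = np.pad(image, 1, mode="reflect")
    out = np.zeros_like(image)
    for dr in range(3):
        for dc in range(3):
            out += kernel[dr, dc] * padded[dr:dr + rows, dc:dc + cols]
    return out


kernel = sharpening_kernel(LAPLACIAN_POS_CENTER)
print(kernel)  # center 9, neighbors -1, matching the coefficients of Eq. 10

# Hypothetical test image: a vertical step edge between two flat regions.
step = np.tile(np.array([0.0, 0.0, 1.0, 1.0]), (4, 1))
print(filter3x3(step, kernel))
```

Both sign conventions yield the same kernel, and because its coefficients sum to 1, flat regions pass through unchanged while values on either side of an edge are pushed apart.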

Augmentation of images

Image augmentation44 is a widely used deep learning technique that artificially increases the variety of the training dataset by applying modifications to the source images, which improves the robustness of deep learning models. Common augmentation operations include rotation, mirroring, scaling, cropping, brightness and contrast adjustment, and other geometric or color alterations, all of which can be applied using ImageDataGenerator45. By applying image augmentation in our study, we mitigate overfitting and improve the model’s capacity to handle diverse real-world situations, resulting in a more efficient and dependable deep learning model. The augmentation approaches employed in our investigation are presented in Table 2 and described as follows:

- Rotation: Random rotations within a range of \({\pm }7\) degrees were applied to make the model invariant to varying orientations of brain scans.

- Width and Height Shifting: Horizontal and vertical translations of up to 5% of the image size were employed to replicate positional variation in MRI images.

- Zooming: A zoom range of 10% was utilized to simulate variations in the field of view.

- Rescaling: All pixel values were normalized into the interval [0, 1] by dividing by 255, which improves convergence during training.

- Shearing: A shear transformation with a magnitude of 5% was used to induce affine distortions, improving the model’s generalization capacity.

- Brightness Adjustment: Random brightness variations within the interval [0.1, 1.5] were applied to accommodate variations in image contrast and lighting conditions.

- Flipping: Horizontal and vertical flips were utilized to emulate various views, reducing bias towards particular orientations.
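The augmentation settings listed above can be collected in one configuration. The sketch below is an assumption about how they might map onto the keyword arguments of the Keras ImageDataGenerator class; the study’s actual call is not shown in the text. In particular, treating the 5% shear as `shear_range=0.05` is a guess, since the text does not give units. The helper `sample_augmentation` is hypothetical and only illustrates the ranges from which random parameters would be drawn.

```python
import random

# Assumed mapping of the listed settings onto ImageDataGenerator keyword names.
# Intended use (not executed here): ImageDataGenerator(**AUGMENTATION_KWARGS)
AUGMENTATION_KWARGS = {
    "rotation_range": 7,              # degrees, sampled in [-7, 7]
    "width_shift_range": 0.05,        # fraction of image width
    "height_shift_range": 0.05,       # fraction of image height
    "zoom_range": 0.10,               # zoom factor in [0.9, 1.1]
    "rescale": 1.0 / 255.0,           # maps pixel values into [0, 1]
    "shear_range": 0.05,              # assumption: units are not given in the text
    "brightness_range": (0.1, 1.5),
    "horizontal_flip": True,
    "vertical_flip": True,
}


def sample_augmentation(rng, kwargs=AUGMENTATION_KWARGS):
    """Draw one random set of transform parameters within the configured ranges."""
    rot = kwargs["rotation_range"]
    zoom = kwargs["zoom_range"]
    return {
        "rotation_deg": rng.uniform(-rot, rot),
        "width_shift": rng.uniform(-kwargs["width_shift_range"], kwargs["width_shift_range"]),
        "height_shift": rng.uniform(-kwargs["height_shift_range"], kwargs["height_shift_range"]),
        "zoom": rng.uniform(1.0 - zoom, 1.0 + zoom),
        "shear": rng.uniform(-kwargs["shear_range"], kwargs["shear_range"]),
        "brightness": rng.uniform(*kwargs["brightness_range"]),
        "flip_horizontal": kwargs["horizontal_flip"] and rng.random() < 0.5,
        "flip_vertical": kwargs["vertical_flip"] and rng.random() < 0.5,
    }


print(sample_augmentation(random.Random(45)))
```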

Proposed transfer learning model

The proposed methodology relies on a deep transfer learning (DTL) architecture. Researchers have recently shown growing interest in transfer learning-based convolutional neural network (CNN) models for diverse computer vision challenges, and these models have been applied extensively in medical disease diagnostics11,19 over the past few decades. In this study, we developed and implemented a CNN-based transfer learning architecture to classify Alzheimer’s disease from MRI images. In our experiment, we train the pre-trained CNN model EfficientNetV2B3 on the preprocessed image data. The DTL model adopted in our experiment is explained below:

- EfficientNetV2B3: EfficientNetV2B3 is a deep neural network architecture introduced as an extension of the EfficientNet family46. The EfficientNetV2B3 model is designed to optimize computational efficiency and model performance across various tasks, particularly image classification. It incorporates improvements over its predecessors, including enhanced feature extraction capabilities and a well-balanced trade-off between model size and accuracy. This architecture is based on compound scaling, which uniformly scales the network dimensions (depth, width, and resolution) to achieve better performance. EfficientNetV2B3 is pre-trained on large-scale datasets, making it effective for transfer learning tasks. Its architecture allows for efficient utilization of computational resources while maintaining competitive accuracy levels.

We chose EfficientNetV2B3 rather than EfficientNetV2B0, B1, and B2 because it is expected to have a more extensive architecture than these smaller variants. Its greater depth is expected to improve the model’s capacity to capture intricate features, potentially leading to better representation learning. Its greater width is expected to provide more capacity for feature extraction. Furthermore, EfficientNetV2B3 likely operates at a higher resolution than the smaller variants, which may help the model capture fine details in images and improve its overall performance.

Design and modification of the model’s structure

The proposed study used EfficientNetV2B3 as the foundational model. The base network was constructed and developed using techniques including layer attachment, regularization, kernel initializer, and hyper-parameter tuning.

The model takes preprocessed images with dimensions of 224*224 pixels as input. Figure 4 depicts the block-wise internal details of the EfficientNetV2B3 pre-trained network. The feature extraction unit of EfficientNetV2B3 has six blocks that incorporate essential operations, including convolution, depth-wise convolution, batch normalization, activation, dropout, and global average pooling. Blocks 1 to 6 contain two, three, three, five, seven, and twelve sub-blocks, with output shapes (112, 112, 16), (56, 56, 40), (28, 28, 56), (14, 14, 112), (14, 14, 136), and (7, 7, 232), respectively. Block 1a contains a total of 5760 parameters, and the final sub-block, Block 6l, contains 322944 parameters.

After the EfficientNetV2B3 model completes its six blocks, which progressively reduce spatial dimensions and increase the number of channels, the final feature extractor layer outputs a \(7 \times 7 \times 2048\) feature map. This map is then processed by a global average pooling layer, which reduces the spatial dimensions to a \(1 \times 1\) grid. This pooling layer averages all values within each feature map, simplifying the model and enhancing robustness by reducing the risk of overfitting. Subsequently, dense layers with the ELU activation function and GlorotNormal kernel initializer are added, along with dropout layers to prevent overfitting. The model concludes with a fully connected layer using the SoftMax activation function for multiclass classification. This configuration enables the model to utilize the detailed feature representations from the EfficientNetV2B3 backbone while maintaining a straightforward and efficient classification mechanism.
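To make the data flow through the added layers concrete, the following NumPy sketch runs one forward pass of a classification head of this kind on a random feature map. It is illustrative only. The channel count, the hidden width, the use of a single hidden dense layer, and the placement of the 0.2 and 0.3 dropout layers are all assumptions, and the initializer uses a plain normal distribution as a simplification (the Keras GlorotNormal initializer draws from a truncated normal with the same standard deviation).

```python
import numpy as np

NUM_CLASSES = 4     # four classes, as stated in the text
CHANNELS = 64       # hypothetical small channel count for the demo
HIDDEN_UNITS = 16   # hypothetical hidden width; not given in the text


def global_average_pool(feature_map):
    """Average each channel over its spatial grid: (H, W, C) -> (C,)."""
    return feature_map.mean(axis=(0, 1))


def glorot_normal(rng, fan_in, fan_out):
    """Zero-mean normal weights with variance 2 / (fan_in + fan_out)."""
    std = np.sqrt(2.0 / (fan_in + fan_out))
    return rng.normal(0.0, std, size=(fan_in, fan_out))


def elu(x, alpha=1.0):
    """ELU: identity for x > 0, alpha * (exp(x) - 1) otherwise."""
    return np.where(x > 0, x, alpha * np.expm1(np.minimum(x, 0.0)))


def dropout(x, rate, rng, training):
    """Inverted dropout: active only during training."""
    if not training:
        return x
    keep = rng.random(x.shape) >= rate
    return x * keep / (1.0 - rate)


def softmax(z):
    e = np.exp(z - z.max())  # subtract the max for numerical stability
    return e / e.sum()


def head_forward(feature_map, params, rng, training=False):
    x = global_average_pool(feature_map)
    x = dropout(x, 0.2, rng, training)            # assumed position
    x = elu(x @ params["w1"] + params["b1"])
    x = dropout(x, 0.3, rng, training)            # assumed position
    return softmax(x @ params["w2"] + params["b2"])


rng = np.random.default_rng(45)
params = {
    "w1": glorot_normal(rng, CHANNELS, HIDDEN_UNITS),
    "b1": np.zeros(HIDDEN_UNITS),
    "w2": glorot_normal(rng, HIDDEN_UNITS, NUM_CLASSES),
    "b2": np.zeros(NUM_CLASSES),
}
features = rng.random((7, 7, CHANNELS))  # stand-in for the backbone output
print(head_forward(features, params, rng))
```

The output is a vector of four class probabilities that sums to 1; with untrained random weights the values carry no diagnostic meaning.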

Figure 4. The proposed transfer learning architecture, consisting of: Feature Extraction unit (including block-wise operations, parameters, output shape) and Modified Unit (including layer modification, regularization, kernel initialization, hyper-parameter tuning, parameters and output shape).

Model development process

- Layer Modification and Fine-tuning: Following the final activation layer of the pre-trained network, we added a global average pooling layer, dense layers with the ELU activation function and the “GlorotNormal” kernel initializer, and dropout layers. The modification ends with a fully connected (FC) layer that uses the SoftMax activation function for multiclass classification. We used fine-tuning to help the pre-trained model adapt to our particular task. The following points give more details of our model development:

- i. Global Average Pooling (GAP) Layer: The GAP layer is integral to convolutional neural networks (CNNs) for image classification. Unlike traditional fully connected layers, GAP operates on the entire spatial extent of the input feature map. Computing the average of all values within each feature map condenses the information into a single value per feature map, effectively reducing the spatial dimensions to a 1×1 grid. Because each feature map is reduced to a single value, GAP provides partial translation invariance, although it does not fully guarantee it. The main objective of incorporating GAP into our architecture is to reduce the number of model parameters and mitigate the risk of overfitting. This, in turn, can improve our system’s resilience to slight spatial translations.

- ii. Dropout Layer: The Dropout layer is a regularization technique employed in neural networks to mitigate overfitting. During training, it randomly deactivates a specified fraction of neurons, preventing co-dependency and encouraging the network to learn more robust features. Dropping neurons in this way simulates training multiple diverse networks and improves the model’s generalization capabilities. In our study, randomly excluding neurons with Dropout layers reduces the risk of overfitting, improving the proposed model’s ability to adapt to various inputs and its overall performance on unseen data. The proposed study incorporates two dropout layers, with dropout rates of 0.2 and 0.3, respectively.

- iii. Dense Layer: The Dense layer, also known as a fully connected layer, is a fundamental component of neural networks. It connects every neuron in one layer to every neuron in the subsequent layer, creating a dense interconnection pattern. Each connection is associated with a weight, and the layer typically includes a bias term. The Dense layer plays a pivotal role in our study for learning complex patterns and relationships within the data. Our study configures the dense layer with the ELU activation function and the “GlorotNormal” kernel initializer.

The “GlorotNormal” kernel initializer, sometimes referred to as Xavier normal initialization, aims to mitigate the problems of vanishing or exploding gradients by sampling weights from a normal distribution with a mean of 0 and a variance that depends on the number of input and output units. This keeps the scale of the weights balanced across layers, improving the stability and effectiveness of training. Although the initializer is often associated with the tanh activation function, its usefulness extends beyond tanh. It is also effective with the ELU activation function because it helps maintain a consistent and steady flow of gradients.

The ELU activation function mitigates the vanishing gradient problem, a prevalent challenge in deep networks, and produces non-zero outputs for negative inputs, which reduces the occurrence of dead neurons. The “GlorotNormal” initializer further supports smooth gradient flow, resulting in stable and efficient training. The effectiveness of this combination was confirmed empirically in our experiments, which showed improved performance and stability during training.

The objective of combining the “GlorotNormal” initializer with the ELU activation function is to improve our model’s learning capacity and stability.

- iv. SoftMax Activation Layer (Output Classification): We used an output layer with the SoftMax activation function for the classification task. This layer assigns probabilities to the classes, enabling MRI images to be categorized into their respective groups.

- v. Random Seed: Employing a constant random seed is crucial for the replicability of our studies. By setting the seed to 45, we ensure that repeated executions of our model yield consistent outcomes. Reproducibility is crucial in scientific research, as it enables our findings to be compared with those of other investigations.

- vi. Adamax Optimizer: Adamax, an extension of the Adam optimizer for deep learning, employs adaptive learning rates that are adjusted dynamically during training. It uses the infinity norm for stable parameter updates and is characterized by two key parameters, beta1 and beta2, which control the decay rates of the moment estimates. Adamax handles sparse gradients well and performs robustly across various neural network architectures. To ensure numerical stability in the parameter update step, a small positive constant, epsilon \(\epsilon\), is added to the denominator to prevent division by zero. These properties improve the reliability and efficiency of optimization, which is why we chose Adamax to train the proposed deep neural network. In our investigation, we employed a learning rate of 1e-03 with decay parameters beta1=0.91 and beta2=0.9994, and we set the epsilon value to 1e-08.
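For reference, a single Adamax update with the hyper-parameters reported above can be sketched in plain Python. This is a minimal scalar illustration of the standard Adamax rule, not the framework implementation used in the study. The function name `adamax_step` and the toy objective \(\theta^2\) are hypothetical.

```python
def adamax_step(theta, grad, m, u, t, lr=1e-3, beta1=0.91, beta2=0.9994, eps=1e-8):
    """One Adamax update for a scalar parameter.

    m: exponentially decayed first-moment estimate of the gradient
    u: exponentially weighted infinity norm of past gradients
    t: 1-based step counter used for bias correction of m
    """
    m = beta1 * m + (1.0 - beta1) * grad
    u = max(beta2 * u, abs(grad))
    theta = theta - (lr / (1.0 - beta1 ** t)) * m / (u + eps)
    return theta, m, u


# Toy objective (hypothetical): minimize theta**2, whose gradient is 2 * theta.
theta, m, u = 1.0, 0.0, 0.0
for t in range(1, 501):
    grad = 2.0 * theta
    theta, m, u = adamax_step(theta, grad, m, u, t)
print(f"theta after 500 steps: {theta:.4f}")
```

Because the update divides the bias-corrected moment by the running infinity norm, each step is approximately bounded by the learning rate, which is one reason the method behaves stably.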
