The application of suitable sports games for junior high school students based on deep learning and artificial intelligence

AI-assisted sports activities in junior high school

From data analysis and motion pose estimation to virtual reality training, smart wearable devices, and intelligent referee systems, AI technology has brought revolutionary changes to various aspects of sports activities. In sports activities, data analysis is crucial23,24. AI technology collects and analyzes a large amount of match data, athlete training data, etc., to provide coaches and athletes with precise data support and decision-making basis. Specifically, AI can employ machine learning algorithms to mine athletes’ historical data, discover patterns and trends, and develop personalized training plans and game strategies for athletes25,26,27.

Computer vision technology is a vital tool for motion pose estimation and motion behavior recognition28,29. By capturing students’ motion images with cameras and utilizing DL algorithms to process and analyze the images, it is possible to accurately identify students’ motion poses and behaviors. This technology can furnish students with real-time feedback and guidance to help them correct errors in movements and improve their sports skills.

DL technology is one of the core technologies in the AI field, which automatically learns feature representations in data and achieves high-precision prediction and classification30,31,32. In PE teaching, DL technology can be applied to motion pose estimation, physical fitness assessment, motion behavior recognition, and many other aspects. By training neural network models, precise analysis and processing of students’ motion data can be achieved, offering scientific decision support for teachers.

Sensor technology can monitor students’ motion data and physiological indicators in real time, such as heart rate, step count, and movement trajectory. Transmitting these data to smart devices or cloud servers for analysis makes it possible to provide students with personalized exercise suggestions and guidance. Sensor technology can also be used to monitor students’ motion status and safety conditions during sports activities. The applications of AI technology in sports activities are summarized in Table 2.

Human pose estimation method and the MediaPipe framework in sports games

Human pose estimation refers to the extraction of the positions of human joints from images or videos using computer vision technology, thereby estimating and recognizing human body poses33,34,35. In the field of sports, human pose estimation is mainly used for athletes’ technical analysis, motion correction, and development of training plans. Depending on the task, human pose estimation can be divided into two-dimensional (2D) and three-dimensional (3D) pose estimation. Depending on the number of individuals recognized, it can be classified into single-person and multi-person pose estimation.

The studies involving human participants were reviewed and approved by the Department of Sports Studies, Faculty of Educational Studies, Universiti Putra Malaysia Ethics Committee (Approval Number: 2022.200219819996). The participants provided their written informed consent to participate in this study. All methods were performed in accordance with relevant guidelines and regulations.

The 2D human pose estimation method is mainly employed to extract the 2D coordinate information of human joints from images or videos. This method is primarily used for technical analysis and motion correction of athletes. Common 2D human pose estimation methods include template matching-based and DL-based methods.

The template matching-based method is a traditional approach to human pose estimation. This method first establishes a template library of human poses and then matches the input image with the templates in the library to find the most similar template, thus obtaining the position information of human joints36,37. This method is simple and easy to implement, but it has poor capabilities in handling intricate backgrounds and occlusions.

The DL-based method is currently the mainstream approach in the 2D human pose estimation domain. This method trains the DL model to automatically extract the position information of human joints from images or videos, involving key point positions, joint angles, and body poses. Moreover, this method has strong feature extraction capabilities and generalization abilities, enabling it to deal with complex occlusions and backgrounds.
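Once a model has returned 2D key point positions, joint angles follow from elementary vector geometry. The following is a minimal sketch, assuming key points are given as (x, y) pairs; the function name `joint_angle` and the sample coordinates are hypothetical and are not taken from this study.

```python
import math


def joint_angle(a, b, c):
    """Return the angle at key point b, in degrees, between segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1 = math.hypot(*v1)
    n2 = math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        raise ValueError("coincident key points: the angle is undefined")
    cos_theta = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.degrees(math.acos(cos_theta))


# Hypothetical normalized image coordinates for hip, knee, and ankle.
hip, knee, ankle = (0.50, 0.60), (0.70, 0.60), (0.70, 0.80)
knee_angle = joint_angle(hip, knee, ankle)
```

In this example the thigh is horizontal and the shank is vertical, so the knee angle is a right angle.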

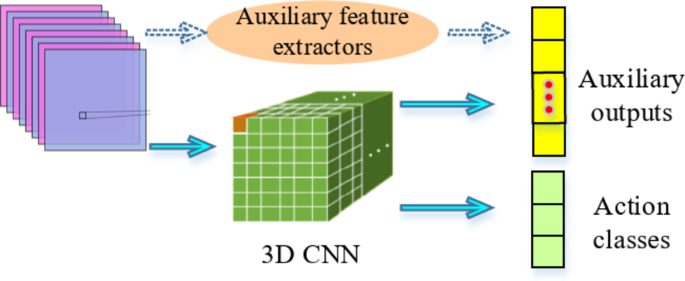

A 3D CNN processes 3D data directly by introducing 3D convolution kernels in the convolutional layers38,39,40. These kernels slide through the 3D space (height, width, and depth) and perform convolution operations with local regions of the input data to extract 3D features. This operation enables a 3D CNN to capture both spatial relationships and temporal dynamics in 3D data. In a 3D CNN, convolutional layers, activation functions, pooling layers, and fully connected (FC) layers together form the network structure. Convolutional layers extract features through convolution operations; pooling layers reduce the size of feature maps while retaining critical information; FC layers merge features and output the final classification or regression results; and activation functions introduce nonlinearity to enhance the network’s representation ability. By connecting a series of auxiliary output nodes to the last hidden layer of the CNN, the features extracted during training more closely approximate the computed high-level behavioral motion feature vectors, as shown in Fig. 1.

Feature extraction of 3D CNN.
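The sliding 3D kernel can be illustrated with a deliberately naive implementation. This is a sketch only, assuming a single channel, stride 1, and no padding; the function name `conv3d_valid` and the toy clip are hypothetical, and a practical model would use an optimized deep learning library instead.

```python
def conv3d_valid(volume, kernel):
    """Naive single-channel 3D cross-correlation, stride 1, no padding.

    volume is a nested list indexed [depth][height][width];
    kernel is a nested list indexed [kd][kh][kw].
    """
    D, H, W = len(volume), len(volume[0]), len(volume[0][0])
    kd, kh, kw = len(kernel), len(kernel[0]), len(kernel[0][0])
    out = []
    for d in range(D - kd + 1):
        plane = []
        for h in range(H - kh + 1):
            row = []
            for w in range(W - kw + 1):
                acc = 0.0
                # Weighted sum over the local 3D neighbourhood.
                for i in range(kd):
                    for j in range(kh):
                        for k in range(kw):
                            acc += volume[d + i][h + j][w + k] * kernel[i][j][k]
                row.append(acc)
            plane.append(row)
        out.append(plane)
    return out


# Toy clip: 3 frames of 3x3 pixels, where every pixel equals the frame index.
clip = [[[float(t)] * 3 for _ in range(3)] for t in range(3)]
# A 2x1x1 kernel that subtracts the previous frame from the current one.
temporal_diff = [[[-1.0]], [[1.0]]]
features = conv3d_valid(clip, temporal_diff)
```

Because the kernel spans the depth (time) axis, the output responds to change between frames, which a purely 2D kernel applied frame by frame cannot capture.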

MediaPipe is an open-source framework developed by Google for implementing machine learning models in the media processing domain, encompassing images, videos, and audio, among others. This study selects sit-ups as the research focus, aiming to achieve accurate identification and evaluation of sit-ups by integrating the MediaPipe framework with the ST-GCN algorithm. The approach provides teachers with scientific data support and personalized teaching suggestions, and thereby enhances students’ training effectiveness and overall experience. The core framework of MediaPipe is implemented in C++ and supports languages such as Java and Objective-C. Its main concepts include packets, streams, calculators, graphs, and subgraphs. MediaPipe graphs are directed: packets flow from data sources into the graph until they leave at the sink node.
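The packet, calculator, and graph concepts can be illustrated with a toy model. The sketch below is not the MediaPipe API: the classes `Packet`, `Calculator`, and `Graph` are hypothetical stand-ins written for this illustration, and the graph is simplified to a linear chain, whereas real MediaPipe graphs are general directed graphs.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Packet:
    """A timestamped unit of data travelling along a stream."""
    timestamp: int
    payload: Any


class Calculator:
    """A processing node: consumes one packet and emits one packet."""

    def __init__(self, name, fn):
        self.name = name
        self.fn = fn

    def process(self, packet):
        # The timestamp is preserved so downstream nodes stay synchronized.
        return Packet(packet.timestamp, self.fn(packet.payload))


class Graph:
    """A linear chain of calculators from source to sink (toy simplification)."""

    def __init__(self, calculators):
        self.calculators = list(calculators)

    def run(self, source):
        sink = []
        for packet in source:
            for calculator in self.calculators:
                packet = calculator.process(packet)
            sink.append(packet)
        return sink


graph = Graph([
    Calculator("scale", lambda x: x * 2),
    Calculator("offset", lambda x: x + 1),
])
outputs = graph.run(Packet(t, t) for t in range(3))
```

Each packet enters at the source, passes through every calculator in order, and is collected at the sink with its original timestamp.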

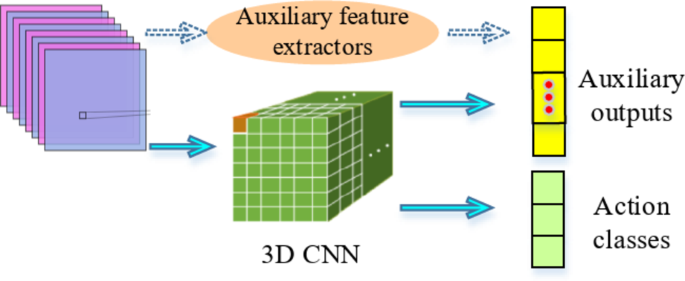

Taking a hand key point detection model as an example, as presented in Fig. 2, the model operates on the cropped image area defined by the hand detector and returns high-fidelity 3D hand key points.

Key point location of 3D hand joint coordinates based on MediaPipe.

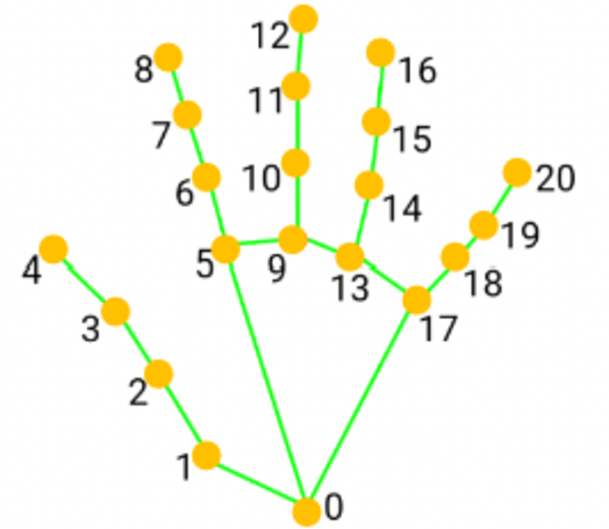

BlazePose is a pose estimation algorithm based on the MediaPipe framework, specifically designed for real-time human pose estimation. It combines high accuracy with efficient real-time performance and is suitable for various platforms, including mobile devices, desktop computers, and embedded systems. BlazePose uses a lightweight neural network architecture based on the MobileNetV3 design, which lowers both the computational cost and the number of parameters. This network structure achieves efficient feature extraction and information fusion through depth-wise separable convolutions and lightweight attention mechanisms. The BlazePose algorithm-based human pose prediction process is illustrated in Fig. 3.

Human pose prediction process based on BlazePose algorithm.
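The parameter saving offered by depth-wise separable convolutions can be checked with simple arithmetic. The sketch below compares weight counts for a single layer, ignoring biases; the function names and the layer sizes are illustrative assumptions, not values reported for BlazePose.

```python
def standard_conv_params(k, c_in, c_out):
    """Weights in a standard k x k convolution (biases ignored)."""
    return k * k * c_in * c_out


def depthwise_separable_params(k, c_in, c_out):
    """Weights in a k x k depth-wise convolution followed by a 1 x 1 point-wise one."""
    depthwise = k * k * c_in      # one k x k filter per input channel
    pointwise = c_in * c_out      # 1 x 1 convolution that mixes channels
    return depthwise + pointwise


# Illustrative layer: 3 x 3 kernel, 32 input channels, 64 output channels.
k, c_in, c_out = 3, 32, 64
std = standard_conv_params(k, c_in, c_out)
sep = depthwise_separable_params(k, c_in, c_out)
ratio = sep / std
```

For this layer the standard convolution needs 18,432 weights and the separable version 2,336, a ratio of about 0.127, which matches the general expression 1/c_out + 1/k².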

Fine-grained action segmentation and recognition based on DL

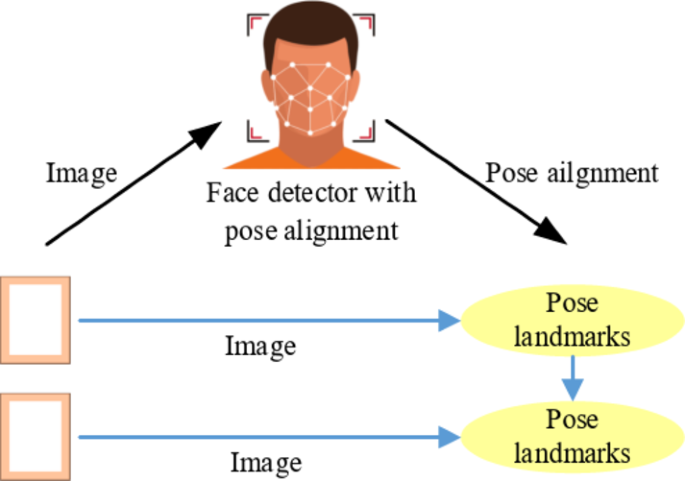

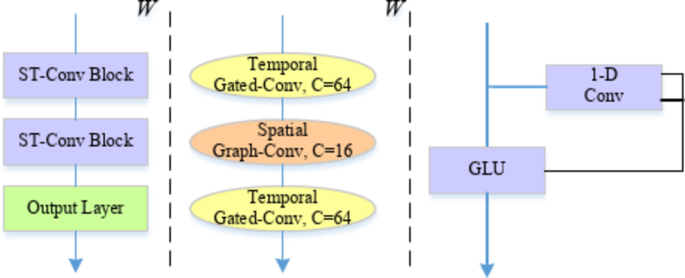

In the domain of video analysis and understanding, fine-grained action segmentation and recognition are pivotal research directions. Traditional action recognition methods typically concentrate on class labels for entire videos or video segments, while fine-grained action segmentation requires precisely segmenting each action in the video and assigning corresponding class labels40,41,42. In the fine-grained action segmentation and recognition of human skeletal motion, this study applies the Spatial Temporal-Graph Convolutional Network (ST-GCN) method (Fig. 4).
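The difference between clip-level labels and fine-grained segmentation can be made concrete: a segmentation model yields one label per frame, and contiguous runs of equal labels form the action segments. The sketch below assumes such per-frame labels are already available; the label names and the function `labels_to_segments` are hypothetical.

```python
from itertools import groupby


def labels_to_segments(frame_labels):
    """Collapse per-frame labels into (label, first_frame, last_frame) segments."""
    segments = []
    start = 0
    for label, run in groupby(frame_labels):
        length = sum(1 for _ in run)
        segments.append((label, start, start + length - 1))
        start += length
    return segments


# Hypothetical per-frame predictions for part of a sit-up sequence.
frame_labels = ["lie", "lie", "rise", "rise", "rise", "sit", "lower", "lower", "lie"]
segments = labels_to_segments(frame_labels)
```

Each tuple gives the action label together with its inclusive frame range, which is the boundary information a single clip-level label does not provide.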

The pseudo code of ST-GCN is shown in Table 3.

At each time step, a spatial graph convolution is applied to the skeleton graph to capture the spatial relationships between key points41,42,43. This is usually achieved by defining an adjacency matrix that describes the connections between key points. Between adjacent time steps, a temporal graph convolution is applied to the skeleton graph to capture changes in key point positions. This can be achieved by treating the key points at adjacent time steps as nodes in the graph and defining edges between them.

The connections between joints within a single frame are represented by the adjacency matrix A, and the self-connections by the identity matrix I. In the single-frame case, ST-GCN with a partitioning strategy can be implemented through the following equations:

$${f_{out}}={\Lambda ^{-\frac{1}{2}}}\left( {A+I} \right){\Lambda ^{-\frac{1}{2}}}{f_{in}}W$$

(1)

$${\Lambda ^{ii}}=\sum\nolimits_{j} {\left( {{A^{ij}}+{I^{ij}}} \right)}$$

(2)

W is the weight matrix formed by stacking the weight vectors of the multiple output channels.
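Equations (1) and (2) can be traced on a small graph. The sketch below is a plain-Python illustration rather than the study's implementation: it assumes \(f_{in}\) is stored as a (V, C_in) matrix and W as a (C_in, C_out) matrix, and the three-joint skeleton and function names are hypothetical.

```python
def matmul(a, b):
    """Multiply two matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def normalized_adjacency(adj):
    """Return Lambda^(-1/2) (A + I) Lambda^(-1/2) for a square adjacency matrix."""
    n = len(adj)
    a_plus_i = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
                for i in range(n)]
    # Eq. (2): Lambda^{ii} is the row sum of A + I (always >= 1 due to the self-loop).
    degree = [sum(row) for row in a_plus_i]
    return [[a_plus_i[i][j] / (degree[i] * degree[j]) ** 0.5 for j in range(n)]
            for i in range(n)]


def spatial_graph_conv(adj, f_in, weight):
    """Eq. (1): normalized adjacency times input features times weights."""
    return matmul(matmul(normalized_adjacency(adj), f_in), weight)


# Hypothetical 3-joint chain: shoulder - hip - knee.
A = [[0, 1, 0],
     [1, 0, 1],
     [0, 1, 0]]
f_in = [[1.0], [2.0], [3.0]]   # one feature channel per joint
W = [[1.0]]                    # one input channel mapped to one output channel
f_out = spatial_graph_conv(A, f_in, W)
```

Each output row is a degree-normalized average of a joint's own feature and those of its neighbours, followed by the channel mixing in W.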

In the spatiotemporal context, input feature maps can be represented as tensors of dimension (C, V, T), where C is the number of channels, V the number of joints, and T the number of frames. Graph convolution is achieved by performing a \(1 \times \Gamma\) standard 2D convolution, where \(\Gamma\) is the temporal kernel size, and multiplying the resulting tensor by the normalized adjacency matrix \({\Lambda ^{-\frac{1}{2}}}\left( {A+I} \right){\Lambda ^{-\frac{1}{2}}}\) along the second dimension.

In the distance partitioning strategy, with \({A_0}=I\) and \({A_1}=A\), the graph convolution becomes:

$${f_{out}}=\sum\nolimits_{j} {\Lambda _{j}^{{-\frac{1}{2}}}} {A_j}\Lambda _{j}^{{-\frac{1}{2}}}{f_{in}}{W_j}$$

(3)

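Similarly, the normalizing matrix of each partition is obtained as follows, where \(\alpha\) is a small constant that keeps the rows of \({A_j}\) from being empty: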

$$\Lambda _{j}^{{ii}}=\sum\nolimits_{k} {\left( {A_{j}^{{ik}}} \right)+\alpha }$$

(4)
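Equations (3) and (4) can be illustrated in the same way. The following is a minimal sketch, assuming \(f_{in}\) has shape (V, C_in) and each \(W_j\) has shape (C_in, C_out); the default value 0.001 for \(\alpha\), the skeleton, the weights, and the function names are assumptions made for illustration and are not stated in this study.

```python
def matmul(a, b):
    """Multiply two matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def normalize_partition(adj_j, alpha=0.001):
    """Return Lambda_j^(-1/2) A_j Lambda_j^(-1/2), with Lambda_j from Eq. (4)."""
    n = len(adj_j)
    # Eq. (4): row sum of A_j plus alpha (alpha value is an assumption).
    degree = [sum(row) + alpha for row in adj_j]
    return [[adj_j[i][k] / (degree[i] * degree[k]) ** 0.5 for k in range(n)]
            for i in range(n)]


def partitioned_graph_conv(partitions, f_in, weights, alpha=0.001):
    """Eq. (3): sum over partitions j of normalized A_j times f_in times W_j."""
    n_out = len(weights[0][0])
    f_out = [[0.0] * n_out for _ in f_in]
    for adj_j, w_j in zip(partitions, weights):
        term = matmul(matmul(normalize_partition(adj_j, alpha), f_in), w_j)
        for v, row in enumerate(term):
            for c, value in enumerate(row):
                f_out[v][c] += value
    return f_out


# Hypothetical 3-joint chain with distance partitioning: A_0 = I, A_1 = A.
I = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
A = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
f_in = [[1.0], [2.0], [3.0]]
W0, W1 = [[1.0]], [[0.5]]      # separate weights for self and neighbour partitions
f_out = partitioned_graph_conv([I, A], f_in, [W0, W1])
```

Unlike Eq. (1), the joint itself and its neighbours now receive separate weight matrices, so the model can treat the two partitions differently.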

Each adjacency matrix is accompanied by a learnable weight matrix M that scales the contribution of each edge. Substituting \(\left( {A+I} \right) \otimes M\) for \(A+I\) in Eq. (1) gives:

$${f_{out}}={\Lambda ^{-\frac{1}{2}}}\left[ {\left( {A+I} \right) \otimes M} \right]{\Lambda ^{-\frac{1}{2}}}{f_{in}}W$$

(5)

\(\otimes\) denotes the element-wise product of two matrices. The mask M is initialized as an all-ones matrix.

This study uses sit-up exercises in sports activities as an example. In sit-up action detection and counting, the analysis and processing of action data often involve iterative convergence and nonlinear fitting methods. A sit-up is characterized by the periodic movement of the body between lying down and sitting up, which can be detected by analyzing the positional changes of a particular body part, such as the head or hips. To ensure that sit-ups are performed correctly and to prevent excessive involvement of other body parts, this study constructs a penalty function by setting loss values and corresponding penalty factors for each joint or body part. The penalty value is calculated as:

$$S=\sum\limits_{{{s_i}}}^{{{e_i}}} {\left( {\alpha \cdot los{s_{wrist}}+\beta \cdot los{s_{knee}}+\gamma \cdot los{s_{ankle}}} \right)}$$

(6)

\(\alpha\), \(\beta\), and \(\gamma\) are penalty factors; \(los{s_{wrist}}\), \(los{s_{knee}}\), and \(los{s_{ankle}}\) represent the loss values of the wrist, knee, and ankle, respectively. The loss value of each body part is calculated as:

$$los{s_j}=\sqrt {{{\left( {{\alpha _j}-threshold} \right)}^2}}$$

(7)

\({\alpha _j}\) is the parameter value calculated for body part j in the current frame.
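Equations (6) and (7) translate directly into code. The sketch below assumes that \(s_i\) and \(e_i\) index the first and last frames of one repetition, which the text does not state explicitly; the thresholds, penalty factors, and measurements are hypothetical placeholders and are not the values used in this study.

```python
import math


def part_loss(value, threshold):
    """Eq. (7): sqrt((value - threshold)^2), i.e. the absolute deviation."""
    return math.sqrt((value - threshold) ** 2)


def penalty(frames, thresholds, factors):
    """Eq. (6): sum the weighted part losses over the frames of one repetition."""
    total = 0.0
    for frame in frames:
        for part, factor in factors.items():
            total += factor * part_loss(frame[part], thresholds[part])
    return total


# Hypothetical placeholder values (not the study's thresholds or factors).
thresholds = {"wrist": 10.0, "knee": 90.0, "ankle": 5.0}
factors = {"wrist": 0.5, "knee": 0.3, "ankle": 0.2}   # alpha, beta, gamma
frames = [
    {"wrist": 12.0, "knee": 85.0, "ankle": 5.0},
    {"wrist": 10.0, "knee": 100.0, "ankle": 8.0},
]
S = penalty(frames, thresholds, factors)
```

A repetition whose measurements all equal their thresholds receives a penalty of zero, and the penalty grows linearly with each deviation.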

The thresholds and penalty factors for parameters of various body parts are outlined in Table 4.
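The periodic alternation between lying down and sitting up also suggests a simple way to count repetitions. The sketch below uses a two-threshold (hysteresis) state machine on a one-dimensional signal such as normalized head height. This simpler technique stands in for the iterative convergence and nonlinear fitting methods mentioned earlier and is not the method of this study; the signal values and thresholds are hypothetical.

```python
def count_situps(signal, up_threshold, down_threshold):
    """Count full up-then-down cycles in a 1D position signal."""
    if down_threshold >= up_threshold:
        raise ValueError("down_threshold must be below up_threshold")
    count = 0
    is_up = False
    for value in signal:
        if not is_up and value >= up_threshold:
            is_up = True            # reached the sitting position
        elif is_up and value <= down_threshold:
            is_up = False           # returned to lying: one repetition done
            count += 1
    return count


# Hypothetical normalized head height per frame (0 = lying, 1 = sitting).
head_height = [0.1, 0.2, 0.5, 0.8, 0.9, 0.6, 0.3, 0.1, 0.4, 0.85, 0.5, 0.15, 0.7]
reps = count_situps(head_height, up_threshold=0.8, down_threshold=0.2)
```

Using two thresholds rather than one keeps small jitters around a single cut-off from being counted as extra repetitions; the final partial rise in the example is not counted because it never reaches the upper threshold.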

To ensure that the ST-GCN model handles complex and degraded data conditions accurately and robustly, this study designs and implements dedicated data preprocessing and augmentation steps. First, key frames are extracted from the input video at a fixed frame rate so that the model receives consistent input. The extracted frames are then normalized, which includes image resizing, color space conversion (for example, from RGB to grayscale or YUV), and pixel value normalization, to improve the model’s adaptability to different lighting and color conditions. A pre-trained human detection model is used to detect the human body in each frame and determine its position and size. Based on the detection results, each frame is cropped so that only the region containing the human body is retained, which reduces interference from irrelevant information. For data augmentation, motion blur is simulated: smoothing the video frames along the time axis or applying a blur filter reproduces the image quality obtained when the camera moves rapidly or shakes.
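Three of these steps can be sketched without any image library: fixed-rate frame sampling, pixel value normalization, and motion blur simulated by smoothing along the time axis. This is an illustration under stated assumptions, not the study's pipeline: frames are represented as flat lists of pixel values, pixel values are assumed to be 8-bit, and all function names, frame rates, and window sizes are hypothetical.

```python
def sample_indices(total_frames, source_fps, target_fps):
    """Indices of the frames to keep when down-sampling to a fixed frame rate."""
    if not 0 < target_fps <= source_fps:
        raise ValueError("target_fps must be positive and not exceed source_fps")
    step = source_fps / target_fps
    indices = []
    i = 0
    while int(i * step) < total_frames:
        indices.append(int(i * step))
        i += 1
    return indices


def normalize_pixels(frame, max_value=255.0):
    """Scale pixel values (assumed 8-bit) to the range [0, 1]."""
    return [px / max_value for px in frame]


def temporal_blur(frames, window=3):
    """Average each frame with its temporal neighbours (box filter over time)."""
    half = window // 2
    blurred = []
    for t in range(len(frames)):
        # The window is truncated at the start and end of the clip.
        neighbours = frames[max(0, t - half):min(len(frames), t + half + 1)]
        blurred.append([sum(px) / len(neighbours) for px in zip(*neighbours)])
    return blurred


kept = sample_indices(total_frames=10, source_fps=30, target_fps=10)
scaled = normalize_pixels([0, 255])
blurred = temporal_blur([[0.0, 0.0], [3.0, 6.0], [6.0, 12.0]], window=3)
```

Averaging over neighbouring frames smears fast-moving content, which approximates the blur produced by rapid camera motion; a larger window gives a stronger effect.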
