Identifying alternately poisoning attacks in federated learning online using trajectory anomaly detection method

We conducted experimental evaluations within the federated learning framework FedAvg to assess the effectiveness of our method in detecting covert model poisoning attacks. Initially, experiments were conducted using a small-scale federated learning system with 10 clients. Subsequently, we evaluated the efficiency and performance of the algorithm in a larger-scale system with 50 clients.

Experimental setup

The central server was configured as follows: OS: Ubuntu 18.04.5; CPU: 12 vCPU Intel(R) Xeon(R) Gold 8255C @ 2.50 GHz; memory: 40 GB; GPU: 1 \(\times\) RTX 3080 (24 GB). The attack scenario follows the method in Ref. 13. We first used only the MNIST dataset, with 10 clients and the number of malicious nodes increased from 1 to 4, and trained the clients for 50 iterations. The model parameters from each iteration were recorded, processed, and saved according to our procedure.

We observed the results and preliminarily determined the hyperparameters. Subsequently, experiments were conducted on larger-scale systems (20, 30, 40, 50 clients) to compare model testing across multiple datasets, including MNIST, FashionMNIST, and CIFAR-10. These datasets were used to train federated learning models and simulate distributed data in an IoT environment, ensuring representative data distribution to mimic real-world scenarios.

We employed the Federated Averaging (FedAvg) algorithm to coordinate model updates among clients. Each client independently trains its model and then sends the updated weights to the server for aggregation. In federated learning, secure aggregation algorithms are crucial for defending against model attacks. The FedAvg algorithm is simple yet practical, averaging parameters uploaded by users with weights determined by the number of samples they own. While FedAvg partially protects privacy, the demands on aggregation algorithms are increasing with the advancement of federated learning. The experiment included 50 training epochs to ensure model convergence.
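The sample-count weighting described above, \(w = \sum_k (n_k / n)\, w_k\) with \(n = \sum_k n_k\), can be sketched as follows. This is a minimal illustration, not the implementation used in the experiments; the function name `fedavg` and the representation of each client model as a flat list of floats are assumptions made for the example.

```python
from typing import List, Sequence


def fedavg(client_weights: Sequence[Sequence[float]],
           sample_counts: Sequence[int]) -> List[float]:
    """Average flattened client parameter vectors, weighted by sample count."""
    if not client_weights or len(client_weights) != len(sample_counts):
        raise ValueError("need exactly one sample count per client")
    total = sum(sample_counts)
    if total <= 0:
        raise ValueError("total sample count must be positive")

    dim = len(client_weights[0])
    global_weights = [0.0] * dim
    for weights, n_k in zip(client_weights, sample_counts):
        if len(weights) != dim:
            raise ValueError("all clients must upload vectors of equal length")
        share = n_k / total  # this client's fraction of all training samples
        for j, w in enumerate(weights):
            global_weights[j] += share * w
    return global_weights


if __name__ == "__main__":
    # Two clients holding 1 and 3 samples respectively.
    print(fedavg([[1.0, 2.0], [3.0, 4.0]], [1, 3]))
```

Because every client's share is proportional to its sample count, a poisoned client holding many samples pulls the global model further than one holding few, which is why detection has to happen before or alongside aggregation.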

For model attacks, our defense focuses on detecting incorrect model parameters. One approach uses the differences between parameter values directly: each client provides n parameters, and a client whose parameters differ significantly from those of the others is judged abnormal. Another approach has the server process the parameters \(W_i^n\) uploaded by a client, evaluate them against the parameters uploaded by the other clients, and compare the resulting value \(WG_i\) with a preset threshold. If \(WG_i\) exceeds the threshold, the model parameters are considered abnormal.
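A threshold test of this kind might look like the sketch below. The text does not define the statistic \(WG_i\), so the example substitutes an assumed one: the Euclidean distance between a client's parameter vector and the mean of all other clients' vectors. The function name `flag_deviating_clients` and the threshold value are likewise hypothetical.

```python
import math
from typing import List, Sequence


def flag_deviating_clients(params: Sequence[Sequence[float]],
                           threshold: float) -> List[bool]:
    """Flag client i when its vector is farther than `threshold` from the
    mean of the OTHER clients' vectors (assumed stand-in for WG_i)."""
    n = len(params)
    if n < 2:
        raise ValueError("need at least two clients to compare")
    dim = len(params[0])
    # Column sums let us form each leave-one-out mean without re-summing.
    totals = [sum(p[j] for p in params) for j in range(dim)]

    flags = []
    for p in params:
        others_mean = [(totals[j] - p[j]) / (n - 1) for j in range(dim)]
        dist = math.sqrt(sum((p[j] - others_mean[j]) ** 2 for j in range(dim)))
        flags.append(dist > threshold)
    return flags


if __name__ == "__main__":
    uploads = [[1.0, 1.0], [1.1, 0.9], [0.9, 1.1], [5.0, 5.0]]
    print(flag_deviating_clients(uploads, threshold=3.0))
```

Leaving the tested client out of the reference mean keeps a strongly deviating upload from shifting the very baseline it is compared against.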

Secure aggregation is also a common and effective defense in federated learning settings. It plays a key role in any centralized topology and in horizontal federated learning environments, where it defends against model attacks. As noted above, the FedAvg aggregation algorithm is simple and practical: it averages the parameters uploaded by users, weighting each by the number of samples that user holds. Although FedAvg can protect privacy to some extent, the demands on aggregation algorithms are increasing as federated learning develops.

Performance indicators

The method in this paper evaluates detection success qualitatively and quantitatively by varying the number of poisoning nodes and iterations. Poisoned data and models significantly degrade the predictions of machine learning models. A more intuitive way to assess poisoning detection algorithms is therefore to train models on the cleaned datasets and compare their accuracy on test sets. Malicious behavior is identified by monitoring each client's training behavior and model weight contributions, which are analyzed using statistical methods. In each iteration, a client for which abnormal behavior is detected is flagged as suspected malicious, and its aggregation weight is reduced according to its anomaly score. Clients flagged as abnormal more than half of the time are classified as malicious and removed from training.
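The text does not state the exact rule for reducing a suspected client's aggregation weight. As one possible reading, the sketch below scales each client's sample count by \(1 - s_k\), where \(s_k \in [0, 1]\) is an anomaly score, and then renormalizes. The function name `reweight` and the linear scaling are assumptions for illustration only.

```python
from typing import List, Sequence


def reweight(sample_counts: Sequence[int],
             anomaly_scores: Sequence[float]) -> List[float]:
    """Return normalized aggregation weights, shrinking each client's
    sample-count share by (1 - anomaly score). Assumed rule, not the paper's."""
    if len(sample_counts) != len(anomaly_scores):
        raise ValueError("need one anomaly score per client")
    raw = []
    for n_k, s_k in zip(sample_counts, anomaly_scores):
        s_k = min(max(s_k, 0.0), 1.0)  # clamp the score into [0, 1]
        raw.append(n_k * (1.0 - s_k))
    total = sum(raw)
    if total == 0:
        raise ValueError("every client was fully down-weighted")
    return [r / total for r in raw]


if __name__ == "__main__":
    # Equal data, but the second client looks half as trustworthy.
    print(reweight([10, 10], [0.0, 0.5]))
```

A score of 1 drops a client from the average entirely, which corresponds to removing it from training.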

In this paper, the poisoning nodes are designated by us in advance. To assess the success rate, we therefore only need to check whether the poisoning detection results are correct, that is, whether the clients detected as poisoned are the ones we set as poisoning nodes. To evaluate the experimental results accurately, we use the following evaluation metrics:

Detection Accuracy (DACC): This metric represents the overall learning accuracy and is used to gauge the overall performance of different defense methods against poisoning attacks in federated learning. It reflects the average learning accuracy of the system.

Misclassification Rate: This refers to the proportion of samples that are misclassified based on predefined decision rules during analysis. In the context of this paper, it indicates the percentage of normal clients mistakenly identified as poisoned clients out of the total sample size.

These metrics provide a comprehensive framework for evaluating the effectiveness of the proposed method in detecting poisoned clients and assessing the robustness of federated learning systems against various attack scenarios.

Experimental process

We adopt the poisoning method of Ref. 8 and modify it to poison in alternating rounds, which makes the attack more covert. During federated learning, the local model parameters of each client are recorded after every iteration, and the singular values of the parameter matrix are computed for each iteration. The singular values are stored in chronological order, so that after more than three iterations they form a trajectory. The trend of this trajectory is judged by comparing the singular values after each iteration with those of the previous iteration. A conventional model poisoning detection algorithm is then used to determine whether the client's trajectory is an outlier, and hence whether the client is poisoned.
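The trajectory construction can be sketched with NumPy as follows. The example assumes that each round's local parameters are available as a single 2-D weight matrix (for instance one layer) and that only the `k` largest singular values are tracked. Both choices, along with the function names, are illustrative assumptions rather than details given in the text.

```python
import numpy as np


def singular_value_trajectory(param_history, k=3):
    """Return an array of shape (rounds, k): the k largest singular values
    of one client's parameter matrix in each round, in chronological order."""
    rows = []
    for weights in param_history:
        # compute_uv=False returns only the singular values, sorted descending.
        s = np.linalg.svd(np.asarray(weights, dtype=float), compute_uv=False)
        rows.append(s[:k])
    return np.vstack(rows)


def trajectory_deltas(trajectory):
    """Change in each tracked singular value relative to the previous round."""
    return np.diff(trajectory, axis=0)


if __name__ == "__main__":
    history = [np.diag([3.0, 1.0]), np.diag([4.0, 1.0]), np.diag([6.0, 2.0])]
    traj = singular_value_trajectory(history, k=2)
    print(traj)                     # one row of singular values per round
    print(trajectory_deltas(traj))  # round-to-round trend
```

Tracking a few singular values instead of the full matrix keeps the per-client record small while still summarizing how the update's overall scale changes from round to round.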

The method records the singular values of each client's parameters chronologically. After each iteration, a conventional poisoning detection algorithm is applied to detect significantly outlying trajectories of the parameter singular values. At the same time, the difference between the current and the previous iteration is calculated to obtain a delta value that represents the trend of change. The IForest (Isolation Forest) algorithm is then applied to the delta values to identify suspected anomaly points. These points are filtered according to the differences between the parameters of poisoning and normal clients, and each suspected anomaly point is examined to exclude normal points that were falsely flagged. Identified anomalies are recorded, and an anomaly count is accumulated dynamically for each client. A client is marked as suspicious, and its weight in the federated learning model is reduced, if its anomalies persist for more than 2 consecutive times. If the anomalies continue after 3 iterations, the client is deemed poisoned and removed.
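Two of these steps, running Isolation Forest on the delta values and accumulating consecutive anomalies per client, are sketched below using scikit-learn's `IsolationForest`. The sketch omits the intermediate filtering of false positives. The thresholds (suspicious at 2 consecutive flags, removed at 3) are one reading of the rule above, and `flag_delta_anomalies`, `ClientTracker` and the `contamination` value are assumptions made for the example.

```python
import numpy as np
from sklearn.ensemble import IsolationForest


def flag_delta_anomalies(deltas, contamination=0.1, seed=0):
    """deltas: (n_clients, n_features) singular-value changes for one round.
    Returns a boolean array where True marks a suspected anomaly."""
    X = np.asarray(deltas, dtype=float)
    if X.ndim == 1:
        X = X.reshape(-1, 1)
    forest = IsolationForest(n_estimators=100,
                             contamination=contamination,
                             random_state=seed)
    # fit_predict labels outliers as -1 and inliers as +1.
    return forest.fit_predict(X) == -1


class ClientTracker:
    """Tracks consecutive anomaly flags for one client (assumed thresholds)."""

    def __init__(self, suspicious_after=2, remove_after=3):
        self.suspicious_after = suspicious_after
        self.remove_after = remove_after
        self.streak = 0
        self.removed = False

    def update(self, flagged):
        """Record this round's flag and return the client's status."""
        if self.removed:
            return "removed"  # removal is permanent
        self.streak = self.streak + 1 if flagged else 0
        if self.streak >= self.remove_after:
            self.removed = True
            return "removed"
        if self.streak >= self.suspicious_after:
            return "suspicious"  # caller would reduce the aggregation weight
        return "normal"


if __name__ == "__main__":
    round_deltas = [[0.01], [0.02], [0.0], [-0.01], [0.015],
                    [0.005], [-0.02], [0.012], [-0.005], [5.0]]
    print(flag_delta_anomalies(round_deltas))
    tracker = ClientTracker()
    print([tracker.update(f) for f in [True, True, True]])
```

Resetting the streak on a clean round means an isolated false positive does not accumulate toward removal. An attacker that poisons only in alternating rounds could exploit the same reset, which is presumably why the method examines trajectory trends rather than single rounds.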

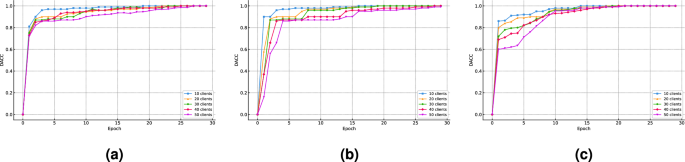

We studied different numbers of clients at a poisoning rate of 10% on the MNIST, FashionMNIST, and CIFAR-10 datasets. The comparative results are shown in Fig. 5. The number of clients has little impact on the filtering accuracy of our method, which indicates that it remains effective at screening out malicious clients.

DACC on the MNIST, FashionMNIST, and CIFAR-10 datasets with a 0.1 poisoning ratio. (a) DACC on the MNIST dataset with a 0.1 poisoning ratio. (b) DACC on the FashionMNIST dataset with a 0.1 poisoning ratio. (c) DACC on the CIFAR-10 dataset with a 0.1 poisoning ratio.

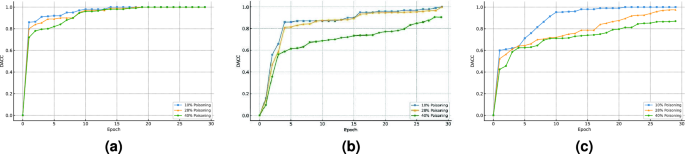

We also studied 50 clients on the MNIST, FashionMNIST, and CIFAR-10 datasets with varying poisoning rates; the results are shown in Fig. 6. The figure indicates that the proportion of malicious clients in the client pool has a significant impact on the filtering accuracy of our method: the lower the proportion of malicious clients, the higher the filtering accuracy. The figure also shows that our method achieves better filtering accuracy on the MNIST dataset than on the other two datasets.

DACC on the MNIST, FashionMNIST, and CIFAR-10 datasets with 50 clients. (a) DACC on the MNIST dataset with 50 clients. (b) DACC on the FashionMNIST dataset with 50 clients. (c) DACC on the CIFAR-10 dataset with 50 clients.

For the poisoning attacks, the proportion of malicious clients is set to 0.1, 0.28, and 0.4, respectively. The dataset is evenly distributed among 10 users (later increased to 50 for realism). To compensate for the low substitution rate, the training process is lengthened. Federated learning runs for 50 global epochs, with all clients initially participating. Poisoning clients are determined after 5 local training epochs start. On the MNIST dataset, with the proportion of poisoning clients set to 0.1, the verification accuracy after 5 epochs is 94.3%. Real-time client detection is conducted throughout the federated learning process until all epochs are complete.

Experimental analysis

Model poisoning attacks are an important threat to federated learning, and the best way to defend against them is still under discussion. In this paper, we propose a poisoning attack detection method based on trajectory anomaly identification using matrix singular values. Through comparative experiments, we find that the proposed method can effectively defend against poisoning attacks and outperforms VAE (Ref. 34) and FLDetector (Ref. 35) in most cases. To evaluate our method, we apply it and the baseline methods to data produced by the poisoning methods described above at different poisoning percentages, and compare the results. The performance of the algorithms is compared on the metrics shown in Table 1.

Table 1 presents a comparison of our method with other approaches, with evaluation metrics including Detection Accuracy (DACC) and False Positive Rate (FPR). DACC represents the proportion of correctly identified poisoned clients, while FPR indicates the proportion of benign clients wrongly classified as poisoned clients.
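Because the poisoning nodes are set by hand, both metrics can be computed directly from the ground-truth and flagged client sets. The sketch below takes DACC as the fraction of poisoned clients that were flagged, and FPR in its usual sense: the fraction of benign clients that were flagged, which matches the misclassification rate defined earlier. The function name `detection_metrics` and the client-ID representation are assumptions for illustration.

```python
from typing import Iterable, Tuple


def detection_metrics(all_clients: Iterable[int],
                      poisoned: Iterable[int],
                      flagged: Iterable[int]) -> Tuple[float, float]:
    """Return (DACC, FPR) for one detection run.

    DACC = flagged poisoned clients / all poisoned clients
    FPR  = flagged benign clients   / all benign clients
    """
    all_set = set(all_clients)
    poisoned_set = set(poisoned) & all_set
    flagged_set = set(flagged) & all_set
    benign_set = all_set - poisoned_set

    true_pos = len(poisoned_set & flagged_set)
    false_pos = len(benign_set & flagged_set)

    dacc = true_pos / len(poisoned_set) if poisoned_set else 0.0
    fpr = false_pos / len(benign_set) if benign_set else 0.0
    return dacc, fpr


if __name__ == "__main__":
    # 10 clients, clients 0 and 1 are poisoned, detector flags 0 and 5.
    print(detection_metrics(range(10), poisoned={0, 1}, flagged={0, 5}))
```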

From the table, our method outperforms the other two approaches in both filtering accuracy and false positive rate on the MNIST and FashionMNIST datasets. On the CIFAR-10 dataset, however, it falls behind the other two approaches.

Performance and scalability

This anomaly detection method is applicable across a range of domains including smart homes, smart cities, healthcare, and industrial automation. By identifying malicious behaviors, it ensures the security and stability of federated learning systems. To protect client data privacy, our anomaly detection mechanism avoids direct access to the original data by analyzing features of model updates. Specifically, key features of model updates are extracted using matrix SVD and combined with the Isolation Forest algorithm for anomaly detection, thereby preventing direct exposure of client data. Our method performs effectively in large-scale and diverse federated learning environments, accommodating data with various features and enhancing system security in a range of IoT scenarios.

Algorithm Performance: Client numbers minimally affected detection results, highlighting algorithmic robustness across scales. This stability stems from balanced client data contributions to global model training, essential for real-world mobile federated learning scenarios.

Scalability: Our method remains effective when up to 40% of the nodes are malicious, which shows its robustness against interference. It adapts to varying proportions of malicious nodes while maintaining the effectiveness of federated learning. However, we acknowledge that in extreme cases, such as when half of the nodes are malicious, existing methods may face challenges. For poisoning attacks carried out by a large number of colluding clients, the current anomaly detection mechanism may struggle to identify the attackers. Additional defense strategies may therefore be required to enhance federated learning security, and these will be the focus of our future research.
