An energy aware Q-learning framework for comprehensive coverage path planning in unknown complex environments

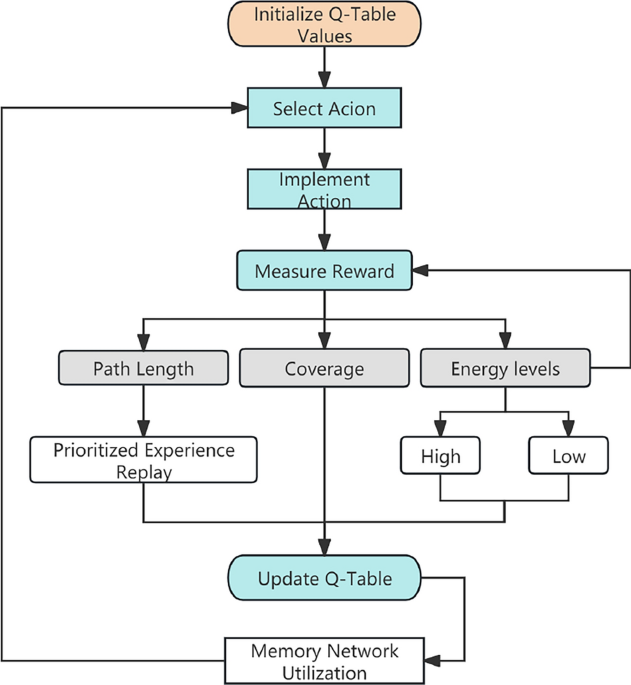

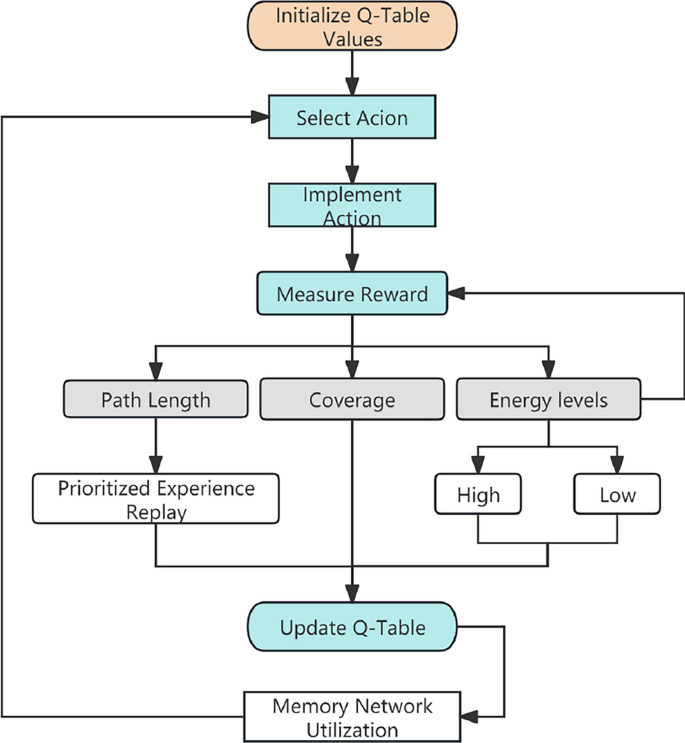

To enable full-coverage exploration in autonomous robotic systems operating in complex unknown environments, this paper proposes PERM-QN, a novel reinforcement learning approach that leverages Q-learning enhanced with prioritized experience replay and a memory network for efficient navigation and path optimization. By integrating Q-learning and memory network mechanisms, the proposed approach enables robots to achieve efficient path planning and exploration capabilities in complex unknown dynamic environments. Furthermore, the methodology incorporates multi-objective optimization considerations, specifically addressing coverage efficiency, energy consumption, and path length minimization. In essence, Fig. 1 shows the overall system architecture and the entire operational flow in this paper. The PERM-QN algorithm tackles the challenge of enabling autonomous robots to fully explore and efficiently navigate unknown complex post-disaster environments. The process begins with the initialization of a Q-table, which serves as a value function, estimating the long-term reward for each state-action pair. The robot then enters a cycle where it selects an action based on its current state and the Q-table, often employing exploration-exploitation strategies. This action is then implemented in the environment.

The robot receives a reward that reflects the effectiveness of its action with respect to several key objectives: minimizing path length, maximizing coverage of the environment, and managing energy levels (indicated as High or Low cost). These factors collectively shape the learning signal. The resulting experience (current state, chosen action, received reward, and the subsequent next state) is stored, and a Prioritized Experience Replay mechanism strategically samples these memories, prioritizing significant transitions to accelerate learning. Simultaneously, a Memory Network is utilized to store and retrieve information about the explored environment, enhancing the robot’s ability to make informed decisions in complex or partially observable scenarios.

The received reward and the information from the memory network are then used to update the Q-table, refining the estimated values of state-action pairs. This update rule forms the core of the Q-learning process. The updated Q-table, along with the insights from the memory network, guides the robot’s future action selection, creating a feedback loop that enables the autonomous system to progressively learn an optimal policy for comprehensive exploration and efficient navigation within the unknown grid world.

Robot exploration using Q-learning informed by path length, coverage, and energy levels, with prioritized experience replay and memory network.

Environment modeling

Grid environment

This research uses Matlab and its RL toolbox to model and simulate the entire algorithm. The robotic exploration scenario is emulated through a discrete grid-based representation of the environment. This grid environment is modeled as a two-dimensional discrete state space, where each cell represents a potential location for robot movement or exploration. To simulate autonomous exploration of completely unknown scenes, the initial state of all cells within the grid map is designated as the “unknown” state, which implies that the robot can only gradually reveal the unknown area through continuous movement and sensor acquisition. Within this simulated environment, the location and quantity of obstacles are randomly generated. The robotic agent learns the layout of the obstacle in the environment solely through exploratory actions, specifically by attempting to navigate to the target locations and detecting the presence or absence of obstacles in those locations.

Environment for simulation: (Left) Grid-based occupancy, (Right) Continuous space analogy.

As shown in Fig. 2, the left panel illustrates the obstacle configuration generated using MATLAB, while the right panel presents a schematic representation of the simulated post-disaster environment derived therefrom. This simulation is designed to mimic the complexities and uncertainties encountered in real-world post-disaster scenarios, including randomly distributed obstacles and initially unknown terrain. Through sufficient number of simulation training episodes, the robotic agent is enabled to learn effective navigation strategies for diverse obstacle shapes and dynamic spatial arrangements. This learning process enhances the robot’s capacity to effectively operate in completely unknown post-disaster environments.

Robot capabilities

In this research, the robot’s operation space is discretized into four cardinal directions of motion: forward (up), backward (down), leftward and rightward. The robot’s energy autonomy is simulated by imposing a finite limit on the total number of permissible movement steps. At the same time, the robot’s perceptual capabilities are modeled to emulate the function of onboard sensors in actual exploration. Within the scope of this study, the robot’s perception is restricted to the immediately adjacent cell in its forward direction, as shown in Fig. 2.

Energy-aware Q-learning framework

The core of the algorithm in this research is to use the Q-learning algorithm to acquire an optimal strategy for achieving full coverage search of the robot within a completely unknown environment. This is primarily accomplished through iterative updates to the Q-value table and subsequent strategic decision-making based on the learned values. The Q-value update rule is formally defined by Eq. 1.

$$\begin{aligned} \begin{aligned} Q(s,a) \leftarrow Q(s,a) + \alpha \big [\,&r + \gamma \max _{a’} Q(s’,a’)- Q(s,a) \,\big ] \end{aligned} \end{aligned}$$

(1)

where Q(s, a) is the Q-value associated with selecting action a under current state s; \(\alpha\) denotes the learning rate, which governs the step size of the Q-value update; r signifies the instant reward; \(\gamma\) is the discount factor, which balances the influence of immediate rewards and long-term rewards; and \(\max _{a’} Q(s’, a’)\) represents the maximum Q-value attainable in the subsequent state \(s’\), characterizing the optimal long-term reward.

Reward function

The reward function is a critical component of RL, directly affecting the direction of learning and the efficiency of the agent. Traditional reward functions, often based on a fixed, single-objective design, lack the adaptability and flexibility needed for dynamic environments. Consequently, they may be suitable for simple scenarios, but exhibit significant limitations when applied to the complexities of our setting. In our approach, the design of the reward function incorporates a multi-objective optimization framework. By introducing dynamic weights, non-linear functions, and path weights, we propose a novel reward mechanism that better addresses the needs of robots operating in complex environments. The reward function is mainly composed of the three components: coverage reward, energy reward, and path length reward.

Coverage rewards

The first component is the coverage reward, designed to encourage the robot to cover as much of the unexplored area as possible while discouraging revisiting previously explored region. The coverage reward function is determined by Eq. 2.

$$\begin{aligned} \begin{aligned} R_{\text {coverage}} = \text {DecayFactor}^{\text {StepCount}} \times \left( 1 + \sin \left( {\pi \times \text {StepCount}}\right) /{T} \right) \end{aligned} \end{aligned}$$

(2)

In Eq. 2, StepCount denotes the number of steps elapsed within the current episode, while the parameter T defines the periodicity of the sinusoidal modulation. This sinusoidal term is strategically introduced to act as a dynamic exploration bonus or reward shaping component within the coverage incentive. It serves as a soft perturbation mechanism that periodically amplifies or attenuates the agent’s immediate incentive to explore, thereby actively promoting behavioral diversity. This controlled, oscillatory behavior is particularly crucial during the mid-stage of exploration, where agents commonly encounter local loops, repetitive transitions, or premature stagnation in grid-based environments. By systematically varying the reward landscape, the sinusoidal modulation encourages the agent to re-evaluate its current local strategies and explore alternative, potentially more fruitful paths. The use of a sinusoidal function, specifically, ensures a smooth and bounded oscillation of this incentive, introducing controlled variability without destabilizing the learning process. This ultimately improves coverage completeness and effectively mitigates the aforementioned stagnation issues, facilitating a more thorough sampling of the environment.

The typical range of T values employed in our experiments, summarized in Table 1, was empirically selected to align with the expected duration of this critical mid-stage exploration phase, ensuring optimal perturbation during the period when it is most beneficial for policy optimization.

Path length reward

The second component of the reward function is the path length reward, quantified by Eq. 3.

$$\begin{aligned} \begin{aligned} R_{\text {path\_length}} = \text {PathLength} \times ( 1 +&\; \text {StepCount} \big / (K + \text {StepCount}) ) \end{aligned} \end{aligned}$$

(3)

where K is a regulatory factor that modulates the influence of step count on the penalty term.

Energy reward

In realistic post-disaster exploration scenarios, the robot energy status of the robot is also a critical consideration for rescue operations. Complex and unknown irregular terrains often lead the robot to engage in indiscriminate exploration of hard-to-reach regions, increasing the risk of energy depletion and potential mission failure. To address this, we introduce a novel energy-aware reward mechanism that adaptively modulates the robot’s exploration strategy according to its remaining energy, thereby encouraging extensive exploration when energy is sufficient to maximize coverage of unknown areas. Conversely, under low-energy conditions, exploration tends to be conservative, mitigating high-risk, long-distance exploration, and preserving energy for the robot’s return. The energy reward formula is defined in Eq. (4)

$$\begin{aligned} R_{\text {energy}} = {\left\{ \begin{array}{ll} \exp \left[ -3(1 – P_{\text {battery}})\right] + \lambda _1 \cdot P_{\text {battery}}^2, \text {if } P_{\text {battery}} \ge n, \\ \exp \left[ -3(1 – P_{\text {battery}})\right] – \lambda _2 \cdot (1 – P_{\text {battery}})^3, \text {if } P_{\text {battery}} < n. \end{array}\right. } \end{aligned}$$

(4)

where \(P_{\text {battery}}\) represents the percentage of remaining energy. Additionally, \(\lambda _1\) and \(\lambda _2\) are coefficients used to dynamically adjust the energy reward and penalty amplitude, respectively, which are determined by the following equations.

$$\begin{aligned} \lambda _1 = a_1 \cdot P_{\text {battery}} + b_1 \end{aligned}$$

(5)

$$\begin{aligned} \lambda _2 = c_2 \Big / \left( 1 + \exp \left[ -k \cdot (P_{\text {battery}} – n) \right] \right) \end{aligned}$$

(6)

where \(a_1\),\(b_1\) and \(c_2\) are constant. In our framework, n is primarily conceived as a configurable safeguard to ensure the robot retains sufficient power to reliably return to its base station without becoming lost or stranded within the exploration map. Rather than being derived from a formal theoretical model with a fixed value, n serves as a critical parameter that balances two competing objectives: maximizing coverage efficiency through deeper exploration, and minimizing the inherent risk of energy depletion before a successful return. A smaller value for n permits more aggressive and extensive exploration, but inherently increases the risk of the robot running out of energy. Conversely, a larger value prioritizes safety by ensuring greater energy reserves, though this may consequently limit the overall exploration range. This design intentionally offers flexibility, allowing for robust deployment in diverse environments where the desired trade-off between exploration aggressiveness and energy security can vary based on specific mission requirements and environmental characteristics. Through extensive simulation testing across a wide spectrum of obstacle densities and map sizes, we have empirically validated that setting n within a reasonable, empirically determined range consistently enables reliable return behavior without degrading overall coverage performance.

Conceptual diagram of the robot’s exploration performance within an unknown environment. a and b Performance without energy optimization, and c and d Performance with energy optimization.

Figure 3 illustrates the influence of energy optimization on robot exploration behavior at different energy levels. In each subfigure, the yellow star denotes the starting point, the green triangle marks the transition from high to low energy level, and the blue diamond represents the final position of the robot. The background shading indicates the spatial attractiveness of unexplored areas, where a darker blue corresponds to a higher attraction. In high-energy scenarios (Fig. 3a and c), the use of the segmented reward function enhances the attraction of remote unexplored regions, encouraging the robot to fully explore the map. This leads to more complete coverage and improved exploration efficiency. Notably, both the baseline and the energy-optimized strategies perform similarly under sufficient energy, as the robot has enough energy to explore and return. However, when the energy level drops to a critical threshold (Fig. 3b and d), the effect of energy optimization becomes more pronounced. Without energy-aware regulation (Fig. 3b), the robot continues to travel toward distant attractive areas, resulting in excessive energy consumption that could potentially jeopardize its return capability. In contrast, the energy-optimized strategy (Fig. 3d) dampens the attraction of distant targets in low-energy states. This prompts the robot to explore more cautiously, limiting needless motion and saving enough energy for the return journey.

By combining the above three reward functions and the weight coefficients, the overall reward function of our algorithm can be formulated as denoted in Eq. (7).

$$\begin{aligned} R_{\text {total}} = w_1 R_{\text {coverage}} + w_2 R_{\text {energy}} + w_3 R_{\text {path\_length}} \end{aligned}$$

(7)

In Eq. 7, the coefficients \(w_1\), \(w_2\) and \(w_3\) serve to dynamically balance the three reward components: coverage incentive, energy-aware exploration, and path efficiency. These weights are formulated as adaptive functions of the robot’s battery level and exploration progress, allowing the agent to prioritize different behaviors at different stages of the mission. The effective ranges and modulation characteristics of each weight are summarized in Table 2.

$$\begin{aligned} w_1&= 1 \Big / \left( 1 + \exp \left[ -k_1 \cdot (P_{\text {battery}} – n) \right] + \eta _1 \right) , \end{aligned}$$

(8)

$$\begin{aligned} w_2&= k_2 \Big / \left( 1 + \exp \left[ -P_{\text {battery}} \right] + \eta _2 \right) , \end{aligned}$$

(9)

$$\begin{aligned} w_3&= \gamma \Big / \left( P_{\text {battery}} + \epsilon + \eta _3 \right) \end{aligned}$$

(10)

where \(\eta _1\), \(\eta _2\), and \(\eta _3\) are constant offsets used to ensure that the weights have a minimum value in some states. The pseudo-code for Q-learning is outlined in Algorithm 1.

This enhanced Q-learning algorithm aims to balance multiple objectives dynamically based on an agent’s battery level. It initializes a Q-table and learning parameters. For each episode, the environment resets, and the agent selects actions using an epsilon-greedy policy. After taking an action, it observes reward components (coverage, energy, path length), which are weighted based on the current battery level to calculate a total reward. The Q-table is then updated using this reward and the estimated future rewards. The agent’s state is updated, and the exploration rate gradually decreases over episodes, allowing the agent to learn an efficient and energy-aware policy.

Enhanced Q-Learning with Dynamic Multi-Objective Rewards

Priority experience replay and memory network utilization

Priority experience replay

In the proposed algorithm, the robot operates within an initially unknown and dynamic grid environment, where a comprehensive exploration is required for effective decision-making. However, inherent complexity, environmental stochasticity, and sparse feedback often lead to instability during training and a tendency to converge prematurely to suboptimal policies. Furthermore, the non-stationary nature of the environment exacerbated by the agent continually updating its policy based on data sampled from its current strategy, causing the target distribution to drift over time, undermining learning stability.

To mitigate these challenges and support the multi-objective nature of the task (e.g., maximizing coverage, minimizing path length, and maintaining sufficient energy), we incorporate a prioritized experience replay (PER) mechanism into the learning framework. Unlike conventional uniform sampling, PER ranks transitions based on their temporal difference error and exploration utility (e.g., coverage efficiency, path reward), ensuring that high-impact experiences are sampled more frequently. This prioritization facilitates more efficient policy refinement, accelerates adaptation to novel environmental configurations, and enhances learning robustness-especially in the presence of sparse or delayed rewards. The basic formula is shown in Eq. (11).

$$\begin{aligned} \begin{aligned} P_i =\;&(E_i + R_{\text {multi}, i}) \cdot \text {TimeDecayFactor}^{\text {StepCount}_i} + \epsilon \end{aligned} \end{aligned}$$

(11)

where E is the exploration efficiency while \(R_{\text {multi}, i}\) is the multi-step rewards.

$$\begin{aligned} E = \text {C} \big / \text {PathLength}, \quad R_{\text {multi}} = \sum _{t=0}^{N} \gamma ^t R_t \end{aligned}$$

(12)

where C is the explored area of map, \(R_t\) is the immediate reward in step t, \(\gamma \in [0, 1]\) is the discount factor that controls the weight of future rewards and N is the number of future steps considered.

The priority selection probability formula is shown in Eq. (13).

$$\begin{aligned} \mathbb {P}_i = \text {Priority}_i \big / \sum _j \text {Priority}_j \end{aligned}$$

(13)

The probabilistic selection mechanism ensures that experiences exhibiting higher exploration efficiency are assigned a greater probability of being sampled from the priority replay buffer, prioritizing the repeated learning of key experiences. Simultaneously, the formulation inherently prevents the complete exclusion of low-priority experiences, thereby maintaining a degree of diversity within the experience pool. Therefore, the formula for updating the Q value after combined with replay of the experience is given in Eq. (14).

$$\begin{aligned} Q(s, a)\leftarrow & Q(s, a) + \alpha \cdot \mathbb {P}_i \cdot \left[ r + \gamma \max _{a’} Q(s’, a’) – Q(s, a) \right] \end{aligned}$$

(14)

Integrating the prioritized experience assignment with the multi-step reward framework, the proposed algorithm dynamically balances the imperatives of immediate exploration efficiency with the pursuit of long-term global optimization. This synergy enables the achievement of a rapid and efficient full coverage search strategy. The procedural outline of the algorithm is presented in Algorithm 2.

The Priority Replay Buffer algorithm enhances experience replay by prioritizing significant transitions. It initializes an empty buffer and, for each new experience, calculates its priority based on exploration efficiency and multi-step reward. This experience, containing state, action, reward, next state, done flag, and priority, is then added to the buffer. If the buffer is full, the lowest-priority experience is replaced if the new one has a higher priority, ensuring the buffer retains more valuable data.

When sampling experiences for training, the algorithm calculates sampling probabilities proportional to each experience’s priority. This non-uniform sampling strategy ensures that higher-priority experiences are sampled more frequently, allowing the learning process to focus on the most informative transitions. By prioritizing the replay of important experiences, the algorithm aims to accelerate learning and improve the stability of reinforcement learning agents.

Memory network utilization

In addition to the inherent complexity of the environment and the challenges posed by multi-objective optimization, empirical observations across multiple experimental runs indicate that structural similarities often emerge among exploration trajectories. However, the robot initially lacks any knowledge of its surroundings in each episode, necessitating repeated exploratory efforts to gradually acquire an effective policy. This leads to a substantial amount of redundant exploration in complex and partially observable environments, especially during early-stage learning.

To address this inefficiency, we introduce a memory network module to complement the prioritized experience replay mechanism. This module retains high-utility historical exploration trajectories from previous episodes and enables the agent to perform memory-guided recall during online decision-making. By retrieving structurally similar past paths and attempting local state-level matching, the agent can reuse previously successful behaviors in analogous substructures. This strategy improves sample efficiency, especially during early-stage exploration, and promotes generalization across common layout patterns. A schematic overview of this mechanism is shown in Fig. 4.

Figure 4 illustrates how the memory network module operates alongside the prioritized experience replay. The central grid represents the agent’s memory (M), storing several historical exploration trajectories (Trajectory 1, Trajectory 2, Trajectory 3) within different encountered environmental configurations. These trajectories, depicted by solid and dashed lines, represent sequences of states and actions that have previously yielded high utility, such as efficient exploration or reaching a goal. When the agent encounters a new environmental configuration (implied by the current state), the memory network employs a trajectory similarity-based retrieval mechanism. This process compares the current situation with the stored trajectories to identify those that closely match the present environmental structure, potentially based on the arrangement of obstacles or other relevant features.

The arrows pointing from the central memory grid to “Example 1,” “Example 2,” and “Example 3” illustrate this retrieval process. Each example shows a recalled segment of a past trajectory (blue dashed line), overlaid on a slightly different but structurally similar current configuration. Once a high-similarity trajectory is retrieved, the agent attempts to match its current state to locally relevant segments of that trajectory. If such a state is found and its successor is both valid and unexplored, the agent executes a short one-step reference action. This matching process is designed to be lightweight and opportunistic, allowing the agent to reuse partial strategies without requiring full trajectory reproduction or exact environmental alignment.

Schematic illustration of the trajectory similarity-based retrieval mechanism used to identify and utilize structurally similar paths from memory.

Trajectory similarity is assessed using a Euclidean distance-based metric (Eq. 15), which quantifies global structural similarity between stored paths and the current state context. This enables coarse retrieval of relevant trajectories without requiring exact environment identity. Execution, however, relies on a local match condition—only if a state \(s_t\) exactly matches a state \(s_j^i\) from a retrieved trajectory \(\tau _i\), and the successor state \(s_{j+1}^i\) is both valid and unexplored, does the agent adopt a short-term one-step guidance action. This layered retrieval-and-matching mechanism provides a lightweight form of episodic recall that remains compatible with the Q-learning policy.

$$\begin{aligned} d(s_{\text {current}}, s_{\text {memory}}) = \sqrt{\sum _{i=1}^{n} \left( s_{\text {current}}^{(i)} – s_{\text {memory}}^{(i)} \right) ^2} \end{aligned}$$

(15)

In this equation, \(s_{\text {current}}\) denotes the agent’s current state vector, while \(s_{\text {memory}}\) refers to a previously visited state stored in the memory network. The term n represents the dimensionality of the state space.

To ensure high-quality memory contents, we also implement a dynamic storage policy based on weighted utility scoring. New trajectories are assigned a path weight based on three criteria: success frequency, energy efficiency, and trajectory compactness, as defined in Eq. 16. When memory capacity is reached, the lowest-weighted trajectory is replaced, ensuring retention of only the most transferable experiences.

$$\begin{aligned} \text {PathWeight} = \mu _1 \cdot R_{\text {success}} + \mu _2 \cdot E_{\text {efficiency}} + \mu _3 \cdot \frac{1}{\text {PathLength}} \end{aligned}$$

(16)

where \(R_{\text {success}}\) is the success rate of the path and \(\mu _1, \mu _2, \mu _3\) are adjustable parameters used to balance the weights of different factors, and their sum is equal to 1, ensuring balanced contributions of success rate, efficiency, and path length.

Memory-Guided Recall Strategy

Algorithm 3 succinctly summarizes our proposed memory recall logic, a mechanism crucial for enhancing exploration efficiency. This process begins with the retrieval of high-utility trajectories from the agent’s memory module. Specifically, although the Euclidean distance (Eq. 15) does not directly reflect topological features, it is employed as a rough but computationally efficient metric to retrieve historical trajectories whose spatial distribution is broadly similar to the current environment. This initial retrieval acts as a first-stage filter, aiming to identify candidate objects that are structurally similar in terms of spatial layout. On this basis, the reuse decision further introduces the matching of local states, where the agent iteratively searches for a precise “match” between its current state and a segment within these stored, high-weight trajectories. Upon finding such a match, the agent identifies the immediate successor state along that same high-utility trajectory. Provided this successor state is both valid (i.e., within the known free space) and currently unvisited, it is adopted as a one-step guidance goal. This two-layer approach enables the agent to benefit from relevant previous experience without relying on strict topological equivalence. This opportunistic recall is intentionally designed to activate only when the agent experiences indicators of local stagnation, such as a plateau in coverage improvement or the occurrence of repeated transitions in its exploration. This helps the agent effectively escape local loops or dead ends. Crucially, this guidance step does not override the agent’s learned Q-policy; the agent seamlessly reverts to normal learning immediately after executing this single recall action. This modular design facilitates the effective reuse of valuable prior knowledge for targeted short-term guidance while preserving the inherent flexibility and adaptability of the ongoing online learning process. To ensure that only high-quality paths are retained in memory, we incorporated a multi-factor path evaluation scheme (Eq. 16), which judiciously balances prior success rate, energy efficiency, and path compactness.

The exploration strategy in our framework extends beyond a rudimentary \(\epsilon\)-greedy approach, incorporating several sophisticated mechanisms to effectively address the challenges of redundant exploration and to facilitate a progressive design from local to global exploration in unknown environments, thereby ensuring both coverage completeness and efficiency. Firstly, our reward function is meticulously shaped with dynamically weighted components (Eq. 7), where the coefficients governing different reward terms are adaptively varied based on the agent’s real-time energy state and its overall exploration progress. This sophisticated weighting scheme enables the agent to prioritize comprehensive coverage in the early stages of an episode, gradually shifting its focus towards energy-aware efficiency and path optimization as exploration advances. This dynamic adjustment effectively orchestrates a staged progression, guiding the agent from intensive local exploration to a broader spatial coverage. Secondly, the memory network provides an additional, crucial layer of exploration enhancement. It enables the episodic recall of structurally relevant, high-utility paths encountered in prior experiences. This intelligent reuse of trajectories in similar sub-regions significantly reduces the need for redundant re-exploration, optimizing the search process without necessitating global map knowledge, which is typically unavailable in our assumed unknown environments. Finally, a dedicated local stagnation detection mechanism is implemented, which monitors for indicators such as a decay in the coverage rate or the occurrence of repetitive state transitions. Upon detecting such stagnation, this mechanism actively triggers short-term, memory-guided deviations. These dynamic responses are designed to immediately break local loops and effectively guide the agent out of local minima, prompting it to seek out and explore previously unvisited regions. Collectively, these integrated mechanisms form a hybrid exploration strategy that transcends the limitations of a purely \(\epsilon\)-greedy policy. This comprehensive structure intelligently balances random exploration, reward-driven motivation, and memory-guided adaptation, thereby ensuring robust coverage completeness and maximizing exploration efficiency in complex, unknown environments.

link