Enhanced exploration in reinforcement learning using graph neural network based intrinsic reward mechanism

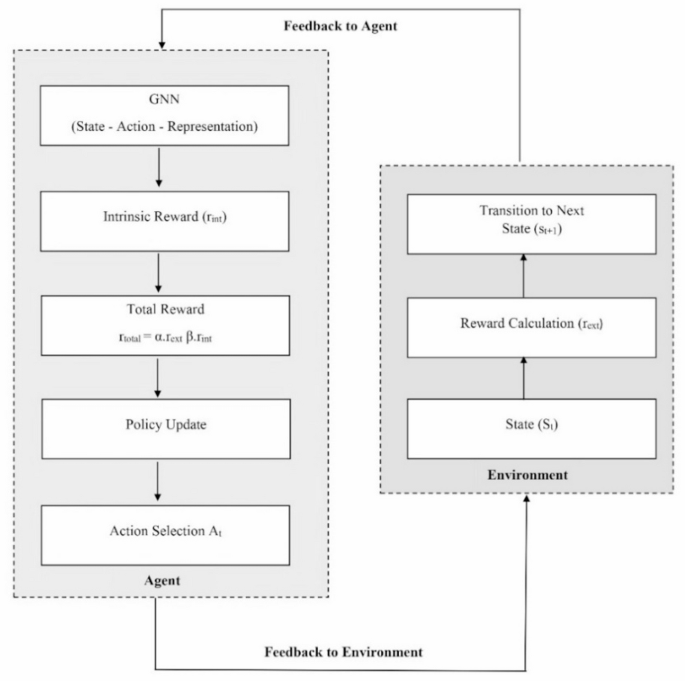

The proposed GNN-IRL exploration approach was implemented using the DQN algorithm to learn and generate a policy for action selection. Standard control environments, such as cartpole, mountain car, taxi, and lunar lander, were used to analyze the performance of the proposed GNN-IRL and baseline exploration strategies. The baseline exploration strategies used in this study were BiPaRS, Boltzmann exploration, count-based exploration, epsilon-greedy exploration, RND, entropy-based exploration, Thompson sampling exploration, and UCB exploration.

Experimental results

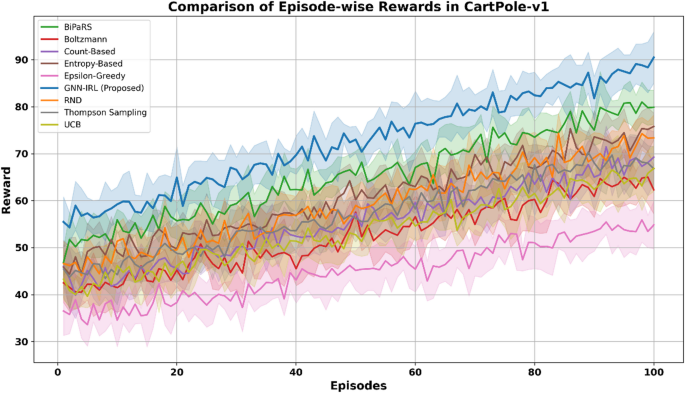

The episode-wise reward trajectories of the proposed GNN-IRL framework were compared with those of several state-of-the-art exploration strategies, including RND, BiPaRS, Boltzmann, Count-Based, Entropy-Based, Epsilon-Greedy, Thompson Sampling, and UCB, across four discrete-action benchmark environments: CartPole-v1, MountainCar-v0, Taxi-v3, and LunarLander-v3. The average episode-wise rewards over 10 independent runs were used to ensure a fair comparison and to mitigate the stochastic variance in individual trials. Both the mean reward and standard deviation were computed for each episode across the runs. A state discretization process was applied to facilitate the implementation of the GNN-IRL and enable graph construction from continuous or hybrid state spaces. In the CartPole-v1 environment, each dimension of the continuous state vector was discretized using 10 uniform bins per dimension, resulting in 10⁴ = 10,000 unique discrete states. Figure 4 illustrates the learning progression in CartPole-v1, with shaded areas representing ± 1 standard deviation, covering approximately 68% of the observed values to indicate variability. The GNN-IRL consistently outperformed the baseline methods, achieving faster convergence and higher final rewards, whereas strategies such as Epsilon-Greedy and Count-Based exhibited slower and noisier learning owing to less structured exploration.

Comparison of episode-wise rewards in CartPole-v1.

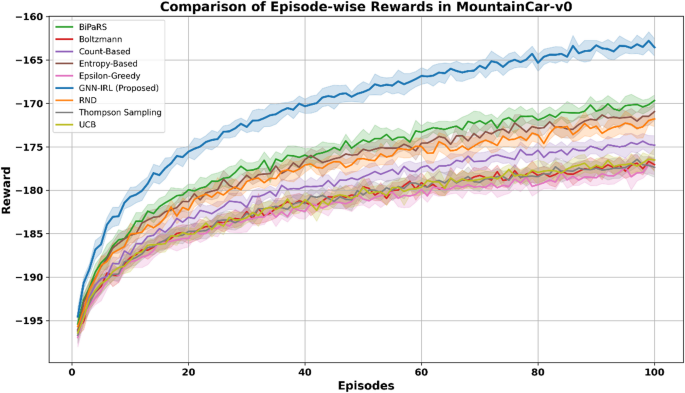

MountainCar-v0 uses 20 bins per dimension to capture finer motion and velocity resolution, producing 400 unique discrete states. These discrete states form the nodes in the environment graph, with edges representing state transitions owing to agent actions. Figure 5 presents the results for the MountainCar-v0 environment, which features sparse and delayed reward. GNN-IRL demonstrated superior performance by quickly discovering the optimal trajectory to reach the goal state. Its structured exploration, driven by graph-based intrinsic rewards, allows for more effective navigation of the state space compared to random or count-based methods, which often struggle with convergence in this domain.

Comparison of episode-wise rewards in MountainCar-v0.

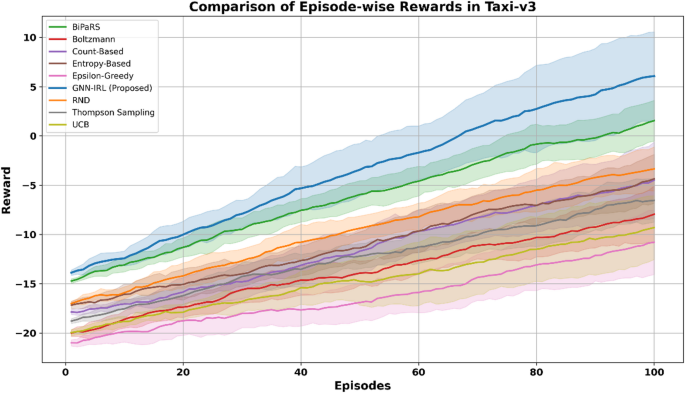

The Taxi-v3 environment is inherently discrete and grid-based, consisting of 500 distinct states defined by the position of the agent, passenger location, and the destination. Therefore, no additional discretization was required. The structured transitions of the environment allowed for straightforward graph construction, facilitating efficient GNN operations on the experience of the agent. The Taxi-v3 environment, characterized by a discrete grid layout with dynamic task-dependent transitions, such as varying passenger pickup and drop-off locations, presents a structured yet challenging exploration setting. As shown in Fig. 6, the proposed GNN-IRL framework achieved faster reward accumulation and a more stable learning curve than the baseline strategies. This improvement highlights the ability of the model to exploit topological information from the state transition graph of the environment, thereby enabling efficient navigation and consistent policy learning in discrete, goal-conditioned environments.

Comparison of episode-wise rewards in Taxi-v3.

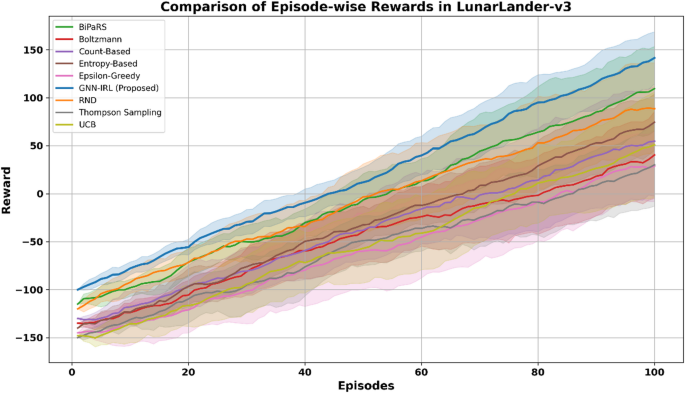

In LunarLander-v3, although the environment has continuous state components, a discretization scheme was applied by dividing each state dimension (e.g., position, velocity, angle, and leg contacts) into five bins depending on sensitivity to reward dynamics. This produced 5,000 distinct discrete states after removing unreachable combinations, enabling the GNN to compute intrinsic rewards based on the structural properties of the discretized environment graph. Figure 7 shows the performance in the LunarLander-v3 environment, which is characterized by stochastic dynamics and moderately sparse rewards. The GNN-IRL approach maintained a smooth and steady learning curve and attained higher cumulative rewards than all baseline techniques. Its ability to consistently identify and prioritize novel and informative states results in efficient policy development, even under uncertain transitions.

Comparison of episode-wise rewards in LunarLander-v3.

Overall, these results, averaged across multiple training runs, confirm the stability, scalability, and robustness of the GNN-IRL in diverse discrete-action environments. Structured discretization enables the effective construction of environment graphs, which, combined with intrinsic motivation based on graph metrics, allows GNN-IRL to significantly improve exploration efficiency and reward optimization across tasks.

Ablation study

An ablation study was conducted to evaluate the individual contributions of the intrinsic reward components to the proposed GNN-IRL framework. Specifically, this study examined three configurations: (i) centrality-based intrinsic rewards only, (ii) inverse degree–based rewards only, and (iii) a combined formulation using various α/β weight settings. The performance was measured using a cumulative reward metric. The cumulative reward measures the total reward accumulated over fixed episodes. The cumulative reward is computed using Eq. 11:

$$\:cumulative\:reward=\:\sum\:_\left^\left_\left(i\right)$$

(11)

Where E is the total number of episodes. The cumulative reward ranges from -∞ to +∞. A higher cumulative reward denotes a greater performance of the RL agents.

As presented in Table 5, the combined approaches consistently outperformed individual components across all benchmark environments. In particular, the equal weighting configuration (α = 0.5, β = 0.5) achieved the highest cumulative reward, highlighting the complementary nature of centrality and inverse degree metrics in driving effective exploration.

Table 6 presents an ablation study analyzing the impact of different weight configurations between intrinsic (x) and extrinsic (y) rewards in the GNN-IRL framework across the four benchmark environments. The results show that using only intrinsic or extrinsic rewards yields suboptimal performance. Equal contribution provides moderate improvements, whereas assigning a higher weight to intrinsic rewards (x = 0.7, y = 0.3) consistently leads to superior cumulative rewards in all environments. This highlights that placing more importance on intrinsic motivation enhances exploration and accelerates learning, especially in sparse or deceptive reward settings.

The two ablation studies collectively demonstrate the effectiveness of both the structural components and reward balancing strategy within the GNN-IRL framework. Overall, the findings confirm that leveraging a hybrid reward mechanism grounded in structural graph features and appropriately balanced with extrinsic feedback greatly improves the learning efficiency and agent performance across diverse environments.

Computational cost analysis

A comparative analysis of different discretization resolutions across benchmark environments highlights the inherent trade-off between the computational cost and cumulative reward. Table 7 presents the computational cost of GNN-IRL for various discretization configurations.

In CartPole-v1, increasing the number of bins from 5 to 10 significantly improved the performance, but further refinement to 20 bins yielded only marginal gains while causing a steep rise in the computational overhead. A similar trend was observed in MountainCar-v0, where the 20-bin configuration achieved the best balance, as finer discretization provided only slight improvements in the reward but at a substantial computational cost. For Taxi-v3, the environment is inherently discrete, eliminating the need for additional discretization while delivering strong results. In LunarLander-v3, increasing the number of bins from 3 to 5 markedly enhanced the cumulative rewards, but moving to 10 bins offered negligible additional benefits despite dramatically higher costs. Collectively, these findings confirm that the chosen discretization strategies—10 bins per dimension for CartPole-v1, 20 bins per dimension for MountainCar-v0, the natural grid for Taxi-v3, and five bins per dimension for LunarLander-v3—represent the optimal compromise, ensuring high rewards with manageable computational demands.

Performance analysis

The performance of the exploration techniques was assessed using standard performance metrics, such as convergence rate, convergence time, exploration efficiency, and state coverage. The average performance metric values of ten independent runs for each method were used for the comparison. The convergence rate represents the inverse of the number of episodes the RL agent takes to reach the threshold reward (\(\:_\left)\). The convergence rate is calculated using Eq. 12.

$$\:\textUnique\:States\:Explored\right\text\left\textUnique\:States\:Explored\right\text\text\text\left\text\text\left\text\left\text\left\text{e}\:\text{R}\text{a}\text{t}\text{e}=\:\frac{1}{{E}_{\text{t}\text{h}\text{r}\text{e}\text{s}\text{h}\text{o}\text{l}\text{d}}}$$

(12)

Where \(\:{E}_{\text{t}\text{h}\text{r}\text{e}\text{s}\text{h}\text{o}\text{l}\text{d}}\) is the episode taken for the agent to reach the \(\:{r}_{threshold}\). The convergence rate ranged from 0 to 1. A higher convergence rate indicates better performance.

The convergence time represents the total time (T) taken to reach the \(\:{r}_{threshold}\). Equation 13 is used to compute the convergence time of the exploration techniques.

$$\:Convergence\:Time\:\left(seconds\right)=\:T\left({r}_{threshold}\right)$$

(13)

The exploration efficiency indicates the number of unique states visited during the training of the RL agent. This refers to the percentage of episodes in which the agent successfully explores novel states. A higher exploration efficiency indicates that the RL agent explores more states during training. Equation 14 shows how the exploration efficiency is calculated.

$$\:\text{E}\text{x}\text{p}\text{l}\text{o}\text{r}\text{a}\text{t}\text{i}\text{o}\text{n}\:\text{e}\text{f}\text{f}\text{i}\text{c}\text{i}\text{e}\text{n}\text{c}\text{y}\:\left(\text{\%}\right)=\:\frac{\left|Unique\:States\:Explored\right|}{\left|Total\:States\:Visited\right|}\:\times\:100$$

(14)

Moreover, state coverage is the proportion of the environment explored during training. The state coverage of the RL agent was computed using Eq. 15:

$$\:\text{S}\text{t}\text{a}\text{t}\text{e}\:\text{c}\text{o}\text{v}\text{e}\text{r}\text{a}\text{g}\text{e}\:\left(\text{\%}\right)=\:\frac{\left|Unique\:States\:Explored\right|}{\left|Total\:States\:in\:Environment\right|}\:\times\:100$$

(15)

The comparison results of the proposed GNN-IRL and existing exploration techniques in the CartPole-v1 environment are presented in Table 8. The GNN-IRL framework demonstrated superior performance on all evaluation metrics. It achieved the highest convergence rate of 0.0089 and the lowest convergence time of 1344 s, indicating faster policy learning. Additionally, it obtained the highest cumulative reward of 1189 and state coverage of 76%, highlighting its effectiveness in balancing exploration and exploitation compared with the baseline methods.

Table 9 presents the results for the MountainCar-v0 environment. With 400 discrete states and a convergence threshold of 50, the proposed GNN-IRL method again demonstrated superior performance, reaching the convergence threshold in only 1469 s. It achieved a higher cumulative reward of -98, along with the highest exploration efficiency and state coverage among all the compared techniques. These results confirm the effectiveness of GNN-IRL in navigating sparse-reward continuous state space environments through graph-based intrinsic motivation.

Taxi-v3 is a grid-based environment with dynamic task-based transitions, which also benefits from the proposed approach. As shown in Table 10, the proposed GNN-IRL method achieved the highest cumulative reward of -9, the least convergence time of 1259 s, and the widest state coverage of 91%. These results highlight the ability of GNN-IRL to adaptively guide exploration in structured environments with sparse and delayed reward signals.

The GNN-IRL model achieved the best overall performance in the LunarLander-v3 environment, as presented in Table 11. It recorded the highest cumulative reward of 32 and led across all exploration metrics, including convergence rate, efficiency, and state coverage.

Table 12 also reports the standard deviations of the cumulative rewards from 10 independent trials. This demonstrates that GNN-IRL not only outperforms other techniques but also exhibits the highest consistency and stability across all environments.

The standard deviation values presented above demonstrate the robustness and consistency of the GNN-IRL technique in different environments. The GNN-IRL consistently exhibited the lowest standard deviation in the cumulative reward in all four environments, indicating higher training stability and more predictable performance. These results further reinforce that GNN-IRL not only achieves superior average rewards but also maintains a reliable learning behavior over multiple training runs.

The experimental results show that the proposed GNN-IRL achieves a superior convergence rate, convergence time, cumulative reward, exploration efficiency, and state coverage compared with existing techniques in sparse-reward and high-dimensional environments. The proposed GNN-IRL uses graph centrality and inverse degree to calculate intrinsic rewards. This technique prioritizes novel states and balances exploration and exploitation during the training process. This technique provides advantages such as systematic and adaptive exploration, scalability to high-dimensional environments, and interpretable reward design for RL agents.

Limitations of the proposed approach

Although the proposed GNN-IRL framework demonstrated superior performance across discrete-action environments, it has certain limitations. Its effectiveness depends on the quality of the constructed state-transition graph, and poorly structured or sparse graphs can reduce the informativeness of the intrinsic rewards. The computation of graph-based metrics at each step introduces additional overhead, which may affect the scalability of large or high-dimensional state spaces. The current formulation is designed for discrete or discretized states, limiting its direct applicability to continuous or hybrid environments, and maintaining the graph requires significant memory. Additionally, the performance can be sensitive to hyperparameters, such as discretization resolution and reward weighting. Despite these limitations, GNN-IRL provides a systematic and interpretable approach to exploration, with opportunities for future extensions to continuous actions and more efficient graph representation.

link