Robot movement planning for obstacle avoidance using reinforcement learning

Robot kinematic model

In this work, we employ a standard 6-degree-of-freedom industrial manipulator30 as our experimental platform. The kinematic model of the robot is derived using the standard Denavit–Hartenberg (DH) convention. According to this convention, each joint is assigned a coordinate frame, enabling the definition of spatial transformations between consecutive joints.

Using these DH parameters, we calculate the forward kinematics to determine the robot configuration. Each transformation matrix \({}^{i-1}\mathbf{T}{i}\) from joint \(i-1\) to joint i is defined as

$$\begin{aligned} {}^{i-1}\mathbf{T}_{i} = \begin{bmatrix} \cos \theta _i & \quad -\sin \theta _i \cos \alpha _i & \quad \sin \theta _i \sin \alpha _i & \quad a_i \cos \theta _i \\ \sin \theta _i & \quad \cos \theta _i \cos \alpha _i & \quad -\cos \theta _i \sin \alpha _i & \quad a_i \sin \theta _i \\ 0 & \quad \sin \alpha _i & \quad \cos \alpha _i & \quad d_i \\ 0 & \quad 0 & \quad 0 & \quad 1 \end{bmatrix}. \end{aligned}$$

(1)

The complete forward kinematic transformation from the base frame to the end-effector frame is obtained by multiplying these matrices sequentially:

$$\begin{aligned} {}^{0}\mathbf{T}{6} = \prod {i=1}^{6} {}^{i-1}\mathbf{T}_{i}. \end{aligned}$$

(2)

From \({}^{0}\mathbf{T}_{6}\), the end-effector position (x, y, z) is extracted and used for collision detection and distance computations in the obstacle avoidance algorithm. By incorporating forward kinematics directly into our methodology, we simplify computational requirements while ensuring accurate spatial data for our reinforcement learning framework.

Continuous obstacle avoidance with APF-DDPG framework

In this work, we propose an obstacle avoidance approach that integrates APF with DDPG reinforcement learning31. This integration addresses critical limitations of each method individually. APF methods often encounter local minima problems, particularly when attractive and repulsive forces oppose each other32. Pure DDPG methods, in contrast, typically require extensive exploration and lack direct spatial information, potentially slowing convergence and complicating navigation. The combined APF-DDPG framework thus leverages structured spatial information from APF and the robust exploratory learning ability of DDPG, facilitating efficient and reliable obstacle avoidance in continuous state-action scenarios.

Problem definition and state-action representation

We define the obstacle avoidance problem as a Markov Decision Process (MDP), described by a state space \(\mathscr {S}\), action space \(\mathscr {A}\), and a reward function R(s, a). The continuous state vector \(s \in \mathbb {R}^{9}\) comprises the robot s six joint angles \((\theta _1,\dots ,\theta _6)\) and the end-effector’s Cartesian coordinates (x, y, z) computed from forward kinematics. The action vector \(a \in \mathbb {R}^{6}\) specifies incremental joint angle adjustments within the interval of \([-0.5^\circ , 0.5^\circ ]\) per timestep, allowing smooth and controlled movements.

APF-DDPG framework

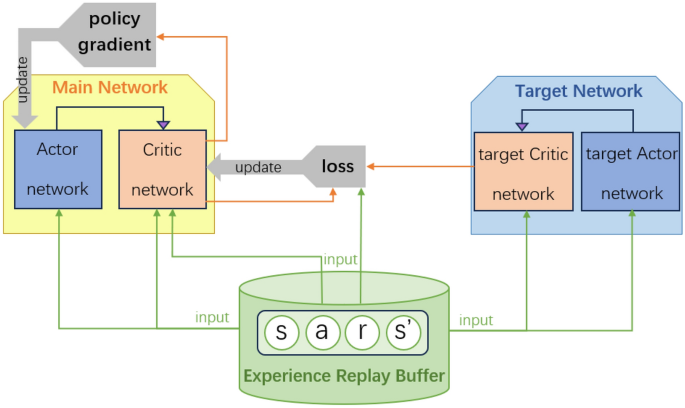

The APF-DDPG framework consists of actor and critic neural networks, their respective target networks, and an experience replay buffer for stable training, as illustrated in Fig. 1. At each timestep, the actor network outputs continuous incremental actions given the current state, which are then executed by the robot. The critic network evaluates state-action pairs by estimating the expected cumulative rewards (Q-values).

Integrated APF-DDPG framework for continuous obstacle avoidance. The main networks (actor and critic) use spatial information from APF directions to guide policy updates. Target networks stabilize training. Experiences \((s,a,r,s’)\) are sampled from the replay buffer to decorrelate training updates.

The networks are trained using mini-batches sampled from the replay buffer, where each stored experience tuple \((s,a,r,s’)\) captures interactions with the environment. To ensure stable training and smooth convergence, we follow32 and employ soft target network updates, defined by

$$\begin{aligned} \theta ‘ \leftarrow \tau \theta + (1 – \tau )\theta ‘, \quad 0 < \tau \ll 1, \end{aligned}$$

(3)

where \(\theta ‘\) are target network parameters, \(\theta\) are main network parameters, and \(\tau\) being the smoothing coefficient.

APF integration and compensation mechanism

We integrate APF into our DDPG framework to explicitly inform the actor network of desirable spatial directions. To do this, we first recall the standard artificial potential field definition10

$$U_{\textrm{att}}(q) = \tfrac{1}{2}\,k_{\textrm{att}}\bigl \Vert q – q_{\textrm{goal}}\bigr \Vert ^2, \qquad U_{\textrm{rep}}(q) = {\left\{ \begin{array}{ll} \tfrac{1}{2}\,k_{\textrm{rep}}\Bigl (\tfrac{1}{\rho (q)} – \tfrac{1}{\rho _0}\Bigr )^2, & \quad \rho (q)\le \rho _0,\\ 0, & \quad \rho (q)>\rho _0, \end{array}\right. }$$

where \(q\) is the end-effector position, \(q_{\textrm{goal}}\) the goal position, \(\rho (q)\) the distance to the nearest obstacle, and \(\rho _0\) its influence radius. Forces arise as the negative gradients of these potentials:

$$F_a(q) = -\nabla U_{\textrm{att}}(q), \qquad F_r(q) = -\nabla U_{\textrm{rep}}(q).$$

The net direction of the APF is then \(F_\textrm{net} = F_a + F_r\). However, when \(F_a\) and \(F_r\) directly oppose each other, the robot can become trapped in a local minimum (\(\Vert F_\textrm{net}\Vert \approx 0\)).

To systematically escape such traps, drawing inspiration from the classical BUG principle33, we add a small orthogonal perturbation. Specifically, we define

$$\begin{aligned} F_\perp \;=\; \frac{F_a \times F_r}{\bigl \Vert F_a \times F_r\bigr \Vert }, \qquad F_{\textrm{adjusted}} \;=\; F_a + F_r + \gamma \,F_\perp , \quad \gamma \ll 1. \end{aligned}$$

(4)

Because \(F_\perp\) is normal to the plane spanned by \(F_a\) and \(F_r\), this small perturbation guarantees movement off any planar saddle without significantly diverting the primary APF direction. The adjusted force \(F_{\textrm{adjusted}}\) is then provided to the actor network at each time step, biasing policy updates toward collision-free, goal-directed motion. The compensation mechanism is schematically illustrated in Fig. 2.

Illustration of perpendicular compensation in APF direction. When repulsive and attractive forces nearly oppose each other, a perpendicular correction is introduced, steering the robot away from local minima.

Reward function design

The reward function is carefully designed to balance obstacle avoidance and goal-oriented behavior by combining two components: proximity to the goal and directional alignment with APF guidance. Formally, we write

$$\begin{aligned} R(s,a) = -\lambda \,\textrm{distanceGE} + \mu \bigl (\cos (\sigma ) – 1\bigr ), \end{aligned}$$

(5)

where \(\textrm{distanceGE}\) is the Euclidean distance between the end-effector and the goal in Cartesian space, explicitly encouraging movements toward the goal. The term \(\cos (\sigma )\in [-1,1]\) measures the alignment between the executed displacement vector \(\overrightarrow{\textrm{EE}}\) and the APF-suggested vector \(\overrightarrow{\textrm{APF}}\), computed as

$$\begin{aligned} \cos (\sigma ) = \frac{\overrightarrow{\textrm{EE}}\cdot \overrightarrow{\textrm{APF}}}{\Vert \overrightarrow{\textrm{EE}}\Vert \;\Vert \overrightarrow{\textrm{APF}}\Vert }. \end{aligned}$$

(6)

We choose \(\mu >1\) so that \(\mu (\cos \sigma -1)\le 0\) with equality only when \(\cos \sigma =1\), ensuring every deviation from perfect alignment incurs a negative reward and thereby shaping the agent to follow efficient, APF-consistent motions. To incorporate safety constraints we distinguish collision scenarios by using separate distance-weighting parameters, defining

$$\begin{aligned} R(s,a)= {\left\{ \begin{array}{ll} -\lambda _{c}\,\textrm{distanceGE} + \mu (\cos (\sigma ) – 1), & \quad \text {if collision},\\ -\lambda _{nc}\,\textrm{distanceGE} + \mu (\cos (\sigma ) – 1), & \quad \text {otherwise}, \end{array}\right. } \end{aligned}$$

(7)

where \(\lambda _{c}>\lambda _{nc}\) is chosen based on the ratio between the workspace size and the robot arm length so that the distance penalty scales appropriately with the environment s spatial dimensions relative to the manipulator. We allow collisions during training rather than terminating episodes to promote extensive exploration of the state action space and to enable the agent to learn both avoidance and recovery strategies. Unlike pure APF methods that stall in local minima, our formulation maintains a negative distance term \(R_{\textrm{dist}} = -\lambda \,\textrm{distanceGE}\) until the goal is reached. We apply a per-step penalty to prevent hesitation or oscillation within shallow basins. We allow non-terminating collisions with a larger penalty \(\lambda _{c}\), encouraging risky detours and subsequent recovery to uncover escape pathways. We use the alignment term \(R_{\textrm{align}} = \mu (\cos \sigma – 1)\le 0\) to penalize moves that conflict with APF guidance. As a result, the agent converges on efficient, collision-free trajectories.

link