The proposed methodology for the IDS uses Deep Reinforcement Learning (DRL) to increase the accuracy of intrusion detection and its adaptability to dynamic networks. The method trains a DRL agent to inspect network traffic patterns and detect intrusions by continually learning from its experience with the network. The agent uses a reward-based system in which it receives positive feedback for correctly identifying intrusions and negative feedback for false positives or missed threats. Using a deep neural network architecture, the agent can handle complex, high-dimensional data, allowing it to identify known as well as unknown attack vectors. The proposed scheme and its SDN components are described in detail below.

Deep reinforcement learning-based intrusion detection scheme (DRL-IDS)

DRL-IDS is designed to address the challenges of detecting network anomalies and intrusions in dynamic and complex SDN environments. SDN relies on centralized control and programmability, which make it efficient but expose it to possible security vulnerabilities. DRL-IDS applies Deep Reinforcement Learning (DRL) to the analysis of network traffic, acting as an intelligent agent that autonomously learns from data patterns to detect malicious activities. This ability lets DRL-IDS adapt well to the changing nature of network threats. The advantage of DRL-IDS lies in its ability to learn and improve through continuous interaction with the network environment. Unlike traditional systems that rely on static rules or pre-trained models, DRL-IDS dynamically updates its detection strategies by optimizing its actions in a feedback loop. This adaptability helps the system handle both known attack patterns and novel or emerging threats: by generalizing beyond specific signatures, it becomes more robust against sophisticated or previously unseen intrusion techniques.

In addition, DRL-IDS incorporates state-of-the-art neural network architectures and optimization methods to improve detection performance. DRL helps the system make decisions based on long-term benefits, which helps reduce false positives and false negatives. It not only detects anomalies in real time but also continuously refines its detection model, making it a scalable and efficient solution for intrusion detection in SDN environments, where traffic patterns and security risks are highly dynamic. The Bellman equation in Eq. (1) is derived from the principle of optimality in dynamic programming, which states that the value of a state-action pair is the immediate reward plus the discounted future reward from the next state. In this equation, \(r_t\) is the reward for taking action \(a_t\) in state \(s_t\), and \(\gamma\) is the discount factor.

$$Q\left(s_t, a_t\right) = E\left[r_t + \gamma \max_{a'} Q\left(s_{t+1}, a'\right)\right]$$

(1)

In Eq. (2), policy-based DRL is used to maximize the expected cumulative reward \(J(\pi_\theta)\).

$$J(\pi_\theta) = E_{\pi_\theta}\left[R_t\right]$$

(2)

Eq. (3) gives the gradient of \(J(\pi_\theta)\) with respect to \(\theta\), obtained with the likelihood ratio trick; the reward acts as a weighting factor on the gradient of the log-probability.

$$\nabla_\theta J(\pi_\theta) = E_{\pi_\theta}\left[R_t \nabla_\theta \log \pi_\theta\left(a_t \mid s_t\right)\right]$$

(3)
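
As a minimal sketch of Eq. (3), the following NumPy code estimates the policy gradient from sampled transitions. The linear-softmax policy, the function names, and the array shapes are assumptions made for illustration; the text does not specify how the policy is parameterized.

```python
import numpy as np

def softmax(logits):
    # Subtract the maximum for numerical stability before exponentiating.
    e = np.exp(logits - np.max(logits))
    return e / e.sum()

def reinforce_gradient(theta, states, actions, returns):
    """Monte Carlo estimate of Eq. (3) for an assumed linear-softmax policy.

    theta:   (n_features, n_actions) policy parameters
    states:  (T, n_features) visited states s_t
    actions: length-T sequence of chosen action indices a_t
    returns: length-T sequence of returns R_t
    """
    grad = np.zeros_like(theta)
    for s, a, R in zip(states, actions, returns):
        probs = softmax(s @ theta)
        one_hot = np.zeros_like(probs)
        one_hot[a] = 1.0
        # For a linear-softmax policy, grad log pi(a|s) = outer(s, one_hot - probs).
        grad += R * np.outer(s, one_hot - probs)
    return grad / len(returns)
```

A gradient-ascent step on \(J\) would then add a small multiple of this estimate to `theta`.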

The loss function in Eq. (4) minimizes the difference between the predicted Q-values and the target value \(y_t\), which is defined in Eq. (5). The loss takes the form of the mean squared error (MSE) used in supervised learning.

$$\:L\left( \theta \right)=E\left[{\left(Q\left({s}_{t},{a}_{t};\theta \right)-{y}_{t}\right)}^{2}\right]$$

(4)

$$\:{y}_{t}={r}_{t}+\gamma \underset{{a^{\prime\:}}}{\text{max}}Q({s}_{t+1},{a}^{{\prime\:}}; \theta)\:$$

(5)
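
The target in Eq. (5) and the loss in Eq. (4) can be sketched for a batch of transitions as follows. The function names and the default discount factor are assumptions, and terminal-state handling is left out for brevity.

```python
import numpy as np

def td_targets(rewards, next_q_values, gamma=0.99):
    """Eq. (5): y_t = r_t + gamma * max_a' Q(s_{t+1}, a').

    rewards:       (batch,) immediate rewards r_t
    next_q_values: (batch, n_actions) Q-values predicted for s_{t+1}
    """
    return rewards + gamma * next_q_values.max(axis=1)

def mse_loss(q_predicted, targets):
    """Eq. (4): mean squared difference between Q(s_t, a_t) and y_t."""
    return float(np.mean((q_predicted - targets) ** 2))

rewards = np.array([1.0, -1.0])
next_q = np.array([[0.0, 2.0], [1.0, 0.0]])
targets = td_targets(rewards, next_q, gamma=0.5)   # [2.0, -0.5]
loss = mse_loss(np.array([1.0, 0.5]), targets)     # 1.0
```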

The reward function in Eq. (6) is designed to reinforce correct classification. It follows from the requirement to penalize misclassifications while rewarding correct classifications, in line with reinforcement learning.

$$r_t = \begin{cases} +1, & \text{if correct classification} \\ -1, & \text{if incorrect classification} \end{cases}$$

(6)

In Eq. (7), the weight update rule is used to minimize the loss \(L(\theta)\). It comes from differentiating \(L(\theta)\) with respect to \(\theta\) to find the direction of steepest descent, with \(\alpha\) as the learning rate.

$$\theta_{t+1} = \theta_t - \alpha \nabla_\theta L\left(\theta_t\right)$$

(7)

The joint cost function combines feature selection, time optimization, and detection accuracy as given in Eq. (8). The regularization terms \({\left\| w \right\|^2}\) and \({\left\| T \right\|^2}\) penalize overfitting for the weights and time variables, and \(\lambda_1\) and \(\lambda_2\) set their relative importance.

$$\:\text{C}\left(\text{w},\text{T}\right)={\lambda}_{1}{\left|\left|w\right|\right|}^{2}+{\lambda}_{2}{\left|\left|T\right|\right|}^{2}+{L}_{detection}\:$$

(8)
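
A direct reading of Eq. (8) is sketched below. The default values of `lam1` and `lam2` are placeholders, since the text gives no values for \(\lambda_1\) and \(\lambda_2\), and `detection_loss` stands for whatever value \(L_{detection}\) takes.

```python
import numpy as np

def joint_cost(w, T, detection_loss, lam1=0.01, lam2=0.01):
    """Eq. (8): C(w, T) = lam1 * ||w||^2 + lam2 * ||T||^2 + L_detection."""
    w = np.asarray(w, dtype=float)
    T = np.asarray(T, dtype=float)
    return lam1 * np.sum(w ** 2) + lam2 * np.sum(T ** 2) + detection_loss

# ||w||^2 = 25 and ||T||^2 = 5, so the cost is 0.1*25 + 0.5*5 + 1.0 = 6.0.
cost = joint_cost([3.0, 4.0], [1.0, 2.0], detection_loss=1.0, lam1=0.1, lam2=0.5)
```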

In Eq. (9), the softmax function assigns probabilities to actions based on their Q-values. It is derived by normalizing the exponential scores of the Q-values so that the probabilities sum to 1.

$$\pi\left(a_t \mid s_t\right) = \frac{\exp\left(Q\left(s_t, a_t\right)\right)}{\sum_{a'} \exp\left(Q\left(s_t, a'\right)\right)}$$

(9)
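
The softmax action selection of Eq. (9) might look as follows in NumPy. Subtracting the largest Q-value before exponentiating is a standard numerical-stability step that leaves the probabilities unchanged; the function names are illustrative.

```python
import numpy as np

def softmax_policy(q_values):
    """Eq. (9): turn the Q-values of one state into action probabilities."""
    q = np.asarray(q_values, dtype=float)
    e = np.exp(q - q.max())  # shifting by the max does not change the result
    return e / e.sum()

def sample_action(q_values, rng):
    """Draw an action index according to the softmax probabilities."""
    probs = softmax_policy(q_values)
    return int(rng.choice(len(probs), p=probs))

rng = np.random.default_rng(0)
action = sample_action([0.2, 1.5, -0.3], rng)
```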

Anomaly detection uses the z-score given in Eq. (10), where \(\mu\) and \(\sigma\) are the mean and standard deviation of normal traffic. This score measures how far a data point \(x\) deviates from the mean in units of standard deviations.

$$A(x) = \frac{\left\| x - \mu \right\|}{\sigma}$$

(10)
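
A small sketch of the score in Eq. (10) is given below. The decision threshold of 3 standard deviations is an assumption for illustration; the text does not state which cutoff is used.

```python
import numpy as np

def anomaly_score(x, mu, sigma):
    """Eq. (10): distance of x from the normal-traffic mean, in units of sigma."""
    diff = np.asarray(x, dtype=float) - np.asarray(mu, dtype=float)
    return float(np.linalg.norm(diff) / sigma)

def is_anomalous(x, mu, sigma, threshold=3.0):
    # threshold is a hypothetical cutoff, not a value taken from the paper
    return anomaly_score(x, mu, sigma) > threshold

score = anomaly_score([3.0, 4.0], [0.0, 0.0], sigma=2.5)  # 5 / 2.5 = 2.0
```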

In Eq. (11), the sigmoid function maps any real value \(z = w^T x + b\) to the range (0, 1).

$$y = \frac{1}{1 + \exp\left(-\left(w^T x + b\right)\right)}$$

(11)

The Particle Swarm Optimization (PSO) velocity and position update rules are given in Eqs. (12 and 13).

$$v_{i,j}\left(t+1\right) = w\, v_{i,j}\left(t\right) + c_1 r_1 \left(p_{i,j} - x_{i,j}\right) + c_2 r_2 \left(g_j - x_{i,j}\right)$$

(12)

$$\:{x}_{i,j}\left(t+1\right)={x}_{i,j}\left(t\right)+c{v}_{i,j}\left(t+1\right)$$

(13)

The inertia term \(w v_{i,j}(t)\) encourages exploration of the search space, \(c_1 r_1 \left(p_{i,j} - x_{i,j}\right)\) pulls particles toward their personal best, and \(c_2 r_2 \left(g_j - x_{i,j}\right)\) pulls particles toward the global best.
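
The update rules in Eqs. (12) and (13) can be sketched as one NumPy step plus a small driver loop. The coefficient values, the swarm size, and the sphere test function are assumptions chosen for illustration, and drawing \(r_1\) and \(r_2\) independently per dimension is one common convention rather than something the text specifies.

```python
import numpy as np

def pso_step(x, v, p_best, g_best, rng, w=0.7, c1=1.5, c2=1.5, c=1.0):
    """One velocity and position update following Eqs. (12) and (13)."""
    r1 = rng.random(x.shape)
    r2 = rng.random(x.shape)
    v_new = w * v + c1 * r1 * (p_best - x) + c2 * r2 * (g_best - x)
    x_new = x + c * v_new
    return x_new, v_new

def minimize(f, dim=2, n_particles=20, iters=100, seed=0):
    """Minimize f with a basic swarm; returns the best position and value."""
    rng = np.random.default_rng(seed)
    x = rng.uniform(-5.0, 5.0, (n_particles, dim))
    v = np.zeros_like(x)
    p_best = x.copy()
    p_val = np.apply_along_axis(f, 1, x)
    g_best = p_best[p_val.argmin()].copy()
    for _ in range(iters):
        x, v = pso_step(x, v, p_best, g_best, rng)
        val = np.apply_along_axis(f, 1, x)
        improved = val < p_val          # particles that beat their personal best
        p_best[improved] = x[improved]
        p_val[improved] = val[improved]
        g_best = p_best[p_val.argmin()].copy()
    return g_best, float(p_val.min())

best_x, best_val = minimize(lambda z: float(np.sum(z ** 2)))
```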

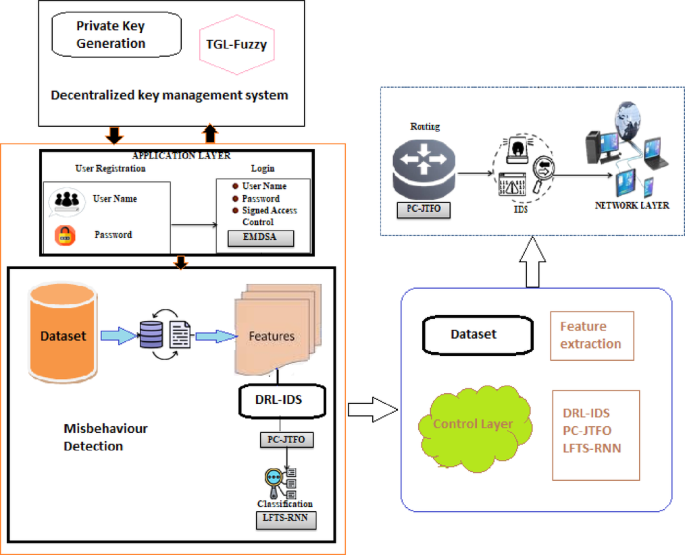

Software defined network

Software-Defined Networking (SDN) is a contemporary network paradigm with three separate planes: the application plane, the control plane, and the network plane, which together simplify network management. The proposed intrusion detection scheme in SDN identifies and classifies attackers into three categories: masqueraders, who are unauthorized outsiders accessing private information; misfeasors, who are insiders misusing their privileges, either through unauthorized access or through abuse of legitimate permissions; and clandestine users, who may be insiders or outsiders that seize supervisory control and abuse that authority. To ensure robust detection, an IDS runs on each SDN plane, improving security and reliability by tracking the threats specific to that plane.

User registration

In the proposed SDN framework, users register at the application layer with a combination of username and password. The registration process distinguishes rightful users from intruders, so that only authorized people gain access to the network. The total number of registered users (NR) is a function of the registered usernames (U) and their corresponding passwords (P), which means that unique user credentials are required. This scheme reinforces the first layer of security by building a strong, authenticated database of users.

The proposed model further enhances security through the incorporation of a Dynamic Key Management System (DKMS). The DKMS issues and binds a Secure Access Credential (SAC) and a private key to each legitimate user. The cryptographic components prevent an attacker from accessing the network even if the username-password combination is compromised, as the SAC and private key are required to access the network. This two-layered security strategy ensures a higher level of protection for the SDN environment.

User login

Once registered with a username, password, and SAC, users log in to the SDN environment. For every legitimate user, the DKMS issues a private key and SAC. To authenticate users at login, an enhanced Digital Signature Algorithm (DSA) is presented. The underlying DSA has a long history in data integrity and security; however, its main weaknesses are small key sizes and slow signature computation, which make it vulnerable to various attacks. The proposed EMDSA therefore combines DSA with the Entropy Makwa (EM) key stretching technique, which strengthens security and improves efficiency during verification.

The key generation phase in EMDSA creates a private-public key pair for each user. These keys are then strengthened with the EM key stretching technique, which enlarges the original keys for better security. The digital signature is derived from the private key and a hash value of the message, so that each signature is unique to that specific private key and message. During signature verification, the receiver derives a new hash value and checks it against the signature using the user's public key. If verification succeeds, the user is deemed legitimate and is granted access to the SDN; otherwise, the user is flagged as an intruder. This layered approach offers stronger authentication and protection against intrusions at access time.
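
The idea of key stretching can be illustrated with Python's standard library. This sketch uses PBKDF2-HMAC-SHA256 as a stand-in, since the Entropy Makwa construction is not specified in the text and is not available in the standard library; the function names, iteration count, and key length are assumptions.

```python
import hashlib
import hmac
import os

def stretch_key(secret: bytes, salt: bytes,
                iterations: int = 100_000, length: int = 32) -> bytes:
    """Derive a longer, harder-to-guess key from short key material.

    PBKDF2 is used here only as a stand-in for the EM key stretching step.
    """
    return hashlib.pbkdf2_hmac("sha256", secret, salt, iterations, dklen=length)

def keys_match(a: bytes, b: bytes) -> bool:
    # Constant-time comparison avoids leaking where two keys differ.
    return hmac.compare_digest(a, b)

salt = os.urandom(16)  # a fresh random salt per user
stretched = stretch_key(b"user-private-key-material", salt)
```

The same secret and salt always yield the same stretched key, so a verifier that stores the salt can recompute and compare it at login.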

Misbehaviour detection

Misbehavior detection in SDN consists of several critical phases: data acquisition, feature extraction, feature selection, and classification, which together identify legitimate users who misbehave. Data acquisition starts with collecting historical datasets from publicly available resources containing information about phishing attacks. This data serves as the basis for the model, giving insights into malicious user activities. Feature extraction then derives important behavioral features such as IP addresses, URLs, port addresses, DNS, and web traffic, which are used to classify user behavior and train a detection model. Feature selection uses PC-JTFOA, a modified Japanese Tree Frog optimization algorithm. PC-JTFOA improves the distribution uniformity of the selected features, which enhances classification accuracy and overall performance. The optimization process includes defining local solutions, communicating between features (frogs), and selecting the best community of features based on classification accuracy, which finally leads to a set of optimal features for the detection model.

After feature selection comes the classification phase, where the feature data are passed to an LFTS-RNN classifier. A traditional RNN is good at processing sequential information, but it can suffer from vanishing gradients that degrade its performance. To address this, the LogishFTS (LFTS) activation function replaces the standard sigmoid function, which avoids gradient problems and increases model accuracy. It allows the RNN to process large data more efficiently without discontinuities and stabilizes training. The network passes features through the input layer to the hidden layers, and the recurrent connections between layers further improve detection. The number of hidden layers adjusts at each timestamp to ensure dynamic learning and better representation of the data. The final output layer classifies users as legitimate or misbehaving based on the features and interactions learned by the network, effectively identifying threats within the SDN environment. When a user misbehaves, that user's access control is changed from SAC to DAC so that stricter security measures apply.

Decentralized key management system

The Traf Gauss Lyapunov (TGL)-based fuzzy system implements the dynamic access control (DAC) mechanism for misbehaving users. It is part of the Dynamic Key Management System (DKMS) and was chosen for its simplicity, its flexibility, and its ability to give an optimal solution to a complex problem. Traditional fuzzy algorithms are often handicapped by the complexity of tuning the Membership Function (MF), which reduces their efficiency. To address this, the TGL membership function is introduced into the model to improve its performance and to capture the fuzziness and uncertainty inherent in the data more effectively. In the TGL-Fuzzy system, the decision-making rules are expressed as logical IF-THEN conditions. Full access is granted only when the username, password, and access control credential are all authenticated; otherwise, the user obtains limited access.

The TGL membership function is a critical element of fuzzification because it maps crisp data into fuzzy values and makes efficient decision-making possible. Unlike the membership functions of ordinary fuzzy systems, the TGL membership function has nonzero values at all points, which improves its ability to capture and respond to uncertainty in the data. It combines Gaussian functions with trapezoidal parameters to transform crisp data into fuzzy values. The parameters of the TGL function, including the base points of the trapezoid and the Lyapunov candidate function, help model the dynamic behavior of the system. The function lets the fuzzy system represent the degrees of uncertainty and control within the decision-making process more precisely.

The TGL-Fuzzy system consists of three key units: fuzzification, inference, and defuzzification. The fuzzification unit converts the crisp input data into fuzzy data, allowing the system to cope with imprecision. The inference engine applies inference operators to the fuzzified inputs in order to make appropriate decisions about access control. The final unit, defuzzification, transforms the fuzzy results back into crisp data that determine the level of access for the user. By adjusting the DAC mechanism through the TGL-Fuzzy system, the scheme dynamically blocks misbehaving users from logging in, thus enhancing overall network security.
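
The three units can be sketched as follows, under strong assumptions. The exact TGL formula is not given in the text, so the membership function here is a hypothetical stand-in: it is 1 on a trapezoid-like plateau and decays as a Gaussian outside it, which keeps it nonzero everywhere. The Lyapunov component is omitted, and the input scores, parameter values, min-based AND rule, and cutoff are all illustrative.

```python
import math

def gauss_trapezoid(x, b, c, sigma):
    """Hypothetical membership: 1 on the plateau [b, c], Gaussian decay outside."""
    if b <= x <= c:
        return 1.0
    edge = b if x < b else c
    return math.exp(-((x - edge) ** 2) / (2.0 * sigma ** 2))

def access_level(scores, b=0.7, c=1.0, sigma=0.15, cutoff=0.5):
    """IF username AND password AND access control are trusted THEN full access.

    scores: authentication confidences in [0, 1], one per credential (assumed inputs).
    """
    # Fuzzification: map each crisp score to a membership degree.
    memberships = [gauss_trapezoid(s, b, c, sigma) for s in scores]
    # Inference: fuzzy AND implemented as the minimum membership.
    strength = min(memberships)
    # Defuzzification: threshold the rule strength into a crisp decision.
    return "full" if strength >= cutoff else "limited"

decision = access_level([0.9, 0.8, 0.95])
```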

Data security

The proposed system employs the Efficient Implicit Curve Cryptography (EICC) technique, which improves on the encryption of traditional Elliptic Curve Cryptography (ECC). Although ECC is attractive for its small memory requirement, rapid encryption, and high security, it carries high computational complexity because of the negative points in the variables used in conventional ECC. EICC introduces an efficient implicit curve that reduces this computational complexity and so improves system performance. The curve is defined over a set of mathematical variables that make the encryption steps easy to process. The basic idea is to encode information securely without extra computational effort caused by the curve.

Key generation in EICC produces a public key and a corresponding private key. The public key is used to encrypt messages, while the private key is used for decryption. The private key is generated as a random number within a specified range, while the public key is derived from this private key and a point on the implicit curve. In encryption, the original message is represented as a point on the curve, and two ciphertexts are generated to ensure safe communication. For decryption, the system uses the private key and a particular mathematical expression to retrieve the original message. The encrypted data is represented mathematically so that user information and privacy are maintained.
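
The key generation, two-ciphertext encryption, and decryption flow described above has the same shape as textbook elliptic-curve ElGamal, which is sketched here as a stand-in. It does not implement EICC, whose implicit curve is not defined in the text. The tiny curve \(y^2 = x^3 + 2x + 2\) over \(F_{17}\) with base point (5, 1) is a classroom example and is far too small for real use; the modular inverse via `pow(x, -1, p)` needs Python 3.8 or later.

```python
# Toy curve y^2 = x^3 + 2x + 2 over F_17; the base point G has order 19.
P, A = 17, 2
G, N = (5, 1), 19

def add(p1, p2):
    """Add two curve points; None stands for the point at infinity."""
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    (x1, y1), (x2, y2) = p1, p2
    if x1 == x2 and (y1 + y2) % P == 0:
        return None  # p2 is the inverse of p1
    if p1 == p2:
        lam = (3 * x1 * x1 + A) * pow(2 * y1 % P, -1, P) % P
    else:
        lam = (y2 - y1) * pow((x2 - x1) % P, -1, P) % P
    x3 = (lam * lam - x1 - x2) % P
    return (x3, (lam * (x1 - x3) - y1) % P)

def mul(k, pt):
    """Scalar multiplication by double-and-add."""
    result = None
    while k:
        if k & 1:
            result = add(result, pt)
        pt = add(pt, pt)
        k >>= 1
    return result

def neg(pt):
    return None if pt is None else (pt[0], -pt[1] % P)

def keygen(d):
    """Private key d (normally random in [1, N-1]) and public key d*G."""
    return d, mul(d, G)

def encrypt(message_point, public_key, k):
    """Return the two ciphertext points (k*G, M + k*Q) for ephemeral scalar k."""
    return mul(k, G), add(message_point, mul(k, public_key))

def decrypt(c1, c2, private_key):
    """Recover M = C2 - d*C1."""
    return add(c2, neg(mul(private_key, c1)))

d, Q = keygen(7)
M = mul(3, G)                      # message already encoded as a curve point
c1, c2 = encrypt(M, Q, k=4)
recovered = decrypt(c1, c2, d)
```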

Load balancing

To overcome the problems caused by heavy network traffic and load in SDN, the proposed system adopts the PC-JTFOA load balancing algorithm. In SDN, network congestion and latency often make the whole system unreliable. Load balancing distributes network traffic efficiently, which relieves the affected network components and improves overall performance. PC-JTFOA is used to reduce the response time, which is critical to network efficiency. The fitness value is computed by minimizing the response time, and the resulting load-balanced data is represented mathematically to keep data flow efficient and improve network stability.

Intrusion detection system for control layer

The IDS process begins with data acquisition. The input data is obtained from publicly accessible sources and consists of historical records of security incidents and cyber threats on the internet, which makes a good basis for training the IDS model. The collected data is essential for building a network that detects both attacks and non-attacks. It includes different kinds of network behaviors and attack signatures, which allows broad coverage of the security issues relevant to the system. Second, features are extracted to strengthen the IDS model. These essential features include the protocol type, service, flag, host, login details, and source and destination bytes of the collected data. They help recognize the patterns that signal potential attacks or anomalies in the network. The extracted features play an important role in detecting malicious behavior because they point out the aspects of the data that most influence security, so that the IDS focuses on the most relevant attributes.

The final steps are feature selection and classification. PC-JTFOA is used for selecting the optimal features from the extracted data to maximize classification accuracy. The system can then improve its attack detection performance and decrease false positives by focusing on the most relevant features. After feature selection, data is input into the proposed LFTS-RNN classifier, which classifies the data as either attacked or non-attacked. This step enables the IDS to make precise predictions about network security, with timely and accurate detection of intrusions.

Routing

The proposed PC-JTFOA routes non-attacked data optimally so that data transmission is energy-efficient. The main idea of this routing process is to minimize the distance over which data travels in the network, thereby reducing the energy consumed. With PC-JTFOA, the optimal routing path is determined in the SDN network layer so that data is transmitted over the most energy-efficient routes. This approach takes the topology and available resources of the network into account to minimize energy usage while ensuring data integrity and performance.

The fitness function used in PC-JTFOA focuses on distance minimization, which directly correlates with energy savings in the network. The system optimizes the path for data transmission and, hence, reduces the total energy expenditure in routing data. This is crucial in the context of large-scale networks where data routing becomes a significant overhead. After optimizing the routing path, the system routes the non-attacked data efficiently through the network, minimizing energy consumption while maintaining reliability and speed of transmission.
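
A distance-based fitness of this kind can be sketched as the total length of a candidate path. The node coordinates, the candidate paths, and the function names are hypothetical, and the PC-JTFOA search itself is not reproduced here; the sketch only evaluates and compares given candidates.

```python
import math

def path_length(coords, path):
    """Fitness: total Euclidean distance travelled along a path of node ids."""
    return sum(math.dist(coords[a], coords[b]) for a, b in zip(path, path[1:]))

def best_path(coords, candidate_paths):
    """Pick the candidate with the smallest total distance."""
    return min(candidate_paths, key=lambda p: path_length(coords, p))

# Hypothetical node positions: 0 is the source, 1 the destination, 2 a relay.
coords = {0: (0.0, 0.0), 1: (3.0, 4.0), 2: (3.0, 0.0)}
candidates = [[0, 2, 1], [0, 1]]
chosen = best_path(coords, candidates)   # direct path has length 5, detour has 7
```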

Intrusion detection system for network layer

An overview of deep reinforcement learning-based intrusion detection scheme.

Figure 1 shows a general overview of the DRL-IDS, which integrates a DRL agent for detecting and mitigating network intrusions within SDN environments. The scheme adopts a centralized control model, using DRL to analyze network traffic, dynamically adapt to emerging threats, and optimize detection strategies over time. The DRL agent continuously learns from network data, improving its ability to identify known and novel intrusions by adjusting its decision-making policy through a feedback loop. The primary aim of the system is to reduce false positives and negatives, ensure real-time anomaly detection, and provide an effective and scalable solution for securing dynamic SDN environments. The routed data is input into the Intrusion Detection System (IDS), as in the control layer, to identify data attacks in the network layer of the SDN. The IDS for the network layer follows a structured approach, starting with data acquisition, where data related to network activities is collected. This information primarily includes previous records of threats, network traffic patterns, and attack vectors. Next, feature extraction captures key attributes such as protocol type, packet size, and source and destination IP addresses, which help distinguish normal network behavior from malicious behavior.

PC-JTFOA is then applied for feature selection to identify the features most relevant to detecting attacks at the network layer. The selected features are passed to a classifier such as the LFTS-RNN, which classifies the data as attacked or non-attacked. The proposed system applies the same IDS procedure across the application, control, and network layers to ensure comprehensive security. By detecting attacks across multiple layers of the SDN architecture, the system strengthens overall network security and mitigates probable threats in a timely fashion.

Theoretical foundations of DRL-IDS

The DRL-IDS system can adjust quickly to new or changing threats because reinforcement learning lets it revise its policy after each decision.

By capturing persistent temporal patterns in SDN data with the LogishFTS activation, LFTS-RNN improves the identification of both intermittent and consecutive intrusions. By improving feature selection and route configuration, PC-JTFOA lowers computational cost and response time.

These components work together as a closed loop in which the DRL agent receives a reliable temporal representation built from optimized parameters. Across SDN networks, this combination improves scalability, accuracy, and flexibility.
